Do We Need More Training Data or More Complex Models?

Do we need more training data? Which models will suffer from performance saturation as data grows large? Do we need larger models or more complicated models, and what is the difference?

Recently, I came across "Do We Need More Training Data?" by Xiangxin Zhu et al. The paper, a journal version of a conference paper originally published in 2012, evaluates the performance of classic mixture models on object recognition tasks as the amount of training data is varied. Given the subsequent transformation of the field, in which convolutional neural networks trained on massive datasets have outperformed all other systems on object recognition tasks, a reading of this paper seems especially relevant.

The authors consider the task of object recognition. They question "whether existing detectors will continue to improve as data grows, or saturate in performance due to limited model complexity". To this end, they vary both the number of training examples and the number of mixtures, and measure the performance of the resulting mixture models on several object detection tasks. As might be expected, they find that the optimal model complexity is greatest when the number of training images is greatest. The paper elaborates on their algorithm and contains a variety of experiments, but given the subsequent triumph of other methods, the relevant idea for this post is the fundamental question: do we need more data? We can go further and ask, what methods benefit from more data? Given more data, what methods are best? How are these questions answered differently when computational power is constrained rather than boundless?

What Makes a Classifier Complex?

Zhu et al. consider recent improvements on benchmarks in object recognition and whether they ought to be attributed to improved algorithms or to larger available training datasets. They question whether increasing amounts of data will improve performance absent "more complex models", citing a paper by Halevy, Norvig and Pereira titled "The Unreasonable Effectiveness of Data". In the Halevy paper, the authors argue that linear classifiers trained on millions of specific features outperform more elaborate models that try to learn general rules but are trained on less data.

One should note that two different notions of complexity are at play here. One straightforward notion is the number of free parameters in the model. The other, which Halevy et al. term "elaborateness", refers to how complicated a model is in a more intuitive sense. When the Halevy paper uses the word "complex" (only four times), it is in precisely the same way as the word "elaborate". In the Halevy paper, an algorithm that is not elaborate is described as "simple". While elaborateness is not formally defined, it refers neither to the size of the model nor to the dimensionality of the feature space. They clearly state that linear classifiers (however high-dimensional) are not elaborate. Presumably, other straightforward algorithms like nearest neighbor could be considered simple.

In this sense, logistic regression over n-gram features is considered equally elaborate whether it uses bigrams or trigrams, while a system that tries to explicitly model syntactic or semantic relationships between words and phrases is decidedly elaborate. In contrast, the Zhu paper appears to use the word "complex" ambiguously, at times referring to a quantifiable "complexity" corresponding to the number of mixtures, and at other times referring to Halevy's complexity, which is agnostic to the number of features.

For the rest of this post I'll use "simple" and "elaborate" in the sense used by Halevy et al., and "small" and "large" to describe the size or dimensionality of the model. In the case of a linear separator, size corresponds to the number of features; for nearest neighbors, it corresponds to the number of training examples. To avoid confusion, I'll try to avoid the word "complex" altogether when possible.

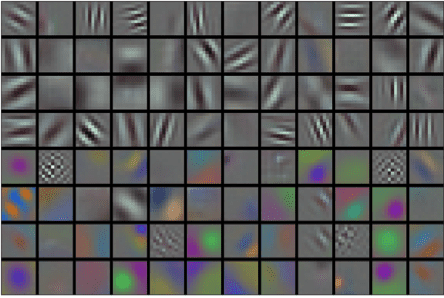

It's unclear from a reading of the Zhu and Halevy papers how precisely their authors might characterize deep neural networks. Such networks are clearly high-dimensional, but, depending upon the architecture, needn't make very specific domain assumptions. Certainly, compared to more traditional computer vision methods that rely on hand-engineered features, they might be considered conceptually simpler. On the other hand, the models are clearly harder to describe than a simple linear classifier, and the optimization problems they present are undoubtedly complicated. The pretraining strategies used to initialize the networks might also be described as elaborate.

The importance of the definitional question regarding simplicity cannot be overstated. Halevy describes "simple" systems which achieve results via memorization. For example, speech translation systems trained on corpora containing trillions of words memorize exact word sequences rather than modeling general associations. Such models are exponentially large in n (as in "n"-gram): the number of possible n-grams is v^n, where v is the size of the vocabulary (likely over a million words!). When we ask whether we need more data or different models, it matters whether "different" means larger parameter vectors or fundamentally more complicated ideas. These are distinct questions.
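To make the v^n growth concrete, here is a minimal sketch of the arithmetic. The function name `ngram_table_size` is hypothetical, and the vocabulary size of one million is an assumption taken from the rough figure quoted above; the result is an upper bound on the number of distinct n-grams, not the number actually observed in any corpus.

```python
def ngram_table_size(vocab_size: int, n: int) -> int:
    """Upper bound on the number of distinct n-grams over a vocabulary: v^n."""
    return vocab_size ** n


# Assumed vocabulary size, matching the "over a million words" estimate.
v = 1_000_000

for n in (1, 2, 3):
    # Each extra word of context multiplies the table size by v.
    print(f"n={n}: up to {ngram_table_size(v, n):,} possible n-grams")
```

Even for trigrams the bound far exceeds the trillions of words in the corpora mentioned above, so a memorizing model can only ever populate a small fraction of the table.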

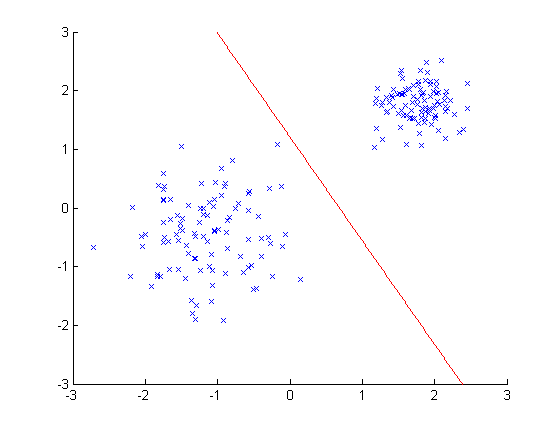

Given this discussion, it's worth rehashing the intuition that as data sets grow large, nearest neighbor is the ideal algorithm for many tasks. Under modest smoothness assumptions, and given boundless training data and unlimited computational power, nearest neighbor classifiers should perform arbitrarily well. But despite its conceptual simplicity, the algorithm produces gigantic models. Specifically, a nearest neighbor classifier divides the feature space into as many regions as there are training examples. In fact, the model is by definition as large as the training set itself. This is in contrast to linear classifiers, which only divide the feature space into two half-spaces and have as many parameters as features (independent of the number of examples).
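The contrast in model size can be sketched in a few lines of Python. This is an illustrative toy, not code from either paper: the class `NearestNeighbor` and the function `linear_size` are hypothetical names, size is counted as the number of stored floating-point values, and the linear model's bias term is ignored to match the "as many parameters as features" count above.

```python
import random


class NearestNeighbor:
    """Toy 1-nearest-neighbor classifier that simply stores its training set."""

    def fit(self, X, y):
        self.X = [list(x) for x in X]
        self.y = list(y)
        return self

    def predict_one(self, x):
        # Squared Euclidean distance to every stored example.
        dists = [sum((a - b) ** 2 for a, b in zip(x, xi)) for xi in self.X]
        return self.y[dists.index(min(dists))]

    def size(self):
        # The "model" is the training set: n_examples * n_features values.
        return sum(len(x) for x in self.X)


def linear_size(n_features):
    """A linear separator keeps one weight per feature (bias omitted)."""
    return n_features


rng = random.Random(0)
d = 10  # assumed feature dimensionality, chosen only for illustration
for n in (100, 1_000, 10_000):
    X = [[rng.random() for _ in range(d)] for _ in range(n)]
    y = [rng.randint(0, 1) for _ in range(n)]
    nn = NearestNeighbor().fit(X, y)
    print(f"n={n:6d}  nearest neighbor: {nn.size():7d} values  linear: {linear_size(d)} values")
```

The nearest neighbor model grows in step with the data, while the linear model stays at `d` values however many examples it sees.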

The State of the Art

Halevy's article addresses a comparatively simpler domain than object detection. It is feasible to memorize a high proportion of the word sequences (for modest n) likely to be encountered. Two images, however, are unlikely ever to contain an identical set of pixels. Still, deep learning has made it clear that additional data can be put to use, albeit with the help of larger models. Precisely what manner of model "complexity" is required to utilize data effectively (high-dimensional or theoretically complicated) seems highly dependent on the specific application or task and on just how large the data in question is. It's obvious that models must have many degrees of freedom in order to express complicated hypotheses. Additionally, it seems clear that a model must contain nonlinearities in order to closely model a nonlinear world. On the other hand, given limitless data and computation, even vision tasks could presumably be solved with nearest neighbor approaches.

Turning again to the current state of the art in object detection: precisely how the marginal benefit to performance diminishes as a function of increased training data in today's deep learning systems is an open question. The research community would benefit from a thorough empirical characterization. Given sufficient data to saturate performance, and insufficient data to render memorization viable, what next steps would improve these models? If given a dataset 100x the size of ImageNet and computers 100x faster, should models contain more convolutional layers? More fully connected layers? More filter maps per layer? Or do we just need more data?
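The kind of empirical characterization suggested above amounts to measuring a learning curve: held-out accuracy as a function of training set size. The sketch below shows only the shape of such an experiment, under loud assumptions: the data are synthetic two-dimensional Gaussian blobs, the model is a toy 1-nearest-neighbor classifier rather than a deep network, and the names `make_data`, `predict_1nn` and `learning_curve` are hypothetical.

```python
import random


def make_data(n, rng):
    """Two noisy 2-D Gaussian blobs (synthetic, for illustration only)."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        center = 1.0 if label else -1.0
        data.append(((rng.gauss(center, 1.0), rng.gauss(center, 1.0)), label))
    return data


def predict_1nn(train, x):
    """Return the label of the training example closest to x."""
    nearest = min(
        train,
        key=lambda ex: (ex[0][0] - x[0]) ** 2 + (ex[0][1] - x[1]) ** 2,
    )
    return nearest[1]


def learning_curve(sizes, n_test=200, seed=0):
    """Held-out accuracy of 1-NN for each training set size in `sizes`."""
    rng = random.Random(seed)
    test = make_data(n_test, rng)
    pool = make_data(max(sizes), rng)
    curve = []
    for n in sizes:
        train = pool[:n]  # nested subsets, so larger sets extend smaller ones
        correct = sum(predict_1nn(train, x) == y for x, y in test)
        curve.append((n, correct / n_test))
    return curve


for n, acc in learning_curve([10, 100, 1000]):
    print(f"{n:5d} training examples -> test accuracy {acc:.3f}")
```

Running the same loop over both training set size and a model-size knob (layers, filters, mixtures) would give the two-dimensional picture that the questions above are really asking for.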

Zachary Chase Lipton is a PhD student in the Computer Science Engineering department at the University of California, San Diego. Funded by the Division of Biomedical Informatics, he is interested in both theoretical foundations and applications of machine learning. In addition to his work at UCSD, he has interned at Microsoft Research Labs.

Related:

- (Deep Learning’s Deep Flaws)’s Deep Flaws

- Differential Privacy: How to make Privacy and Data Mining Compatible

- Geoff Hinton AMA: Neural Networks, the Brain, and Machine Learning