3 Approaches to Data Imputation

Learn about data imputation and 3 ways in which to implement it using Python.

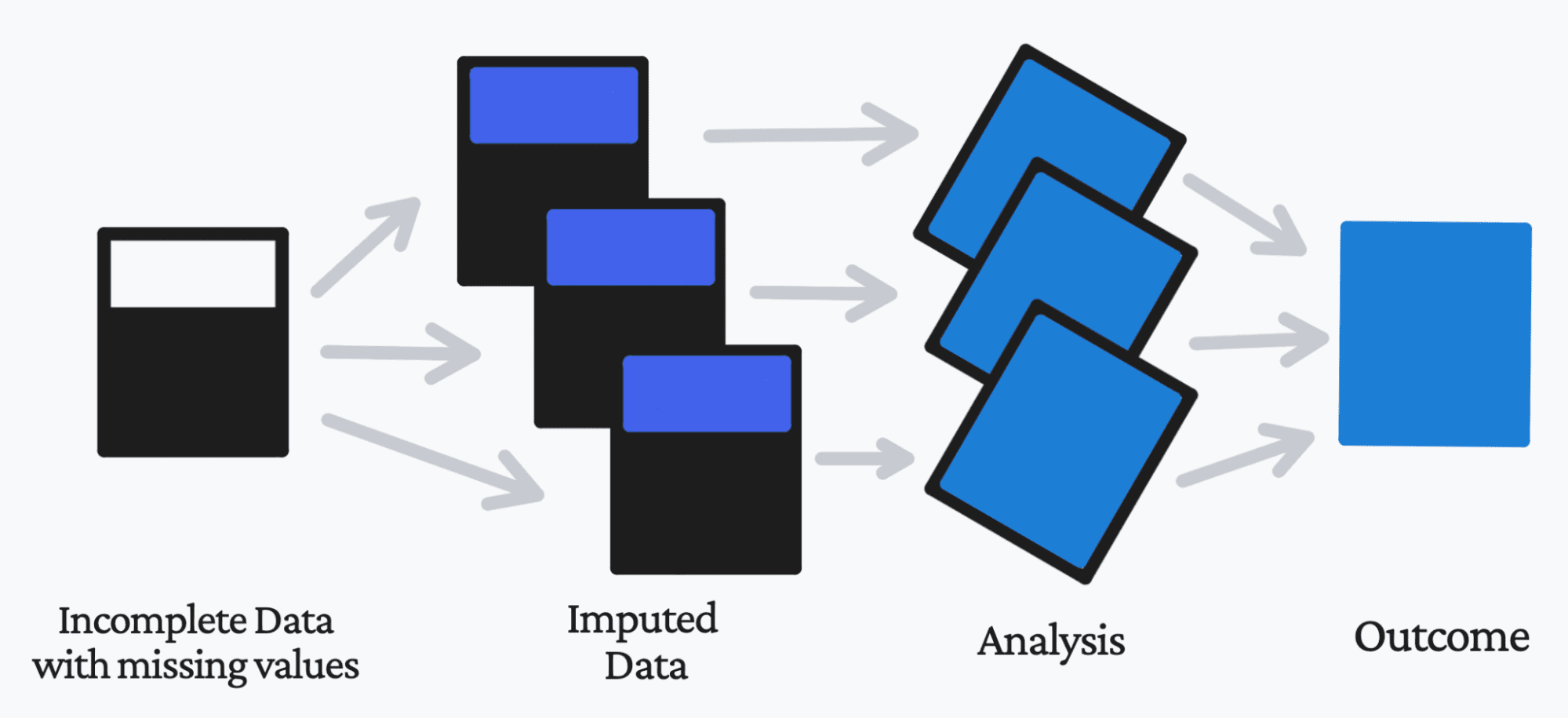

Image by Author

When working with data, it would be amazing if your data was without missing values.

Unfortunately, we don’t live in a perfect world, especially when it comes to data. Therefore, you will need to find a solution for these missing data points.

This is where data imputation comes into the picture.

What is Data Imputation?

Data imputation utilizes various statistical methods in the process of replacing your missing data values with a substitute value. This allows you, the business, and the customer to be able to retain the most out of the data and provide insightful knowledge.

If you substitute a data point, it is called ‘unit imputation’. If you substitute a component of a data point, it is called ‘item imputation’.

So why do we impute the data, rather than just remove it?

While it would be the easier option to remove the data points that have missing values, this is not always the best option. Not only will you reduce the size of your dataset by removing the missing values, but this can lead to further issues of incorrect and untrustworthy analysis.

Why is Data Imputation Important?

Missing data does not give us a great scope of the dataset and can reduce the reliability of the overall outcome. Missing data can:

1. Conflicting when using Python Libraries.

There are specific libraries that struggle and are naturally incompatible to handle missing data. This can cause delays in your workflow/process as well as lead to further errors.

2. Issues with the dataset

If you remove missing values, it will cause a decrease in the size of your dataset. However, keeping missing values in your dataset can have a major effect on the variables, the correlation, the statistical analysis, and the overall outcome.

3. Final outcome

Your priority when working with data and models is to ensure that the final model is free from errors, efficient, and trustworthy to be used in real-world cases. Missing data will naturally lead to bias in the dataset, leading to incorrect analysis.

So how can we substitute these missing values? Let’s find out.

Approaches to Data Imputation

1. SimpleImputer using mean

class sklearn.impute.SimpleImputer(*, missing_values=nan, strategy='mean', fill_value=None, verbose='deprecated', copy=True, add_indicator=False)

Using Scikit-learn’s SimpleImputer class, you can impute missing values using a constant value, or using the mean, median or most frequent of each column in which the missing values are present.

For example:

# Imports

import numpy as np

from sklearn.impute import SimpleImputer

# Using the mean value of the columns

imp = SimpleImputer(missing_values=np.nan, strategy='mean')

imp.fit([[1, 3], [5, 6], [8, 4], [11,2], [9, 3]])

# Replace missing values, encoded as np.nan

X = [[np.nan, 3], [5, np.nan], [8, 4], [11,2], [np.nan, 3]]

print(imp.transform(X))

Output:

[[ 6.8 3. ]

[ 5. 3.6]

[ 8. 4. ]

[11. 2. ]

[ 6.8 3. ]]

2. SimpleImputer using the most frequent

Using the same class as above, I mentioned that you could use mean, median, or most frequent. Most frequent works with categorical features, that are either string or numerical. It replaces the missing value with the most frequent value within that column.

This is how you can do this using the same example as above:

# Imports

import numpy as np

from sklearn.impute import SimpleImputer

# Using the most frequent value of the columns

imp = SimpleImputer(missing_values=np.nan, strategy='most_frequent')

imp.fit([[1, 3], [5, 6], [8, 4], [11,2], [9, 3]])

# Replace missing values, encoded as np.nan

X = [[np.nan, 3], [5, np.nan], [8, 4], [11,2], [np.nan, 3]]

print(imp.transform(X))

Output:

[[ 1. 3.]

[ 5. 3.]

[ 8. 4.]

[11. 2.]

[ 1. 3.]]

3. Using k-NN

If you don’t know what k-NN is, it is an algorithm that makes predictions on the test data set by calculating the distance between the current training data points. It assumes that similar things exist within close proximity.

A simple and easy way to use k-NN for imputation is by using the Impyute library. For example:

Before imputation:

n = 4

arr = np.random.uniform(high=5, size=(n, n))

for _ in range(3):

arr[np.random.randint(n), np.random.randint(n)] = np.nan

print(arr)

Output:

[[ nan 3.14058295 2.2712381 0.92148091]

[ nan 3.24750479 1.35688761 2.54943751]

[4.47019496 0.79944618 3.61855558 3.12191146]

[3.09645292 nan 0.43638625 4.05435414]]

After imputation:

import impyute as impy

print(impy.mean(arr))

Output:

[[3.78332394 3.14058295 2.2712381 0.92148091]

[3.78332394 3.24750479 1.35688761 2.54943751]

[4.47019496 0.79944618 3.61855558 3.12191146]

[3.09645292 2.39584464 0.43638625 4.05435414]]

Wrapping Up

At this point you have learned what Data Imputation is, its importance and 3 different ways to approach it. If you are still interested in learning more about missing values and statistical analysis, I highly recommend reading Statistical Analysis with Missing Data by Roderick J.A. Little and Donald B. Rubin.

Nisha Arya is a Data Scientist and Freelance Technical Writer. She is particularly interested in providing Data Science career advice or tutorials and theory based knowledge around Data Science. She also wishes to explore the different ways Artificial Intelligence is/can benefit the longevity of human life. A keen learner, seeking to broaden her tech knowledge and writing skills, whilst helping guide others.