Containers: The Enabler of YARN

This article discusses the evolution of the data-center operating system, the challenges it faces, and the approaches being taken to address them. Containers play a central role in enabling the required abstraction, and they deliver additional benefits.

By Dinesh Subhraveti, July 28, 2014.

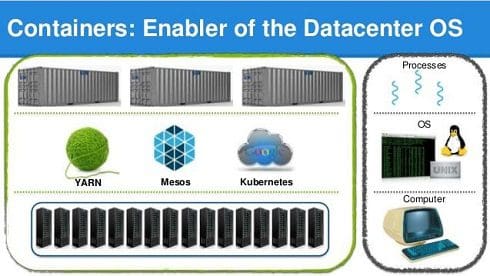

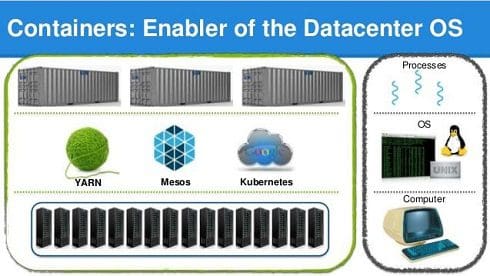

In the emerging model of the data center as a computer, several projects, including YARN, Mesos and, more recently, Kubernetes, are building an operating system for this “new computer.” These new operating systems, in turn, need the equivalent of the early multi-user time-sharing systems to support a multi-tenant environment of diverse application and user ecosystems over the distributed resources of a data center.

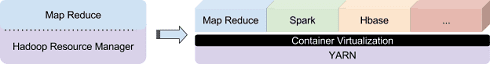

Hadoop itself is evolving from its MapReduce roots into a data-center operating system for running and managing scalable, data-centric applications. A variety of applications, such as Spark and HBase, have already been implemented on Hadoop YARN, and initiatives such as Slider, Twill and the Spring YARN framework are explicitly chartered to accelerate this evolution.

Transitioning from a resource manager dedicated to a single application to a shared platform that can securely host a variety of independent applications requires a built-in mechanism that isolates the tasks of individual applications from one another and from the host. Indeed, isolation among applications is one of the immediate challenges that must be overcome for this transition to succeed.

YARN's current approach to resolving conflicts among applications is incomplete. YARN can use “control groups” (cgroups) to provide a rudimentary level of performance isolation. However, it has no mechanism for isolating run-time environments, software dependencies or security contexts. An ideal solution would provide strong isolation that extends beyond compute and memory to every potential source of interference between applications, such as system configuration, the software environment and the file system. It should also integrate seamlessly with YARN, without requiring a disruptive new model or imposing unacceptable overhead.

Containers meet these requirements and offer a number of additional advantages through a single, simple and elegant mechanism. The rest of this article introduces containers and the advantages they deliver in the context of YARN.

Containers

Containers are essentially isolated abstractions of the underlying operating system, its resources and their names. The key word is abstraction. Traditional virtual machines add a layer of guest operating system beneath the application. Containers, by contrast, are a native operating system abstraction that needs no substantial additional state, which makes them extremely lightweight and scalable. Because they look and feel exactly like private instances of the operating system, they are completely transparent to applications, which need to adopt no new interfaces. Containers are particularly well suited to Hadoop because they provide strong isolation with almost imperceptible runtime overhead and startup latency.
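The following toy model in Python makes the idea of abstracting names concrete. It mimics what a PID namespace does: each container numbers its own processes from 1, while the host keeps the real, globally unique PIDs. It is a hypothetical illustration only, and the class and method names are invented. In Linux, the kernel itself creates such namespaces, for example when a process is cloned with the CLONE_NEWPID flag.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class PidNamespace:
    """Toy model of a PID namespace: processes inside it are numbered from 1,
    while the host keeps their real, globally unique PIDs."""
    name: str
    to_host: Dict[int, int] = field(default_factory=dict)  # container PID -> host PID
    next_pid: int = 1

    def spawn(self, real_pid: int) -> int:
        """Register a process the host created as real_pid; return its PID inside the namespace."""
        local_pid = self.next_pid
        self.to_host[local_pid] = real_pid
        self.next_pid += 1
        return local_pid

    def host_pid(self, local_pid: int) -> int:
        """Translate a container PID to the host PID; processes of other containers are invisible."""
        if local_pid not in self.to_host:
            raise ProcessLookupError(f"no PID {local_pid} in namespace {self.name}")
        return self.to_host[local_pid]


a, b = PidNamespace("tenant-a"), PidNamespace("tenant-b")
print(a.spawn(4012), b.spawn(4013))  # 1 1: both containers see their first process as PID 1
print(a.host_pid(1), b.host_pid(1))  # 4012 4013: the host still tells them apart
```

The application inside each container sees an ordinary, private process table. That is the transparency the paragraph above describes.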

Docker builds on containers by providing a repository of container images that represent self-contained application packages. These images can be readily instantiated as application containers on any Docker-enabled host, regardless of what other software is installed on it or how it is configured.

Containers for YARN

The powerful combination of Docker containers and YARN delivers many benefits.

Security. YARN is typically deployed as a multi-tenant environment in large organizations, with multiple groups sharing a common, IT-managed cluster, so tasks from different tenants may be scheduled on the same host. Containers securely isolate those tasks by limiting the privilege scope of each task to the container in which it runs. Root in the container is distinct from root on the host. Root in a container can still perform privileged operations, but they affect only the container's counterparts of host resources, not the host itself. The Linux capabilities held by a task, the devices it can access and similar privileges are configured for each container.
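In Linux, the separation between root in a container and root on the host comes from user namespaces. A user namespace maps a range of user IDs inside the container to a different range on the host. The sketch below is a minimal illustration, not code from YARN or Docker. It parses mappings in the three-field format the kernel uses for /proc/<pid>/uid_map (ID inside the namespace, ID outside, range length) and translates a container UID to the corresponding host UID. The mapping of container UIDs 0 through 65535 to host UIDs starting at 100000 is an assumed example.

```python
from typing import List, Tuple

Mapping = Tuple[int, int, int]  # (first ID inside, first ID outside, length of range)


def parse_uid_map(text: str) -> List[Mapping]:
    """Parse uid_map-style lines of the form 'inside outside length'."""
    entries = []
    for line in text.splitlines():
        if line.strip():
            inside, outside, length = (int(f) for f in line.split())
            entries.append((inside, outside, length))
    return entries


def to_host_uid(container_uid: int, entries: List[Mapping]) -> int:
    """Return the host UID that a UID inside the container maps to."""
    for inside, outside, length in entries:
        if inside <= container_uid < inside + length:
            return outside + (container_uid - inside)
    raise LookupError(f"UID {container_uid} is not mapped to any host UID")


# Assumed example: container UIDs 0..65535 map to host UIDs 100000..165535.
uid_map = parse_uid_map("0 100000 65536\n")
print(to_host_uid(0, uid_map))     # 100000: container root is an ordinary UID on the host
print(to_host_uid(1000, uid_map))  # 101000
```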

When combined with software-defined networking (SDN), containers can also isolate the network traffic of different tenants' applications. The tasks of one tenant then cannot snoop on another tenant's traffic, either maliciously or unintentionally.
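One common way to realize this kind of isolation is to place each tenant on its own virtual network and forward traffic only within that network. A VXLAN segment identified by a network ID is one example. The toy controller below illustrates that policy under stated assumptions. The class names, the tenant labels and the starting ID of 5000 are all hypothetical. A real SDN deployment enforces the rule in virtual switches rather than in a Python check.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Endpoint:
    container_id: str
    tenant: str


class TenantOverlay:
    """Toy SDN policy: one virtual network per tenant; forward only within it."""

    def __init__(self, first_network_id: int = 5000):  # arbitrary illustrative starting ID
        self.network_of: Dict[str, int] = {}
        self.next_id = first_network_id

    def network_id(self, tenant: str) -> int:
        """Allocate (once) and return the virtual network ID for a tenant."""
        if tenant not in self.network_of:
            self.network_of[tenant] = self.next_id
            self.next_id += 1
        return self.network_of[tenant]

    def may_forward(self, src: Endpoint, dst: Endpoint) -> bool:
        """Traffic is delivered only when both endpoints are on the same virtual network."""
        return self.network_id(src.tenant) == self.network_id(dst.tenant)


net = TenantOverlay()
a1, a2 = Endpoint("c-101", "tenant-a"), Endpoint("c-102", "tenant-a")
b1 = Endpoint("c-201", "tenant-b")
print(net.may_forward(a1, a2))  # True: same tenant
print(net.may_forward(a1, b1))  # False: tenant-b cannot see tenant-a's traffic
```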

Performance isolation. Containers account for the resources used by the processes running within them and enforce limits on those resources, which prevents applications from stepping on each other. For fine-grained control, the resource manager can tune limits on CPU, memory and I/O bandwidth on the fly.
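The function below is a minimal sketch of how a resource manager's decisions could become container limits. It translates a resource grant into cgroup v1 control-file values: cpu.shares, memory.limit_in_bytes and blkio.throttle.read_bps_device. Two details are assumptions: the weight of 1024 shares per virtual core, and the block device number 8:0. Each limit is simply a value in a control file. Writing a new value later retunes the limit of a running container, although the kernel may reject some values, such as a memory limit below current usage.

```python
from pathlib import Path
from typing import Dict, Optional

# Assumption: one virtual core corresponds to the default cgroup v1 CPU weight of 1024 shares.
CPU_SHARES_PER_VCORE = 1024


def cgroup_v1_settings(vcores: int, memory_mb: int,
                       read_bps: Optional[int] = None,
                       device: str = "8:0") -> Dict[str, str]:
    """Translate a container's resource grant into cgroup v1 control-file values."""
    settings = {
        # Relative CPU weight: under contention, CPU time is split in proportion to shares.
        "cpu.shares": str(CPU_SHARES_PER_VCORE * vcores),
        # Hard memory cap in bytes.
        "memory.limit_in_bytes": str(memory_mb * 1024 * 1024),
    }
    if read_bps is not None:
        # Read-bandwidth cap for one block device, written as "major:minor bytes_per_second".
        settings["blkio.throttle.read_bps_device"] = f"{device} {read_bps}"
    return settings


def apply_settings(cgroup_root: Path, group: str, settings: Dict[str, str]) -> None:
    """Write each value into its controller's hierarchy, e.g. <cgroup_root>/cpu/<group>/cpu.shares.
    Calling this again with new values retunes the limits of a running container."""
    for name, value in settings.items():
        controller = name.split(".", 1)[0]  # "cpu", "memory" or "blkio"
        group_dir = cgroup_root / controller / group
        group_dir.mkdir(parents=True, exist_ok=True)  # on cgroupfs, mkdir creates the group
        (group_dir / name).write_text(value)
```

For example, `cgroup_v1_settings(2, 4096, read_bps=10 * 1024 * 1024)` yields 2048 CPU shares, a 4 GiB memory cap and a 10 MiB/s read cap. Calling `apply_settings` against a real `/sys/fs/cgroup` requires root and a cgroup v1 layout.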

Higher utilization by co-scheduling CPU- and I/O-bound jobs. In a multi-tenant environment, applications have varying resource needs: some tasks are compute-intensive, while others are I/O-bound. When a node runs only the tasks of an I/O-bound job, its compute resources go unused, and vice versa. Co-locating the tasks of different tenants on a shared machine is a security risk, so these idle resources are not allocated to other tenants, even when those tenants could use them. Containers prevent such underutilization by securely isolating tasks from one another, so tasks from different tenants can be safely co-scheduled on the same host.
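A small bin-packing experiment shows why co-scheduling matters. The sketch below places tasks with first-fit on nodes that have two capacities, CPU and I/O, each normalized to 1.0, and opens a new node whenever no existing node has room. With `allow_sharing=False`, it models the policy described above, where a node hosts the tasks of only one tenant. With `allow_sharing=True`, tasks of different tenants may share a node, as containers make safe. The task sizes and tenant names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Task:
    tenant: str
    cpu: float  # share of a node's CPU the task keeps busy
    io: float   # share of a node's I/O bandwidth it consumes


@dataclass
class Node:
    cpu_free: float = 1.0
    io_free: float = 1.0
    tenants: Set[str] = field(default_factory=set)

    def fits(self, task: Task, allow_sharing: bool) -> bool:
        # Without secure isolation, a node may only host tasks of a single tenant.
        if not allow_sharing and self.tenants and task.tenant not in self.tenants:
            return False
        return task.cpu <= self.cpu_free and task.io <= self.io_free

    def place(self, task: Task) -> None:
        self.cpu_free -= task.cpu
        self.io_free -= task.io
        self.tenants.add(task.tenant)


def nodes_needed(tasks: List[Task], allow_sharing: bool) -> int:
    """First-fit placement; returns how many nodes the tasks occupy."""
    nodes: List[Node] = []
    for task in tasks:
        node = next((n for n in nodes if n.fits(task, allow_sharing)), None)
        if node is None:
            node = Node()
            nodes.append(node)
        node.place(task)
    return len(nodes)


# Tenant "x" runs CPU-bound tasks; tenant "y" runs I/O-bound tasks.
tasks = [Task("x", 0.8, 0.1), Task("y", 0.1, 0.8),
         Task("x", 0.8, 0.1), Task("y", 0.1, 0.8)]
print(nodes_needed(tasks, allow_sharing=False))  # 4
print(nodes_needed(tasks, allow_sharing=True))   # 2
```

Pairing one CPU-bound and one I/O-bound task per node halves the node count in this example. Real schedulers weigh many more factors.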

Consistency. Distributed YARN applications consist of tasks that run on different cluster nodes and expect an identical environment on each node; any discrepancy may cause the application to misbehave. Containers ensure that all the tasks of an application run in the consistent software environment defined by the container and its image, regardless of the state of the host. For example, an application can run in an Ubuntu environment and use Ubuntu-specific software while the host itself runs RHEL.
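One way to enforce this in practice is to have every task of an application reference the same image by its content digest (`name@sha256:<hex>`) rather than by a mutable tag such as `:latest`. A digest identifies the exact image bits. The check below is a hypothetical helper that sketches this rule. Its regular expression is a simplified approximation of Docker's image-reference grammar, not the full specification.

```python
import re
from typing import List

# Simplified pattern for a digest-pinned reference: repository[:tag]@sha256:<64 hex chars>.
DIGEST_REF = re.compile(r"^[a-z0-9._/:-]+@sha256:[0-9a-f]{64}$")


def consistent_environment(task_images: List[str]) -> bool:
    """True only if every task uses one identical, digest-pinned image reference."""
    if not task_images or not DIGEST_REF.match(task_images[0]):
        return False
    return all(ref == task_images[0] for ref in task_images)


pinned = "registry.example.com/analytics@sha256:" + "ab" * 32
print(consistent_environment([pinned, pinned, pinned]))                           # True
print(consistent_environment(["registry.example.com/analytics:latest"] * 3))    # False: tag may move
```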

Isolation of software dependencies and configuration. YARN is designed to be modular, with well-defined interfaces between applications and its core. This allows applications to be built as independent binaries, which often rely on third-party software. For example, an application that predicts consumer spending using linear regression might depend on MATLAB. Since the tasks of an application could be scheduled on any host in the cluster, such dependencies would have to be installed on every cluster node. Many applications sharing the same YARN cluster can quickly clutter the nodes with their respective dependencies, so installing every dependency on every host does not scale. In some cases, the dependencies, or the versions they require, may even conflict with one another.

With applications encapsulated in Docker containers, software dependencies and the system configuration they require can be specified independently of the host and of other applications running on the cluster.
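The conflict problem can be stated precisely. On a shared host, every application's dependencies land in one installation, so any package required in two different versions is a conflict. Inside per-application images, each dependency set only has to agree with itself. The sketch below checks both situations. The applications, packages and versions are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical applications and the package versions each one requires.
APPS: Dict[str, Dict[str, str]] = {
    "spend-forecast": {"libstats": "2.1", "jre": "7"},
    "log-indexer": {"libstats": "3.0", "jre": "7"},
    "etl": {"jre": "8"},
}


def conflicts(apps: Dict[str, Dict[str, str]]) -> Dict[str, List[str]]:
    """Packages needed in more than one version when all apps share one installation."""
    versions = defaultdict(set)
    for deps in apps.values():
        for package, version in deps.items():
            versions[package].add(version)
    return {pkg: sorted(v) for pkg, v in versions.items() if len(v) > 1}


# Everything installed on every node: libstats and jre both clash.
print(conflicts(APPS))
# One image per application: each image holds a single app's dependencies, so nothing clashes.
print({app: conflicts({app: deps}) for app, deps in APPS.items()})
```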

Reproducible and programmable mechanism to define application environments. Docker lets the consistent environment a YARN application requires be built programmatically from a Dockerfile. The build can run offline, and the resulting images are stored in a central repository of container images. At deployment time, the image bits are quickly streamed into the cluster without incurring the overhead of runtime configuration.
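Reproducibility comes from the build being a deterministic function of its inputs. The following simplified model derives each layer's identifier by hashing its parent's identifier together with the build step. It is not Docker's actual ID or digest algorithm. Under this model, identical steps on an identical base yield identical layer IDs that can be cached and shared. Changing one step changes only that layer and the layers built on top of it. The base image name and build steps are illustrative.

```python
import hashlib
from typing import List


def layer_ids(base_image: str, steps: List[str]) -> List[str]:
    """Chain-hash each build step onto its parent to get one ID per layer."""
    ids = []
    parent = hashlib.sha256(base_image.encode("utf-8")).hexdigest()
    for step in steps:
        parent = hashlib.sha256(f"{parent}\n{step}".encode("utf-8")).hexdigest()
        ids.append(parent)
    return ids


steps = [
    "RUN apt-get update && apt-get install -y openjdk-7-jre-headless",
    "COPY app.jar /opt/app/app.jar",
]
first = layer_ids("ubuntu:14.04", steps)
again = layer_ids("ubuntu:14.04", steps)
edited = layer_ids("ubuntu:14.04", steps[:1] + ["COPY app-v2.jar /opt/app/app.jar"])
print(first == again)                                # True: same inputs, same layers
print(first[0] == edited[0], first[1] == edited[1])  # True False: only the changed layer differs
```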

Quick provisioning. The central repository of container images decouples software state and configuration from the hardware. A relatively stateless base platform can therefore be rapidly provisioned for a YARN application by automatically pulling the right container image on demand. When the job finishes, its containers are simply removed, returning the cluster to its pristine state.
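The provisioning cycle can be modeled in a few lines. In the toy node agent below, a dictionary stands in for the central image repository. An image is pulled the first time a container needs it and cached for later launches, and finishing a job discards its containers. The class, its methods and the image name are hypothetical. A real node agent would also have to store images on disk and eventually evict unused ones.

```python
from typing import Dict, List


class NodeAgent:
    """Toy node that provisions containers from a central image repository."""

    def __init__(self, registry: Dict[str, bytes]):
        self.registry = registry                 # stand-in for the central repository
        self.image_cache: Dict[str, bytes] = {}  # images already pulled to this node
        self.containers: Dict[str, str] = {}     # running container ID -> image name
        self.pulls = 0

    def launch(self, container_id: str, image: str) -> None:
        if image not in self.image_cache:
            self.image_cache[image] = self.registry[image]  # pull on demand, once per node
            self.pulls += 1
        self.containers[container_id] = image

    def finish_job(self, container_ids: List[str]) -> None:
        for cid in container_ids:
            self.containers.pop(cid, None)  # discard the container and its state


node = NodeAgent({"analytics:1.0": b"<image bits>"})
node.launch("c1", "analytics:1.0")
node.launch("c2", "analytics:1.0")
print(node.pulls)       # 1: the second launch reuses the cached image
node.finish_job(["c1", "c2"])
print(node.containers)  # {}
```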

Realizing these benefits requires extensions to both Docker and YARN, and bringing the two together through the right interfaces. We are grateful to both communities for their enthusiastic support of the needed contributions. These new features benefit not only YARN and Docker but also other ambitious efforts, such as Mesos and Kubernetes, that address data-center resource management through containers.

Dinesh Subhraveti is responsible for the multi-tenancy and virtualization infrastructure at Altiscale. As part of his Ph.D., he developed the notion of operating-system-level virtualization, which later came to be known in the industry as containers. Published at OSDI 2002, his work showed for the first time that enterprise applications can be virtualized and live-migrated. Dinesh applied that research to drive the industry's first container virtualization product for enterprise Linux applications at Meiosys, the company behind Linux Containers that IBM acquired in 2005.

He has authored more than 35 patents and papers in the areas of virtualization, storage and operating systems. He holds a B.E. in computer science from BITS-Pilani, India, and M.S., M.Phil. and Ph.D. degrees in computer science from Columbia University, New York. He has also taught, both at Columbia and at meetups and industry conferences around the world.
