Coding Ethics for AI & AIOps: Designing Responsible AI Systems

AIOps has taken human-machine collaboration to the next level: humans and machines are no longer just coexisting but collaborating and working together like team members.

By Manisha Singh, Advanced Analytics & Tech Strategist

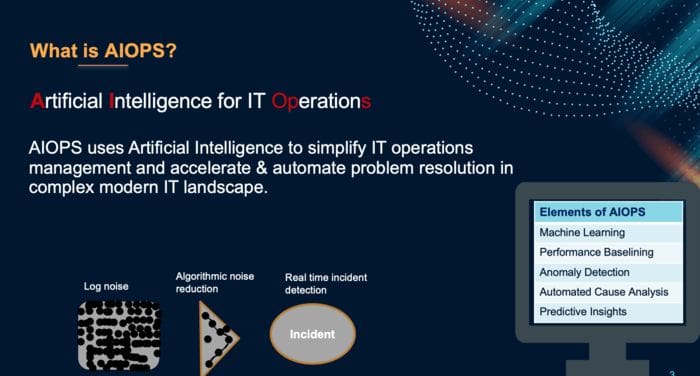

WHAT IS AIOPS?

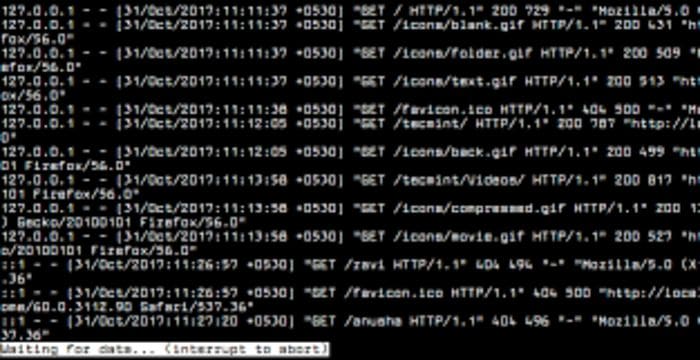

Can you relate to this image? It shows a typical log file of the kind support and dev teams have long struggled with, reading it manually line by line to resolve an outage or anomaly. That was the era of traditional IT operations, where the process was time-consuming; correlating different layers of the platform and multiple log files was difficult; results could vary and were valid only for a particular time window; and results could be lost because history wasn't saved. As a result, this approach did not scale.

This tied up people from support, dev, and infra teams on emergency calls that ran for several hours, with everyone gazing at multiple dashboards and tools and wondering where to start. We followed a REACTIVE approach: for example, we would wait for a CPU metric to breach the 80% threshold before we could act.

What if we could harness the logs that nobody really reads today and let the machine analyse the trends? What if the machine could predict that tomorrow at 8 PM this platform will suffer high CPU? That is the power of AIOps.
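To make the 8 PM example concrete, here is a minimal sketch of one very simple forecasting approach: a seasonal (hour-of-day) average. It assumes each CPU sample is tagged with the hour it was taken. The sketch averages past values for each hour and flags an hour whose forecast reaches the 80% threshold. The function names and the sample history are hypothetical. Real AIOps platforms use far richer models; this only illustrates predicting a breach before it happens.

```python
from collections import defaultdict
from statistics import mean


def hourly_profile(samples):
    """Average CPU % for each hour of day, from (hour, cpu_pct) samples."""
    by_hour = defaultdict(list)
    for hour, cpu_pct in samples:
        by_hour[hour].append(cpu_pct)
    return {hour: mean(values) for hour, values in by_hour.items()}


def forecast_breach(samples, hour, threshold=80.0):
    """Forecast tomorrow's CPU % at `hour` as the historical mean for that hour.

    Returns (forecast, will_breach); forecast is None if the hour was never observed.
    """
    forecast = hourly_profile(samples).get(hour)
    return forecast, forecast is not None and forecast >= threshold


# Hypothetical history: three days of samples taken at 14:00 and 20:00.
history = [(14, 45.0), (20, 85.0),
           (14, 50.0), (20, 88.0),
           (14, 47.0), (20, 91.0)]

print(forecast_breach(history, 20))  # (88.0, True): act before 8 PM tomorrow
print(forecast_breach(history, 14))
```

A plain per-hour mean ignores trends and weekly patterns. It is used here only because it shows the shift from "react at 80%" to "expect 80% at 8 PM" in a few lines.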

AIOps can help predict and prevent anomalies well before users are impacted. It creates real-time baselines of normal behaviour and alerts on deviations from them. It also provides automated root cause analysis, so we know where to narrow down on problem areas. It can automatically correlate events across different layers (infra, OS, application, and database) into a single meaningful incident, filtering out noise. This greatly reduces MTTR (mean time to resolve) for outages.
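The difference between a fixed threshold and a learned baseline can be sketched in a few lines. This is an assumed, minimal illustration, not any particular AIOps product's algorithm. It uses a rolling mean and standard deviation with a z-score cut-off. A spike to 75% never trips the reactive 80% rule described earlier, but it stands far outside the recent baseline. `baseline_anomalies`, `window`, and `k` are hypothetical names and defaults.

```python
from statistics import mean, stdev


def baseline_anomalies(values, window=5, k=3.0):
    """Return indices whose value deviates more than k standard deviations
    from the rolling baseline formed by the previous `window` points."""
    if window < 2:
        raise ValueError("window must be at least 2 to estimate a standard deviation")
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            if values[i] != mu:
                anomalies.append(i)
        elif abs(values[i] - mu) / sigma > k:
            anomalies.append(i)
    return anomalies


# Hypothetical CPU % samples; index 7 jumps well above the usual ~41%.
cpu = [40, 42, 41, 43, 40, 42, 41, 75, 42, 41]

static_alerts = [i for i, v in enumerate(cpu) if v > 80]  # reactive 80% rule
print(static_alerts)            # []
print(baseline_anomalies(cpu))  # [7]
```

A production detector would typically exclude confirmed anomalies from later baselines and account for daily or weekly seasonality. Both are left out here for brevity.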


So AIOps has great potential and is being adopted for many use cases at a fast pace.

RESPONSIBLE AI

This raises the need for a real, honest dialog about how we build responsible AI systems and how we enforce human checks and balances on these machines. This responsibility lies with all engaged citizens.

What does responsible mean? It means being able to justify automated decisions.

Designing Responsible AI Systems

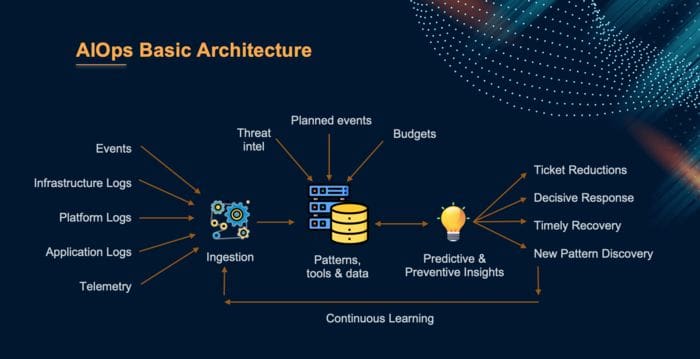

We must start by answering the important questions around policy, technology, and process, as shown below:

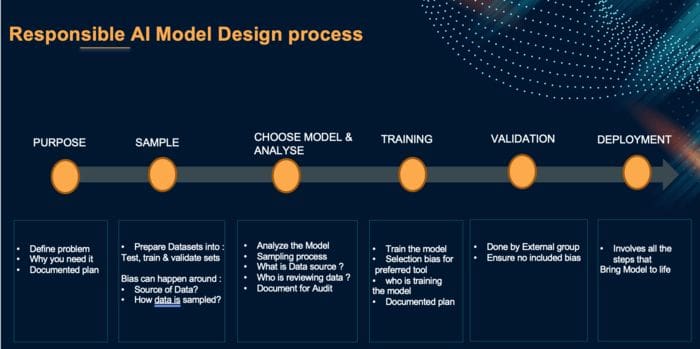

Here is a design pipeline showing the important elements of the overall AI model design process. We always start with purpose, i.e. what we want out of the model. Then comes sampling, where we explore the data and prepare three data sets: train, test, and validation. As a rule of thumb, we keep 70% for training, 20% for testing, and 10% for validation. There is a chance of bias at this stage, arising from which data sources are used and how the data is sampled; the data could be skewed rather than evenly distributed. Next is model selection and analysis, where we choose a model suited to our use case and analyse the data sources, the sampling process, and who is reviewing the model. Then comes training, where we train the model. Here again there is a chance of selection bias arising from preferred tools, and of bias from whoever is training the model. Next is validation, which must be performed by an external group to ensure no bias has been introduced. Finally, in the deployment stage, we deploy the model to production.
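The 70/20/10 rule of thumb above can be sketched as follows. Skewed sampling is one of the bias sources mentioned, so this minimal sketch assumes we want a stratified split. Each group is shuffled and split separately, so every set keeps roughly the same group mix as the full data. Groups are identified through a hypothetical `key` function, for example by region or customer segment. The function name, the `segment` field, and the seed are illustrative only.

```python
import random
from collections import defaultdict


def stratified_split(records, key, ratios=(0.7, 0.2, 0.1), seed=42):
    """Split records into (train, test, validation), preserving each group's share."""
    if abs(sum(ratios) - 1.0) > 1e-9:
        raise ValueError("ratios must sum to 1")
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    groups = defaultdict(list)
    for record in records:
        groups[key(record)].append(record)

    train, test, val = [], [], []
    for members in groups.values():
        rng.shuffle(members)
        n = len(members)
        n_train = round(n * ratios[0])
        n_test = round(n * ratios[1])
        train.extend(members[:n_train])
        test.extend(members[n_train:n_train + n_test])
        val.extend(members[n_train + n_test:])  # remainder goes to validation
    return train, test, val


# Hypothetical data: 60 records from segment A, 40 from segment B.
records = [{"id": i, "segment": "A" if i < 60 else "B"} for i in range(100)]
train, test, val = stratified_split(records, key=lambda r: r["segment"])
print(len(train), len(test), len(val))  # 70 20 10
```

Rounding happens per group, so with very small groups the overall proportions can drift by a record or two from 70/20/10.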

RISKS POSED BY AI ACROSS INDUSTRY

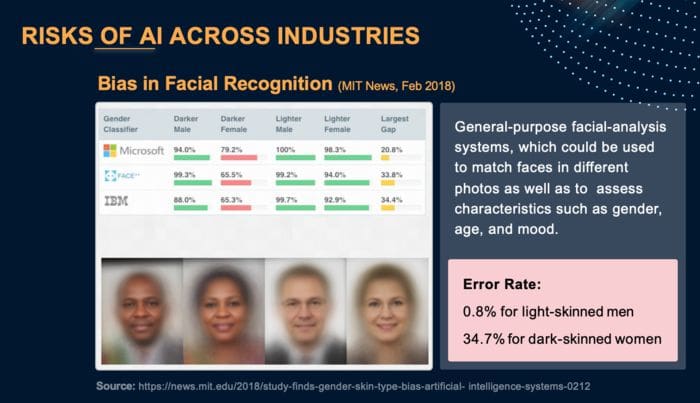

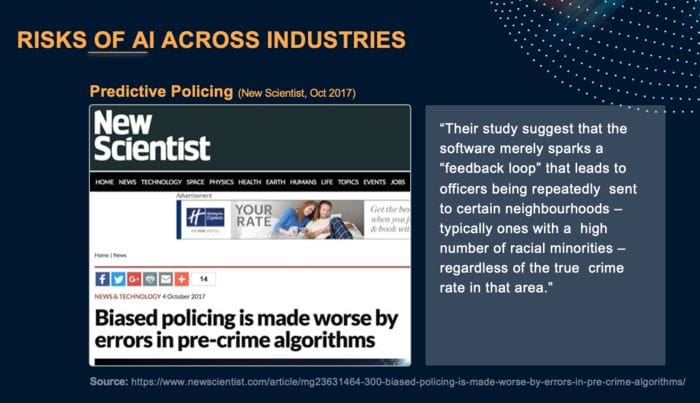

There are various risks posed by AI across industry. For example, general-purpose facial analysis systems were found to be biased towards fair-skinned faces, and predictive policing has shown racial bias, as illustrated above.
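Bias audits like the facial-recognition studies mentioned above usually break a model's behaviour down by group instead of trusting one overall score. The minimal sketch below uses made-up data and hypothetical group names. For each group it reports accuracy and selection rate, where selection rate is the share of favourable predictions. It then computes the disparate-impact ratio against a reference group. A ratio below 0.8 fails the "four-fifths" heuristic from US employment-selection guidelines. This is one fairness measure among several, and different measures can disagree.

```python
from collections import defaultdict


def group_report(y_true, y_pred, groups):
    """Per-group accuracy and selection rate (share of favourable, i.e. 1, predictions)."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "selected": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        s = stats[group]
        s["n"] += 1
        s["correct"] += int(truth == pred)
        s["selected"] += int(pred == 1)
    return {g: {"accuracy": s["correct"] / s["n"],
                "selection_rate": s["selected"] / s["n"]}
            for g, s in stats.items()}


def disparate_impact(report, reference):
    """Each group's selection rate divided by the reference group's selection rate."""
    ref_rate = report[reference]["selection_rate"]
    if ref_rate == 0:
        raise ValueError("reference group has no favourable predictions")
    return {g: r["selection_rate"] / ref_rate
            for g, r in report.items() if g != reference}


# Hypothetical audit data: 4 predictions per group.
y_true = [1, 1, 1, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0]
groups = ["group_a"] * 4 + ["group_b"] * 4

report = group_report(y_true, y_pred, groups)
ratios = disparate_impact(report, reference="group_a")
print(report)
print({g: r < 0.8 for g, r in ratios.items()})  # True = fails the four-fifths heuristic
```

On this toy data, overall accuracy is 87.5%. That figure hides a 25-point accuracy gap between the groups and a selection-rate ratio of one third.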

ETHICAL & RESPONSIBLE AI PRINCIPLES FOR RISK REMEDIATION

AI is embedded in everyday life, and we can build trustworthy systems only by embedding ethical principles into AI applications and processes. When it comes to being responsible, there is no single clear policy or framework. We must focus on the following AI principles to mitigate the risks posed by AI:

Fairness. The model should treat everyone fairly, without preference or discrimination, and without causing harm.

Robustness. Deployed models must be robust against unseen data. In production, models will be exposed to situations they have not seen before, and they should still produce the intended results. They should also be robust against cyber attacks.

Lawfulness. The model must act in accordance with the law and meet regulatory compliance requirements.

Safety and data security. The safety and data security of individuals must be of prime importance and should be ensured at all costs. The model should be safe and shouldn't harm anyone, physically or mentally.

Human in the loop. The amount of human involvement in an automated decision should depend on the risk severity of the use case.
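The human-in-the-loop principle can be expressed as a simple routing rule. In this minimal sketch, the risk tiers, the per-tier confidence thresholds, and the action names are all hypothetical. Low- and medium-risk actions with enough model confidence run automatically. High-risk actions always go to a person. Anything unrecognised defaults to human review.

```python
from dataclasses import dataclass

# Hypothetical policy: minimum model confidence for unattended execution per risk tier.
# "high" is deliberately absent, so high-risk actions are never auto-executed.
AUTO_EXECUTE_MIN_CONFIDENCE = {"low": 0.60, "medium": 0.85}


@dataclass
class ProposedAction:
    name: str
    confidence: float  # model's confidence in the recommendation, 0..1
    risk: str          # "low", "medium" or "high"


def route(action: ProposedAction) -> str:
    """Return 'auto_execute' or 'human_review' for a proposed automated action."""
    min_conf = AUTO_EXECUTE_MIN_CONFIDENCE.get(action.risk)
    if min_conf is None or action.confidence < min_conf:
        # Unknown or high risk tier, or the model is not confident enough.
        return "human_review"
    return "auto_execute"


for action in [ProposedAction("clear_temp_files", 0.92, "low"),
               ProposedAction("scale_out_cluster", 0.70, "medium"),
               ProposedAction("failover_database", 0.99, "high")]:
    print(action.name, "->", route(action))
```

Logging each routed action with its confidence and risk tier also supports the goal of being able to justify automated decisions.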

AI EXPLAINABILITY

Explainability in AI means that we should be able to explain and justify an AI system's automated decisions.

'AI as a black box is a myth.' I want to highlight this point: no matter how complicated a model is, it must be clear and understood. We can leverage several techniques and tools to probe such black-box models in order to validate and measure their responses.
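One widely used technique for probing a black-box model is permutation importance. You shuffle one input feature at a time and measure how much accuracy drops. A large drop means the model leans heavily on that feature, which is worth checking when the feature is sensitive. The sketch below is plain Python written against a hypothetical `black_box` predict function and toy data. scikit-learn ships a production version as `sklearn.inspection.permutation_importance`.

```python
import random


def accuracy(predict, X, y):
    """Share of rows where the model's prediction matches the label."""
    return sum(predict(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean accuracy drop when each feature column is shuffled independently."""
    rng = random.Random(seed)
    baseline = accuracy(predict, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild rows with only column j replaced by its shuffled values.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(predict, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical black box that, unknown to the auditor, only uses feature 0.
def black_box(row):
    return int(row[0] > 0.5)


X = [[0.1, 5], [0.9, 3], [0.2, 1], [0.8, 7], [0.3, 2], [0.7, 4]]
y = [0, 1, 0, 1, 0, 1]
print(permutation_importance(black_box, X, y))  # feature 1 scores 0.0
```

The probe needs only the ability to call the model, not its internals. That is why it works on "black boxes".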

Transparency is important from the customer's perspective. There should be transparency on the following:

(a) Model transparency. How the model works, i.e. its code, data, predictions, and outcomes, must be transparent. (b) Process transparency. The development process and all its aspects, such as governance, controls, and design considerations, must be transparent and known.
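One lightweight way to make both kinds of transparency concrete is a machine-readable "model card" published alongside the model. The sketch below is an assumption-laden example, not a standard schema. The field names, model name, team names, and reviewer are all hypothetical. The metric values are left as `None` placeholders to be filled in from a real validation run.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Hypothetical minimal model card covering model and process transparency."""
    name: str
    version: str
    purpose: str                # what we want out of the model
    training_data: str          # data sources and sampling notes
    evaluation_metrics: dict    # filled in from validation results
    known_limitations: list = field(default_factory=list)
    owners: list = field(default_factory=list)
    approved_by: str = ""       # governance sign-off (process transparency)


card = ModelCard(
    name="cpu-anomaly-detector",
    version="1.2.0",
    purpose="Flag abnormal CPU usage on production hosts before users are impacted",
    training_data="Host metrics; document sources, time range and sampling here",
    evaluation_metrics={"precision": None, "recall": None},  # placeholders
    known_limitations=["Not validated for hosts added after training"],
    owners=["aiops-team"],
    approved_by="model-risk-review-board",
)
print(json.dumps(asdict(card), indent=2))
```

Keeping the card in version control next to the model code makes changes to purpose, data, or sign-off reviewable like any other change.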

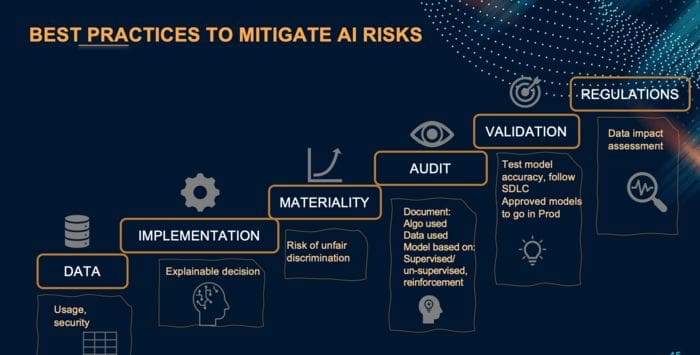

BEST PRACTICES FOR AI RISK REMEDIATION

To sum it all up, we must bear in mind the aspects mentioned below while executing an AI project.

All in all, adopting AI and AIOps is a mindset change. To leverage this technology for good, we must embrace it with an open mind and a willingness to change. All engaged citizens should contribute towards responsible AI for the good of society.

RESOURCES & TOOLS FOR DESIGNING RESPONSIBLE AI SYSTEMS

You can use the following resources, tools, and techniques to design responsible AI systems based on the AI principles discussed earlier.

(a) FairnessComparison. An extensible test-bed to facilitate direct comparisons of algorithms with respect to fairness measures. Includes raw and preprocessed datasets.

https://github.com/algofairness/fairness-comparison

(b) Themis-ML. Python library built on scikit-learn that implements fairness-aware machine learning algorithms.

https://github.com/cosmicBboy/themis-ml

(c) FairML. Assesses the significance of model inputs to quantify a prediction's dependence on its inputs.

https://github.com/adebayoj/fairml

(d) Aequitas. A web audit tool as well as a Python library. Generates a bias report for a given model and dataset.

https://github.com/dssg/aequitas

(e) Fairtest. Tests for associations between algorithm outputs and protected populations.

https://github.com/columbia/fairtest

(f) Themis. Takes a black-box decision-making procedure and automatically designs test cases to explore where the procedure might be exhibiting group-based or causal discrimination.

https://github.com/LASER-UMASS/Themis

(g) Audit-AI. A Python library built on top of scikit-learn with various statistical tests for classification and regression tasks.

https://github.com/pymetrics/audit-ai


Bio: Manisha Singh is an Advanced Analytics & Tech Strategist, Keynote Speaker, Author, and Engineering Blogger. Manisha heads an AIOps and Big Data Engineering Team.

Original. Reposted with permission.
