Data Warehousing with Snowflake for Beginners

This tutorial provides only a brief synopsis of the data warehouse in Snowflake, which we will go through in more detail.

By Boga Mounika, Technical Content Writer at HKR Trainings

Snowflake is software as a service cloud-based data warehousing on AWS. Snowflake offers a data warehouse that is faster, easier to set up, and significantly more adaptable than typical data warehouse systems. This tutorial provides only a brief synopsis of the data warehouse in Snowflake, which we will go through in more detail.

What is a Data Warehouse in Snowflake?

Snowflake is the first database of analytics to be developed entirely in the cloud and supplied as a data warehouse as a service (DWaaS). It's compatible with cloud systems like Google, Azure, and AWS. There is no need to manage, configure, or install any hardware (real or virtual) or software because it runs fully on infrastructure for the public cloud. It's perfect for data lakes, data science, data warehousing, data engineering, and data application development. Its architecture and data sharing capabilities, however, make it unbeatable.

Connecting to Snowflake

Snowflake could be connected to other services in various ways:

- User Interface based on Web

- JDBC and ODBC drivers

- Native connectors

- Command-line clients

- Connectors from third parties like BI and ETL tools

Data Loading in Snowflake

The basics of Snowflake data loading are covered in this section. Snowflake allows you to load data in one of four options.

- Bulk loading with SnowSQL

- Snowpipe will be used to automate data loading in bulk

- Limited data WebUI

- Externally sourced data can be bulk loaded using third-party applications

Let's have a look at SnowSQL first.

1. Build Loading using SnowSQL

The data loading in bulk is done in two stages: firstly, files staging and then loading data. We'll concentrate on data loading from CSV files in this section.

Files Staging: Data files are being uploaded to a location where Snowflake could access them is known as staging files. After that, load your data is loaded into tables from the stage files. You can stage files on internal places called stages in Snowflake. Internal stages offer secure data file storage without the need for external storage.

Data Loading: To put data into a snowflake, you'll need a virtual warehouse. Each file's data is extracted and inserted as rows in the database by the warehouse.

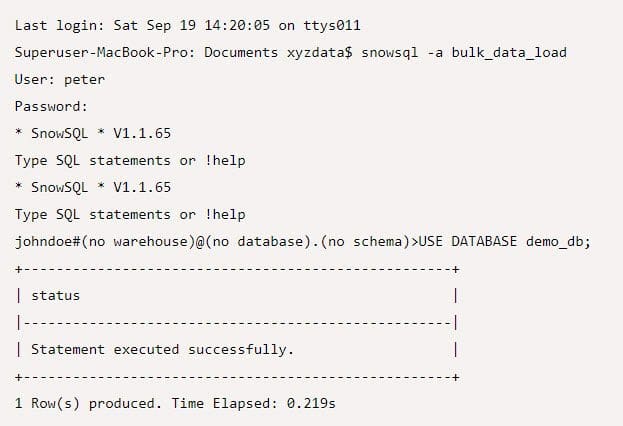

In the demo database demo_db, a SnowSQL SQL client would be used to load CSV files from a local workstation into a table named contacts. We'll utilize the name to keep the files in internal staging before loading them.

Make use of the database demo_db:

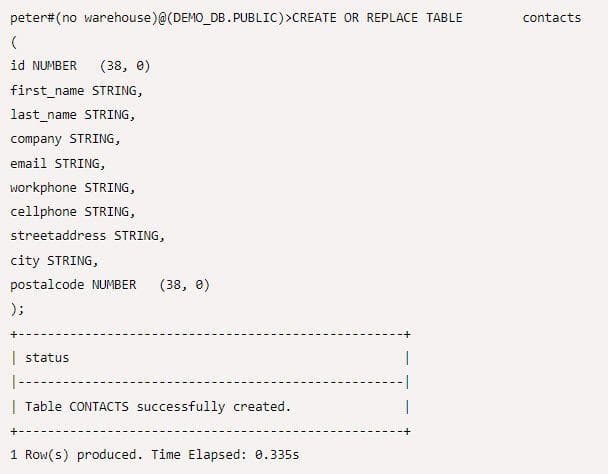

The below SQL was used to create the tables:

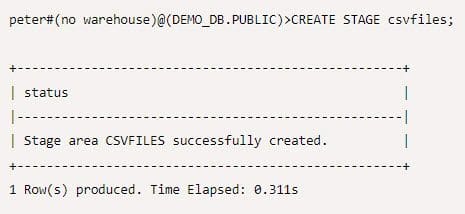

After that, make a CSV file for internal stage:

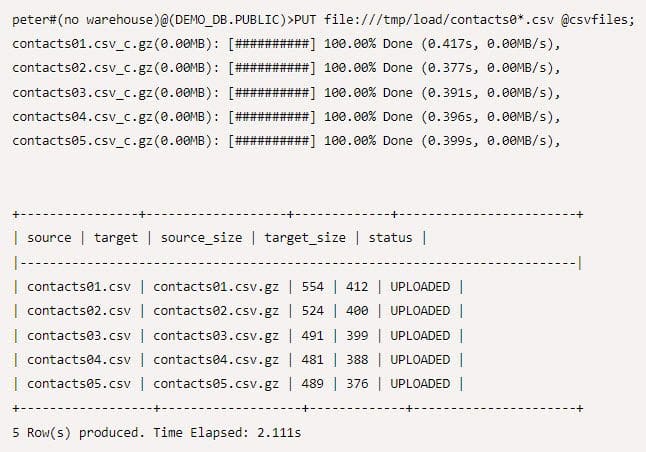

To stage data in csv files, use the PUT command. The wildcard “contacts0*.csv” is used for loading multiple files, and the @ symbol specifies where the files should be staged - in this scenario, @csvfiles.

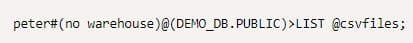

Use the LIST command to verify that the CSV files are staged:

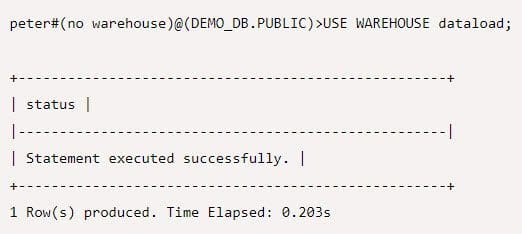

Specify a virtual warehouse to utilize for loading the files from staged files into a CONTACTS table:

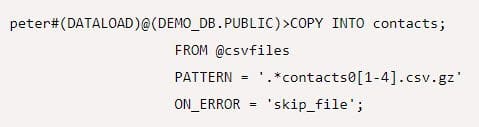

Fill a Snowflake table by loading the staged files:

INTO indicates the data in the table's location to be loaded, PATTERN specifies the data files that will be loaded, and ON ERROR notifies the command if errors occur.

Using SQL you could query your table now if the load is successful.

2. Snowpipe

We can utilize Snowpipe to bulk load data into Snowflake from externally staged files, as we explained earlier in the post. Snowpipe makes use of the COPY command, which has extra features that allow you to automate the procedure. It removes the requirement for a virtual warehouse by using external computing resources for continuous data loading.

3. Third-party tools - ELT/ETL

Third-party data loading solutions such as ELT/ETL could also be utilized for large data loading. Snowflake offers an ever-growing ecosystem of data-loading apps and services from various sources externally.

4. Web Interface

The web interface is the last option for data load. You can import a limited quantity of data into Snowflake by simply selecting the table that you use and the button “load” to be clicked. It streamlines loading by merging data load and staging into a single process, and it discards staged files automatically after loading.

Conclusion

Cloud data warehousing is gaining a lot of momentum these days, and tools like Snowflake are proving to be more productive than traditional-based solutions. Companies may improve their performance and estimate future growth by implementing Snowflake into their operations.

Boga Mounika works as a content writer at HKR Trainings. Boga has experience in handling technical content writing and aspires to learn new things to grow professionally, and is an expert in delivering content on market-demanding technologies like AlterYX, PTC Windchill, Arcsight, Looker, Snowflake, and more.