4 Factors to Identify Machine Learning Solvable Problems

The near future holds incredible possibility for machine learning to solve real world problems. But we need to be be able to determine which problems are solvable by ML and which are not.

As per the latest research, AI has a great potential to contribute $15.7 trillion to the global economy by 2030. The world is already seeing widespread adoption of AI in commercial use ever since digital acceleration induced by pandemic. IBM leaders project a 90% increase in adoption rate within the next 18-24 months. And it totally resonates with the ubiquitously found AI everywhere:

- AI will decide whether a candidate is suitable for a job

- AI finds the good risk loan candidates in the credit scoring model

- We are dependent on AI to find frauds in a financial transaction

- Out preferences of video and news feed is curated by an AI

- And the list goes on.

Are We Using AI To Its True Potential?

Data is generated at an enormous rate and is undoubtedly the new currency. A lot of firms are sitting on heaps of data and find themselves in a race against time to get the competitive edge. Everyone is working on building quick prototypes but is missing out on a foundation question – whether they really need AI to solve that problem, rather every problem. It looks and sounds fancy to sell your product as an AI-powered solution, but the reality is different.

Source: Technology cartoon psd created by jcomp - freepik plus edits from author

Hence comes the need of upskilling ourselves with what are the problems that AI/ML can solve.

Google has succinctly captured the characteristics of what problems are good for ML.

It is imperative to not jump onto the solution i.e., architecting the algorithm, presuming data and its attributes, data volume, and evaluation metrics to solve a problem.

As much time you spend on understanding the problem in the initial days or weeks of the projects, the chances of delinquencies reduce to a great degree. Focus on the problem statement and get the requirements lucidly. Make sure that you have understood the business ask before proposing the possible ways to solve the problem.

Once you have assessed the business’s exposed nerve, check what are the challenges of solving it from conventional techniques. Businesses have been widely using heuristic rules for ages, what is unique about the offered problem that needs an AI solution.

Broadly it comes down to four major factors: Pattern, Environment, Validation, and Dimension.

Pattern

Let’s start with ‘pattern’ first.

A problem that requires examining data and finding patterns will find AI in its solution. Now that you have understood the problem in its entirety, start working on some potential solutions that best address your problem. Note that you will not hit a jackpot with the first few iterations – generally, these initial reiterations involve scratching the surface and exposing the weak points of your baseline model, and setting the motion.

You don’t just iterate through the model but through the data exploration cycles as well.

As you iterate your way through the different versions of the model in search of the best model, the documentation will reveal your “tangible efforts” across different experiments and their suitability for the problem. But what is not shown on the paper is your knowledge bank, it is getting richer with all the failures that eventually lead to the best model. This knowledge bank will distinguish your intuitiveness in identifying ML solvability as a data scientist.

Next comes the biggest weapon that can win this AI war – the data. Is it available in the first place – if not then you will find yourself in soup. You will wander to get the closest publicly available data or simulate one for your business case. In either case, the model’s ability to solve the problem is just a proxy to the model built from actual data (which is not yet available to you, unfortunately).

If data is available, then the predictive power of the model depends on getting the right attributes carrying a good signal. And the buck does not stop here - the next concern is to evaluate how much data is needed to get the first model version out? Well, it depends on how complex a task ML needs to solve? If the features are easily segregating the different classes of the target variable (assuming classification ML), lesser data will also do the job like in ‘Iris toy data’.

Always remember that ML can do only as good as the data supplied to it and learn from the statistical associations. Not only do you need to input good signal attributes but removing the features that add noise will also help the model to learn faster and better.

You have built many hypotheses and run many experiments, but how do you select the best model. In fact, what is the best model? A model with a good generalizability quotient on previously unseen data is what everyone adores. That defines how robust the model is. Running experiments is easy but finding a robust model isn’t. Create an evaluation dataset that best represents what your model will get to see and perform on when deployed in production. Run your best model on this unseen evaluation dataset to find if it is truly the best.

Environment

You will have a tough time building ML models in an environment that is highly dynamic. Let me explain with an example. Suppose you are building a stock price prediction model where stock prices change for various reasons – sentiment-driven, quarterly and annual releases, macroeconomic factors, news-based, foreign investments, near derivative expiry movements, FED announcement, and many more.

Source: Business vector created by pch.vector - freepik plus edits from author

Realistic expectation from the model is to check whether and to what extent a reasonable human can achieve that outcome. What I mean by reasonable is that an average human can pick the stock patterns and trade profits as compared to a seasonal stock market expert who has learned the nuances and intuitions by failing and learning many times. Exogenous environments, in general, are difficult to model and require extensive domain knowledge to build an ML model.

Validation

Before you start modeling, understand how the model performance translates into business metrics.

Scientific metrics like Precision, Recall, RMSE, ROC-AUC can evaluate the model on unseen data to judge the goodness of an ML model, but does it reverberate well with the business.

For example: What impact does an 80% Precision have on conversion rate and business revenue in turn?

Also, there must be a way to validate the model predictions. Get an understanding of consensus on how the model output will be evaluated by a group of human experts.

Dimensions

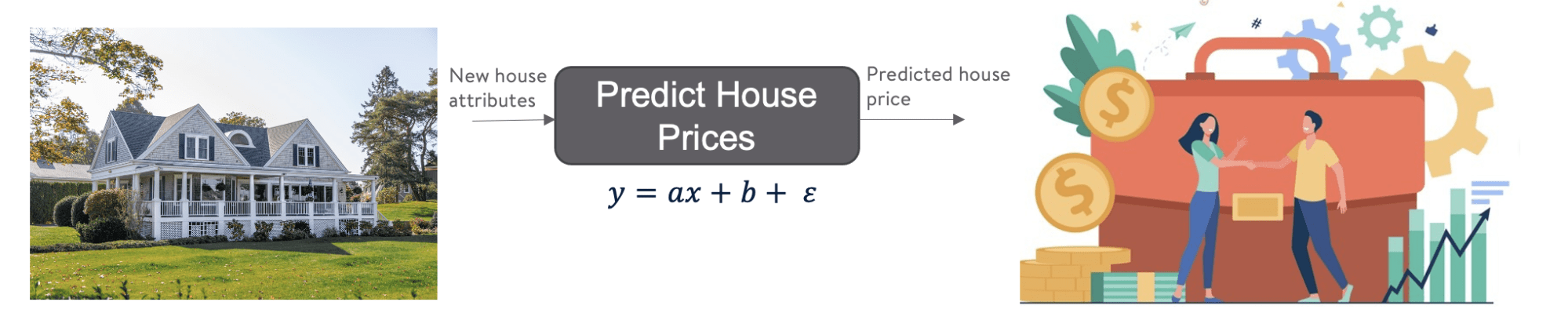

Imagine you want to buy a house and estimate the market value of the house to evaluate whether it is overpriced or underpriced. One way to estimate the price is to correlate it with the area - the greater the area, the higher the price. It can give an approximate which might not be very accurate.

Next, you look for the other factors like the number of rooms in a house or how old it is i.e., the year it was built to get a closer estimate. Similarly, you hunt other factors like - does this house have a school nearby and is surrounded by medical facilities to get better price prediction.

Source: Finance vector created by pch.vector and Source: Unsplash- plus edits from author

Now as the dimensions grew i.e., as you considered a greater number of relevant factors that go into deciding the house price, the better your estimate became. But it becomes humanly difficult to perform such calculations with increasing factors. In such cases, ML has the advantage of mining data associations on a huge dataset as compared to human experts.

In this article, we have learned the importance of identifying good ML solvable problems. We also talked about how the problem-first and not the solution-first traits are important to understand whether ML will serve good use to the given problem. Later the article explains how and why the pattern is crucial to use ML, why ML is not exclusively useful for constantly changing environments, how ML is apt for increasing dimensions, and how to set up a mechanism to validate the model output.

Vidhi Chugh is an award-winning AI/ML innovation leader and an AI Ethicist. She works at the intersection of data science, product, and research to deliver business value and insights. She is an advocate for data-centric science and a leading expert in data governance with a vision to build trustworthy AI solutions.