Everything You Need to Know About Data Lakehouses

Learn everything you need to know about data lakehouses.

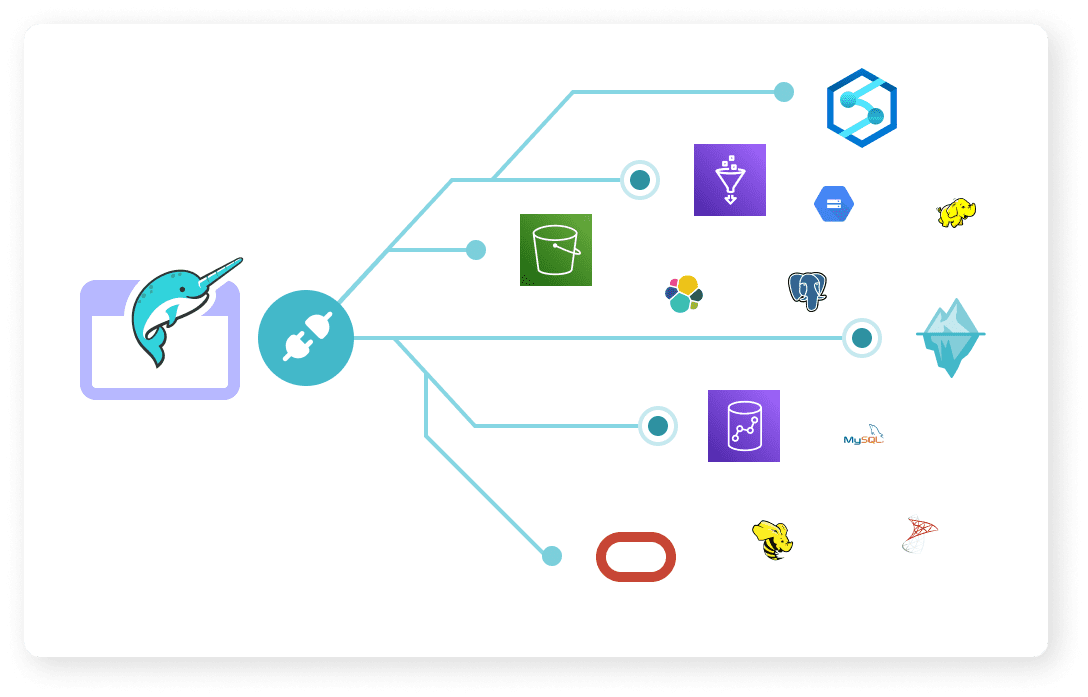

Source: Dremio

Is "data lakehouse" just another buzzword in the world of tech? It might seem so. We first started off with data warehouses, an information storage architecture that holds data for analysis to support the decision-making process.

Data warehouses date back to the 1980s and have benefited many different areas of business. However, the era of Big Data soon arrived, and unstructured raw data came to make up roughly 80-90% of the available data and information in many organizations.

This is where data lakehouses became the next big thing: the data warehouse is built around a structured model and was unable to handle unstructured data.

As a part of this article, I have teamed up with Dremio, an organization which offers "a lakehouse platform for teams that know and love SQL." Dremio was founded in 2015 and specializes solely in data lakehouses. They have brought their technology to a variety of organizations, including Unilever, Deloitte, and Nokia, and have cloud and technology partners such as AWS. Dremio has gone on to become a leader in the data lakehouse space.

"Data lakehouses combine the scalability and flexibility of data lakes with the performance and functionality of data warehouses. Data lakehouses are the best of both worlds and the future of data management for analytical purposes."

— Mark Lyons, Dremio.

Their team has been helpful and answered a series of questions we posed in order to help you understand all you need to know about data lakehouses.

A Discussion with Mark Lyons of Dremio

Editor's note: The following questions have been answered by Mark Lyons, Vice President of Product Management at Dremio.

What is a data lakehouse?

Data lakehouses are fairly new to the technology market: the concept was invented in 2010 and rapidly gained mainstream adoption from there. Data lakehouses can be used for data analytics and, unlike data warehouses, can process unstructured data.

What does a data lakehouse do?

Lakehouses enable companies to run all their analytical workloads, from data exploration to mission-critical business intelligence dashboards, on a single copy of data (typically in Apache Parquet format, which is the most performant) as it lives in cloud object storage.

How do data lakehouses work?

Data lakehouses are the 2.0 version of the data lake, with many of the same goals but improved technology that solves the historic shortcomings of the data lake, also known as the “data swamp”. A data lakehouse is more than files in object storage.

Data lakehouses add table formats on top of those files. These table formats enable warehouse functionality, ranging from consistent inserts, updates, and deletes to underlying data optimizations like file compaction, which prevents the well-known “small files” problem, and more.
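To make file compaction a little more concrete, here is a minimal sketch of how a compaction job might decide which small files to merge. It is a toy planner under stated assumptions, not the algorithm used by Apache Iceberg, Dremio, or any other product: the `DataFile` class, the `plan_compaction` function, the file paths, and the 128 MB target are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFile:
    """Hypothetical record describing one data file in a table."""
    path: str
    size_mb: int


def plan_compaction(files, target_mb=128):
    """Group files smaller than target_mb into batches to be rewritten together.

    Greedy sketch: take small files from largest to smallest and start a new
    batch whenever adding the next file would exceed the target size.
    """
    small = sorted(
        (f for f in files if f.size_mb < target_mb),
        key=lambda f: f.size_mb,
        reverse=True,
    )
    batches, current, current_size = [], [], 0
    for f in small:
        if current and current_size + f.size_mb > target_mb:
            batches.append(current)
            current, current_size = [], 0
        current.append(f)
        current_size += f.size_mb
    if current:
        batches.append(current)
    # Rewriting a batch that holds a single file would not reduce the file count.
    return [b for b in batches if len(b) > 1]


# Illustrative input: one large file and five small ones (sizes are made up).
files = [
    DataFile("part-0.parquet", 10),
    DataFile("part-1.parquet", 20),
    DataFile("part-2.parquet", 200),
    DataFile("part-3.parquet", 60),
    DataFile("part-4.parquet", 50),
    DataFile("part-5.parquet", 5),
]
batches = plan_compaction(files)
for batch in batches:
    print([f.path for f in batch], sum(f.size_mb for f in batch), "MB")
```

In this sketch the five small files are grouped into two rewrite batches, while the 200 MB file is left alone. A real table format would also have to commit the rewritten files atomically so that readers never see a half-compacted table.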

What are the essential features of a data lakehouse?

Important features include:

- Multi-Engine Support (Machine learning, SQL, Streaming, etc.)

- Inserts, updates, and deletes on an industry-standard table format such as Apache Iceberg

- Atomic transactions and data consistency guarantees like a traditional Database Management System (DBMS)

- Metadata (Hive metastore, Project Nessie, AWS Glue Catalog, etc.)

- Vendor agnostic

- Standard client-side integrations to support data consumers using notebooks or dashboard tools

- Auto-scaling

- Software or SaaS for users to choose what best fits their needs.
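The atomic transactions and consistency guarantees in the list above can be illustrated with a small, in-memory sketch. It assumes a snapshot-based design, where every commit produces a new immutable snapshot of the table's file list and a writer working from a stale snapshot is rejected. The names `ToyTable`, `Snapshot`, and `CommitConflict` are hypothetical and do not correspond to the API of Apache Iceberg or any other table format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Immutable view of the table: an id plus the set of data files it contains."""
    snapshot_id: int
    files: frozenset


class CommitConflict(Exception):
    """Raised when a writer tries to commit on top of a stale snapshot."""


class ToyTable:
    """Toy table whose state only changes by appending a whole new snapshot."""

    def __init__(self):
        self._snapshots = [Snapshot(0, frozenset())]

    def current(self):
        return self._snapshots[-1]

    def commit(self, base, add=(), remove=()):
        # Optimistic concurrency check: the writer must have started
        # from the latest snapshot, otherwise the commit is rejected.
        if base.snapshot_id != self.current().snapshot_id:
            raise CommitConflict("table changed since snapshot %d" % base.snapshot_id)
        new_files = (base.files - frozenset(remove)) | frozenset(add)
        snapshot = Snapshot(base.snapshot_id + 1, new_files)
        self._snapshots.append(snapshot)  # the single step that publishes the change
        return snapshot


table = ToyTable()
s0 = table.current()
s1 = table.commit(s0, add=["a.parquet"])

# An "update" swaps files in one commit: readers see either s1 or s2, never a mix.
s2 = table.commit(s1, add=["b.parquet"], remove=["a.parquet"])

print(sorted(s1.files))               # a reader holding s1 still sees its old view
print(sorted(table.current().files))  # new readers see the updated table
```

A reader that picked up `s1` before the update keeps a consistent view of the table, and a writer that tries to commit against the outdated `s0` gets a `CommitConflict` instead of silently overwriting newer data.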

What is the relationship between data lakehouses and data warehouses?

Data lakehouses have similarities and differences with data warehouses. Data lakehouses enable SQL engines (Dremio, Hive, Athena, Spark SQL, etc.) to provide Data Manipulation Language (DML) functions on industry-standard table formats with database-level guarantees for transactions and data consistency.

This makes the data lakehouse equivalent in capabilities to a data warehouse, but the story goes even further when you look at the differences. Data lakehouses support other engines for use cases beyond SQL, such as machine learning or streaming, on the same data. They are also vendor agnostic, which means that all of the data used is kept in open formats, adhering to the open data architecture philosophy.

Why are lakehouses becoming so popular?

With a data lakehouse architecture, data consumers can use their favorite tools to analyze data immediately - this is the big plus! Data engineers save much of the time and money otherwise required to load data into a warehouse for others to use and to maintain the associated infrastructure.

In addition, data lakehouses eliminate vendor lock-in and lock-out that cloud data warehouses are notorious for. Data in cloud object storage is stored in open, vendor-agnostic formats like Apache Parquet and Apache Iceberg, so no vendor has leverage over the data.

In the lakehouse space, companies naturally benefit from competition and innovation. They have flexibility in choosing compute engines to process the type of data that is being used today, and can easily try new compute engines as they emerge in the future.

Conclusion

Dremio’s aim is to shatter a 30-year paradigm that holds every company back. They want to eliminate certain barriers, help companies become innovative and accelerate time to insight, and put control back into the hands of the user.

I hope that this interview with Mark Lyons from Dremio has helped you better understand what data lakehouses are, why they entered the market, how they work, their important features, and how they differ from data warehouses.

We would like to thank Mark Lyons and Dremio for their participation in the creation of this article.

Nisha Arya is a Data Scientist and Freelance Technical Writer. She is particularly interested in providing Data Science career advice, tutorials, and theory-based knowledge around Data Science. She also wishes to explore the different ways Artificial Intelligence can benefit the longevity of human life. She is a keen learner, seeking to broaden her tech knowledge and writing skills whilst helping guide others.