Image by Author

If you thought you had heard all there was to hear about ChatGPT, you’re wrong. OpenAI has made its ChatGPT and Whisper models available through its API, giving developers access to AI-powered language and speech-to-text capabilities.

Let’s take a step back first. Some of you may not know what ChatGPT or Whisper is. So let me give you a simple breakdown.

What is ChatGPT?

ChatGPT is an AI-based chatbot system launched by OpenAI in November 2022. It is built on a model from OpenAI’s GPT-3.5 series, an autoregressive language model that produces human-like text. The model is trained to predict which token comes next.

Examples of what ChatGPT can do include:

- Write long-form content, from articles to papers.

- Write short poems and limericks.

- Break down complex topics into layman’s terms.

- Help you plan and organize meetings, holidays, and more.

- Write personalized communication.

If you would like to know more about ChatGPT, check out these articles:

- ChatGPT: Everything You Need to Know

- ChatGPT as a Python Programming Assistant

- The ChatGPT Cheat Sheet

ChatGPT API

OpenAI has extended the ChatGPT model family with the release of gpt-3.5-turbo. The new model is priced at $0.002 per 1k tokens, making it 10x cheaper than the existing GPT-3.5 models.

GPT models traditionally consume unstructured text, which is represented as a sequence of “tokens”. With ChatGPT, however, the model consumes a sequence of messages along with metadata.
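To make the message format concrete, here is a minimal sketch of a request body for the chat endpoint. The `build_chat_payload` helper and the example message text are hypothetical and only for illustration; the `model` and `messages` fields and the `system`, `user`, and `assistant` roles follow the chat format as OpenAI documents it. Nothing is sent over the network here.

```python
import json

# Roles used by the chat format: "system" sets the behaviour, while
# "user" and "assistant" carry the conversation turns.
VALID_ROLES = {"system", "user", "assistant"}


def build_chat_payload(messages, model="gpt-3.5-turbo"):
    """Return a chat request body (hypothetical helper, not part of any SDK)."""
    for message in messages:
        if message["role"] not in VALID_ROLES:
            raise ValueError(f"unknown role: {message['role']}")
    return {"model": model, "messages": list(messages)}


payload = build_chat_payload([
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Explain tokens in one sentence."},
])

# No request is made; this only prints the JSON that would be posted.
print(json.dumps(payload, indent=2))
```

Each message carries its role as metadata, which is what distinguishes this format from a single block of unstructured prompt text.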

What is Whisper?

In September 2022, OpenAI introduced Whisper, an automatic speech recognition (ASR) system. The speech-to-text model is open source and has received a lot of praise from the developer community.

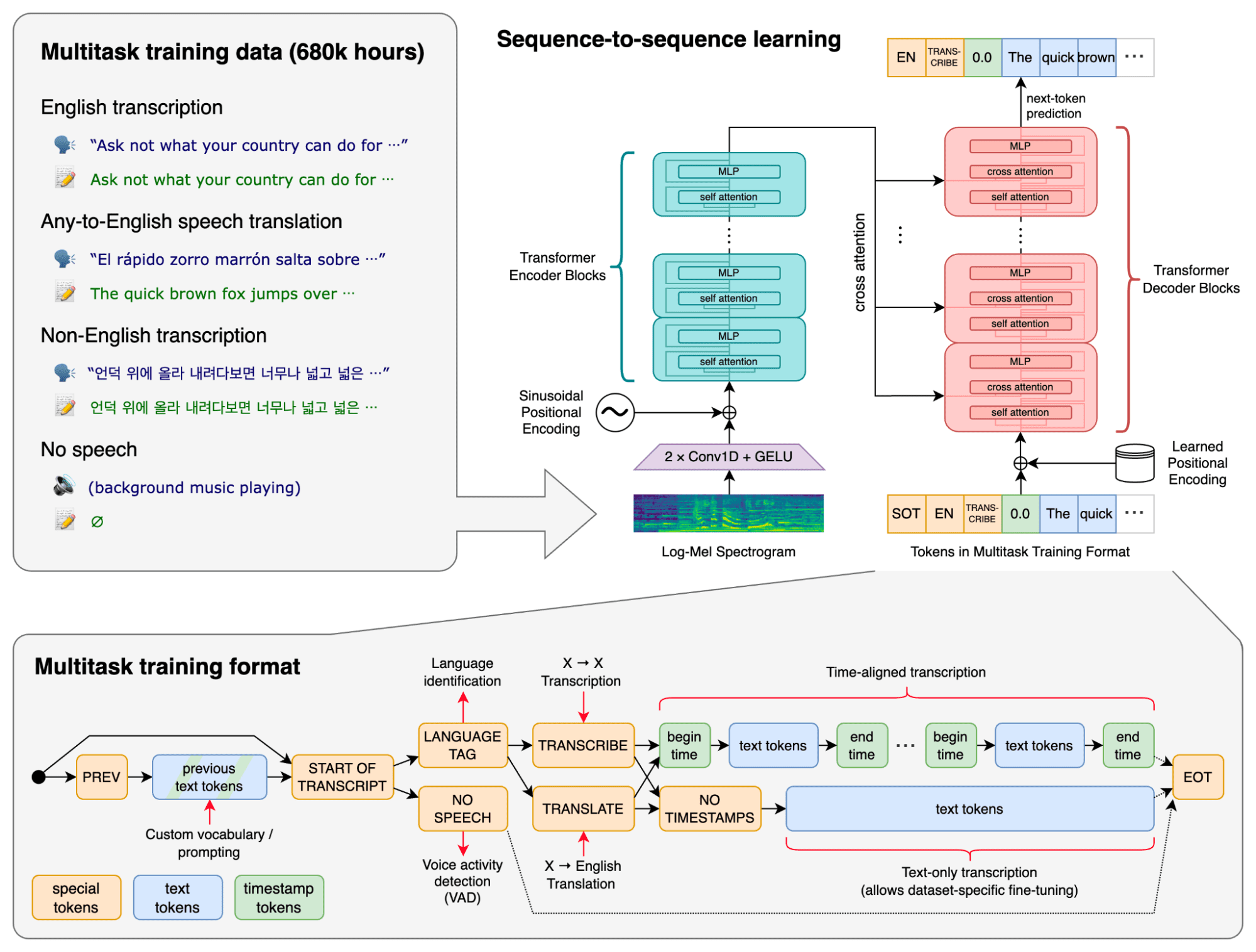

It was trained on 680,000 hours of diverse, multilingual audio, using supervised data collected from the web. The model is also multitask: it can perform multilingual speech recognition, speech translation, and language identification.

The tasks mentioned above are jointly represented as a sequence of tokens so that the decoder can make predictions on them. Combining the tasks in this way naturally eliminates several stages that normally occur in the traditional speech-processing pipeline. Whisper can take files in different formats such as M4A, MP3, MP4, MPEG, MPGA, WAV and WEBM.
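As a rough illustration of how a task can be expressed as tokens, the sketch below builds the kind of special-token prefix that tells the decoder which language and task to handle. The token spellings follow the format described in the open-source Whisper release as far as I understand it, and the `build_task_prefix` helper is hypothetical. Real tokenizers map these strings to integer IDs, which this sketch skips.

```python
def build_task_prefix(language, task, timestamps=True):
    """Build a special-token prefix describing the job for the decoder.

    Hypothetical helper: it returns readable strings, not token IDs.
    """
    if task not in ("transcribe", "translate"):
        raise ValueError("task must be 'transcribe' or 'translate'")
    tokens = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        # Ask the decoder to emit plain text without timestamp tokens.
        tokens.append("<|notimestamps|>")
    return tokens


# English transcription without timestamps.
print(build_task_prefix("en", "transcribe", timestamps=False))

# French audio translated to English text, with timestamps.
print(build_task_prefix("fr", "translate"))
```

Because the task is selected by the prefix, one model can switch between recognition and translation without separate pipeline stages.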

Below is an image of OpenAI’s Whisper approach:

Image from OpenAI GitHub

Whisper API

OpenAI listened to its customers’ needs and took into consideration how hard Whisper can be to run. The large-v2 model is therefore now available through the API, providing convenient on-demand access. It is priced at $0.006 per minute.
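The following is a small pre-flight sketch that checks a file name against the formats listed earlier in this article and estimates the transcription cost at the quoted price. Both helper names are hypothetical, and the cost is assumed to scale linearly with audio duration; actual billing rules may round differently.

```python
from pathlib import Path

PRICE_PER_MINUTE_USD = 0.006  # price quoted in this article
SUPPORTED_EXTENSIONS = {"m4a", "mp3", "mp4", "mpeg", "mpga", "wav", "webm"}


def is_supported_audio(filename):
    """Return True if the file extension is one of the listed formats."""
    extension = Path(filename).suffix.lower().lstrip(".")
    return extension in SUPPORTED_EXTENSIONS


def estimate_transcription_cost(duration_seconds):
    """Estimate the cost in USD, assuming linear per-minute pricing."""
    return duration_seconds / 60 * PRICE_PER_MINUTE_USD


print(is_supported_audio("interview.MP3"))
print(is_supported_audio("notes.txt"))
print(f"${estimate_transcription_cost(3600):.2f} for one hour of audio")
```

At this price, an hour of audio comes to roughly 36 cents.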

Users will also benefit from OpenAI’s highly-optimized serving stack which provides fast performance.

ChatGPT and Whisper

OpenAI was able to reduce the cost of ChatGPT by 90%, and this saving has now opened up more opportunities for API users. The aim is to give developers access to cutting-edge language and speech-to-text capabilities.

Developers can now use OpenAI’s open-source Whisper large-v2 model through the API, which provides much faster and more cost-effective results. As for ChatGPT, the model will keep receiving continuous improvements, which API users will benefit from, along with the option of deeper control over their models.

After receiving feedback from developers, OpenAI made some specific changes to improve the developer experience:

- Improved developer documentation.

- Data submitted through the API is not used to improve services unless you opt in.

- A 30-day data retention policy, with the option of stricter retention depending on needs.

Rather than being limited to OpenAI’s own products, third-party developers can use the ChatGPT and Whisper APIs to integrate these models directly into their own platforms.

Dedicated instances

OpenAI is also offering dedicated instances for users who require deeper control over their model version and system performance. Developers pay by time period and are allocated compute infrastructure reserved for serving their requests. This can make economic sense for developers who plan to run roughly 450M tokens per day or more.
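To put that volume in perspective, here is a quick calculation of what 450M tokens per day would cost at the pay-as-you-go gpt-3.5-turbo price quoted earlier. The helper name is hypothetical. Dedicated-instance pricing is not given in this article, so no break-even point is computed.

```python
PRICE_PER_1K_TOKENS_USD = 0.002  # gpt-3.5-turbo price quoted in this article


def pay_as_you_go_cost(tokens):
    """Return the cost in USD of a token count at the per-1k price."""
    return tokens / 1000 * PRICE_PER_1K_TOKENS_USD


daily_cost = pay_as_you_go_cost(450_000_000)
print(f"${daily_cost:,.2f} per day")
print(f"${daily_cost * 30:,.2f} per 30 days")
```

At that scale the shared API would cost about $900 per day, which is the kind of spend where reserving capacity by time period starts to be worth comparing.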

They will have full control over the load on their instances, the option to enable features, and the ability to pin the model snapshot. This can not only reduce developers’ costs at scale but also make their process more effective.

Wrapping it up

The launch of the ChatGPT and Whisper APIs is expected to have a profound impact on the developer community. It gives developers new state-of-the-art tools and capabilities, allowing them to build better, more advanced language-based applications.

Nisha Arya is a Data Scientist, Freelance Technical Writer and Community Manager at KDnuggets. She is particularly interested in providing Data Science career advice or tutorials and theory based knowledge around Data Science. She also wishes to explore the different ways Artificial Intelligence is/can benefit the longevity of human life. A keen learner, seeking to broaden her tech knowledge and writing skills, whilst helping guide others.