Expert Insights on Developing Safe, Secure, and Trustworthy AI Frameworks

In alignment with President Biden's recent Executive Order emphasizing safe, secure, and trustworthy AI, we share the Trusted AI (TAI) lessons we have learned two years into our US federally funded TAI research projects.

By: Dr. Charles Vardeman, Dr. Christopher Sweet, and Dr. Paul Brenner

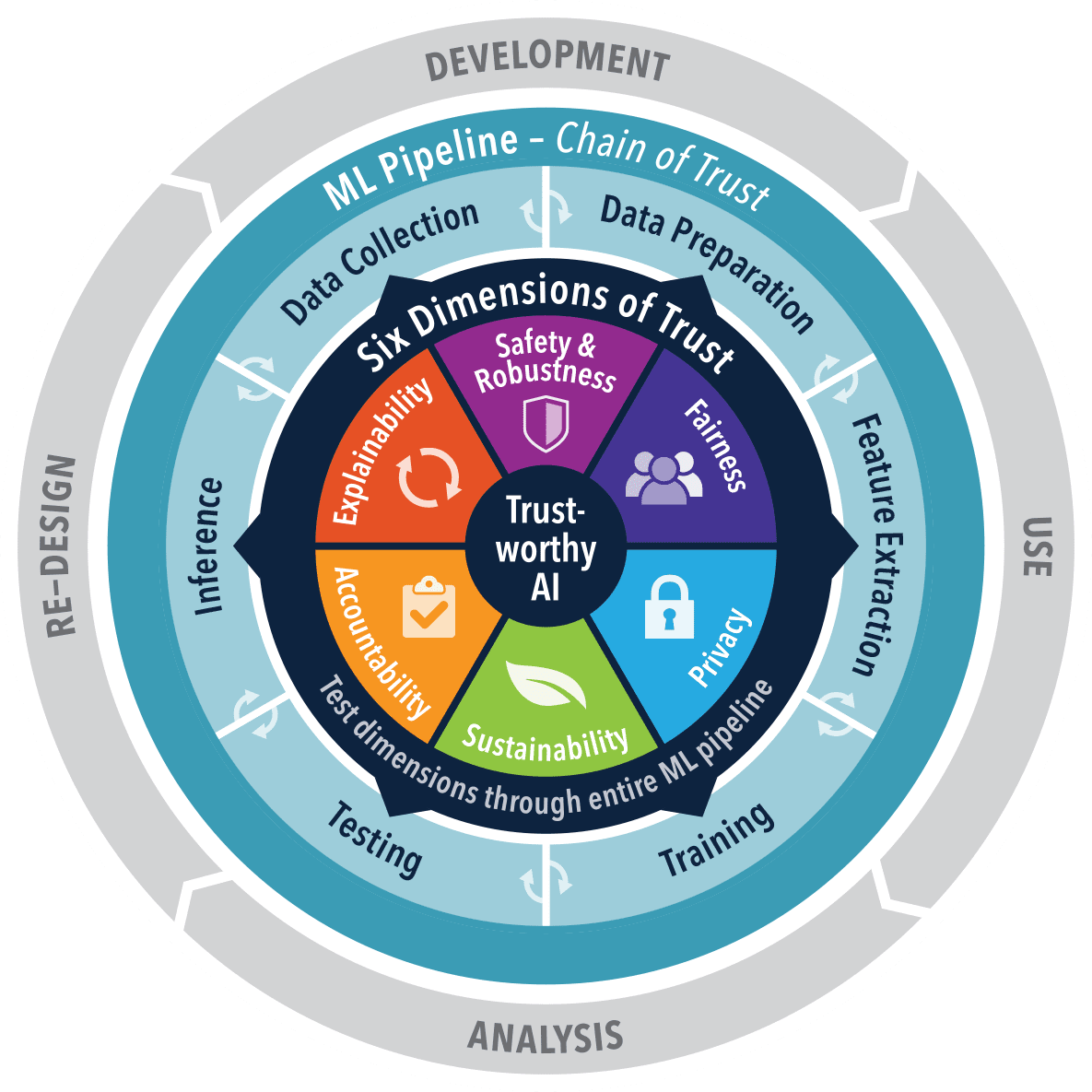

Our TAI research initiative, visualized in the figure below, focuses on operationalizing AI that meets rigorous ethical and performance standards. It aligns with a growing industry trend towards transparency and accountability in AI systems, particularly in sensitive areas like national security. This article reflects on the shift from traditional software engineering to AI approaches where trust is paramount.

TAI Dimensions Applied to the ML Pipeline and AI Dev Cycle

Transitioning from Software Engineering to AI Engineering

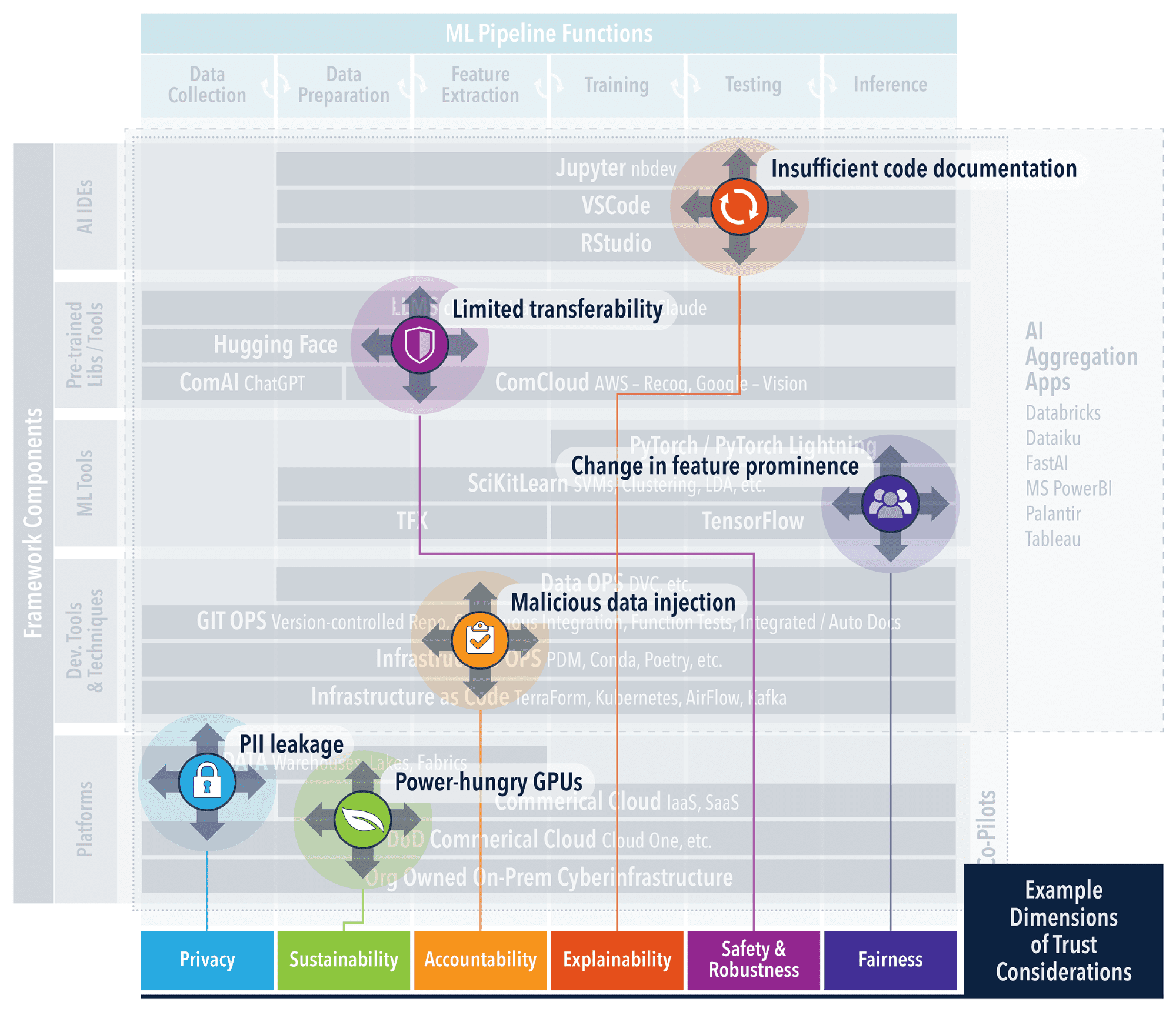

Transitioning from "Software 1.0 to 2.0 and notions of 3.0" necessitates a reliable infrastructure that not only conceptualizes but also practically enforces trust in AI. Even a simple set of example ML components, such as that shown in the figure below, demonstrates the significant complexity that must be understood to address trust concerns at each level. Our TAI Frameworks sub-project addresses this need by offering an integration point for software and best practices from TAI research products. Frameworks like these lower the barriers to TAI implementation. By automating the setup, they let developers and decision-makers channel their efforts toward innovation and strategy rather than grappling with initial complexities. This makes trust a prerequisite rather than an afterthought, with each phase from data management to model deployment aligned with ethical and operational standards. The result is a streamlined path to deploying AI systems that are not only technologically advanced but also ethically sound and strategically reliable for high-stakes environments. The TAI Frameworks project surveys existing software tools and best practices that have their own sustainable open source communities and can be used directly within existing operational environments.

Example AI Framework Components and Exploits

GitOps and CI/CD

GitOps has become integral to AI engineering, especially within the framework of TAI. It represents an evolution in how software development and operational workflows are managed, offering a declarative approach to infrastructure and application lifecycle management. This methodology is pivotal in ensuring continuous quality and incorporating ethical responsibility in AI systems. The TAI Frameworks Project uses GitOps as a foundational component to automate and streamline the development pipeline, from code to deployment. Because every change flows through version control, software engineering best practices are enforced automatically, yielding an immutable audit trail, a version-controlled environment, and seamless rollback capabilities, while simplifying complex deployment processes. GitOps also facilitates the integration of ethical considerations by providing a structure in which ethical checks can be automated as part of the CI/CD pipeline.

The adoption of CI/CD in AI development is not just about maintaining code quality; it is about ensuring that AI systems are reliable, safe, and perform as expected. TAI promotes automated testing protocols that address the unique challenges of AI, particularly as we enter the era of generative AI and prompt-based systems. Testing is no longer confined to static code analysis and unit tests. It extends to dynamic validation of AI behaviors, encompassing the outputs of generative models and the efficacy of prompts. Automated test suites must now be capable of assessing not just the accuracy of responses, but also their relevance and safety.
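As a rough illustration of what such a behavioral check could look like inside a CI job, the sketch below screens a model response for relevance and safety using simple keyword rules. Everything here is an assumption made for illustration: `check_response`, `CheckResult`, and `fake_model` are hypothetical names, and a real pipeline would call an actual model and use far richer evaluators than keyword matching.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    relevant: bool
    safe: bool

    @property
    def passed(self) -> bool:
        # A response passes only if it is both on-topic and free of blocked content.
        return self.relevant and self.safe


def check_response(
    response: str, required_terms: list[str], blocked_terms: list[str]
) -> CheckResult:
    """Toy behavioral check: keyword-based relevance and safety screening."""
    text = response.lower()
    relevant = all(term.lower() in text for term in required_terms)
    safe = not any(term.lower() in text for term in blocked_terms)
    return CheckResult(relevant=relevant, safe=safe)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a generative model call."""
    return "DVC tracks dataset versions alongside Git commits."


def test_prompt_behavior() -> None:
    # In CI, a failing assertion here would block the change from being deployed.
    response = fake_model("How do I version datasets?")
    result = check_response(
        response,
        required_terms=["dataset", "version"],
        blocked_terms=["password"],
    )
    assert result.passed, f"behavioral check failed: {result}"


if __name__ == "__main__":
    test_prompt_behavior()
    print("behavioral checks passed")
```

Written as an ordinary test function, a check like this can run next to conventional unit tests, so a prompt or model change that degrades relevance or safety fails the pipeline in the same way a code regression would.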

Data-Centric and Documented

In the pursuit of TAI, a data-centric approach is foundational, as it prioritizes the quality and clarity of the data over the intricacies of algorithms, thereby establishing trust and interpretability from the ground up. Within this framework, a range of tools is available to uphold data integrity and traceability. dvc (data version control) is particularly favored for its congruence with the GitOps framework, extending Git to encompass data and experiment management (see alternatives here). It provides precise version control for datasets and models, just as Git does for code, which is essential for effective CI/CD practices. This ensures that the data engines powering AI systems are consistently fed with accurate and auditable data, a prerequisite for trustworthy AI.

We also use nbdev, which complements dvc by turning Jupyter Notebooks into a medium for literate and exploratory programming, streamlining the transition from exploratory analysis to well-documented code. Software development is evolving toward this style of “programming”, a shift that is only accelerated by AI “Co-Pilots” that aid in the documentation and construction of AI applications.

Software Bills of Materials (SBoMs) and AI BoMs, advocated by open standards like SPDX, are integral to this ecosystem. They serve as detailed records that complement dvc and nbdev, encapsulating the provenance, composition, and compliance of AI models. SBoMs provide a comprehensive list of components, ensuring that each element of the AI system is accounted for and verified. AI BoMs extend this concept to include data sources and transformation processes, bringing transparency to the models and data in an AI application. Together, they form a complete picture of an AI system's lineage, promoting trust and facilitating understanding among stakeholders.
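To make the idea of a lineage record concrete, here is a minimal sketch of an AI BoM-like structure that pins a model and its data sources by content hash and lists the transformations applied. The schema, the function names (`fingerprint`, `build_ai_bom`, `verify`), and the sample data are all hypothetical; this is not the SPDX format and not how dvc stores its metadata, only an illustration of the kind of information such records carry.

```python
import hashlib
import json


def fingerprint(data: bytes) -> str:
    """Return a SHA-256 content hash that pins an exact artifact version."""
    return hashlib.sha256(data).hexdigest()


def build_ai_bom(
    model_name: str,
    model_bytes: bytes,
    datasets: dict[str, bytes],
    transforms: list[str],
) -> dict:
    """Assemble a toy AI BoM record (hypothetical schema, not SPDX)."""
    return {
        "model": {"name": model_name, "sha256": fingerprint(model_bytes)},
        "data_sources": [
            {"name": name, "sha256": fingerprint(blob)}
            for name, blob in sorted(datasets.items())
        ],
        "transformations": list(transforms),
    }


def verify(bom: dict, datasets: dict[str, bytes]) -> bool:
    """Check that every recorded data source is present and matches its hash."""
    return all(
        entry["name"] in datasets
        and fingerprint(datasets[entry["name"]]) == entry["sha256"]
        for entry in bom["data_sources"]
    )


if __name__ == "__main__":
    # Illustrative in-memory data; real inputs would be files tracked in the repo.
    data = {"train.csv": b"id,label\n1,0\n2,1\n"}
    bom = build_ai_bom(
        "demo-classifier", b"model-weights", data, ["drop_nulls", "normalize"]
    )
    print(json.dumps(bom, indent=2))
    print("data unchanged:", verify(bom, data))
```

A record like this could be committed alongside the code so that a CI step can call `verify` and fail the build if the data no longer matches what the model was documented as being built from.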

The TAI Imperative

Ethical and data-centric approaches are fundamental to TAI, ensuring AI systems are both effective and reliable. Our TAI Frameworks project uses tools like dvc for data versioning and nbdev for literate programming, reflecting a shift in software engineering that accommodates the nuances of AI. These tools are emblematic of a broader trend towards integrating data quality, transparency, and ethical considerations from the start of the AI development process. In the civilian and defense sectors alike, the principles of TAI remain constant: a system is only as reliable as the data it is built on and the ethical framework it adheres to. As the complexity of AI increases, so does the need for robust frameworks that can handle this complexity transparently and ethically. The future of AI, particularly in mission-critical applications, will hinge on the adoption of these data-centric and ethical approaches, solidifying trust in AI systems across all domains.

About Authors

Charles Vardeman, Christopher Sweet, and Paul Brenner are research scientists at the University of Notre Dame Center for Research Computing. They have decades of experience in scientific software and algorithm development with a focus on applied research for technology transfer into product operations. They have numerous technical papers, patents and funded research activities in the realms of data science and cyberinfrastructure. Weekly TAI nuggets aligned with student research projects can be found here.

Dr. Charles Vardeman is a research scientist at the University of Notre Dame Center for Research Computing.