Announcing a Blog Writing Contest, Winner Gets an NVIDIA GPU!

KDnuggets and NVIDIA are announcing a blog-writing contest with a GPU focus, with the winner receiving an RTX 3080 Ti GPU!

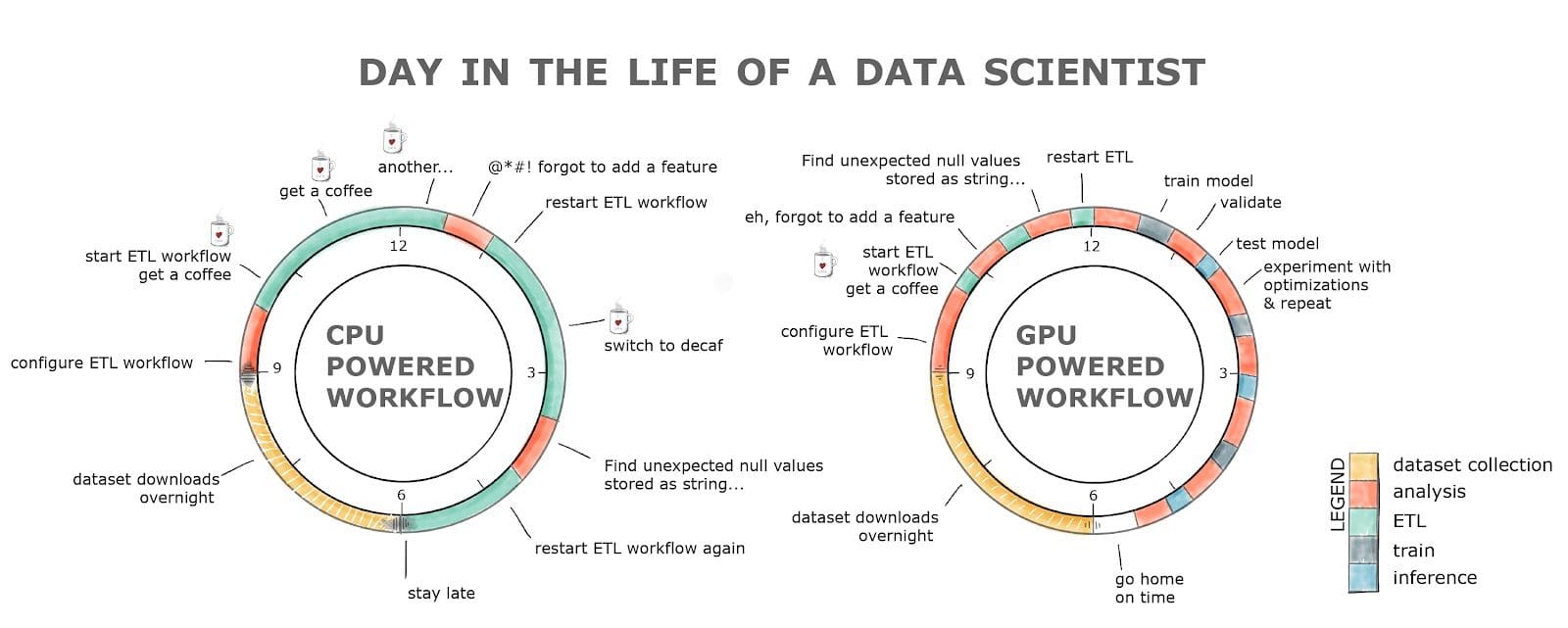

KDnuggets, in partnership with NVIDIA, is proud to announce an innovative contest for our readers and community contributors.

We want you to submit a top-quality original article, on a topic or as a tutorial, that centers on the use of GPUs in your work, learning, or research. Do you have some tips to share, an overview of a project you undertook, or maybe a “how to” tutorial that uses NVIDIA GPU technology? We want to hear from you.

If you would like to participate, write your original article in Google Docs and send the sharing link to submissions@kdnuggets.com. The subject line must read “KDnuggets NVIDIA Contest Submission” or your email may be ignored. Also confirm in the body of the email that you reside in North America; for logistical reasons, this contest is open only to residents of North America.

The contest will be open for submissions until 11:59 PM ET on November 30, 2022, so don’t delay. The editorial team at KDnuggets will select the top 10 submissions by consensus. We will then consult with members of NVIDIA to choose the top 3 submissions for publication on KDnuggets. Whichever of these 3 published posts has the highest number of views, according to Google Analytics, after its first 2 weeks of publication will be the winner.

The author of the winning publication for this contest will receive their very own RTX 3080 Ti GPU!

To help get you started, NVIDIA has shared this collection of cheat sheets for our readers to consult: https://nvda.ws/3ST9E5O

Here you will find resources including:

- GPU Accelerated DataFrames in Python

- GPU DataFrames for Pandas Users

- Distributed Computing with GPUs in Python

- GPU Accelerated Machine Learning in Python

- GPU Accelerated Graph Processing in Python

- GPU Accelerated Data Streaming in Python

- Processing Cyber Security Logs with GPUs in Python

- GPU Accelerated Signal Processing in Python

NVIDIA has also agreed to consider any quality submission that uses RAPIDS libraries for publication on the NVIDIA Tech Blog. NVIDIA GPUs, together with NVIDIA's data science SDKs, optimize GPU acceleration for data science workloads, and pairing them for your projects just makes sense.

So what are you waiting for? Get writing now, get those submissions in by November 30, and let’s strengthen everyone’s GPU expertise together.