5 Useful Python Scripts for Synthetic Data Generation

Before you trust a library to generate your data, learn how to do it yourself and see where bias and errors actually begin.


# Introduction

Synthetic data, as the name suggests, is created artificially rather than collected from real-world sources. It looks like real data while avoiding many privacy issues and the high cost of data collection. This lets you test software and models, and run experiments that simulate how they will perform after release.

Libraries such as Faker, SDV, and SynthCity exist, and large language models (LLMs) are also widely used to generate synthetic data. In this article, however, I avoid relying on external libraries or AI tools. Instead, you will learn how to achieve similar results by writing your own Python scripts. This gives you a better understanding of how to shape a dataset and where biases or errors are introduced. We will start with simple toy scripts to understand the available options. Once you grasp these basics, you can comfortably move on to specialized libraries.

# 1. Generating Simple Random Data

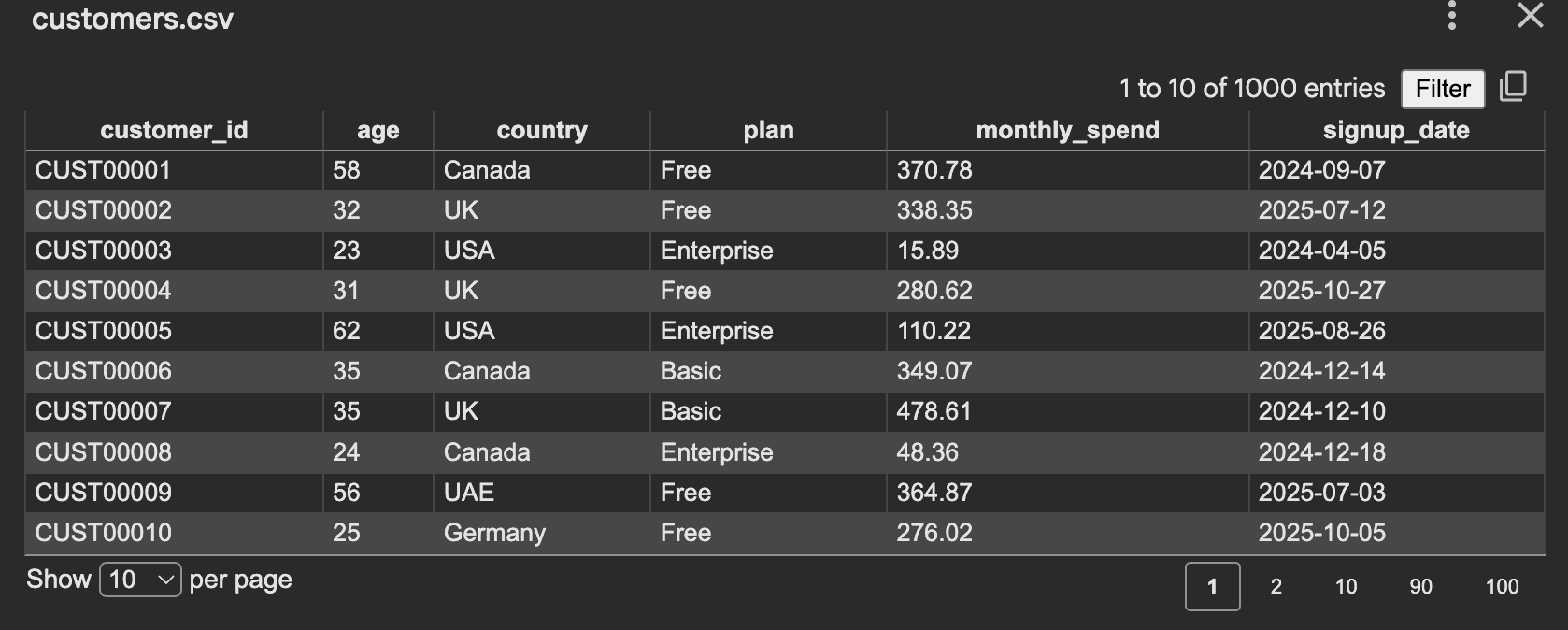

The simplest place to start is with a table. For example, if you need a fake customer dataset for an internal demo, you can run a script to generate comma-separated values (CSV) data:

```python
import csv
import random
from datetime import datetime, timedelta

random.seed(42)  # fixed seed so every run produces the same dataset

countries = ["Canada", "UK", "UAE", "Germany", "USA"]
plans = ["Free", "Basic", "Pro", "Enterprise"]


def random_signup_date():
    # Pick a uniformly random day between 2024-01-01 and 2026-01-01 (inclusive)
    start = datetime(2024, 1, 1)
    end = datetime(2026, 1, 1)
    delta_days = (end - start).days
    return (start + timedelta(days=random.randint(0, delta_days))).date().isoformat()


rows = []
for i in range(1, 1001):
    # Every column is drawn independently of the others
    age = random.randint(18, 70)
    country = random.choice(countries)
    plan = random.choice(plans)
    monthly_spend = round(random.uniform(0, 500), 2)
    rows.append({
        "customer_id": f"CUST{i:05d}",
        "age": age,
        "country": country,
        "plan": plan,
        "monthly_spend": monthly_spend,
        "signup_date": random_signup_date(),
    })

with open("customers.csv", "w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=rows[0].keys())
    writer.writeheader()
    writer.writerows(rows)

print("Saved customers.csv")
```

Running the script prints `Saved customers.csv` and writes 1,000 customer rows to `customers.csv`.

This script is straightforward: you define fields, choose ranges, and write rows. The `random` module provides random integers (`randint`), floating-point values (`uniform`), random picks from a list (`choice`), and sampling without replacement (`sample`). The `csv` module reads and writes row-based tabular data. This kind of dataset is suitable for:

- Frontend demos

- Dashboard testing

- API development

- Learning Structured Query Language (SQL)

- Unit testing input pipelines

However, this approach has one major weakness: every column is generated independently, so the data looks flat and unnatural. An Enterprise customer might spend only 2 dollars while a Free user spends 400, and older users behave exactly like younger ones because there is no underlying structure.
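To see this flatness directly, you can group rows by plan and compare average spend. The sketch below is a hypothetical check rather than part of the original script. It regenerates `plan` and `monthly_spend` in memory in the same independent way, so it does not need `customers.csv`. Because spend ignores the plan, every plan averages roughly the midpoint of `uniform(0, 500)`, about 250.

```python
import random
from collections import defaultdict

random.seed(42)

plans = ["Free", "Basic", "Pro", "Enterprise"]

# Regenerate plan and spend independently, exactly as the first script does
spend_by_plan = defaultdict(list)
for _ in range(1000):
    plan = random.choice(plans)
    spend = round(random.uniform(0, 500), 2)
    spend_by_plan[plan].append(spend)

# With no dependency between the columns, all plans end up with similar averages
avg_spend = {plan: sum(values) / len(values) for plan, values in spend_by_plan.items()}
for plan in plans:
    print(f"{plan:<10} n={len(spend_by_plan[plan]):4d} avg=${avg_spend[plan]:.2f}")
```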

In real-world scenarios, data rarely behaves this way. Instead of generating values independently, we can introduce relationships and rules. This makes the dataset feel more realistic while remaining fully synthetic. For instance:

- Enterprise customers should almost never have zero spend

- Spending ranges should depend on the selected plan

- Older users might spend slightly more on average

- Certain plans should be more common than others

Let’s add these controls to the script:

```python
import csv
import random

random.seed(42)

plans = ["Free", "Basic", "Pro", "Enterprise"]  # the categories choose_plan() returns


def choose_plan():
    # Weighted selection: Free 45%, Basic 30%, Pro 18%, Enterprise 7%
    roll = random.random()
    if roll < 0.45:
        return "Free"
    if roll < 0.75:
        return "Basic"
    if roll < 0.93:
        return "Pro"
    return "Enterprise"


def generate_spend(age, plan):
    # Conditional logic: the spend range depends on the plan
    if plan == "Free":
        base = random.uniform(0, 10)
    elif plan == "Basic":
        base = random.uniform(10, 60)
    elif plan == "Pro":
        base = random.uniform(50, 180)
    else:
        base = random.uniform(150, 500)
    # Customers aged 40 and over spend 15% more
    if age >= 40:
        base *= 1.15
    return round(base, 2)


rows = []
for i in range(1, 1001):
    age = random.randint(18, 70)
    plan = choose_plan()
    spend = generate_spend(age, plan)
    rows.append({
        "customer_id": f"CUST{i:05d}",
        "age": age,
        "plan": plan,
        "monthly_spend": spend,
    })

with open("controlled_customers.csv", "w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=rows[0].keys())
    writer.writeheader()
    writer.writerows(rows)

print("Saved controlled_customers.csv")
```

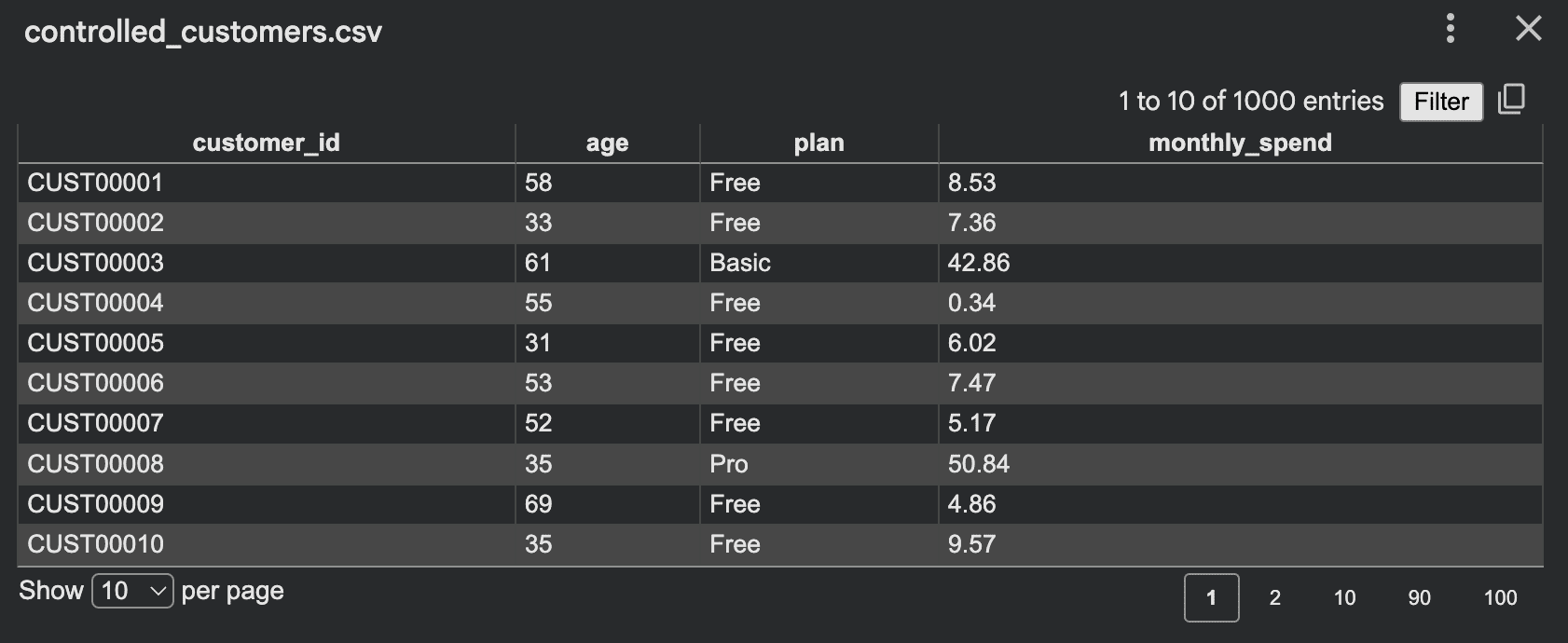

Running the script prints `Saved controlled_customers.csv` and writes 1,000 rows to that file.

Now the dataset contains meaningful patterns: spend depends on the plan and on age, and some plans are more common than others. Rather than generating random noise, you are simulating behavior. Useful controls include:

- Weighted category selection

- Realistic minimum and maximum ranges

- Conditional logic between columns

- Intentionally added rare edge cases

- Missing values inserted at low rates

- Correlated features instead of independent ones
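The sketch below combines several of these controls in a single row generator. It illustrates the ideas under stated assumptions and is not the article's script. `random.choices` replaces the hand-written thresholds of `choose_plan()` with the same 45/30/18/7 weights. The per-year age effect, the 20% headroom above each plan's range, the 2% missing-value rate, and the 0.5% edge-case rate are all hypothetical values chosen for the example.

```python
import random

random.seed(7)

PLANS = ["Free", "Basic", "Pro", "Enterprise"]
PLAN_WEIGHTS = [0.45, 0.30, 0.18, 0.07]  # same shares as choose_plan()
SPEND_RANGE = {"Free": (0, 10), "Basic": (10, 60), "Pro": (50, 180), "Enterprise": (150, 500)}
COUNTRIES = ["Canada", "UK", "UAE", "Germany", "USA"]
MISSING_RATE = 0.02      # hypothetical: about 2% of rows lose their country
EDGE_CASE_RATE = 0.005   # hypothetical: about 0.5% of rows sit at the spend ceiling


def make_row(i):
    # Weighted category selection in a single call
    plan = random.choices(PLANS, weights=PLAN_WEIGHTS, k=1)[0]
    age = random.randint(18, 70)

    # Conditional logic between columns: the spend range depends on the plan
    low, high = SPEND_RANGE[plan]
    ceiling = high * 1.2

    # Correlated features: spend grows about 0.5% per year of age over 18, plus noise
    spend = random.uniform(low, high) * (1 + 0.005 * (age - 18)) + random.gauss(0, 2)

    # Realistic minimum and maximum: clamp to [low, 1.2 * high]
    spend = min(max(spend, low), ceiling)

    # Intentionally added rare edge case: spend exactly at the ceiling
    if random.random() < EDGE_CASE_RATE:
        spend = ceiling

    # Missing values inserted at a low rate
    country = None if random.random() < MISSING_RATE else random.choice(COUNTRIES)

    return {
        "customer_id": f"CUST{i:05d}",
        "age": age,
        "plan": plan,
        "country": country,
        "monthly_spend": round(spend, 2),
    }


rows = [make_row(i) for i in range(1, 5001)]
```

Keeping each control as a named constant makes it easy to dial realism up or down, for example raising `MISSING_RATE` to stress-test how a pipeline handles gaps.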

# 2. Simulating Processes for Synthetic Data

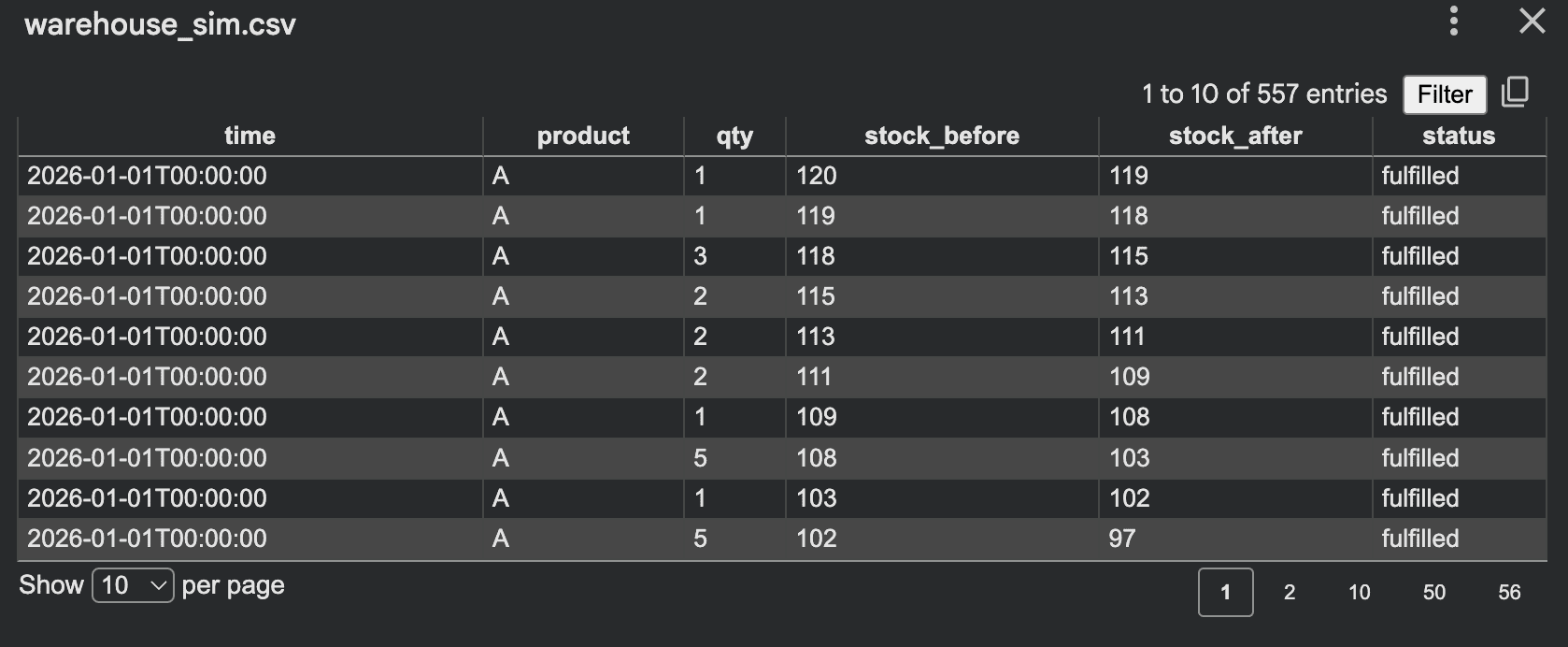

Simulation-based generation is one of the best ways to create realistic synthetic datasets. Instead of filling columns directly, you simulate a process. For example, consider a small warehouse where orders arrive, stock decreases, low stock triggers a restock, and orders larger than the remaining stock become backorders.

```python
import csv
import random
from datetime import datetime, timedelta

random.seed(42)

# Starting stock level for each product
inventory = {
    "A": 120,
    "B": 80,
    "C": 50,
}

rows = []
current_time = datetime(2026, 1, 1)

for day in range(30):
    for product in inventory:
        daily_orders = random.randint(0, 12)
        for _ in range(daily_orders):
            qty = random.randint(1, 5)
            before = inventory[product]
            # Fulfill the order only if enough stock is on hand
            if inventory[product] >= qty:
                inventory[product] -= qty
                status = "fulfilled"
            else:
                status = "backorder"
            rows.append({
                "time": current_time.isoformat(),
                "product": product,
                "qty": qty,
                "stock_before": before,
                "stock_after": inventory[product],
                "status": status,
            })

        # Restock at the end of the day rather than after each order,
        # so stock can run short during a busy day and backorders can occur
        if inventory[product] < 20:
            restock = random.randint(30, 80)
            inventory[product] += restock
            rows.append({
                "time": current_time.isoformat(),
                "product": product,
                "qty": restock,
                "stock_before": inventory[product] - restock,
                "stock_after": inventory[product],
                "status": "restock",
            })

    current_time += timedelta(days=1)

with open("warehouse_sim.csv", "w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=rows[0].keys())
    writer.writeheader()
    writer.writerows(rows)

print("Saved warehouse_sim.csv")
```

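Running the script prints `Saved warehouse_sim.csv`. Each row records one order or restock, with the stock level before and after it.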

This method works well because the data is a byproduct of system behavior. Stock levels, restocks, and backorders stay consistent with one another, which typically yields more realistic relationships than generating rows directly at random. Other simulation ideas include:

- Call center queues

- Ride requests and driver matching

- Loan applications and approvals

- Subscriptions and churn

- Patient appointment flows

- Website traffic and conversion
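As one example of the "subscriptions and churn" idea, here is a minimal monthly simulation. The plan mix, signup rate, and churn probabilities are hypothetical numbers chosen for illustration. Each month, existing customers churn with a plan-dependent probability and then a fixed number of new customers sign up. The event log and the monthly active counts both fall out of that process.

```python
import random

random.seed(42)

MONTHS = 12
SIGNUPS_PER_MONTH = 50                        # hypothetical acquisition rate
PLAN_WEIGHTS = {"Basic": 0.7, "Pro": 0.3}     # hypothetical plan mix
MONTHLY_CHURN = {"Basic": 0.08, "Pro": 0.04}  # hypothetical churn probability per month

active = {}          # customer_id -> plan, for customers who have not churned
events = []          # one row per signup or churn
active_counts = []   # number of active customers at the end of each month
next_id = 1

for month in range(1, MONTHS + 1):
    # Existing customers may churn, with a probability that depends on their plan
    for cid, plan in list(active.items()):
        if random.random() < MONTHLY_CHURN[plan]:
            del active[cid]
            events.append({"month": month, "customer_id": cid, "plan": plan, "event": "churn"})

    # New customers arrive after churn is applied, so nobody churns in their signup month
    for _ in range(SIGNUPS_PER_MONTH):
        cid = f"CUST{next_id:05d}"
        next_id += 1
        plan = random.choices(list(PLAN_WEIGHTS), weights=list(PLAN_WEIGHTS.values()), k=1)[0]
        active[cid] = plan
        events.append({"month": month, "customer_id": cid, "plan": plan, "event": "signup"})

    active_counts.append(len(active))

print(active_counts)
```

Because churn is driven by plan, a cohort or retention analysis on this log will show Pro customers staying longer, a relationship you never had to write into a column directly.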

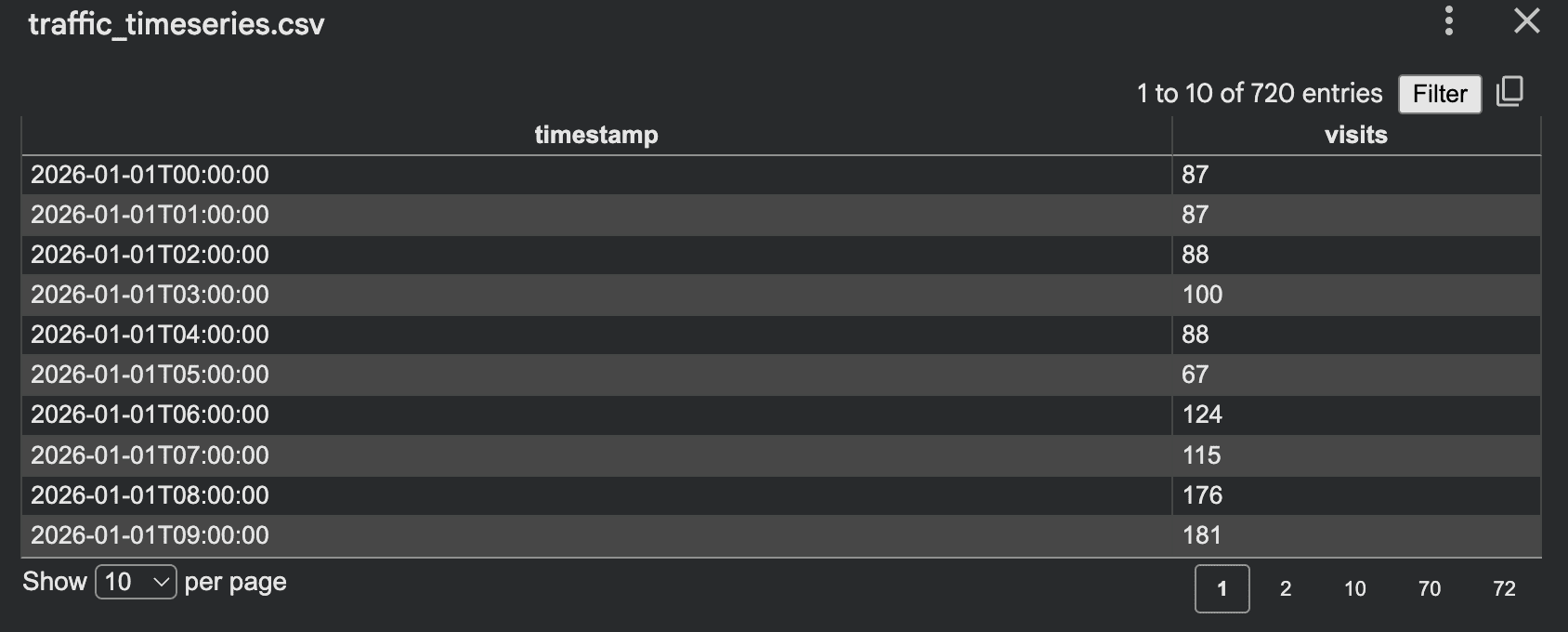

# 3. Generating Time Series Synthetic Data

Synthetic data is not limited to static tables. Many systems produce sequences over time, such as app traffic, sensor readings, orders per hour, or server response times. Here is a simple time series generator for hourly website visits with hour-of-day and weekend patterns.

```python
import csv
import random
from datetime import datetime, timedelta

random.seed(42)

start = datetime(2026, 1, 1, 0, 0, 0)
hours = 24 * 30  # 30 days of hourly data

rows = []
for i in range(hours):
    ts = start + timedelta(hours=i)

    # Weekly pattern: weekends (Saturday=5, Sunday=6) get less traffic
    weekday = ts.weekday()
    base = 120
    if weekday >= 5:
        base = 80

    # Daily pattern: busy mornings and evenings, quiet nights
    hour = ts.hour
    if 8 <= hour <= 11:
        base += 60
    elif 18 <= hour <= 21:
        base += 40
    elif 0 <= hour <= 5:
        base -= 30

    # Gaussian noise around the expected level, floored at zero
    visits = max(0, int(random.gauss(base, 15)))
    rows.append({
        "timestamp": ts.isoformat(),
        "visits": visits,
    })

with open("traffic_timeseries.csv", "w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=["timestamp", "visits"])
    writer.writeheader()
    writer.writerows(rows)

print("Saved traffic_timeseries.csv")
```

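Running the script prints `Saved traffic_timeseries.csv`, which holds 720 hourly rows (30 days of 24 hours).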

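This approach works well because it combines cyclic behavior (busier mornings and evenings, quieter nights and weekends) with random noise, while remaining easy to explain and debug. It does not include a long-term trend, however; growth or decline would need to be added as a separate term.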
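A common way to structure such a series is as a sum of components: trend, seasonality, and noise. The sketch below is a variant under assumptions, not the original script. It adds a slow linear trend (`TREND_PER_DAY`, a hypothetical value) and replaces the step-shaped hour bumps with a smooth sine wave (`DAILY_AMPLITUDE`, also hypothetical). It keeps the same 40-visit weekend dip and 15-visit Gaussian noise.

```python
import math
import random
from datetime import datetime, timedelta

random.seed(42)

start = datetime(2026, 1, 1)
hours = 24 * 30
TREND_PER_DAY = 1.5   # hypothetical: baseline grows by 1.5 visits/hour each day
DAILY_AMPLITUDE = 40  # hypothetical: size of the day/night swing

rows = []
for i in range(hours):
    ts = start + timedelta(hours=i)
    trend = TREND_PER_DAY * (i / 24)  # slow linear growth over the month
    # Smooth daily cycle: peaks at 12:00, lowest at 00:00
    daily = DAILY_AMPLITUDE * math.sin(2 * math.pi * (ts.hour - 6) / 24)
    weekly = -40 if ts.weekday() >= 5 else 0  # weekend dip (120 -> 80 in the original)
    noise = random.gauss(0, 15)
    visits = max(0, round(120 + trend + daily + weekly + noise))
    rows.append({"timestamp": ts.isoformat(), "visits": visits})
```

Keeping the components separate means you can test a forecasting or anomaly model against each one in isolation, for example by setting `TREND_PER_DAY = 0` to check whether the model invents a trend that is not there.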

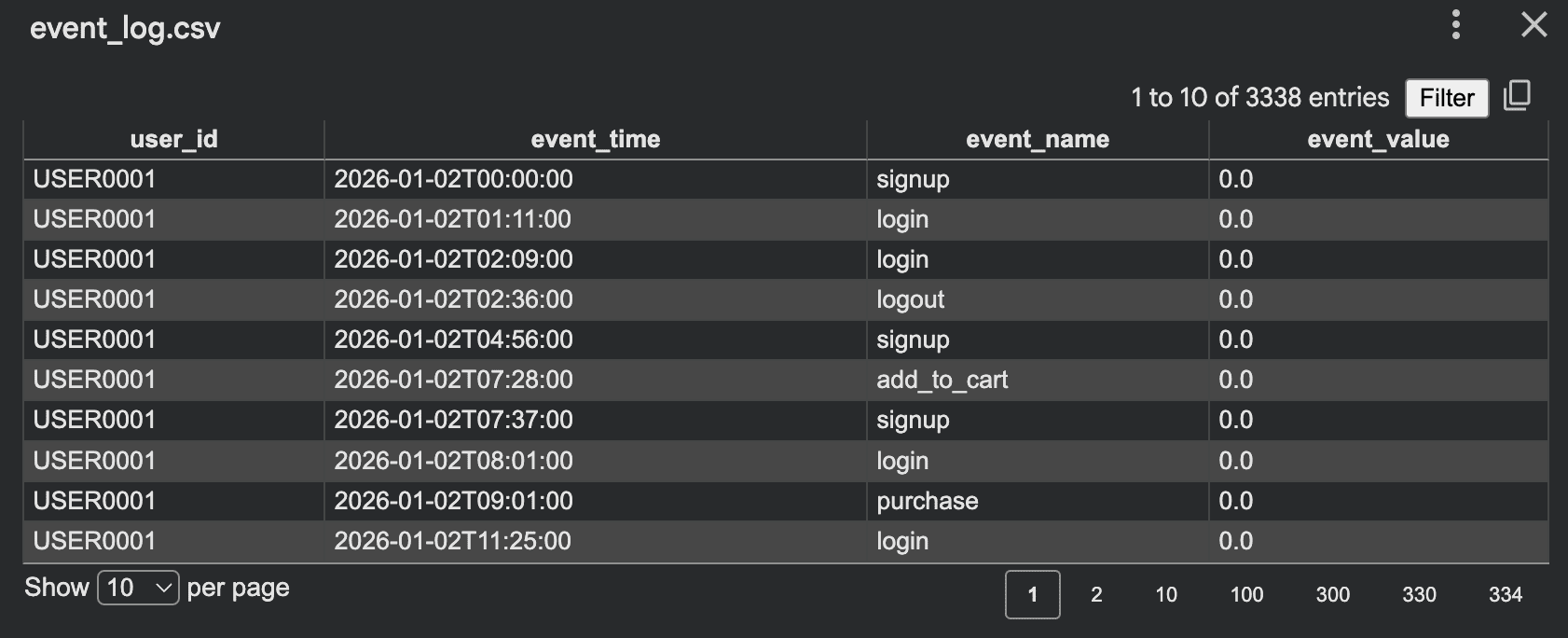

# 4. Creating Event Logs

Event logs are another useful format, ideal for product analytics and workflow testing. Instead of one row per customer, you create one row per action.

```python
import csv
import random
from datetime import datetime, timedelta

random.seed(42)

events = ["signup", "login", "view_page", "add_to_cart", "purchase", "logout"]

rows = []
start = datetime(2026, 1, 1)

for user_id in range(1, 201):
    event_count = random.randint(5, 30)
    # Each user starts on a random day within the first 10 days
    current_time = start + timedelta(days=random.randint(0, 10))
    for _ in range(event_count):
        event = random.choice(events)  # each event is drawn independently
        # Only 60% of purchase events receive a nonzero value
        if event == "purchase" and random.random() < 0.6:
            value = round(random.uniform(10, 300), 2)
        else:
            value = 0.0
        rows.append({
            "user_id": f"USER{user_id:04d}",
            "event_time": current_time.isoformat(),
            "event_name": event,
            "event_value": value,
        })
        # Advance the clock by 1 to 180 minutes between events
        current_time += timedelta(minutes=random.randint(1, 180))

with open("event_log.csv", "w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=rows[0].keys())
    writer.writeheader()
    writer.writerows(rows)

print("Saved event_log.csv")
```

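Running the script prints `Saved event_log.csv`; each of the 200 users contributes between 5 and 30 events.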

This format is useful for:

- Funnel analysis

- Analytics pipeline testing

- Business intelligence (BI) dashboards

- Session reconstruction

- Anomaly detection experiments

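A useful refinement is to make events depend on earlier actions. The script above draws each event independently, so a user can purchase before logging in or sign up more than once. In a believable log, a purchase should typically follow a login, a page view, or an add-to-cart action.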
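One simple way to enforce that ordering is a transition table, essentially a first-order Markov chain where the next event depends only on the current one. The probabilities in `TRANSITIONS` are hypothetical, and unlike the original script every purchase here carries a value. Each user signs up once, then has one to three sessions that start at `login` and end at `logout`. A purchase can only be reached from `add_to_cart`.

```python
import random
from datetime import datetime, timedelta

random.seed(42)

# Hypothetical transition probabilities: which event can follow the current one
TRANSITIONS = {
    "login":       {"view_page": 0.8, "logout": 0.2},
    "view_page":   {"view_page": 0.5, "add_to_cart": 0.3, "logout": 0.2},
    "add_to_cart": {"purchase": 0.4, "view_page": 0.4, "logout": 0.2},
    "purchase":    {"view_page": 0.3, "logout": 0.7},
}


def simulate_session(user_id, t):
    """Walk the transition table from login until logout; return rows and end time."""
    rows, event = [], "login"
    while True:
        # Only purchases carry a value, and every purchase has one
        value = round(random.uniform(10, 300), 2) if event == "purchase" else 0.0
        rows.append({"user_id": user_id, "event_time": t.isoformat(),
                     "event_name": event, "event_value": value})
        if event == "logout":
            return rows, t
        options = TRANSITIONS[event]
        event = random.choices(list(options), weights=list(options.values()), k=1)[0]
        t += timedelta(minutes=random.randint(1, 15))


rows = []
for user_id in range(1, 201):
    uid = f"USER{user_id:04d}"
    t = datetime(2026, 1, 1) + timedelta(days=random.randint(0, 10))
    rows.append({"user_id": uid, "event_time": t.isoformat(),
                 "event_name": "signup", "event_value": 0.0})
    # Each user has one to three sessions, separated by a gap of 1 to 48 hours
    for _ in range(random.randint(1, 3)):
        t += timedelta(hours=random.randint(1, 48))
        session, t = simulate_session(uid, t)
        rows.extend(session)
```

Funnel metrics computed on this log (view to cart to purchase) now reflect the transition probabilities, which makes it a useful fixture for checking that a funnel query returns the rates you expect.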

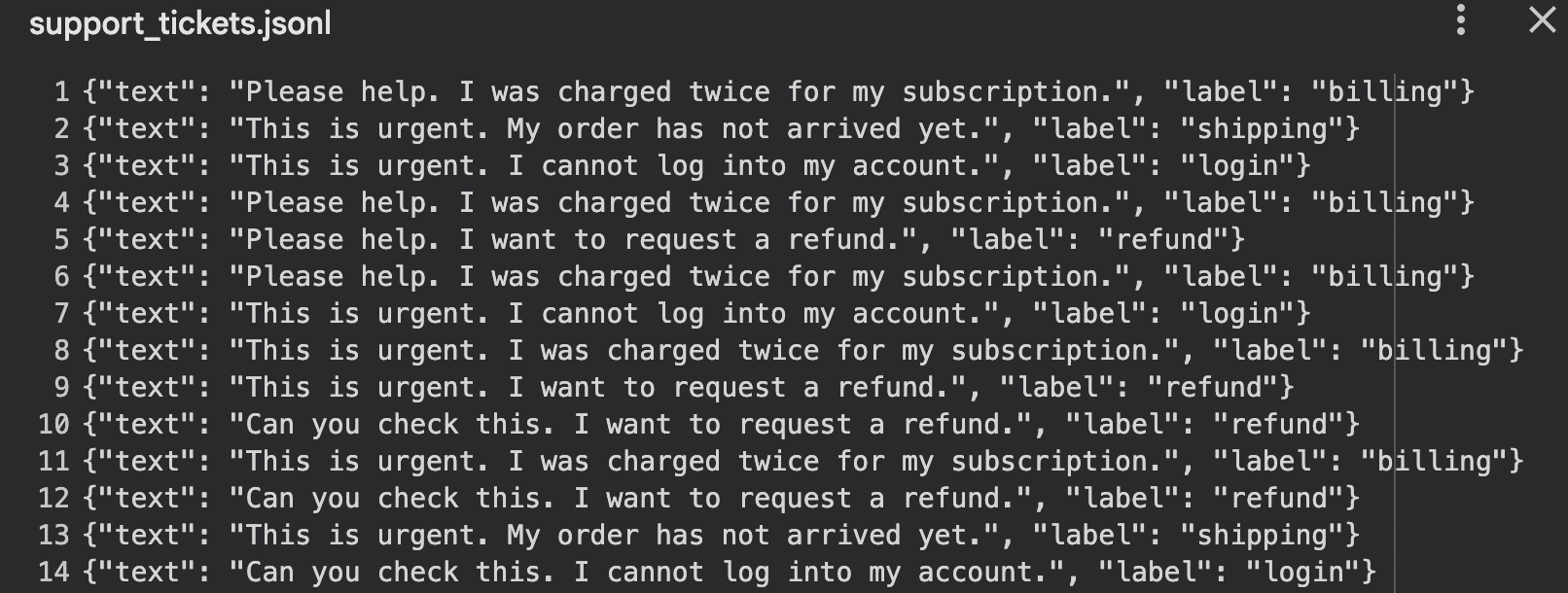

# 5. Generating Synthetic Text Data with Templates

Synthetic data is also valuable for natural language processing (NLP). You do not always need an LLM to start; you can build effective text datasets using templates and controlled variation. For example, you can create support ticket training data:

```python
import json
import random

random.seed(42)

# (label, message) pairs: each label has one fixed message
issues = [
    ("billing", "I was charged twice for my subscription"),
    ("login", "I cannot log into my account"),
    ("shipping", "My order has not arrived yet"),
    ("refund", "I want to request a refund"),
]

# Opening phrases that vary the tone of each ticket
tones = ["Please help", "This is urgent", "Can you check this", "I need support"]

records = []
for _ in range(100):
    label, message = random.choice(issues)
    tone = random.choice(tones)
    text = f"{tone}. {message}."
    records.append({
        "text": text,
        "label": label,
    })

# JSON Lines: one JSON object per line
with open("support_tickets.jsonl", "w", encoding="utf-8") as f:
    for item in records:
        f.write(json.dumps(item) + "\n")

print("Saved support_tickets.jsonl")
```

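Running the script prints `Saved support_tickets.jsonl`, which contains 100 labeled tickets, one JSON object per line.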

This approach works well for:

- Text classification demos

- Intent detection

- Chatbot testing

- Prompt evaluation
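With four messages and four tones, the script above can produce at most 16 distinct texts, so a classifier trained on it may simply memorize them. The sketch below adds more controlled variation while keeping the same JSON Lines output. It uses several phrasings per label, slots filled with random values, an optional opener, occasional lowercasing, and a simple adjacent-character swap as a typo. The templates, slot values, file name, and rates are all hypothetical.

```python
import json
import random

random.seed(42)

# Several hypothetical phrasings per label, with {slots} filled at random
TEMPLATES = {
    "billing": ["I was charged twice for my {plan} subscription",
                "My card was billed two times this month"],
    "login": ["I cannot log into my account",
              "The password reset email never reaches {email}"],
    "shipping": ["My order {order_id} has not arrived yet",
                 "It has been {days} days and my package is still missing"],
    "refund": ["I want to request a refund for order {order_id}",
               "Please refund my {plan} plan because I no longer need it"],
}
OPENERS = ["Please help.", "This is urgent.", "Can you check this?", "I need support.", ""]


def add_typo(text, rate=0.1):
    # With probability `rate`, swap two adjacent characters to mimic a typing slip
    if len(text) > 3 and random.random() < rate:
        i = random.randrange(len(text) - 1)
        text = text[:i] + text[i + 1] + text[i] + text[i + 2:]
    return text


def make_ticket():
    label = random.choice(list(TEMPLATES))
    # str.format ignores keyword arguments that a template does not use
    body = random.choice(TEMPLATES[label]).format(
        plan=random.choice(["Basic", "Pro"]),
        email=f"user{random.randint(1, 999)}@example.com",
        order_id=f"#{random.randint(10000, 99999)}",
        days=random.randint(3, 21),
    )
    text = f"{random.choice(OPENERS)} {body}.".strip()
    if random.random() < 0.2:
        text = text.lower()  # some customers skip capitalization
    return {"text": add_typo(text), "label": label}


records = [make_ticket() for _ in range(500)]

with open("support_tickets_varied.jsonl", "w", encoding="utf-8") as f:
    for item in records:
        f.write(json.dumps(item) + "\n")
```

Even with this variation, template text remains easier than real tickets, so treat it as a starting point for demos and pipeline tests rather than a substitute for real evaluation data.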

# Final Thoughts

Synthetic data scripts are powerful tools, but they are easy to get wrong. Watch out for these common mistakes:

- Making all values uniformly random

- Forgetting dependencies between fields

- Generating values that violate business logic

- Assuming synthetic data is private by default

- Creating data that is too "clean" to be useful for testing real-world edge cases

- Using the same pattern so frequently that the dataset becomes predictable and unrealistic
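Several of these mistakes can be caught automatically with a validation pass run after generation. The sketch below is a hypothetical example for the customer schema used earlier. The minimum-spend rules mirror the plan ranges in `generate_spend()`, and the age bounds match `randint(18, 70)`. It flags duplicate IDs, out-of-range ages, spend below a plan's minimum, and a dataset with no missing values at all.

```python
from collections import Counter

# Hypothetical rules based on the plan ranges in generate_spend(); adapt to your schema
PLAN_MIN_SPEND = {"Free": 0, "Basic": 10, "Pro": 50, "Enterprise": 150}


def validate(rows):
    """Return a list of human-readable problems found in generated customer rows."""
    problems = []
    for cid, n in Counter(r["customer_id"] for r in rows).items():
        if n > 1:
            problems.append(f"{cid}: duplicate id ({n} rows)")
    for r in rows:
        if not 18 <= r["age"] <= 70:
            problems.append(f"{r['customer_id']}: age {r['age']} out of range")
        if r["monthly_spend"] < PLAN_MIN_SPEND[r["plan"]]:
            problems.append(f"{r['customer_id']}: {r['plan']} spend {r['monthly_spend']} below minimum")
    # A dataset with no gaps at all is probably too clean to exercise real-world edge cases
    if all(value is not None for r in rows for value in r.values()):
        problems.append("no missing values anywhere: dataset may be too clean")
    return problems


sample = [
    {"customer_id": "CUST00001", "age": 34, "plan": "Pro", "monthly_spend": 120.5, "country": "UK"},
    {"customer_id": "CUST00002", "age": 81, "plan": "Enterprise", "monthly_spend": 2.0, "country": "USA"},
    {"customer_id": "CUST00002", "age": 45, "plan": "Free", "monthly_spend": 0.0, "country": None},
]
for problem in validate(sample):
    print(problem)
```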

Privacy remains the most critical consideration. Synthetic data reduces exposure to real records, but it is not risk-free: if a generator is fit too closely to sensitive source data, it can reproduce real records or reveal details about them. When you build generators from real data, privacy-preserving methods such as differentially private synthetic data are essential.
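One of the simplest differentially private techniques is the Laplace mechanism applied to a histogram, followed by sampling from the noisy histogram. The sketch below is a teaching illustration, not a production implementation. It assumes a single categorical column (the hypothetical `real_plans` list stands in for sensitive data). It also assumes a category list fixed in advance rather than read from the data, and neighboring datasets that differ by adding or removing one record. Under that definition each count has sensitivity 1, so Laplace noise with scale `1/epsilon` gives epsilon-differential privacy. Clipping, normalizing, and sampling are post-processing and do not weaken the guarantee. Python's `random` module is not a secure randomness source, so real deployments should use a vetted DP library.

```python
import random
from collections import Counter


def laplace(scale):
    # The difference of two i.i.d. exponential draws with mean `scale` is Laplace(0, scale)
    return random.expovariate(1 / scale) - random.expovariate(1 / scale)


def dp_synthetic_categories(values, categories, epsilon, n_out):
    """Sample n_out synthetic values from a Laplace-noised histogram of `values`."""
    counts = Counter(values)
    # Adding or removing one record changes one count by 1, so the sensitivity is 1
    noisy = {c: max(0.0, counts[c] + laplace(1 / epsilon)) for c in categories}
    total = sum(noisy.values())
    if total == 0:
        weights = [1.0] * len(categories)  # fall back to uniform if all counts clip to zero
    else:
        weights = [noisy[c] / total for c in categories]
    return random.choices(categories, weights=weights, k=n_out)


random.seed(0)
# Hypothetical stand-in for a sensitive column
real_plans = ["Free"] * 450 + ["Basic"] * 300 + ["Pro"] * 180 + ["Enterprise"] * 70
synthetic = dp_synthetic_categories(
    real_plans, ["Free", "Basic", "Pro", "Enterprise"], epsilon=1.0, n_out=1000
)
print(Counter(synthetic))
```

This protects only a single column's distribution. Preserving relationships between columns under differential privacy requires more careful mechanisms and privacy-budget accounting.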

Kanwal Mehreen is a machine learning engineer and a technical writer with a profound passion for data science and the intersection of AI with medicine. She co-authored the ebook "Maximizing Productivity with ChatGPT". As a Google Generation Scholar 2022 for APAC, she champions diversity and academic excellence. She's also recognized as a Teradata Diversity in Tech Scholar, Mitacs Globalink Research Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having founded FEMCodes to empower women in STEM fields.