Deep Learning and the Triumph of Empiricism

Theoretical guarantees are clearly desirable. And yet many of today's best-performing supervised learning algorithms offer none. What explains the gap between theoretical soundness and empirical success?

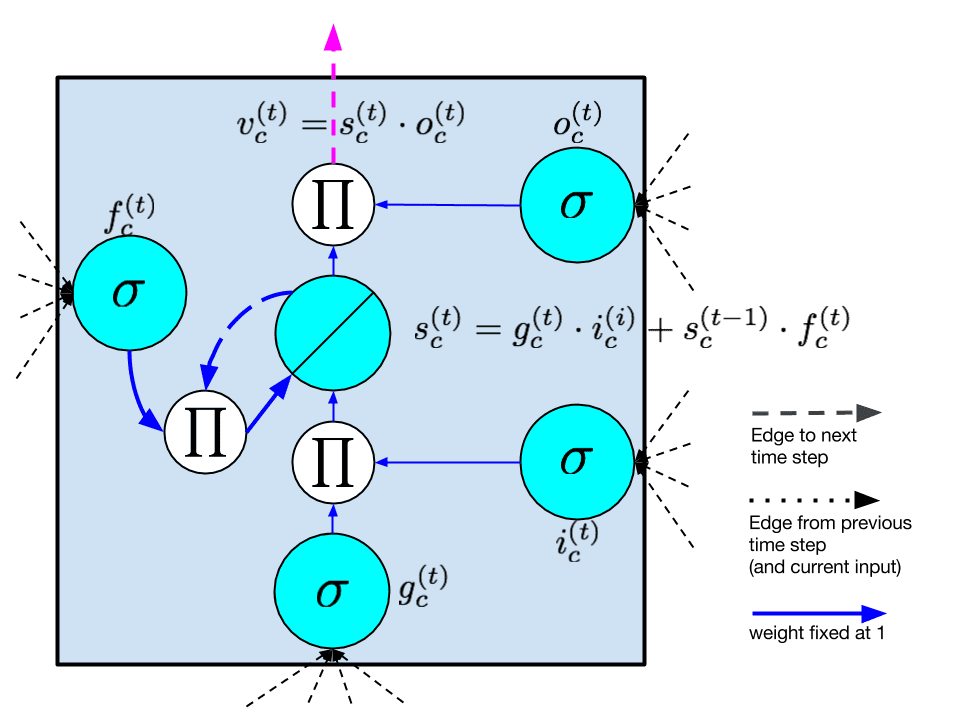

Deep learning is now the standard-bearer for many tasks in supervised machine learning. It could also be argued that deep learning has yielded the most practically useful algorithms in unsupervised machine learning in a few decades. The excitement stemming from these advances has provoked a flurry of research and sensational headlines from journalists. While I am wary of the hype, I too find the technology exciting, and recently joined the party, issuing a 30-page critical review on Recurrent Neural Networks (RNNs) for sequence learning.

But many in the machine learning research community are not fawning over the deepness. In fact, for many who fought to resuscitate artificial intelligence research by grounding it in the language of mathematics and protecting it with theoretical guarantees, deep learning represents a fad. Worse, to some it might seem to be a regression.[1]

In this article, I'll try to offer a high-level and even-handed analysis of the usefulness of theoretical guarantees and why they might not always be as practically useful as they are intellectually rewarding. More to the point, I'll offer arguments to explain why, after so many years of increasingly statistically sound machine learning, many of today's best-performing algorithms offer no theoretical guarantees.

Guarantee What?

A guarantee is a statement that can be made with mathematical certainty about the behavior, performance or complexity of an algorithm. All else being equal, we would love to say that given sufficient time our algorithm A can find a classifier H from some class of models {H1, H2, ...} that performs no worse than H*, where H* is the best classifier in the class. This is, of course, with respect to some fixed loss function L. Short of that, we'd love to bound the difference or ratio between the performance of H and H* by some constant. Short of such an absolute bound, we'd love to be able to prove that, with high probability, H and H* give similar values after running our algorithm for some fixed period of time.
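In symbols, the three kinds of guarantee described above might be written as follows. This is only a sketch: the tolerance ε, the ratio constant c, and the failure probability δ are notation introduced here for illustration and do not appear in the text above.

```latex
\begin{align*}
  % Best classifier in the class, with respect to the fixed loss L
  H^* &= \arg\min_{H_i \in \{H_1, H_2, \ldots\}} L(H_i) \\
  % (1) Exact: the learned H performs no worse than H^*
  L(H) &\le L(H^*) \\
  % (2) Bounded difference or ratio
  L(H) - L(H^*) &\le \epsilon
    \quad \text{or} \quad
    \frac{L(H)}{L(H^*)} \le c \\
  % (3) Probabilistic: the gap is small with high probability
  \Pr\bigl[\, L(H) - L(H^*) \le \epsilon \,\bigr] &\ge 1 - \delta
\end{align*}
```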

Many existing algorithms offer strong statistical guarantees. Linear regression admits an exact solution. Logistic regression is guaranteed to converge over time. Deep learning algorithms, generally, offer nothing in the way of guarantees. Given an arbitrarily bad starting point, I know of no theoretical proof that a neural network trained by some variant of SGD will necessarily improve over time and not be trapped in a local minimum. There is a flurry of recent work which suggests, reasonably, that saddle points outnumber local minima on the error surfaces of neural networks (an m-dimensional surface where m is the number of learned parameters, typically the weights on edges between nodes). However, this is not the same as proving that local minima do not exist or that they cannot be arbitrarily bad.
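To make the concern about starting points concrete, here is a minimal sketch of plain gradient descent on a one-dimensional non-convex function. The function `f`, the learning rate, and the two starting points are all invented for illustration; this is not a neural network, only a toy surface with one global and one merely local minimum.

```python
def f(x):
    # Toy non-convex "error surface" (an assumption for illustration):
    # global minimum near x = -1.30, local minimum near x = 1.13.
    return x**4 - 3 * x**2 + x

def grad_f(x):
    # Analytic derivative of f.
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x0, lr=0.01, steps=1000):
    # Plain (non-stochastic) gradient descent from starting point x0.
    x = x0
    for _ in range(steps):
        x -= lr * grad_f(x)
    return x

x_left = gradient_descent(-2.0)   # settles in the global minimum
x_right = gradient_descent(2.0)   # settles in the worse, local minimum

print(f"start -2.0 -> x = {x_left:.4f}, f(x) = {f(x_left):.4f}")
print(f"start  2.0 -> x = {x_right:.4f}, f(x) = {f(x_right):.4f}")
```

The same procedure, run with the same settings, ends at a noticeably worse value of `f` when started on the right-hand side. Nothing in the update rule can detect that a better minimum exists elsewhere.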

Problems with Guarantees

Provable mathematical properties are obviously desirable. They may have even saved machine learning, giving succor at a time when the field of AI was ill-defined, over-promising, and under-delivering. And yet many of today's best algorithms offer nothing in the way of guarantees. How is this possible?

I'll explain several reasons in the following paragraphs. They include:

- Guarantees are typically relative to a small class of hypotheses.

- Guarantees are often restricted to worst-case analysis, but the real world seldom presents the worst case.

- Guarantees are often predicated on incorrect assumptions about data.

Selecting a Winner from a Weak Pool

To begin, theoretical guarantees usually assure that a hypothesis is close to the best hypothesis in some given class. This in no way guarantees that there exists a hypothesis in the given class capable of performing satisfactorily. Here's a heavy-handed example: I desire a human editor to assist me in composing a document. Spell-check may come with guarantees about how it will behave. It will identify certain misspellings with 100% accuracy. But existing automated proofreading tools cannot provide the insight offered by an intelligent human. Of course, a human offers nothing in the way of mathematical guarantees. The human may fall asleep, ignore my emails, or respond nonsensically. Nevertheless, he or she is capable of expressing a far greater range of useful ideas than Clippy.

A cynical take might be that there are two ways to improve a theoretical guarantee. One is to improve the algorithm. Another is to weaken the hypothesis class of which it is a member. While neural networks offer little in the way of guarantees, they offer a far richer set of potential hypotheses than most better-understood machine learning models. As heuristic learning techniques and more powerful computers have eroded the obstacles to effective learning, it seems clear that for many models, this increased expressiveness is essential for making predictions of practical utility.

The Worst Case May Not Matter

Guarantees are most often given in the worst case. By guaranteeing a result that is within a factor epsilon of optimal, we say that the worst case will be no worse than a factor epsilon. But in practice, the worst-case scenario may never occur. Real-world data is typically highly structured, and worst-case scenarios may have a structure such that there is no overlap between a typical dataset and a pathological one. In these settings, the worst-case bound still holds, but it may be the case that all algorithms perform much better. There may be no reason to believe that the algorithm with the better worst-case guarantee will have better typical-case performance.

Predicated on Provably Incorrect Assumptions

Another reason why models with theoretical soundness may not translate into real-world performance is that the assumptions about data necessary to produce theoretical results are often known to be false. Consider Latent Dirichlet Allocation (LDA) for example, a well understood and remarkably useful algorithm for topic modeling. Many theoretical proofs about LDA are predicated upon the assumption that a document is associated with a distribution over topics. Each topic is in turn associated with a distribution over all words in the vocabulary. The generative process then proceeds as follows. For each word in a document, first a topic is chosen stochastically according to the relative probabilities of each topic. Then, conditioned on the chosen topic, a word is chosen according to that topic's word distribution. This process repeats until all words are chosen.
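The generative process just described can be sketched in a few lines. This is only an illustration of the sampling loop: the vocabulary, the topic distributions, and the function name `generate_document` are made up here, and the sketch takes the distributions as given rather than drawing them from Dirichlet priors as full LDA does.

```python
import random

def generate_document(topic_probs, topic_word_probs, vocab, n_words, rng):
    """Sample a bag of words using the per-word topic-then-word process."""
    topics = list(range(len(topic_probs)))
    words = []
    for _ in range(n_words):
        # Step 1: choose a topic according to the document's topic distribution.
        topic = rng.choices(topics, weights=topic_probs, k=1)[0]
        # Step 2: choose a word according to that topic's word distribution.
        word = rng.choices(vocab, weights=topic_word_probs[topic], k=1)[0]
        words.append(word)
    return words

# Hypothetical toy setup: two topics over a four-word vocabulary.
vocab = ["gene", "dna", "ball", "game"]
topic_word_probs = [
    [0.5, 0.5, 0.0, 0.0],  # topic 0: "biology"
    [0.0, 0.0, 0.5, 0.5],  # topic 1: "sports"
]
rng = random.Random(0)  # fixed seed so the run is repeatable

mixed_doc = generate_document([0.9, 0.1], topic_word_probs, vocab, 20, rng)
pure_doc = generate_document([1.0, 0.0], topic_word_probs, vocab, 20, rng)
print(" ".join(mixed_doc))
```

Note that each word is drawn independently of its neighbors and the document length `n_words` is fixed in advance. Those are exactly the properties that real text lacks.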

Clearly, this assumption does not hold on any real-world natural language dataset. In real documents, words are chosen contextually and depend highly on the sentences they are placed in. Additionally, document lengths aren't arbitrarily predetermined, although this may be the case in undergraduate coursework. However, given the assumption of such a generative process, many elegant proofs about theoretical properties of LDA hold.

To be clear, LDA is indeed a broadly useful, state-of-the-art algorithm. Further, I am convinced that theoretical investigations of the properties of algorithms, even under unrealistic assumptions, are a worthwhile and necessary step to improve our understanding and lay the groundwork for more general and powerful theorems later. In this article, I seek only to contextualize the nature of much known theory and to give intuition to data science practitioners about why the algorithms with the most favorable theoretical properties are not always the best performing empirically.

The Triumph of Empiricism

One might ask: if not guided entirely by theory, what allows methods like deep learning to prevail? Further, why are empirical methods backed by intuition so broadly successful now, even though they fell out of favor decades ago?

In answer to these questions, I believe that the existence of comparatively humongous, well-labeled datasets like ImageNet is responsible for the resurgence of heuristic methods. Given sufficiently large datasets, the risk of overfitting is low. Further, validating against test data offers a means to address the typical case, instead of focusing on the worst case. Additionally, advances in parallel computing and memory size have made it possible to follow up on many hypotheses simultaneously with empirical experiments. Empirical studies backed by strong intuition offer a path forward when we reach the limits of our formal understanding.

Caveats

For all the success of deep learning in machine perception and natural language, one could reasonably argue that, by far, the three most valuable machine learning algorithms are linear regression, logistic regression, and k-means clustering, all of which are well understood theoretically. A reasonable counter-argument to the idea of a triumph of empiricism might be that by far the best algorithms are theoretically motivated and grounded, and that empiricism is responsible only for the newest breakthroughs, not the most significant.

Few Things Are Guaranteed

When attainable, theoretical guarantees are beautiful. They reflect clear thinking and provide deep insight into the structure of a problem. Given a working algorithm, a theory which explains its performance deepens understanding and provides a basis for further intuition. Given the absence of a working algorithm, theory offers a path of attack.

However, there is also beauty in the idea that well-founded intuitions paired with rigorous empirical study can yield consistently functioning systems that outperform better-understood models, and sometimes even humans, at many important tasks. Empiricism offers a path forward for applications where formal analysis is stifled, and potentially opens new directions that might eventually admit deeper theoretical understanding.

[1] Yes, corny pun.

Zachary Chase Lipton is a PhD student in the Computer Science Engineering department at the University of California, San Diego. Funded by the Division of Biomedical Informatics, he is interested in both theoretical foundations and applications of machine learning. In addition to his work at UCSD, he has interned at Microsoft Research Labs.
