Overview of MLOps

Building a machine learning model is great, but to provide real business value, the model must be put to use and then maintained so that it remains useful over time. Machine Learning Operations (MLOps), surveyed here, is a rapidly growing space that encompasses everything required to deploy a machine learning model into production, and it is crucial to delivering this sought-after value.

By Steve Shwartz, AI Author, Investor, and Serial Entrepreneur.

Photo: iStockPhoto / NanoStockk

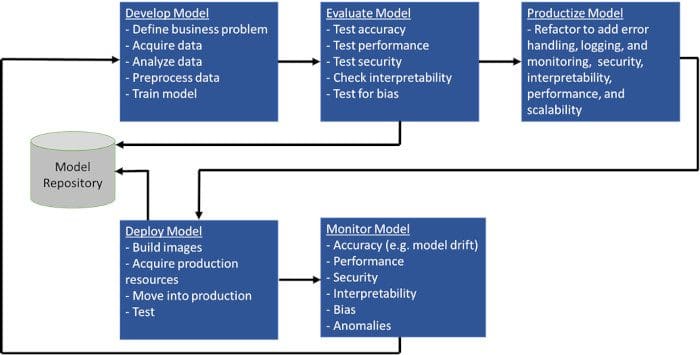

Considerable data science expertise is usually required to create a dataset and build a model for a particular application. But building a good model is usually not enough. In fact, it is not nearly enough. As illustrated below, developing and testing a model is just the first step.

Machine Learning Model Lifecycle.

Machine Learning Operations (MLOps) is everything else required to make that model useful, including capabilities for an automated development and deployment pipeline, monitoring, lifecycle management, and governance, as illustrated above. Let’s look at each of these.

Automation Pipeline

Creating a production ML system requires multiple steps. First, the data must undergo a series of transformations. Then, the model is trained. Usually, this requires experimentation with different network architectures and hyperparameters, and it is often necessary to go back to the data and try different features. Next, the model must be validated with unit tests and integration tests, and it needs to pass tests for bias (in both the data and the model) and for explainability. Finally, it is deployed into a public cloud, an on-premise environment, or a hybrid environment. Additionally, some steps in the process might require an approval workflow.

If each of these steps is performed manually, the development process tends to be slow and brittle. Fortunately, many MLOps tools exist to automate these steps end-to-end, from data transformation to deployment. With such a pipeline in place, retraining becomes an automated, reliable, and reproducible process.
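To make the idea of an automated pipeline concrete, here is a minimal sketch in Python that chains the steps described above (transform, train, validate, approve, deploy) so that one call reruns the whole process. The step functions are hypothetical toys, and the approval workflow is assumed to be an explicit `approved` flag; real MLOps tools add scheduling, artifact storage, and distributed execution on top of this basic pattern.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A step takes the pipeline context (a plain dict) and returns it updated.
Step = Callable[[Dict], Dict]


@dataclass
class Pipeline:
    steps: List[Step]
    completed: List[str] = field(default_factory=list)

    def run(self, context: Dict) -> Dict:
        # Run every step in order; an exception in any step stops the run,
        # so a failed validation or a missing approval never reaches deploy().
        self.completed = []
        for step in self.steps:
            context = step(context)
            self.completed.append(step.__name__)
        return context


def transform_data(ctx: Dict) -> Dict:
    # Toy transformation: min-max scale raw values to [0, 1]
    # (assumes the values are not all identical).
    raw = ctx["raw"]
    lo, hi = min(raw), max(raw)
    ctx["features"] = [(x - lo) / (hi - lo) for x in raw]
    return ctx


def train_model(ctx: Dict) -> Dict:
    # Toy "training": a decision threshold at the mean feature value.
    feats = ctx["features"]
    ctx["model"] = {"threshold": sum(feats) / len(feats)}
    return ctx


def validate_model(ctx: Dict) -> Dict:
    # Stand-in for unit, integration, bias, and explainability tests.
    if not 0.0 <= ctx["model"]["threshold"] <= 1.0:
        raise ValueError("model failed validation")
    return ctx


def approval_gate(ctx: Dict) -> Dict:
    # Stand-in for an approval workflow: require an explicit sign-off.
    if not ctx.get("approved", False):
        raise PermissionError("deployment has not been approved")
    return ctx


def deploy(ctx: Dict) -> Dict:
    ctx["deployed"] = True  # a real step would push to cloud or on-prem targets
    return ctx


pipeline = Pipeline([transform_data, train_model, validate_model, approval_gate, deploy])
result = pipeline.run({"raw": [2.0, 4.0, 6.0, 8.0], "approved": True})
```

Because each step is an ordinary function, retraining on new data is just another call to `pipeline.run(...)`, and a run without sign-off stops at the approval gate before anything is deployed.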

Monitoring

ML models tend to work well when first deployed and then work less well over time. As Forrester analyst Dr. Kjell Carlsson said: “AI models are like six-year-olds during quarantine: They need constant attention . . . otherwise, something will break.”

It is critical for deployments to include various types of monitoring so that ML teams are alerted when this starts to happen. Performance can degrade because of infrastructure issues such as inadequate CPU or memory. It can also degrade when the real-world data fed to the model as its input (independent) variables starts to take on different characteristics from the training data, a phenomenon known as data drift.
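One common way to quantify data drift for a single input feature is the population stability index (PSI), which compares how training and production values fall into the same bins. The article does not prescribe a specific statistic, so treat this as one illustrative choice among several (a Kolmogorov-Smirnov test is another). The bin edges, the toy income values, and the 0.25 alert threshold (a widely quoted rule of thumb) are all assumptions for the sketch.

```python
import bisect
import math
from typing import List, Sequence


def bin_proportions(values: Sequence[float], cuts: List[float]) -> List[float]:
    # Bin i holds values with cuts[i-1] <= v < cuts[i]; the first and last
    # bins are open-ended, so every value lands in exactly one bin.
    counts = [0] * (len(cuts) + 1)
    for v in values:
        counts[bisect.bisect_right(cuts, v)] += 1
    # Floor empty bins at a tiny proportion so the log below stays finite.
    return [max(c / len(values), 1e-6) for c in counts]


def population_stability_index(train: Sequence[float], live: Sequence[float],
                               cuts: List[float]) -> float:
    expected = bin_proportions(train, cuts)
    actual = bin_proportions(live, cuts)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def data_drift_alert(train: Sequence[float], live: Sequence[float],
                     cuts: List[float], threshold: float = 0.25) -> bool:
    # 0.25 is a commonly quoted rule of thumb for a "significant" shift.
    return population_stability_index(train, live, cuts) > threshold


train_income = [float(v) for v in range(10)]     # feature values seen in training
live_income = [float(v + 5) for v in range(10)]  # same feature in production, shifted
cuts = [2.5, 5.0, 7.5]                           # bin edges chosen from the training data
```

Identical distributions give a PSI of exactly zero, while the shifted production data here pushes most values into the top bin and trips the alert. In practice a check like this would run per feature on a schedule, alongside the infrastructure monitoring mentioned above.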

Similarly, the model may become less applicable because real-world conditions change, a phenomenon known as concept drift. For example, many predictive models of customer and supplier behavior were sent into a tailspin by COVID-19.
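Concept drift can occur even when the inputs look unchanged, so it is usually detected by comparing predictions against true outcomes once those become known (for example, whether a customer actually churned). A minimal sketch, assuming labeled outcomes eventually arrive, is a rolling-window accuracy check against the accuracy measured at deployment. The window size and tolerance are arbitrary illustrative defaults.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Flags possible concept drift when recent accuracy falls below a baseline."""

    def __init__(self, baseline_accuracy: float, window: int = 500,
                 tolerance: float = 0.05) -> None:
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, actual) -> None:
        # Called once the true outcome for a past prediction becomes known.
        self.outcomes.append(prediction == actual)

    def rolling_accuracy(self) -> float:
        if not self.outcomes:
            raise ValueError("no labeled outcomes recorded yet")
        return sum(self.outcomes) / len(self.outcomes)

    def drift_suspected(self) -> bool:
        # Wait for a full window so a handful of early errors can't trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance
```

Because outcomes such as loan defaults may only be known weeks or months later, this kind of check tends to lag; teams often pair it with input-side drift checks that can fire sooner.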

Some companies also monitor alternative models (e.g., different network architectures or different hyperparameters) to see if any of these “challenger” models starts performing better than the production model.
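The champion/challenger idea can be sketched by scoring the production model and each challenger on the same recent labeled data and flagging challengers that win by a clear margin. The threshold "models", the toy data, and the 0.02 margin below are hypothetical; accuracy stands in for whatever business metric a team actually tracks.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Model = Callable[[float], int]
Example = Tuple[float, int]


def accuracy(model: Model, data: Sequence[Example]) -> float:
    return sum(model(x) == y for x, y in data) / len(data)


def threshold_model(t: float) -> Model:
    # Toy classifier: predict 1 when the input exceeds threshold t.
    return lambda x: int(x > t)


def outperforming_challengers(champion: Model, challengers: Dict[str, Model],
                              recent: Sequence[Example], margin: float = 0.02) -> List[str]:
    # Score every model on the same recent labeled traffic and keep only
    # challengers that beat the champion by at least `margin`.
    baseline = accuracy(champion, recent)
    return sorted(name for name, model in challengers.items()
                  if accuracy(model, recent) >= baseline + margin)


recent = [(i / 10, int(i / 10 > 0.6)) for i in range(1, 10)]
champion = threshold_model(0.5)
challengers = {"t=0.3": threshold_model(0.3), "t=0.6": threshold_model(0.6)}
```

Requiring a margin, rather than any improvement at all, is a simple guard against swapping models on noise; larger deployments often use statistical tests or shadow traffic for the same purpose.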

Often, it makes sense to put guardrails around decisions made by the model. These guardrails are simple rules that either trigger an alert, prevent the decision, or put the decision into a workflow for human approval.
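As an illustration, guardrails for a hypothetical loan-approval model might look like the sketch below, with one rule for each of the three outcomes described above: block the decision, route it for human approval, or let it through with an alert. The field names and dollar and confidence thresholds are invented for the example; real values would come from the business.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    ALERT = "alert"    # let the decision through but notify the team
    BLOCK = "block"    # prevent the decision
    REVIEW = "review"  # send the decision to a human approval workflow


@dataclass
class Decision:
    approved: bool     # the model's decision
    amount: float      # loan amount in dollars
    confidence: float  # model confidence in [0, 1]


def apply_guardrails(d: Decision) -> Action:
    # Rules are checked from most to least severe; the first match wins.
    if d.approved and d.amount > 1_000_000:
        return Action.BLOCK   # never auto-approve very large loans
    if d.confidence < 0.6:
        return Action.REVIEW  # low-confidence decisions go to a human
    if d.approved and d.amount > 250_000:
        return Action.ALERT
    return Action.ALLOW
```

Keeping the rules as plain code outside the model makes them easy to read, audit, and change without retraining.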

Lifecycle Management

When model performance starts to degrade due to data or concept drift, the model must be retrained and possibly re-architected. However, the data science team shouldn’t have to start from scratch. In developing the original model, and perhaps in prior re-architectures, they probably tested many architectures, hyperparameters, and features. It’s critical that all these prior experiments (and their results) are recorded so that the data science team doesn’t have to go back to square one. Such a record is also critical for communication and collaboration among data science team members.
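The kind of experiment record described above can be sketched as an append-only log of parameters and results that the team can query before launching a new run. The `ExperimentLog` class and its methods are hypothetical illustrations, not the API of any particular tool; the experiment-tracking tools listed later in this article provide richer versions of the same idea.

```python
import json
from typing import Any, Dict, List, Optional


class ExperimentLog:
    """Hypothetical append-only record of experiments and their results."""

    def __init__(self) -> None:
        self.runs: List[Dict[str, Any]] = []

    def record(self, params: Dict[str, Any], metrics: Dict[str, float]) -> None:
        # Store architecture, hyperparameters, features, and results together.
        self.runs.append({"params": dict(params), "metrics": dict(metrics)})

    def best(self, metric: str) -> Optional[Dict[str, Any]]:
        # The highest-scoring run on `metric`, or None if no run reported it.
        candidates = [r for r in self.runs if metric in r["metrics"]]
        return max(candidates, key=lambda r: r["metrics"][metric], default=None)

    def tried(self, **params: Any) -> bool:
        # Has any recorded run already used all of these parameter values?
        return any(all(r["params"].get(k) == v for k, v in params.items())
                   for r in self.runs)

    def to_jsonl(self) -> str:
        # One JSON object per line, so the log can be shared or versioned.
        return "".join(json.dumps(r, sort_keys=True) + "\n" for r in self.runs)

    @classmethod
    def from_jsonl(cls, text: str) -> "ExperimentLog":
        log = cls()
        log.runs = [json.loads(line) for line in text.splitlines() if line.strip()]
        return log
```

Before retraining, a team member can check `tried(...)` to avoid repeating a configuration and start from `best(...)` instead of from square one.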

Governance

Machine learning models are being used for many applications that affect people, like bank loan decisions, medical diagnoses, and hiring/firing decisions. The use of ML models in decision-making has been criticized for two reasons. First, these models are subject to bias, especially if the training data results in models that discriminate based on race, color, ethnicity, national origin, religion, gender, sexual orientation, or other protected classes. Second, these models are often black boxes that don’t explain their decision-making.

As a result, organizations that use ML-based decision-making are under pressure to ensure their models don’t discriminate and are capable of explaining their decisions. Many MLOps vendors are incorporating tools based on academic research (e.g., SHAP and Grad-CAM) that help explain model decisions, and they are using a variety of techniques to ensure that the data and models are not biased. Additionally, they are incorporating bias and explainability tests in their monitoring protocols, because models can become biased or lose explanatory capability over time.
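One simple bias test that can run both before deployment and as part of ongoing monitoring is the disparate impact ratio: the lowest group's selection rate divided by the highest. The sketch below checks it against the "four-fifths rule" used in US employment-selection guidelines. The group labels and decisions are made up, and passing this single check does not by itself show that a model is unbiased; it is one metric among many.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    # decisions: (group label, whether the model selected/approved the person)
    totals: Dict[str, int] = defaultdict(int)
    selected: Dict[str, int] = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions: Iterable[Tuple[str, bool]]) -> float:
    # Lowest group selection rate divided by the highest.
    rates = selection_rates(decisions)
    highest = max(rates.values())
    return 1.0 if highest == 0 else min(rates.values()) / highest


def passes_four_fifths_rule(decisions: Iterable[Tuple[str, bool]],
                            threshold: float = 0.8) -> bool:
    return disparate_impact_ratio(decisions) >= threshold
```

For example, if group A is approved 80% of the time and group B 50% of the time, the ratio is 0.625 and the check fails; running it on each new batch of production decisions turns it into a monitoring test rather than a one-time gate.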

Organizations also need to build trust, and they are starting to ensure that ongoing performance, lack of bias, and explainability are auditable. This requires model catalogs that not only document all the data, parameter, and architecture decisions but also log each decision the model makes and provide traceability, so that it can be determined what data, model, and parameters were used for each decision, when the model was retrained or otherwise modified, and who made each change. It is also important for auditors to be able to replay historical transactions and to test the boundaries of the model’s decision-making with what-if scenarios.
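The traceability requirements above can be sketched as a small catalog that records who registered each model version and logs every decision with the version, inputs, and output, which is enough to replay a historical decision or run a what-if scenario against it. The `AuditCatalog` class is hypothetical, and in-memory dictionaries stand in for the durable storage a real catalog would use.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List

Inputs = Dict[str, Any]
Model = Callable[[Inputs], Any]


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


def _fingerprint(inputs: Inputs) -> str:
    # Stable SHA-256 of the exact inputs, independent of key order.
    return hashlib.sha256(json.dumps(inputs, sort_keys=True).encode("utf-8")).hexdigest()


class AuditCatalog:
    def __init__(self) -> None:
        self.models: Dict[str, Model] = {}
        self.changes: List[Dict[str, Any]] = []    # who changed which model, and when
        self.decisions: List[Dict[str, Any]] = []  # every decision, with its lineage

    def register(self, version: str, model: Model, changed_by: str, note: str) -> None:
        self.models[version] = model
        self.changes.append({"version": version, "changed_by": changed_by,
                             "note": note, "at": _now()})

    def decide(self, version: str, inputs: Inputs) -> Any:
        output = self.models[version](inputs)
        self.decisions.append({"version": version, "inputs": dict(inputs),
                               "input_hash": _fingerprint(inputs),
                               "output": output, "at": _now()})
        return output

    def replay(self, index: int) -> bool:
        # Re-run a historical decision with the exact model version that made it.
        record = self.decisions[index]
        return self.models[record["version"]](record["inputs"]) == record["output"]

    def what_if(self, index: int, **overrides: Any) -> Any:
        # Probe the decision boundary by changing some inputs of a past decision.
        record = self.decisions[index]
        return self.models[record["version"]]({**record["inputs"], **overrides})
```

Keying every decision to a model version, rather than to "the current model", is what lets an auditor reproduce a decision made before a later retraining.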

Security and data privacy are also key concerns for organizations using ML. Care must be taken to ensure that personal information is protected, and role-based data access capabilities are essential, especially in regulated industries.
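Role-based data access can be as simple as mapping each role to the fields it may read and denying everything else by default. The roles, field names, and record below are hypothetical; production systems typically enforce these policies in the database or data platform rather than in application code.

```python
from typing import Any, Dict, Set

# Hypothetical mapping from role to the fields that role may read.
ROLE_FIELDS: Dict[str, Set[str]] = {
    "data_scientist": {"age_band", "income_band", "loan_outcome"},
    "auditor": {"customer_id", "age_band", "income_band", "loan_outcome"},
    "support_agent": {"customer_id", "name"},
}


def view_record(record: Dict[str, Any], role: str) -> Dict[str, Any]:
    # Deny by default: unknown roles get an empty set, and fields not
    # explicitly granted to the role are dropped from the result.
    allowed = ROLE_FIELDS.get(role, set())
    return {k: v for k, v in record.items() if k in allowed}
```

Here a data scientist can train on banded attributes and outcomes without ever seeing a customer's name or ID.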

Governments around the world are also moving quickly to regulate ML-based decision-making that affects people. The European Union has led the way with its GDPR and CRD IV regulations. In the US, several regulatory agencies, including the US Federal Reserve Bank and the FDA, have created regulations around ML-based decision-making for financial and medical decisions. A more comprehensive law, the recently proposed Data Accountability and Transparency Act of 2020, is slated for Congressional consideration in 2021. Regulations will likely evolve to the point where CEOs need to sign off on the explainability of, and the lack of bias in, their ML models.

The MLOps Landscape

As 2021 unfolds, the market for MLOps is exploding. According to analyst firm Cognilytica, it is expected to reach $4 billion by 2025.

There are big players and small players in the MLOps space. Major ML platform vendors like Amazon, Google, Microsoft, IBM, Cloudera, Domino, DataRobot, and H2O are incorporating MLOps capabilities into their platforms. According to Crunchbase, there are 35 private companies in the MLOps space that have raised between $1.8M and $1B in financing and that have between 3 and 2,800 employees on LinkedIn:

| Company | Financing ($ millions) | Number of Employees | Description |
|---|---:|---:|---|
| Cloudera | 1000 | 2803 | Cloudera delivers an Enterprise Data Cloud for any data, anywhere, from the Edge to AI. |
| Databricks | 897 | 1757 | Databricks is a software platform that helps its customers unify their analytics across business, data science, and data engineering. |
| DataRobot | 750 | 1105 | DataRobot brings AI technology and ROI enablement services to global enterprises. |
| Dataiku | 246 | 556 | Dataiku operates as an enterprise artificial intelligence and machine-learning platform. |
| Alteryx | 163 | 1623 | Alteryx accelerates digital transformation by unifying analytics, data science and automated processes. |
| H2O | 151 | 257 | H2O.ai is the open source leader in AI and automatic machine learning with a mission to democratize AI for everyone. |
| Domino | 124 | 232 | Domino is the world's leading Enterprise Data Science Platform, powering data science at over 20% of the Fortune 100. |
| Iguazio | 72 | 83 | The Iguazio Data Science Platform enables you to develop, deploy and manage AI applications at scale and in real-time |
| Explorium.ai | 50 | 96 | Explorium offers a data science platform powered by augmented data discovery and feature engineering |
| Algorithmia | 38 | 63 | Algorithmia is a machine learning model deployment and management solution that automates the MLOps for an organization |
| Paperspace | 23 | 37 | Paperspace powers next-generation applications built on GPUs. |
| Pachyderm | 21 | 32 | Pachyderm is an enterprise-grade data science platform that makes explainable, repeatable, and scalable AI/ML a reality. |
| Weights and Biases | 20 | 58 | Tools for experiment tracking, improved model performance, and results collaboration |
| OctoML | 19 | 37 | OctoML is changing how developers optimize and deploy machine learning models for their AI needs. |
| Arthur AI | 18 | 28 | Arthur AI is a platform that monitors the productivity of machine learning models. |
| Truera | 17 | 26 | Truera provides a Model Intelligence platform for enterprises to analyze machine learning. |
| Snorkel AI | 15 | 39 | Snorkel AI is focused on making AI practical through Snorkel Flow: the data-first platform for enterprise AI |
| Seldon.io | 14 | 48 | Machine Learning Deployment Platform |
| Fiddler Labs | 13 | 46 | Fiddler enables users to create AI solutions that are transparent, explainable, and understandable. |
| run.ai | 13 | 26 | Run:AI develops an automated distributed training technology that virtualizes and accelerates deep learning. |
| ClearML (Allegro) | 11 | 29 | ML / DL Experiment Manager and ML-Ops Open-Source Solution End-to-End Product Life-cycle Management Enterprise Solution |
| Verta | 10 | 15 | Verta builds software infrastructure to help enterprise data science and machine learning (ML) teams develop and deploy ML models. |
| cnvrg.io | 8 | 38 | cnvrg.io is a full stack data science platform that helps teams manage models, and build auto-adaptive machine learning pipelines |
| Datatron | 8 | 19 | Datatron provides a single model governance (management) platform for all of your ML, AI, and Data Science models in production |
| Comet | 7 | 19 | Comet.ml is a machine learning platform designed to help AI practitioners and teams build reliable machine learning models. |
| ModelOp | 6 | 39 | Govern, Monitor and Manage all models across the enterprise |
| WhyLabs | 4 | 15 | WhyLabs is the AI observability and monitoring company. |
| Arize AI | 4 | 14 | Arize AI offers a platform that explains and troubleshoots production AI. |
| DarwinAI | 4 | 31 | DarwinAI’s Generative Synthesis 'AI building AI' technology enables optimized and explainable deep learning. |
| Mona | 4 | 11 | Mona is a SaaS monitoring platform for Data and AI driven systems |
| Valohai | 2 | 13 | Your Managed Machine Learning Platform that lets data scientists build, deploy and track machine learning models. |
| Modzy | 0 | 31 | The secure ModelOps platform to discover, deploy, manage, and govern machine learning at scale—getting to value faster. |
| Algomox | 0 | 17 | Catalyze Your AI Transformation |
| Monitaur | 0 | 8 | Monitaur is a software company that provides auditability, transparency, and governance for companies using machine learning software. |
| Hydrosphere.io | 0 | 3 | Hydrosphere.io is a platform for AI/ML operations automation |

Many of these companies focus on just one segment of MLOps, such as the automation pipeline, monitoring, lifecycle management, or governance. Some argue that using multiple best-of-breed MLOps products is better for data science projects than using a monolithic platform. And some companies are building MLOps products for specific verticals. For example, Monitaur positions itself as a best-of-breed governance solution that can work with any platform, and it is also building industry-specific MLOps governance capabilities for regulated industries, starting with insurance. (Full disclosure: I am an investor in Monitaur.)

There are also a number of open-source MLOps projects, including:

- MLflow manages the ML lifecycle, including experimentation, reproducibility, and deployment, and includes a model registry

- DVC manages version control for ML projects to make them shareable and reproducible

- Polyaxon has capabilities for experimentation, lifecycle automation, collaboration, and deployment, and includes a model registry

- Metaflow is a former Netflix project for managing the automation pipeline and deployment

- Kubeflow has capabilities for workflow automation and deployment in Kubernetes containers

2021 promises to be an interesting year for MLOps. We’ll probably see rapid growth, tremendous competition, and, most likely, some consolidation.

Bio: Steve Shwartz (@sshwartz) started his AI career as a postdoc at Yale University many years ago, is a successful serial entrepreneur and investor, and is the author of “Evil Robots, Killer Computers, and Other Myths: The Truth About AI and the Future of Humanity”.
