OpenAI’s Approach to Solving Math Word Problems

OpenAI's latest research aims to solve math word problems. Let's dive a bit deeper into the ideas behind this new research.

Source: https://www.quantamagazine.org/symbolic-mathematics-finally-yields-to-neural-networks-20200520/

Yesterday’s edition of The Sequence highlighted OpenAI's latest research to solve math word problems. Today, I would like to dive a bit deeper into the ideas behind this new research.

Mathematical reasoning has long been considered one of the cornerstones of human cognition and one of the main yardsticks for measuring the “intelligence” of language models. Take the following problem:

“Anthony had 50 pencils. He gave 1/2 of his pencils to Brandon, and he gave 3/5 of the remaining pencils to Charlie. He kept the remaining pencils. How many pencils did Anthony keep?”

Yes, the solution is 10 pencils, but that’s not the point. Solving this problem entails not only reasoning through the text but also orchestrating a sequence of steps to arrive at the solution. The dependency on language interpretation and the vulnerability to errors at any step in the sequence are the two major challenges when building ML models that can solve math word problems. Recently, OpenAI published new research proposing an interesting method to tackle this type of problem.
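To make the sequence of steps concrete, here is the arithmetic for the pencil problem written out one step at a time. This is only an illustrative sketch: the variable names are mine, and exact fractions are used so that no rounding creeps in.

```python
from fractions import Fraction

# Step-by-step arithmetic for the pencil problem (variable names are illustrative).
total = 50
given_to_brandon = total * Fraction(1, 2)       # half of 50
remaining = total - given_to_brandon            # what is left after Brandon
given_to_charlie = remaining * Fraction(3, 5)   # three fifths of the remainder
kept = remaining - given_to_charlie             # what Anthony keeps

print(given_to_brandon, given_to_charlie, kept)  # 25 15 10
```

An error in any intermediate line (for example, taking 3/5 of the original 50 instead of the remaining 25) carries through to the final answer, which is exactly the fragility described above.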

By now you must be thinking that the OpenAI method relies on GPT-3, given that the pre-trained model has been at the center of most of their progress in different areas of natural language processing. The assumption is directionally correct. OpenAI's models are based on GPT-3 for language understanding and use two fundamental methods to optimize their capabilities for math problems.

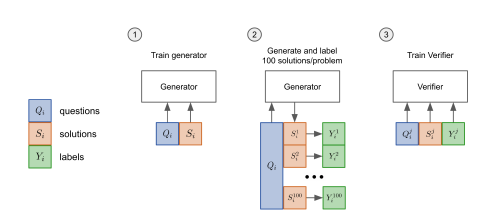

1) Fine-tuning: The most obvious approach to optimizing GPT-3 for math word problems is fine-tuning. In essence, the fine-tuned model autoregressively samples a single solution, and performance is judged by checking whether that solution is correct.

2) Verification: This approach samples multiple candidate solutions and uses a trained verifier to assign a probability score to each one, finally outputting the solution with the highest score. The verification pipeline can sample up to 100 candidate solutions for a given problem.

Image Credit: OpenAI
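The difference between the two methods can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not OpenAI's implementation: `best_of_n`, `toy_generate`, and `toy_verify` are hypothetical names, the generator simply cycles through three canned answers, and the verifier scores are hard-coded stand-ins for a learned model's probability of correctness.

```python
import itertools
from typing import Callable, List

Generator = Callable[[str], str]         # problem -> one sampled solution
Verifier = Callable[[str, str], float]   # (problem, solution) -> score in [0, 1]


def best_of_n(problem: str, generate: Generator, verify: Verifier, n: int = 100) -> str:
    """Sample n candidate solutions and return the one the verifier scores highest."""
    candidates: List[str] = [generate(problem) for _ in range(n)]
    return max(candidates, key=lambda solution: verify(problem, solution))


# Toy stand-ins so the sketch runs end to end; a real system would call models here.
_canned = itertools.cycle([
    "Anthony kept 25 pencils.",
    "Anthony kept 15 pencils.",
    "Anthony kept 10 pencils.",
])


def toy_generate(problem: str) -> str:
    # Hypothetical generator: returns the next canned answer, right or wrong.
    return next(_canned)


def toy_verify(problem: str, solution: str) -> float:
    # Hypothetical verifier: hard-coded scores instead of a learned probability.
    scores = {"Anthony kept 10 pencils.": 0.9, "Anthony kept 15 pencils.": 0.3}
    return scores.get(solution, 0.1)


problem = "Anthony had 50 pencils. How many pencils did Anthony keep?"

single_sample = toy_generate(problem)                      # fine-tuning style: one sample
selected = best_of_n(problem, toy_generate, toy_verify)    # verification style: best of 100

print(single_sample)  # Anthony kept 25 pencils.
print(selected)       # Anthony kept 10 pencils.
```

The single sample is only as good as the generator's first attempt, while the best-of-n selection recovers the right answer as long as at least one candidate is correct and the verifier ranks it on top.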

If we process our original math problem through both methods we can see different reasoning steps.

Image Credit: OpenAI

OpenAI's research showed that the verification approach provides a strong performance boost for solving math word problems as long as the dataset is large enough. Among other things, this is because verification is a more scalable and parallelizable process.

Image Credit: OpenAI

The GSM8K Dataset

To advance research in solving math word problems, OpenAI open-sourced the GSM8K dataset. The dataset consists of 8.5K high-quality grade school math word problems that take between 2 and 8 steps to solve. All solutions are written in natural language, which helps interpretability.
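Checking whether a sampled solution is correct comes down to comparing final answers. The sketch below assumes that each reference solution ends with a line of the form `#### <answer>`, which is how I understand the released files to be laid out; verify that against the dataset's README before relying on it. The helper names and the example solution text are written here for illustration and are not copied from the dataset.

```python
import re
from typing import Optional

# Assumed convention: the final numeric answer follows a "####" marker.
FINAL_ANSWER = re.compile(r"####\s*(-?[\d,]*\.?\d+)")


def extract_final_answer(solution: str) -> Optional[str]:
    """Return the number after '####' with thousands separators removed, or None."""
    match = FINAL_ANSWER.search(solution)
    if match is None:
        return None
    return match.group(1).replace(",", "")


def is_correct(sampled: str, reference: str) -> bool:
    """A sampled solution counts as correct if its final answer matches the reference."""
    predicted = extract_final_answer(sampled)
    return predicted is not None and predicted == extract_final_answer(reference)


# Hand-written example in the assumed format (not an actual dataset record).
reference = (
    "Anthony gave 50 * 1/2 = 25 pencils to Brandon.\n"
    "He gave 25 * 3/5 = 15 pencils to Charlie.\n"
    "He kept 25 - 15 = 10 pencils.\n"
    "#### 10"
)

print(extract_final_answer(reference))                    # 10
print(is_correct("He kept 10 pencils.\n#### 10", reference))  # True
print(is_correct("He kept 15 pencils.\n#### 15", reference))  # False
```

Only the final answer is compared, so a solution with flawed intermediate reasoning that happens to land on the right number would still count as correct under this check.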

Bio: Jesus Rodriguez is currently a CTO at Intotheblock. He is a technology expert, executive investor and startup advisor. Jesus founded Tellago, an award-winning software development firm focused on helping companies become great software organizations by leveraging new enterprise software trends.

Original. Reposted with permission.
