RedPajama Project: An Open-Source Initiative to Democratize LLMs

A Leading Project to Empower the Community through Accessible Large Language Models.

Image by Author (Generated via Stable Diffusion 2.1)

In recent times, Large Language Models (LLMs) have dominated the world. With the introduction of ChatGPT, anyone can now benefit from text generation models. However, many powerful models are available only commercially, which holds back a great deal of research and customization.

There are, of course, many projects now trying to fully open-source LLMs. Pythia, Dolly, DLite, and many others are some examples. But why make LLMs open-source? These projects are driven by a shared community sentiment: to overcome the limitations that closed models impose. But are open-source models inferior to closed ones? Of course not. Many open-source models can rival commercial models and show promising results in many areas.

As part of this movement, one of the open-source projects aiming to democratize LLMs is RedPajama. What is this project, and how can it benefit the community? Let's explore further.

RedPajama

RedPajama is a collaborative project between Ontocord.ai, ETH DS3Lab, Stanford CRFM, and Hazy Research to develop reproducible open-source LLMs. The project has three milestones:

- Pre-training data

- Base models

- Instruction tuning data and models

At the time of writing, the RedPajama project had released the pre-training data and the models, including base, instruction-tuned, and chat versions.

RedPajama Pre-Training Data

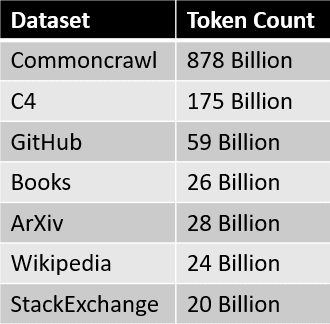

As its first step, RedPajama set out to replicate the training dataset of LLaMA, a semi-open model. This meant building a pre-training dataset of 1.2 trillion tokens and fully open-sourcing it for the community. At the time of writing, both the full dataset and a smaller sample can be downloaded from Hugging Face.

The data sources for the RedPajama dataset are summarized in the table below.

Each data slice is carefully pre-processed and filtered, and the token counts roughly match those reported in the LLaMA paper.

The next step after creating the dataset is developing the base models.

RedPajama Models

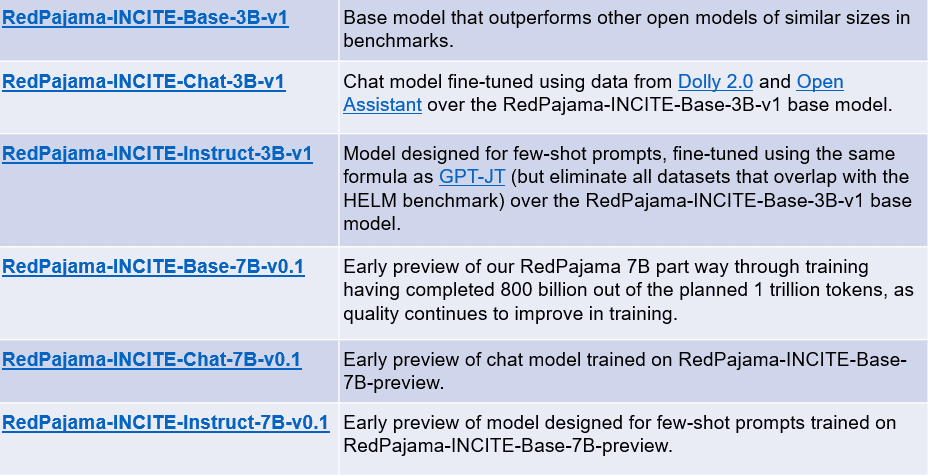

In the weeks following the release of the RedPajama dataset, the first models trained on it were released. The base models come in two sizes: 3 billion and 7 billion parameters. The project also released two variations of each base model: an instruction-tuned model and a chat model.

The summary of each model can be seen in the table below.

Image by Author (Adapted from together.xyz)
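Model size matters in practice because the weights have to fit in memory. As a rough, back-of-the-envelope sketch (not something published by the RedPajama project), weight memory is simply the parameter count times the bytes per parameter. The helper name `weight_memory_gb` and the nominal 3e9/7e9 parameter counts are assumptions for illustration; activations, the KV cache, and framework overhead are deliberately ignored.

```python
# Back-of-the-envelope estimate of the memory needed just to hold model weights.
# Assumptions: nominal parameter counts (3B, 7B); real checkpoints differ slightly,
# and activations, the KV cache, and framework overhead are NOT included.

BYTES_PER_PARAM = {
    "float32": 4,
    "float16": 2,
    "bfloat16": 2,
    "int8": 1,
}


def weight_memory_gb(num_params: float, dtype: str = "bfloat16") -> float:
    """Return approximate weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for name, params in [("3B", 3e9), ("7B", 7e9)]:
    for dtype in ("float32", "bfloat16"):
        print(f"{name} in {dtype}: ~{weight_memory_gb(params, dtype):.0f} GB")
```

By this estimate, the 3B model in bfloat16 needs roughly 6 GB for its weights alone and the 7B model roughly 14 GB, which is one reason to start experimenting with the 3B model on modest hardware.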

You can access the models above using the following links:

- RedPajama-INCITE-Base-3B-v1

- RedPajama-INCITE-Chat-3B-v1

- RedPajama-INCITE-Instruct-3B-v1

- RedPajama-INCITE-Base-7B-v0.1

- RedPajama-INCITE-Chat-7B-v0.1

- RedPajama-INCITE-Instruct-7B-v0.1
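The Instruct and Chat variants expect prompts in a particular shape. The sketch below assumes the formats shown in the examples on the Hugging Face model cards at the time of writing (`<human>:`/`<bot>:` turns for Chat, `Q:`/`A:` for Instruct). The helper functions `build_chat_prompt`, `build_instruct_prompt`, and `extract_reply` are hypothetical, so check the current model card before relying on the exact format.

```python
from typing import List, Tuple


def build_chat_prompt(history: List[Tuple[str, str]], user_message: str) -> str:
    """Format a conversation for a RedPajama-INCITE Chat model.

    Assumption: turns use the "<human>:" / "<bot>:" tags from the model card
    examples; the prompt ends with "<bot>:" so the model writes the reply.
    """
    lines = []
    for human, bot in history:
        lines.append(f"<human>: {human}")
        lines.append(f"<bot>: {bot}")
    lines.append(f"<human>: {user_message}")
    lines.append("<bot>:")
    return "\n".join(lines)


def build_instruct_prompt(question: str) -> str:
    """Format a single question for an Instruct model (assumed "Q:/A:" style)."""
    return f"Q: {question}\nA:"


def extract_reply(generated: str) -> str:
    """Keep only the bot's reply: cut at the next "<human>:" tag, if any."""
    return generated.split("<human>:", 1)[0].strip()


print(build_chat_prompt([], "Who is Alan Turing?"))
print(build_instruct_prompt("The capital of France is?"))
```

The resulting string would take the place of `prompt` in the generation code below. Because a chat model may keep going and write the next `<human>:` turn itself, `extract_reply` trims the decoded output at that tag.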

Let's try out a RedPajama base model. As an example, we will run the RedPajama 3B base model with code adapted from Hugging Face.

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

# init: load the tokenizer and the 3B base model in bfloat16
# (the model stays on the CPU unless you move it to a GPU yourself)
tokenizer = AutoTokenizer.from_pretrained(
    "togethercomputer/RedPajama-INCITE-Base-3B-v1"
)
model = AutoModelForCausalLM.from_pretrained(
    "togethercomputer/RedPajama-INCITE-Base-3B-v1", torch_dtype=torch.bfloat16
)

# infer: tokenize the prompt and sample a continuation
prompt = "Mother Teresa is"
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
input_length = inputs.input_ids.shape[1]
outputs = model.generate(
    **inputs,
    max_new_tokens=128,
    do_sample=True,
    temperature=0.7,
    top_p=0.7,
    top_k=50,
    return_dict_in_generate=True,
)

# keep only the newly generated tokens (drop the prompt) and decode them
token = outputs.sequences[0, input_length:]
output_str = tokenizer.decode(token)
print(output_str)
```

```text
a Catholic saint and is known for her work with the poor and dying in Calcutta, India.

Born in Skopje, Macedonia, in 1910, she was the youngest of thirteen children. Her parents died when she was only eight years old, and she was raised by her older brother, who was a priest.

In 1928, she entered the Order of the Sisters of Loreto in Ireland. She became a teacher and then a nun, and she devoted herself to caring for the poor and sick.

She was known for her work with the poor and dying in Calcutta, India.
```

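The 3B base model's result is promising: the text is fluent and on topic, although some biographical details are inaccurate, so generated facts still need checking. The 7B base model might give better results, and since development is still ongoing, the project may release even better models in the future.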
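The `generate` call in the example uses sampling (`do_sample=True`) with `temperature=0.7`, `top_k=50`, and `top_p=0.7`, which is why each run can produce a different continuation. To illustrate roughly what these parameters do to the next-token distribution, here is a simplified pure-Python sketch. It is not the Transformers implementation, it works on a single list of logits, and the function names are hypothetical. It applies temperature, then top-k, then top-p (nucleus) filtering, and samples from whatever remains.

```python
import math
import random
from typing import Dict, List, Optional


def filter_probs(logits: List[float], temperature: float = 0.7,
                 top_k: int = 50, top_p: float = 0.7) -> Dict[int, float]:
    """Return {token_id: probability} after temperature, top-k and top-p filtering."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # 1. Temperature: divide logits before the softmax (<1 sharpens, >1 flattens).
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # 2. Top-k: keep only the k most likely token ids, then renormalise.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass_k = sum(probs[i] for i in ranked)
    probs_k = {i: probs[i] / mass_k for i in ranked}

    # 3. Top-p (nucleus): keep the smallest high-probability prefix whose
    #    cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs_k[i]
        if cumulative >= top_p:
            break

    # Renormalise the survivors so the distribution sums to 1 again.
    mass_p = sum(probs_k[i] for i in kept)
    return {i: probs_k[i] / mass_p for i in kept}


def sample_next_token(logits: List[float], rng: Optional[random.Random] = None,
                      **kwargs) -> int:
    """Draw one token id from the filtered distribution."""
    rng = rng or random.Random()
    dist = filter_probs(logits, **kwargs)
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]


# Toy 4-token vocabulary; with temperature=1.0 and top_p=0.7 only ids 0 and 1 survive.
toy_logits = [2.0, 1.0, 0.0, -1.0]
print(filter_probs(toy_logits, temperature=1.0, top_k=50, top_p=0.7))
print(sample_next_token(toy_logits, rng=random.Random(0)))
```

Lowering `temperature` or `top_p` concentrates probability on fewer tokens and makes the output more predictable. With the article's settings (`temperature=0.7`, `top_p=0.7`), this toy vocabulary is reduced to its single most likely token.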

Conclusion

Generative AI is on the rise, but sadly, many great models are still locked away inside companies. RedPajama is one of the leading projects trying to replicate the semi-open LLaMA model to democratize LLMs. By building a dataset similar to LLaMA's, RedPajama created an open-source 1.2-trillion-token dataset that many open-source projects have used.

RedPajama has also released base models in two sizes, 3B and 7B parameters, and each base model comes with instruction-tuned and chat variants.

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves to share Python and data tips via social media and in his writing.