5 Useful Docker Containers for Agentic Developers

Build AI agents instantly with 5 ready‑to‑run Docker containers. Pull, run, and start creating with zero setup.

# Introduction

The rise of frameworks like LangChain and CrewAI has made building AI agents easier than ever. However, developing these agents often involves hitting API rate limits, managing high-dimensional data, or exposing local servers to the internet.

Instead of paying for cloud services during the prototyping phase or polluting your host machine with dependencies, you can leverage Docker. With a single command, you can spin up the infrastructure that makes your agents smarter.

Here are 5 essential Docker containers that every AI agent developer should have in their toolkit.

# 1. Ollama: Run Local Language Models

When building agents, sending every prompt to a cloud provider like OpenAI can get expensive and slow. Sometimes you need a fast, private model for narrow tasks such as grammar correction or classification.

Ollama allows you to run open-source large language models (LLMs) — like Llama 3, Mistral, or Phi — directly on your local machine. By running it in a container, you keep your system clean and can easily switch between different models without a complex Python environment setup.

The Ollama Docker image serves these models over a REST API, which addresses two common concerns when building agents: privacy and cost.

// Explaining Why It Matters for Agentic Developers

Instead of sending sensitive data to external APIs like OpenAI, you can give your agent a "brain" that lives inside your own infrastructure. This matters for enterprise agents that handle proprietary data. Once the ollama/ollama container is running with its port published and a model pulled, your agent code has a local endpoint it can call to generate text or reason about tasks.

// Initiating a Quick Start

To start the Ollama server in a container, use the following command. It publishes port 11434 and stores downloaded models in a named Docker volume (ollama), so they persist when the container is removed or recreated.

docker run -d -v ollama:/root/.ollama -p 11434:11434 --name ollama ollama/ollama

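Once the container is running, pull a model by executing a command inside the container. The command below downloads Mistral on first use and then opens an interactive chat session; use ollama pull mistral instead if you only want the download: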

docker exec -it ollama ollama run mistral

// Explaining Why It's Useful for Agentic Developers

You can now point your agent's LLM client to http://localhost:11434. Ollama serves its native REST API there, plus an OpenAI-compatible API under /v1, so you get a local endpoint for fast prototyping and your prompts stay on your machine.
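As a rough illustration of calling that endpoint from agent code, the sketch below posts a prompt to Ollama's /api/generate route using only the Python standard library. The helper names are made up for this example, and it assumes the mistral model has already been pulled into the container.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # port published by the docker run command


def build_generate_request(prompt, model="mistral", base_url=OLLAMA_URL):
    """Build a POST request for Ollama's /api/generate endpoint."""
    payload = {
        "model": model,   # assumed to be pulled already
        "prompt": prompt,
        "stream": False,  # ask for a single JSON object instead of a token stream
    }
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="mistral", timeout=120):
    """Send the prompt to the local Ollama server and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

With the container running, something like generate("Classify this ticket as billing or technical: my invoice is wrong") should return the model's answer as a string. Frameworks such as LangChain wrap the same endpoint, so this is mainly useful for checking that the server responds.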

// Reviewing Key Benefits

- Data Privacy: Keep your prompts and data secure

- Cost Efficiency: No API fees for inference

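- Latency: No network round trip, and faster responses when the container can use a local GPU (for NVIDIA cards, add --gpus=all to the docker run command and install the NVIDIA Container Toolkit)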

Learn more: Ollama Docker Hub

# 2. Qdrant: The Vector Database for Memory

Agents require memory to recall past conversations and domain knowledge. To give an agent long-term memory, you need a vector database. These databases store numerical representations (embeddings) of text, allowing your agent to search for semantically similar information later.
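To make "semantically similar" concrete, here is a minimal in-memory sketch of what a vector search does: compare a query vector against stored vectors and return the closest one. The three-dimensional vectors are invented toy values; real embeddings come from an embedding model and have hundreds of dimensions, and a vector database performs this comparison at scale with indexing.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, memories):
    """Return the text of the stored memory closest to the query vector."""
    return max(memories, key=lambda text: cosine_similarity(query, memories[text]))


# Toy 3-dimensional "embeddings" (hypothetical values for illustration).
memories = {
    "User prefers email over phone calls": [0.9, 0.1, 0.0],
    "Refund policy allows returns within 30 days": [0.1, 0.8, 0.3],
}
query = [0.2, 0.9, 0.2]  # pretend embedding of "How do refunds work?"

print(most_similar(query, memories))
# prints "Refund policy allows returns within 30 days"
```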

Qdrant is a high-performance, open-source vector database built in Rust. It is fast, reliable, and offers both a gRPC and a REST API. Running it in Docker gives you a production-grade memory system for your agents instantly.

// Explaining Why It Matters for Agentic Developers

To build a retrieval-augmented generation (RAG) agent, you need to store document embeddings and retrieve them quickly. Qdrant acts as the agent's long-term memory. When a user asks a question, the agent converts it into a vector, searches Qdrant for similar vectors — representing relevant knowledge — and uses that context to formulate an answer. Running it in Docker keeps this memory layer decoupled from your application code, making it more robust.

// Initiating a Quick Start

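You can start Qdrant with a single command. This exposes the REST API and dashboard on port 6333 and the gRPC interface on port 6334, and mounts a named volume so your collections are not lost when the container is removed.

docker run -d --name qdrant -p 6333:6333 -p 6334:6334 -v qdrant_storage:/qdrant/storage qdrant/qdrant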

After running this, you can connect your agent to localhost:6333. When the agent learns something new, embed the text and store the vector in Qdrant. The next time the user asks a question, the agent can search this database for relevant "memories" to include in the prompt, so it can draw on earlier context instead of starting from scratch.
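The store-then-search loop described above can be sketched against Qdrant's REST API with the standard library alone. The collection name agent_memory and the helper functions are hypothetical, and the vectors are assumed to come from whatever embedding model you use. Newer Qdrant releases also offer a more general query endpoint, so treat the search route here as one option rather than the only one.

```python
import json
import urllib.request

QDRANT_URL = "http://localhost:6333"
COLLECTION = "agent_memory"  # hypothetical collection name


def qdrant_request(method, path, payload):
    """Build a JSON request against the local Qdrant REST API."""
    return urllib.request.Request(
        f"{QDRANT_URL}{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method=method,
    )


def create_collection_request(vector_size):
    """Create a collection whose vectors are compared by cosine similarity."""
    body = {"vectors": {"size": vector_size, "distance": "Cosine"}}
    return qdrant_request("PUT", f"/collections/{COLLECTION}", body)


def upsert_memory_request(point_id, vector, text):
    """Store one embedding together with the text it represents."""
    point = {"id": point_id, "vector": vector, "payload": {"text": text}}
    return qdrant_request("PUT", f"/collections/{COLLECTION}/points", {"points": [point]})


def search_request(query_vector, limit=3):
    """Ask for the stored points closest to the query vector."""
    body = {"vector": query_vector, "limit": limit, "with_payload": True}
    return qdrant_request("POST", f"/collections/{COLLECTION}/points/search", body)


def send(request):
    """Execute a request and return the decoded JSON response."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

A typical sequence would be send(create_collection_request(3)) once, send(upsert_memory_request(1, [0.1, 0.8, 0.3], "Refunds take 30 days")) whenever the agent learns something, and send(search_request([0.2, 0.9, 0.2])) at question time. The matches come back under the "result" key of the response. For real projects the official qdrant-client package is likely more convenient than raw HTTP.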

# 3. n8n: Glue Workflows Together

Agentic workflows rarely exist in a vacuum. You sometimes need your agent to check your email, update a row in a Google Sheet, or send a Slack message. While you could write the API calls manually, the process is often tedious.

n8n is a fair-code workflow automation tool. It allows you to connect different services using a visual UI. By running it locally, you can create complex workflows — such as "If an agent detects a sales lead, add it to HubSpot and send a Slack alert" — without writing a single line of integration code.

// Initiating a Quick Start

To persist your workflows, you should mount a volume. The following command sets up n8n with SQLite as its database.

docker run -d --name n8n -p 5678:5678 -v n8n_data:/home/node/.n8n n8nio/n8n

// Explaining Why It's Useful for Agentic Developers

You can design your agent to call an n8n webhook URL. The agent simply sends the data, and n8n handles the messy logic of talking to third-party APIs. This separates the "brain" (the LLM) from the "hands" (the integrations).

Access the editor at http://localhost:5678 and start automating.
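Calling an n8n webhook from agent code might look like the sketch below. It assumes you have created a workflow that starts with a Webhook node set to accept POST requests on the path sales-lead; that path and the helper names are hypothetical. n8n serves active workflows under /webhook/ and uses /webhook-test/ while you are testing in the editor.

```python
import json
import urllib.request

N8N_URL = "http://localhost:5678"


def build_webhook_request(path, data, test_mode=False):
    """Build a POST request for an n8n Webhook node."""
    prefix = "webhook-test" if test_mode else "webhook"
    return urllib.request.Request(
        f"{N8N_URL}/{prefix}/{path}",
        data=json.dumps(data).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def notify_n8n(path, data, test_mode=False):
    """Send the data to n8n and return the HTTP status code."""
    request = build_webhook_request(path, data, test_mode)
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status
```

The agent would then call something like notify_n8n("sales-lead", {"name": "Ada", "email": "ada@example.com"}) and leave the HubSpot and Slack steps to the workflow.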

Learn more: n8n Docker Hub

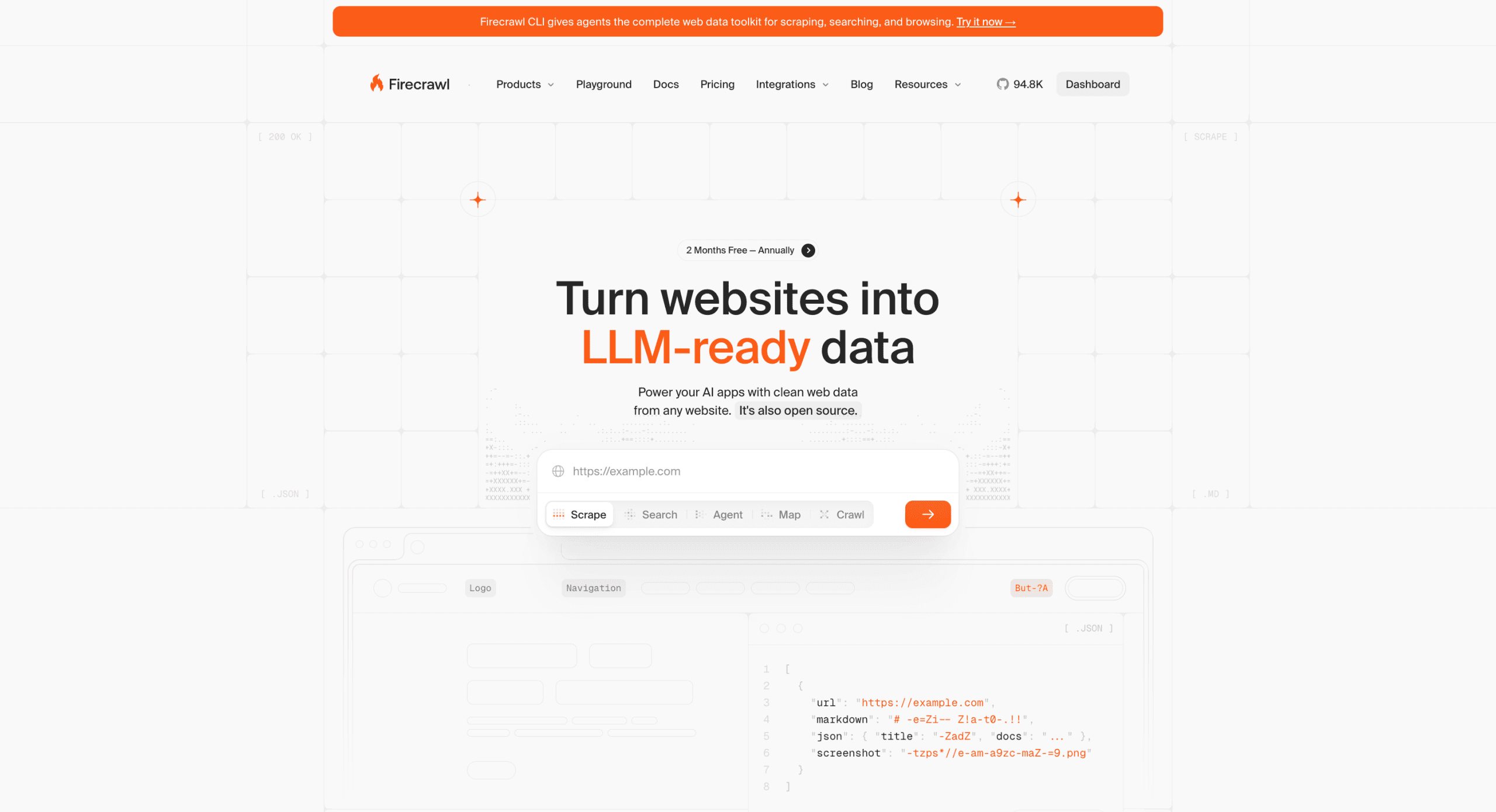

# 4. Firecrawl: Transform Websites into LLM-Ready Data

One of the most common tasks for agents is research. However, agents struggle to read raw HTML or JavaScript-rendered websites. They need clean, markdown-formatted text.

Firecrawl is an API service that takes a URL, crawls the website, and converts the content into clean markdown or structured data. It handles JavaScript rendering and automatically removes boilerplate such as ads and navigation bars. Running it locally avoids the usage limits of the cloud version, though the self-hosted build may not include every cloud feature.

// Initiating a Quick Start

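Firecrawl ships a docker-compose.yml file because it consists of multiple services, including the API app, Redis, and a Playwright service. Clone the repository and start the stack; the repository's self-hosting guide describes the .env file the services expect, so check it if the containers fail to start.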

git clone https://github.com/mendableai/firecrawl.git

cd firecrawl

docker compose up

// Explaining Why It's Useful for Agentic Developers

Give your agent the ability to ingest live web data. If you are building a research agent, you can have it call your local Firecrawl instance to fetch a webpage, convert it to clean text, chunk it, and store it in your Qdrant instance autonomously.
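The fetch-and-chunk part of that pipeline could be sketched as follows. Treat the details as assumptions to verify against your checkout: the port (3002), the /v1/scrape route, and the response shape are what the self-hosted API is expected to expose, and the function names are invented for this example. The chunker is plain Python and splits the markdown into overlapping character windows ready for embedding.

```python
import json
import urllib.request

FIRECRAWL_URL = "http://localhost:3002"  # assumed default port of the self-hosted API


def build_scrape_request(url):
    """Build a request asking Firecrawl for the page as markdown (assumed v1 route)."""
    payload = {"url": url, "formats": ["markdown"]}
    return urllib.request.Request(
        f"{FIRECRAWL_URL}/v1/scrape",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def fetch_markdown(url, timeout=60):
    """Scrape one page and return its markdown (assumes a data.markdown field)."""
    with urllib.request.urlopen(build_scrape_request(url), timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["data"]["markdown"]


def chunk_text(text, size=500, overlap=50):
    """Split text into character windows of `size` that overlap by `overlap`."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # the last window already reaches the end of the text
        start += size - overlap
    return chunks
```

Each chunk returned by chunk_text(fetch_markdown("https://example.com")) can then be embedded and stored in Qdrant. The overlap keeps sentences that straddle a boundary retrievable from either side.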

# 5. PostgreSQL and pgvector: Implement Relational Memory

Sometimes, vector search alone is not enough. You may need a database that can handle structured data — like user profiles or transaction logs — and vector embeddings simultaneously. PostgreSQL, with the pgvector extension, allows you to do just that.

Instead of running a separate vector database and a separate SQL database, you get the best of both worlds. You can store profile fields such as a user's name, age, and city in ordinary columns and their conversation embeddings in a vector column, then perform hybrid searches (e.g. "Find me conversations from users in New York about refunds").

// Initiating a Quick Start

The official PostgreSQL image does not include pgvector by default. Use an image that bundles it, such as the one published by the pgvector project. After the container starts, enable the extension once per database with CREATE EXTENSION vector;.

docker run -d --name postgres-pgvector -p 5432:5432 -e POSTGRES_PASSWORD=mysecretpassword pgvector/pgvector:pg16
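Once the container is up, the hybrid search described earlier comes down to a few SQL statements. The sketch below keeps them as Python strings so they can be run with whichever PostgreSQL driver you prefer. The table and column names are hypothetical, the %(name)s placeholders assume a psycopg-style driver, and the three-dimensional vector column is a toy size that should match your embedding model in practice.

```python
def to_vector_literal(values):
    """Format a list of numbers as a pgvector text literal, e.g. [0.1,0.2,0.3]."""
    return "[" + ",".join(str(float(v)) for v in values) + "]"


# Run once per database: enable pgvector and create a table that mixes
# ordinary columns with an embedding column.
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE IF NOT EXISTS conversations (
    id        bigserial PRIMARY KEY,
    user_name text NOT NULL,
    city      text NOT NULL,
    message   text NOT NULL,
    embedding vector(3)  -- toy dimension for illustration
);
"""

# Hybrid search: filter on a regular column, then rank by cosine distance (<=>).
HYBRID_SEARCH_SQL = """
SELECT user_name, message
FROM conversations
WHERE city = %(city)s
ORDER BY embedding <=> %(query_embedding)s::vector
LIMIT 5;
"""


def hybrid_search_params(city, query_embedding):
    """Build the parameter dict for HYBRID_SEARCH_SQL."""
    return {"city": city, "query_embedding": to_vector_literal(query_embedding)}
```

With psycopg, for example, this would be roughly cursor.execute(HYBRID_SEARCH_SQL, hybrid_search_params("New York", refund_query_vector)), where refund_query_vector is the embedding of the phrase "refunds".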

// Explaining Why It's Useful for Agentic Developers

This is a practical backend for stateful agents. Your agent can write its memories and its internal state into the same database where your application data lives, which keeps the two consistent and simplifies your architecture.

# Wrapping Up

You do not need a massive cloud budget to build sophisticated AI agents. The Docker ecosystem provides capable open-source alternatives that run well on a developer laptop.

By adding these five containers to your workflow, you equip yourself with:

- Brains: Ollama for local inference

- Memory: Qdrant for vector search

- Hands: n8n for workflow automation

- Eyes: Firecrawl for web ingestion

- Storage: PostgreSQL with pgvector for structured data and embeddings

Start your containers, point your LangChain or CrewAI code to localhost, and watch your agents come to life.
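Before pointing agent code at localhost, it can help to confirm that each container is actually listening. The sketch below simply tries to open a TCP connection to each published port. The ports match the commands in this article, except Firecrawl's 3002, which is an assumed default for the self-hosted API.

```python
import socket

# Service name -> port published on localhost.
SERVICES = {
    "Ollama": 11434,
    "Qdrant": 6333,
    "n8n": 5678,
    "Firecrawl": 3002,  # assumed default; check your compose file
    "PostgreSQL": 5432,
}


def is_port_open(port, host="localhost", timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_services(services=SERVICES):
    """Map each service name to whether its port is reachable."""
    return {name: is_port_open(port) for name, port in services.items()}
```

Calling check_services() returns a dictionary such as {"Ollama": True, "Qdrant": False, ...}, which is a quick way to spot a container that failed to start. An open port only shows that something is listening, not that the service is healthy.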

Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.