Image by Author

A few months ago, we learnt about Falcon LLM, which was developed by the Technology Innovation Institute (TII), part of the Abu Dhabi Government’s Advanced Technology Research Council. Fast forward a few months, and they’ve just got even bigger and better - literally, so much bigger.

Falcon 180B: All You Need to Know

Falcon 180B is the largest openly available language model, with 180 billion parameters. Yes, that’s right, you read correctly - 180 billion. It was trained on 3.5 trillion tokens using TII's RefinedWeb dataset. This represents the longest single-epoch pre-training for an open model.

But it’s not just the size of the model that we’re going to focus on here; it’s also the power and potential behind it. Falcon 180B is setting new standards for large language models (LLMs) when it comes to capabilities.

The models that are available:

The Falcon-180B Base model is a causal decoder-only model. I would recommend using this model for further fine-tuning on your own data.

The Falcon-180B-Chat model builds on the base version and is fine-tuned on a mix of Ultrachat, Platypus, and Airoboros instruction (chat) datasets.

Training

Falcon 180B is a scaled-up version of its predecessor Falcon 40B and builds on its innovations, such as multiquery attention for enhanced scalability. The model was trained on 3.5 trillion tokens using up to 4096 GPUs on Amazon SageMaker, for a total of roughly 7,000,000 GPU hours. This means that Falcon 180B is 2.5x larger than Llama 2 and was trained with 4x more compute.

Wow, that’s a lot.
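To put those training figures in perspective, here is a rough back-of-the-envelope sketch in Python. It only uses the numbers quoted above. The wall-clock estimate assumes that all 4096 GPUs ran for the entire job, which is a simplification and not something the figures confirm; the function names are purely illustrative.

```python
# Figures quoted in the article.
GPU_COUNT = 4096
GPU_HOURS = 7_000_000
TRAINING_TOKENS = 3.5e12   # 3.5 trillion tokens
PARAMETERS = 180e9         # 180 billion parameters


def wall_clock_days(gpu_hours: float, gpu_count: int) -> float:
    """Days of training, ASSUMING every GPU ran for the whole job."""
    return gpu_hours / gpu_count / 24


def tokens_per_parameter(tokens: float, parameters: float) -> float:
    """How many training tokens the model saw per parameter."""
    return tokens / parameters


if __name__ == "__main__":
    days = wall_clock_days(GPU_HOURS, GPU_COUNT)
    ratio = tokens_per_parameter(TRAINING_TOKENS, PARAMETERS)
    print(f"Approximate wall-clock time: {days:.0f} days")
    print(f"Tokens per parameter: {ratio:.1f}")
```

Under that assumption, the job works out to a little over two months of continuous training, with the model seeing around 19 tokens for every parameter.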

Data

The dataset used for Falcon 180B was predominantly sourced (85%) from RefinedWeb. The remainder is a mix of curated data such as technical papers, conversations, and some code.

Benchmark

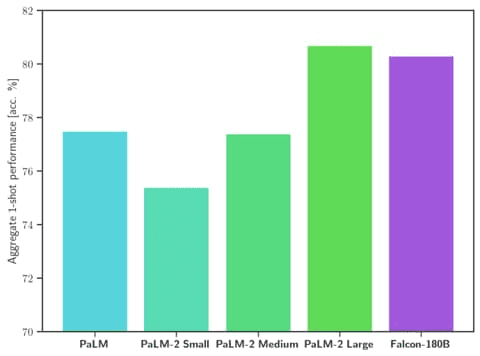

The part you all want to know - how is Falcon 180B doing amongst its competitors?

As of September 2023, Falcon 180B is the best openly released LLM. It has been shown to outperform Llama 2 70B and OpenAI’s GPT-3.5 on MMLU. Depending on the evaluation benchmark, it typically sits somewhere between GPT-3.5 and GPT-4.

Image by HuggingFace Falcon 180B

Falcon 180B scored 68.74 on the Hugging Face Leaderboard, making it the highest-scoring openly released pre-trained LLM, ahead of Meta’s Llama 2 at 67.35.

How to use Falcon 180B?

For the developers and natural language processing (NLP) enthusiasts out there, Falcon 180B is available in the Hugging Face ecosystem, starting with Transformers version 4.33.
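As a minimal sketch of what that might look like in Python, the snippet below checks the Transformers version and then loads the base model with the standard `AutoTokenizer` and `AutoModelForCausalLM` classes. A few things here are assumptions rather than details from this article: the Hub model id `tiiuae/falcon-180B`, the choice of `bfloat16`, and the use of `device_map="auto"` (which needs the `accelerate` package). The helper names `meets_minimum` and `generate` are just illustrative, and access to the weights may require accepting the license on the model page first.

```python
def meets_minimum(installed: str, minimum: str = "4.33") -> bool:
    """Compare the major.minor parts of two version strings."""
    def parse(version: str) -> tuple:
        return tuple(int(part) for part in version.split(".")[:2])
    return parse(installed) >= parse(minimum)


def generate(prompt: str,
             model_id: str = "tiiuae/falcon-180B",  # assumed Hub id
             max_new_tokens: int = 50) -> str:
    """Load the model and complete a prompt. Needs serious multi-GPU hardware."""
    # Imported lazily so the version helper works without these packages.
    import torch
    import transformers
    from transformers import AutoModelForCausalLM, AutoTokenizer

    if not meets_minimum(transformers.__version__):
        raise RuntimeError("Falcon 180B needs Transformers 4.33 or newer")

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.bfloat16,  # assumption: half precision to save memory
        device_map="auto",           # spread layers across the available GPUs
    )
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

Calling `generate("My name is Nisha and I")` would download and load the full model, so only try it on a machine that can actually hold the weights.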

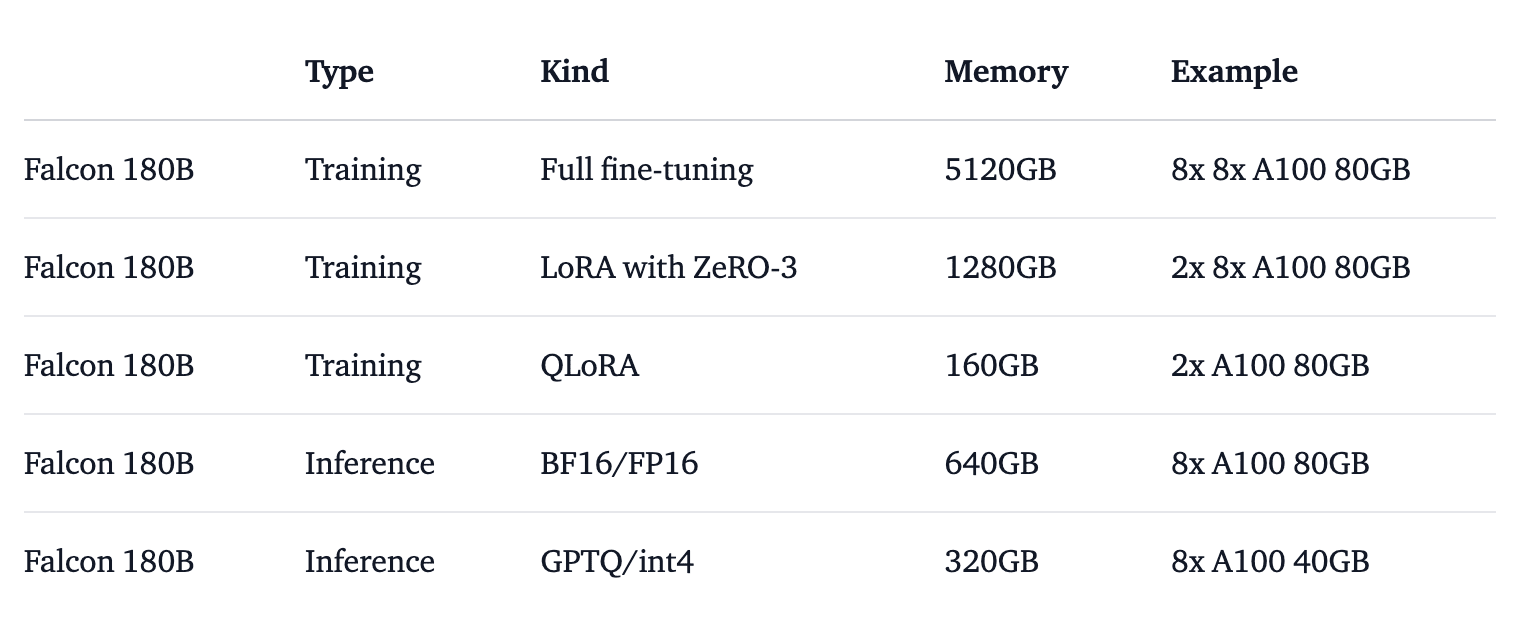

However, as you can imagine, given the model’s size you will need to take hardware requirements into consideration. To give a better understanding of these, Hugging Face tested what is needed to run the model for different use cases, as shown in the image below:

Image by HuggingFace Falcon 180B
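To get a feel for why the requirements are so steep, here is a rough Python sketch that estimates the memory needed just to hold the weights at different precisions. This is simple arithmetic (parameters times bytes per parameter), not the Hugging Face test results: real usage is higher once you add activations, the attention cache, and, for training, gradients and optimizer state. The function name and the list of precisions are my own illustrative choices.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {
    "float32": 4.0,
    "bfloat16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(parameters: float, dtype: str) -> float:
    """Lower-bound memory (in GB) for the weights alone."""
    return parameters * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        gb = weight_memory_gb(180e9, dtype)
        print(f"{dtype:>8}: about {gb:.0f} GB for the weights alone")
```

For 180 billion parameters, that is roughly 360 GB in bfloat16 and 90 GB at 4 bits before any overhead, which is already far beyond a single consumer GPU.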

If you would like to give it a test and play around with it, you can try out Falcon 180B through the demo by clicking on this link: Falcon 180B Demo.

Falcon 180B vs ChatGPT

The model has some serious hardware requirements, which puts it out of reach for many people. On top of that, based on other people's findings from asking Falcon 180B and ChatGPT the same questions, ChatGPT took the win.

It performed well on code generation; however, it needs a boost on text extraction and summarization.

Wrapping it up

If you’ve had a chance to play around with it, let us know what your findings were against other LLMs. Is Falcon 180B worth all the hype around it, given that it is currently the largest publicly available model on the Hugging Face model hub?

Well, it seems to be, as it has been shown to sit at the top of the charts for open-access models and is giving models like PaLM-2 a run for their money. We’ll find out sooner or later.

Nisha Arya is a data scientist, freelance technical writer, and an editor and community manager for KDnuggets. She is particularly interested in providing data science career advice or tutorials and theory-based knowledge around data science. Nisha covers a wide range of topics and wishes to explore the different ways artificial intelligence can benefit the longevity of human life. A keen learner, Nisha seeks to broaden her tech knowledge and writing skills, while helping guide others.