We Used 5 Outlier Detection Methods on a Real Dataset: They Disagreed on 96% of Flagged Samples

Out of 816 wines flagged by at least one method, just 32 made the unanimous list. Those wines had something in common.

Image by Author

# Introduction

Most data science tutorials make outlier detection look easy. Remove every value more than three standard deviations from the mean; that's all there is to it. But once you start working with an actual dataset where the distribution is skewed and a stakeholder asks, "Why did you remove that data point?" you suddenly realize you don't have a good answer.

So we ran an experiment. We tested five of the most commonly used outlier detection methods on a real dataset (6,497 Portuguese wines) to find out: do these methods produce consistent results?

They didn't. What we learned from the disagreement turned out to be more valuable than anything we could have picked up from a textbook.

Image by Author

We built this analysis as an interactive Strata notebook, a format you can use for your own experiments using the Data Project on StrataScratch. You can view and run the full code here.

# Setting Up

Our data comes from the Wine Quality Dataset, publicly available through UCI's Machine Learning Repository. It contains physicochemical measurements from 6,497 Portuguese "Vinho Verde" wines (1,599 red, 4,898 white), along with quality ratings from expert tasters.

We selected it for several reasons. It's production data, not something generated artificially. The distributions are skewed (6 of 11 features have skewness \( > 1 \)), so the data do not meet textbook assumptions. And the quality ratings let us check if the detected "outliers" show up more among wines with unusual ratings.

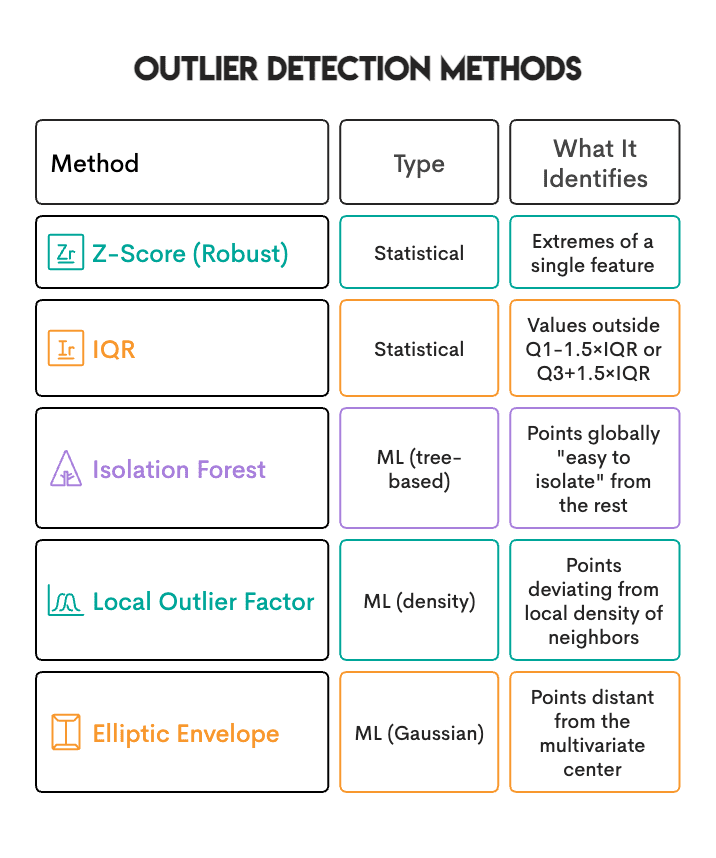

The five methods we tested were Robust Z-Score, IQR, Isolation Forest, Local Outlier Factor (LOF), and Elliptic Envelope.

# Discovering the First Surprise: Inflated Results From Multiple Testing

Before we could compare methods, we hit a wall. With 11 features, the naive approach (flagging a sample based on an extreme value in at least one feature) produced extremely inflated results.

IQR flagged about 23% of wines as outliers. Z-Score flagged about 26%.

When nearly 1 in 4 wines gets flagged as an outlier, something is off. Real datasets rarely contain 25% outliers. The problem was that we were testing 11 features independently, and that inflates the results.

The math is straightforward. If each feature has a 5% probability of showing a "random" extreme value, then with 11 independent features:

\[ P(\text{at least one extreme}) = 1 - (0.95)^{11} \approx 43\% \]

In plain terms: even if nothing is actually wrong with any feature, you'd expect about 43% of your samples to have at least one extreme value somewhere just by random chance.
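The arithmetic is easy to check numerically. The sketch below is illustrative only: it assumes the same 5% per-feature rate and full independence between features, which real wine chemistry will not satisfy exactly, and the function name `prob_any_extreme` is made up for this example.

```python
import numpy as np

def prob_any_extreme(p_single: float, n_features: int) -> float:
    """Chance that at least one of n independent features is extreme."""
    return 1.0 - (1.0 - p_single) ** n_features

analytic = prob_any_extreme(0.05, 11)

# Monte Carlo check: every cell is independently "extreme" with probability 0.05
rng = np.random.default_rng(0)
extreme = rng.random((100_000, 11)) < 0.05
simulated = extreme.any(axis=1).mean()

print(f"analytic:  {analytic:.3f}")
print(f"simulated: {simulated:.3f}")
```

Both numbers should land close to the 43% from the formula. Correlated features would change the exact figure, but the inflation effect remains.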

To fix this, we changed the requirement: flag a sample only when at least 2 features are simultaneously extreme.

Changing min_features from 1 to 2 changed the definition from "any feature of the sample is extreme" to "the sample is extreme across more than one feature."

Here's the fix in code:

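```python
import numpy as np

# Count extreme features per sample (z_scores: samples x features array)
outlier_counts = (np.abs(z_scores) > 3.5).sum(axis=1)

# Flag a sample only when at least min_features features are extreme
min_features = 2
outliers = outlier_counts >= min_features
```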
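The same rule carries over to IQR. The following is a minimal sketch rather than the notebook's actual code: the function name `iqr_outliers`, the conventional 1.5 multiplier, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np

def iqr_outliers(X: np.ndarray, k: float = 1.5, min_features: int = 2) -> np.ndarray:
    """Flag rows with at least `min_features` values outside the IQR fences."""
    q1, q3 = np.percentile(X, [25, 75], axis=0)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    extreme = (X < lower) | (X > upper)   # boolean matrix, one cell per value
    return extreme.sum(axis=1) >= min_features

# Synthetic example: 200 ordinary rows plus two planted rows
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 4))
X[0] = [15.0, 0.0, 0.0, 0.0]     # extreme on one feature only
X[1] = [15.0, -15.0, 0.0, 0.0]   # extreme on two features

flags_any = iqr_outliers(X, min_features=1)
flags_two = iqr_outliers(X, min_features=2)
print(flags_any.sum(), flags_two.sum())
```

Row 0 is caught only by the "any feature" rule, while row 1 survives the stricter two-feature rule.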

# Comparing 5 Methods on 1 Dataset

Once the multiple-testing fix was in place, we counted how many samples each method flagged:

Here's how we set up the ML methods:

```python
from sklearn.ensemble import IsolationForest
from sklearn.neighbors import LocalOutlierFactor

iforest = IsolationForest(contamination=0.05, random_state=42)
lof = LocalOutlierFactor(n_neighbors=20, contamination=0.05)
```

Why do the ML methods all show 5%? Because of the contamination parameter. It sets the score cutoff so that this share of the samples is flagged. It's a quota, not a threshold. In other words, Isolation Forest will flag about 5% regardless of whether your data contains 1% true outliers or 20%.
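The quota behavior shows up even on data with no planted outliers at all. The sketch below uses synthetic Gaussian noise (an assumption for illustration, not the wine data) and the same `contamination=0.05` setting.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(42)
X_clean = rng.normal(size=(2000, 5))   # pure Gaussian noise, nothing planted

iforest = IsolationForest(contamination=0.05, random_state=42)
labels = iforest.fit_predict(X_clean)  # -1 = flagged, 1 = inlier

flagged_share = (labels == -1).mean()
print(f"Flagged share on clean data: {flagged_share:.3f}")
```

The flagged share should sit at roughly 5%, which says more about the parameter than about the data.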

# Discovering the Real Difference: They Identify Different Things

Here's what surprised us most. When we examined how much the methods agreed, the Jaccard similarity ranged from 0.10 to 0.30. That's poor agreement.
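For reference, Jaccard similarity between two methods is the number of samples both flag divided by the number flagged by either. A minimal sketch, with a hypothetical helper name and made-up flags:

```python
import numpy as np

def jaccard(a, b) -> float:
    """Jaccard similarity between two boolean outlier masks."""
    a = np.asarray(a, dtype=bool)
    b = np.asarray(b, dtype=bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 0.0  # convention chosen here: no flags at all means no overlap to measure
    return float(np.logical_and(a, b).sum() / union)

# Made-up flags for six samples from two hypothetical methods
method_a = [True, True, True, False, False, False]
method_b = [False, True, True, True, True, False]
print(jaccard(method_a, method_b))  # 2 shared / 5 flagged by either = 0.4
```

A value of 1.0 would mean two methods flag exactly the same samples; values in the 0.10 to 0.30 range mean most flagged samples are not shared.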

Out of 6,497 wines:

- Only 32 samples (0.5%) were flagged by all 4 primary methods

- 143 samples (2.2%) were flagged by 3+ methods

- The remaining "outliers" were flagged by only 1 or 2 methods

You might think it's a bug, but it's the point. Each method has its own definition of "unusual":

If a wine has residual sugar levels significantly higher than average, it's a univariate outlier (Z-Score/IQR will catch it). But if it's surrounded by other wines with similar sugar levels, LOF won't flag it. It's normal within the local context.
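This difference can be reproduced on toy data. The sketch below is an illustration under made-up numbers, not the wine dataset: it builds a large group of ordinary sugar values plus a tight group of 50 very sweet ones, then compares a Robust Z-Score with LOF at its default settings.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor

rng = np.random.default_rng(0)
# 950 "ordinary" values plus a tight group of 50 very sweet ones (synthetic)
sugar = np.concatenate([rng.normal(5.0, 1.5, 950), rng.normal(40.0, 1.0, 50)])
is_sweet = np.arange(sugar.size) >= 950

# Univariate view: Robust Z-Score on the single feature
median = np.median(sugar)
mad = np.median(np.abs(sugar - median))
robust_z = 0.6745 * (sugar - median) / mad
z_flags = np.abs(robust_z) > 3.5

# Local view: LOF compares each point's density with that of its neighbors
lof = LocalOutlierFactor(n_neighbors=20)
lof_flags = lof.fit_predict(sugar.reshape(-1, 1)) == -1

print("Sweet wines flagged by Robust Z-Score:", z_flags[is_sweet].mean())
print("Sweet wines flagged by LOF:", lof_flags[is_sweet].mean())
```

The Robust Z-Score flags the whole sweet group because it is far from the median, while LOF leaves most of it alone because each sweet wine has plenty of similar neighbors.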

So the real question isn't "which method is best?" It's "what kind of unusual am I searching for?"

# Checking Sanity: Do Outliers Correlate With Wine Quality?

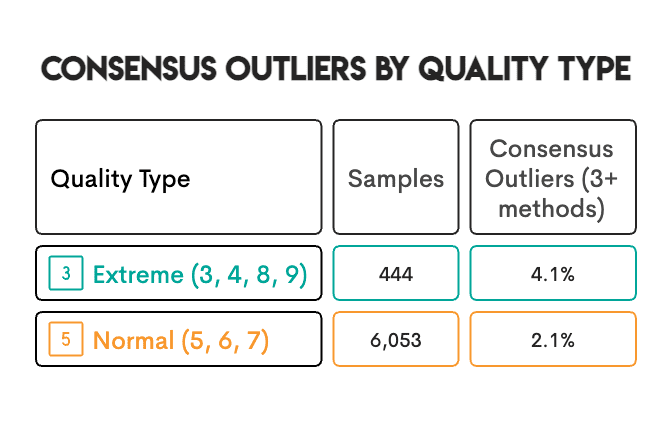

The dataset includes expert quality ratings (3-9). We wanted to know: do detected outliers appear more frequently among wines with extreme quality ratings?

Extreme-quality wines were twice as likely to be consensus outliers. That's a good sanity check. In some cases, the connection is clear: a wine with way too much volatile acidity tastes vinegary, gets rated poorly, and gets flagged as an outlier. The chemistry drives both outcomes. But we can't assume this explains every case. There might be patterns we're not seeing, or confounding factors we haven't accounted for.

# Making Three Decisions That Shaped Our Results

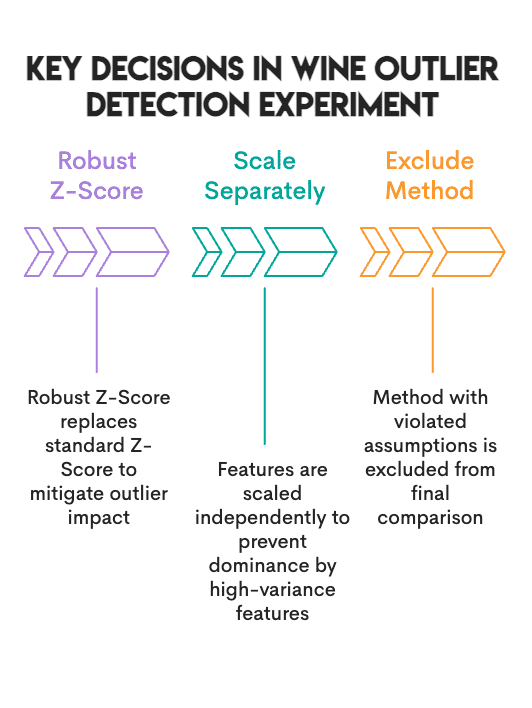

// 1. Using Robust Z-Score Rather Than Standard Z-Score

A Standard Z-Score uses the mean and standard deviation of the data, both of which are pulled around by the very outliers we are trying to find. A Robust Z-Score instead uses the median and Median Absolute Deviation (MAD), both of which are far less sensitive to outliers.

As a result, the Standard Z-Score identified 0.8% of the data as outliers, while the Robust Z-Score identified 3.5%.

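```python
import numpy as np

# Robust Z-Score using median and MAD
median = np.median(data, axis=0)
mad = np.median(np.abs(data - median), axis=0)
# 0.6745 rescales MAD so the score is comparable to a Standard Z-Score under normality
robust_z = 0.6745 * (data - median) / mad
```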
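To see why the choice matters, here is a small sketch on synthetic data (not the wine measurements) in which a cluster of gross outliers inflates the mean and standard deviation enough to hide itself from the Standard Z-Score. The thresholds of 3 and 3.5 follow the ones mentioned in this article.

```python
import numpy as np

rng = np.random.default_rng(7)
# 100 typical values plus 15 gross outliers sitting at 10 (synthetic)
data = np.concatenate([rng.normal(0.0, 1.0, 100), np.full(15, 10.0)])

# Standard Z-Score: mean and standard deviation absorb the outliers
standard_z = (data - data.mean()) / data.std()

# Robust Z-Score: median and MAD barely move
median = np.median(data)
mad = np.median(np.abs(data - median))
robust_z = 0.6745 * (data - median) / mad

n_standard = int((np.abs(standard_z) > 3).sum())
n_robust = int((np.abs(robust_z) > 3.5).sum())
print(n_standard, n_robust)
```

The Standard Z-Score misses the planted values because they drag the mean toward themselves and inflate the standard deviation; the robust version still sees them.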

// 2. Scaling Red And White Wines Separately

Red and white wines have different baseline chemistry. In a combined dataset, a red wine whose chemistry is perfectly average for a red can still be flagged as an outlier, simply because its sulfur content differs from the combined red-and-white mean. To avoid this, we scaled each wine type separately using that type's median and interquartile range (IQR), then combined the two.

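```python
# Scale each wine type separately
import pandas as pd
from sklearn.preprocessing import RobustScaler

scaled_parts = []
for wine_type in ['red', 'white']:
    subset = df.loc[df['type'] == wine_type, features]
    # Fit a separate scaler (median and IQR) for this wine type
    scaled = RobustScaler().fit_transform(subset)
    scaled_parts.append(pd.DataFrame(scaled, index=subset.index, columns=features))

# Combine the two; rows keep their original index labels
scaled_df = pd.concat(scaled_parts)
```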

// 3. Knowing When To Exclude A Method

Elliptic Envelope assumes your data follows a multivariate normal distribution. Ours didn't. Six of eleven features had skewness above 1, and one feature hit 5.4. We kept the Elliptic Envelope in the comparison for completeness, but left it out of the consensus vote.
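A quick skewness check before fitting anything is cheap. The sketch below uses two synthetic columns (assumed for illustration; the wine features are not reproduced here) and the same "skewness above 1" rule of thumb.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(3)
demo = pd.DataFrame({
    "roughly_normal": rng.normal(size=5000),
    "right_skewed": rng.lognormal(mean=0.0, sigma=1.0, size=5000),
})

skewness = demo.skew()   # sample skewness per column
too_skewed = skewness[skewness.abs() > 1].index.tolist()

print(skewness.round(2))
print("Columns that break the Gaussian assumption:", too_skewed)
```

If most columns land in `too_skewed`, methods that assume a Gaussian shape deserve extra suspicion.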

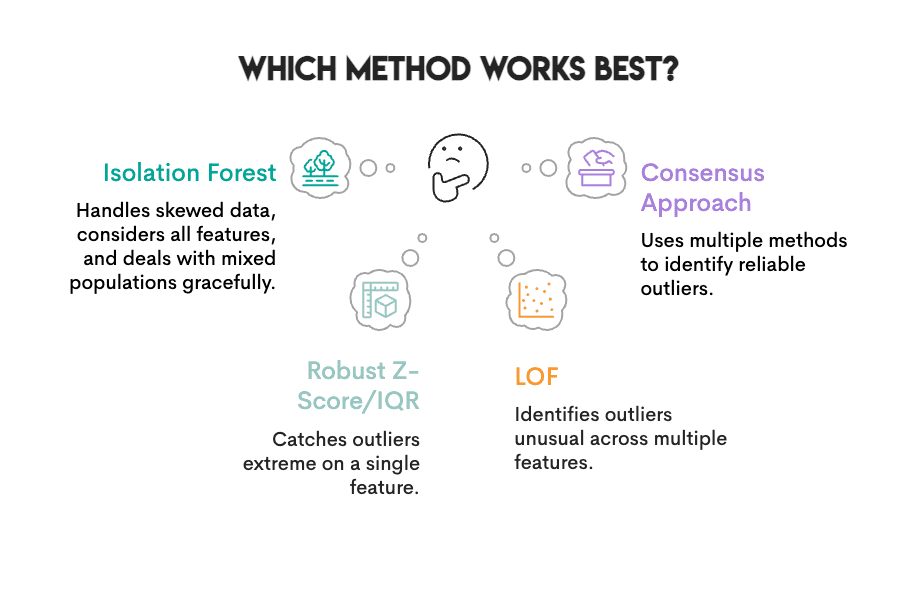

# Determining Which Method Performs Best For This Wine Dataset

Image by Author

Can we pick a "winner" given the characteristics of our data (heavy skewness, mixed population, no known ground truth)?

Robust Z-Score, IQR, Isolation Forest, and LOF all handle skewed data reasonably well. If forced to pick one, we'd go with Isolation Forest: no distribution assumptions, considers all features at once, and deals with mixed populations gracefully.

But no single method does everything:

- Isolation Forest can miss outliers that are only extreme on one feature (Z-Score/IQR catches those)

- Z-Score/IQR can miss outliers that are unusual across multiple features (multidimensional outliers)

The better approach: use multiple methods and trust the consensus. The 143 wines flagged by 3 or more methods are far more reliable than anything flagged by a single method alone.

Here's how we calculated consensus:

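```python
import numpy as np

# Count how many methods flagged each sample
# (cast to int first: adding boolean arrays gives a logical OR, not a count)
flags = np.column_stack([zscore_out, iqr_out, iforest_out, lof_out]).astype(int)
consensus = flags.sum(axis=1)

high_confidence = df[consensus >= 3]  # Identified by 3+ methods
```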

Without ground truth (as in most real-world projects), agreement between methods is the closest available proxy for confidence.

# Understanding What All This Means For Your Own Projects

Define your problem before picking your method. What kind of "unusual" are you actually looking for? Data entry errors look different from measurement anomalies, and both look different from genuine rare cases. The type of problem points to different methods.

Check your assumptions. If your data is heavily skewed, the Standard Z-Score and Elliptic Envelope will steer you wrong. Look at your distributions before committing to a method.

Use multiple methods. Samples flagged by three or more methods with different definitions of "outlier" are more trustworthy than samples flagged by just one.

Don't assume all outliers should be removed. An outlier could be an error. It could also be your most interesting data point. Domain knowledge makes that call, not algorithms.

# Concluding Remarks

The point here isn't that outlier detection is broken. It's that "outlier" means different things depending on who's asking. Z-Score and IQR catch values that are extreme on a single dimension. Isolation Forest and LOF find samples that stand out in their overall pattern. Elliptic Envelope works well when your data is actually Gaussian (ours wasn't).

Figure out what you're really looking for before you pick a method. And if you're not sure? Run multiple methods and go with the consensus.

# FAQs

// 1. Determining Which Technique I Should Start With

A good place to begin is with the Isolation Forest technique. It does not assume how your data is distributed and uses all of your features at the same time. However, if you want to identify extreme values for a particular measurement (such as very high blood pressure readings), then Z-Score or IQR may be more suitable for that.

// 2. Choosing a Contamination Rate For Scikit-learn Methods

It depends on the problem you are trying to solve. A commonly used value is 5% (or 0.05). But keep in mind that contamination is a quota. This means that 5% of your samples will be classified as outliers, regardless of whether there actually are 1% or 20% true outliers in your data. Use a contamination rate based on your knowledge of the proportion of outliers in your data.

// 3. Removing Outliers Before Splitting Train/Test Data

No. You should fit an outlier-detection model to your training dataset, and then apply the trained model to your testing dataset. If you do otherwise, your test data is influencing your preprocessing, which introduces leakage.
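A minimal sketch of that workflow with Isolation Forest, on synthetic data and with arbitrary settings chosen purely for illustration:

```python
import numpy as np
from sklearn.ensemble import IsolationForest
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 4))   # stand-in for real features

# Split first, so the test rows never influence the detector
X_train, X_test = train_test_split(X, test_size=0.2, random_state=0)

detector = IsolationForest(contamination=0.05, random_state=0)
detector.fit(X_train)            # learn what "normal" looks like from training rows only

train_flags = detector.predict(X_train) == -1
test_flags = detector.predict(X_test) == -1   # apply the fitted model, do not refit
print(train_flags.mean(), test_flags.mean())
```

Note that scikit-learn's `LocalOutlierFactor` only supports `predict` on new data when it is created with `novelty=True`, so the same pattern needs that flag for LOF.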

// 4. Handling Categorical Features

The techniques covered here work on numerical data. There are three possible alternatives for categorical features:

- encode your categorical variables and continue;

- use a technique designed for mixed-type data (e.g. HBOS);

- run outlier detection on numeric columns separately and use frequency-based methods for categorical ones.
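As one example of a frequency-based check for a categorical column, the sketch below flags values whose category is rare. The helper name, the 1% cutoff, and the data are all hypothetical; a sensible cutoff depends on your domain.

```python
import pandas as pd

def rare_category_flags(values: pd.Series, min_share: float = 0.01) -> pd.Series:
    """Flag rows whose category accounts for less than `min_share` of all rows."""
    shares = values.map(values.value_counts(normalize=True))
    return shares < min_share

# Made-up categorical column: two common categories and one singleton
grapes = pd.Series(["A"] * 120 + ["B"] * 79 + ["C"])
flags = rare_category_flags(grapes)
print(grapes[flags].tolist())
```

Only the single "C" row falls under the 1% cutoff here. As with numeric outliers, a rare category may be a typo or a perfectly valid rare case.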

// 5. Knowing If A Flagged Outlier Is An Error Or Just Unusual

You cannot determine from the algorithm alone when an identified outlier represents an error versus when it is simply unusual. It flags what's unusual, not what's wrong. For example, a wine that has an extremely high residual sugar content might be a data entry error, or it might be a dessert wine that is intended to be that sweet. Ultimately, only your domain expertise can provide an answer. If you're unsure, mark it for review rather than removing it automatically.

Nate Rosidi is a data scientist who also works in product strategy. He's an adjunct professor teaching analytics and the founder of StrataScratch, a platform helping data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.