Talking Machine – 3 Deep Learning Gurus Talk about History and Future of Machine Learning, part 1

A recent interview from the Talking Machine podcast with three deep learning experts. They talked about the neural network winter and the field's revival.

The Talking Machine is a series of podcasts about machine learning by Katherine Gorman, a journalist, and Ryan Adams, an Assistant Professor of Computer Science at Harvard. The most recent episodes are interviews with three pillars of the deep learning community, Geoffrey Hinton, Yoshua Bengio, and Yann LeCun, recorded at NIPS 2014, about the history and future of deep learning.

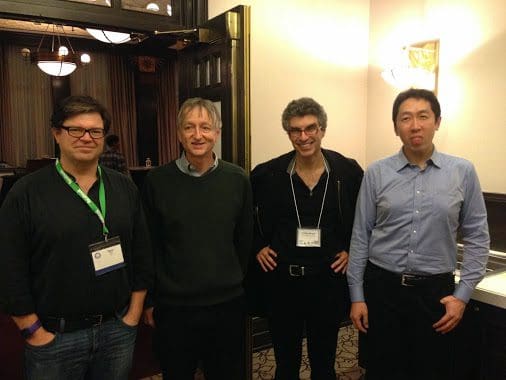

From left to right: Yann LeCun, Geoff Hinton, Yoshua Bengio, Andrew Ng, at NIPS 2014 (from Andrew Ng's Facebook page).

Neural Network – “The three of us all knew that it would be the ultimate answer.”

Although the idea of neural networks was proposed a long time ago, broad interest in them was not sparked until the 2000s.

In the 1980s, a number of groups, including Yann's, were working on the backpropagation algorithm. They had some impressive results on handwritten character recognition and speech recognition. “But it was a disappointment overall since we couldn’t learn lots of layers of features. The whole idea of deep learning is to learn layers of features,” Geoffrey said.

Although the handwritten character recognition system developed by Yann at the University of Toronto and later at AT&T worked well and was widely used, it was not enough to keep people interested. In the 1990s, other machine learning methods that were easier to apply did as well as or better than neural networks on many problems.

In the late 1990s and early 2000s, it was very difficult to do research on neural nets. Yoshua talked about his own experience. “In my own lab, I had to twist my students’ arms to do work in neural networks. My students were afraid of seeing their papers rejected, which did happen quite a bit.”

“We were struggling with so many methods to make neural networks better. All of us were convinced it was going to work in the end. It was just a question of time,” Geoffrey said.

Unsupervised Learning – "The future belongs to unsupervised learning."

The community got interested in neural nets again through unsupervised learning in the early 2000s. “All of us have been doing unsupervised learning since then. But it is true that the commercial success of deep learning has been mostly with supervised learning and backprop,” Yann said.

The first method that led to many breakthroughs was unsupervised learning of layers of features. “You learn one layer of features. Then you treat the learned features as data and learn another layer of features.” It started working by 2005, with better results on small datasets.
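To make the "learn one layer, then treat its features as data" recipe concrete, here is a minimal sketch in plain Python. The interview does not say which model was used for each layer, so the tiny tied-weight autoencoder below is an assumption chosen for brevity (Geoffrey's work at the time used Boltzmann machines). The names `AutoencoderLayer` and `train_stack`, the toy data, and the hyperparameters are all hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class AutoencoderLayer:
    """One layer of features: a tiny tied-weight autoencoder (an assumed stand-in)."""

    def __init__(self, n_in, n_hidden, rng):
        self.n_in, self.n_hidden = n_in, n_hidden
        self.W = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)]
                  for _ in range(n_hidden)]
        self.b = [0.0] * n_hidden  # encoder bias
        self.c = [0.0] * n_in      # decoder bias

    def encode(self, x):
        return [sigmoid(sum(w * xi for w, xi in zip(row, x)) + bj)
                for row, bj in zip(self.W, self.b)]

    def decode(self, h):
        return [sigmoid(sum(self.W[j][i] * h[j] for j in range(self.n_hidden))
                        + self.c[i])
                for i in range(self.n_in)]

    def train_step(self, x, lr):
        """One SGD step on the squared reconstruction error for a single example."""
        h = self.encode(x)
        r = self.decode(h)
        # Gradients with respect to the output and hidden pre-activations.
        d_out = [(ri - xi) * ri * (1.0 - ri) for ri, xi in zip(r, x)]
        d_hid = [sum(self.W[j][i] * d_out[i] for i in range(self.n_in))
                 * h[j] * (1.0 - h[j])
                 for j in range(self.n_hidden)]
        for j in range(self.n_hidden):
            for i in range(self.n_in):
                # Tied weights: decoder and encoder gradients add up.
                self.W[j][i] -= lr * (d_out[i] * h[j] + d_hid[j] * x[i])
            self.b[j] -= lr * d_hid[j]
        for i in range(self.n_in):
            self.c[i] -= lr * d_out[i]


def train_stack(data, layer_sizes, epochs=50, lr=0.5, seed=0):
    """Greedy layer-wise training: each layer learns from the previous layer's features."""
    rng = random.Random(seed)
    layers, current = [], data
    for n_hidden in layer_sizes:
        layer = AutoencoderLayer(len(current[0]), n_hidden, rng)
        for _ in range(epochs):
            for x in current:
                layer.train_step(x, lr)
        layers.append(layer)
        # The learned features become the "data" for the next layer.
        current = [layer.encode(x) for x in current]
    return layers, current


# Hypothetical toy data: four binary patterns of length 6.
toy_data = [
    [1, 1, 1, 0, 0, 0],
    [0, 0, 0, 1, 1, 1],
    [1, 1, 0, 0, 0, 0],
    [0, 0, 0, 0, 1, 1],
]
layers, features = train_stack(toy_data, layer_sizes=[4, 2])
```

Each layer is trained on its own without labels, and no layer is revisited once the next one starts training. In practice, a stack like this was typically used to initialize a network that was then fine-tuned with supervised backprop.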

However, the papers still could not convince the community, particularly in computer vision and speech recognition. Yann told the story from his perspective. “All three of us have the same philosophy about unsupervised learning, but we have different methods. Geoffrey was working on Boltzmann machines. Yoshua had a lot of ideas and tried everything. I was trying to tell the world that convnets (Convolutional Neural Networks) were working.”

“The results were impressive. However, the paper was rejected by a computer vision conference in 2011 because the reviewers didn’t know convnets, and they thought learning was not a good thing since we couldn’t understand what was going on inside the learned system. Feature learning is against the philosophy of computer vision, where a lot of people built their careers around the idea of building features.”

"In a workshop on the future of computer vision at MIT in 2011, I gave a talk on the topic ‘In five years, all of you will be learning your features’. I was wrong. It only took three years."

In 2012, Yann’s technique was applied in Geoffrey’s lab and the results were overwhelming. “Almost overnight, the senior computer vision researchers began to use deep learning. Now people are trying it on everything,” Geoffrey said.

Here is the second part of this blog.
