A Simple XGBoost Tutorial Using the Iris Dataset

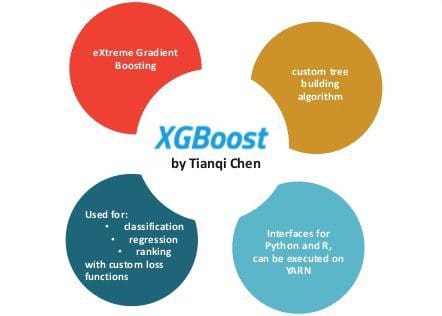

This is an overview of the XGBoost machine learning algorithm, which is fast and shows good results. This example uses multiclass prediction with the Iris dataset from Scikit-learn.

By Ieva Zarina, Software Developer, Nordigen.

I recently had the opportunity to start using the xgboost machine learning algorithm. It is fast and shows good results. Here I will use it for multiclass prediction with the iris dataset from scikit-learn.

The XGBoost algorithm (source).

Installing Anaconda and xgboost

To work with the data, I need to install various scientific libraries for Python. The best way I have found is to use Anaconda. It installs all the libs at once and helps to install new ones. You can download the installer for Windows, but if you want to install it on a Linux server, you can just copy-paste this into the terminal:

wget http://repo.continuum.io/archive/Anaconda2-4.0.0-Linux-x86_64.sh
bash Anaconda2-4.0.0-Linux-x86_64.sh -b -p $HOME/anaconda
echo 'export PATH="$HOME/anaconda/bin:$PATH"' >> ~/.bashrc
bash

After this, use conda to install pip which you will need for installing xgboost. It is important to install it using Anaconda (in Anaconda’s directory), so that pip installs other libs there as well:

conda install -y pip

Now, a very important step: install xgboost Python Package dependencies beforehand. I install these ones from experience:

sudo apt-get install -y make g++ build-essential gfortran libatlas-base-dev liblapacke-dev python-dev python-setuptools libsm6 libxrender1

I upgrade my python virtual environment to have no trouble with python versions:

pip install --upgrade virtualenv

And finally I can install xgboost with pip (keep fingers crossed):

pip install xgboost

This command installs the latest xgboost version, but if you want to use a previous one, just specify it with:

pip install xgboost==0.4a30

Now test whether everything went well. Type python in the terminal and try to import xgboost:

import xgboost as xgb

If you see no errors – perfect.
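If you prefer a check that reports all missing libraries at once instead of stopping at the first failed import, something like the following sketch may help. It assumes Python 3, and the helper name `is_installed` is made up for this illustration. It only tests whether a module can be located, not whether it actually works.

```python
import importlib.util

def is_installed(module_name):
    """Return True if `module_name` can be located on the current Python path."""
    return importlib.util.find_spec(module_name) is not None

# The libraries this tutorial relies on
for name in ("numpy", "sklearn", "xgboost"):
    status = "found" if is_installed(name) else "MISSING"
    print("{}: {}".format(name, status))
```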

Xgboost Demo with the Iris Dataset

Here I will use the Iris dataset to show a simple example of how to use Xgboost.

First you load the dataset from sklearn, where X will be the data, y – the class labels:

from sklearn import datasets
iris = datasets.load_iris()
X = iris.data
y = iris.target

Then you split the data into train and test sets with an 80/20 split:

from sklearn.model_selection import train_test_split
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)
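To make the idea of the split concrete, here is a rough pure-Python sketch of what a shuffled 80/20 split does. The function `simple_split` and the stand-in rows are hypothetical, and the sketch does not reproduce the exact shuffling of scikit-learn, so the rows that end up in each set will differ.

```python
import random

def simple_split(rows, labels, test_size=0.2, seed=42):
    """Shuffle the indices, then use the first `test_size` share as the test set."""
    indices = list(range(len(rows)))
    random.Random(seed).shuffle(indices)  # fixed seed keeps the split repeatable
    n_test = int(round(len(rows) * test_size))
    test_idx, train_idx = indices[:n_test], indices[n_test:]

    def pick(seq, idx):
        return [seq[i] for i in idx]

    return (pick(rows, train_idx), pick(rows, test_idx),
            pick(labels, train_idx), pick(labels, test_idx))

# 150 stand-in rows with 3 classes, mirroring the size of Iris
rows = [[float(i)] for i in range(150)]
labels = [i // 50 for i in range(150)]
X_tr, X_te, y_tr, y_te = simple_split(rows, labels)
print(len(X_tr), len(X_te))  # 120 30
```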

Next you need to create the Xgboost-specific DMatrix data format from the numpy array. Xgboost can work with numpy arrays directly, and it can also load data from svmlight files and other formats. Here is how to work with numpy arrays:

import xgboost as xgb
dtrain = xgb.DMatrix(X_train, label=y_train)
dtest = xgb.DMatrix(X_test, label=y_test)

If you want to use svmlight for less memory consumption, first dump the numpy array into svmlight format and then just pass the filename to DMatrix:

import xgboost as xgb
from sklearn.datasets import dump_svmlight_file
dump_svmlight_file(X_train, y_train, 'dtrain.svm', zero_based=True)
dump_svmlight_file(X_test, y_test, 'dtest.svm', zero_based=True)
dtrain_svm = xgb.DMatrix('dtrain.svm')
dtest_svm = xgb.DMatrix('dtest.svm')
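If you have not seen the svmlight format before, it is a plain text layout with one sample per line: the label first, then index:value pairs for the non-zero features. The sketch below only illustrates that layout with zero-based indices. The helper `to_svmlight_line` is hypothetical, and the number formatting is not guaranteed to match what dump_svmlight_file writes.

```python
def to_svmlight_line(label, features):
    """Format one sample as '<label> <index>:<value> ...', skipping zero values."""
    pairs = ["{}:{:g}".format(i, v) for i, v in enumerate(features) if v != 0]
    return " ".join([str(label)] + pairs)

# Illustrative rows: a label followed by four measurements
print(to_svmlight_line(0, [5.1, 3.5, 1.4, 0.2]))  # 0 0:5.1 1:3.5 2:1.4 3:0.2
print(to_svmlight_line(1, [6.0, 0.0, 4.5, 1.5]))  # 1 0:6 2:4.5 3:1.5
```

Skipping the zero entries is where the memory saving comes from on sparse data. Iris itself is dense, so the gain here is small.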

Before Xgboost can train, you need to set the parameters:

param = {
    'max_depth': 3,  # the maximum depth of each tree
    'eta': 0.3,  # the training step for each iteration
    'silent': 1,  # logging mode - quiet (newer releases use 'verbosity': 0 instead)
    'objective': 'multi:softprob',  # multiclass objective that outputs a probability per class
    'num_class': 3}  # the number of classes that exist in this dataset
num_round = 20  # the number of training iterations
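The 'multi:softprob' objective is the reason the model later returns one probability per class. Roughly speaking, each class gets a raw score, and a softmax turns those scores into probabilities that sum to 1. Here is a minimal sketch of that last step. The raw scores are made up for illustration and do not come from a trained model.

```python
import math

def softmax(scores):
    """Turn raw per-class scores into probabilities that sum to 1."""
    shift = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

raw_scores = [-1.2, 2.9, -0.8]  # made-up scores for classes 0, 1, 2
probs = softmax(raw_scores)
print([round(p, 4) for p in probs])
```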

Different datasets perform better with different parameters. The result can be really poor with one set of params and really good with another. You can look at this Kaggle script to see how to search for the best ones. As a starting point, try eta values of 0.1, 0.2 and 0.3, max_depth in the range of 2 to 10, and num_round of around a few hundred.
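One simple way to organise such a search is to build every combination of the suggested values up front. The sketch below only generates the candidate parameter dicts. The names `base` and `candidates` are made up here, and training and scoring each candidate (for example with xgb.train or xgb.cv) is left out.

```python
from itertools import product

etas = [0.1, 0.2, 0.3]
max_depths = range(2, 11)  # 2 to 10 inclusive

# Settings shared by every candidate
base = {'objective': 'multi:softprob', 'num_class': 3}

candidates = [dict(base, eta=eta, max_depth=depth)
              for eta, depth in product(etas, max_depths)]
print(len(candidates))  # 27
print(candidates[0])
```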

Train

Finally the training can begin. You just type:

bst = xgb.train(param, dtrain, num_round)
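To get a feeling for how eta and num_round interact, here is a deliberately idealised sketch. It assumes that every round could correct exactly a fraction eta of whatever error is left, which real trees do not do, so treat the numbers as intuition only. The function `remaining_error` is hypothetical.

```python
def remaining_error(eta, num_round, start_error=1.0):
    """Error left when every round corrects a fraction `eta` of what remains."""
    error = start_error
    for _ in range(num_round):
        error -= eta * error  # one shrunken step toward the target
    return error

for eta in (0.1, 0.3):
    print(eta, remaining_error(eta, num_round=20))
```

In this toy model a smaller eta needs more rounds to reach the same error, which is why a low eta is usually paired with a larger num_round.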

To see how the model looks you can also dump it in human readable form:

bst.dump_model('dump.raw.txt')

And it looks something like this (f0 to f3 are the four features):

booster[0]:
0:[f2<2.45] yes=1,no=2,missing=1
    1:leaf=0.426036
    2:leaf=-0.218845
booster[1]:
0:[f2<2.45] yes=1,no=2,missing=1
    1:leaf=-0.213018
    2:[f3<1.75] yes=3,no=4,missing=3
        3:[f2<4.95] yes=5,no=6,missing=5
            5:leaf=0.409091
            6:leaf=-9.75349e-009
        4:[f2<4.85] yes=7,no=8,missing=7
            7:leaf=-7.66345e-009
            8:leaf=-0.210219
....

You can see that each tree is no deeper than 3 levels as set in the params.
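To read the dump, start at node 0 and follow the yes branch when the condition holds and the no branch otherwise, until you reach a leaf. The sketch below hand-copies booster[1] from the dump above into a dict and routes two samples through it. The dict layout, the function `leaf_value` and the sample measurements are assumptions made for this illustration, and missing values are ignored.

```python
# node id -> (feature index, threshold, yes node, no node) for splits,
# node id -> leaf value for leaves; copied from booster[1] in the dump
TREE = {
    0: (2, 2.45, 1, 2),
    1: -0.213018,
    2: (3, 1.75, 3, 4),
    3: (2, 4.95, 5, 6),
    4: (2, 4.85, 7, 8),
    5: 0.409091,
    6: -9.75349e-09,
    7: -7.66345e-09,
    8: -0.210219,
}

def leaf_value(tree, sample):
    """Follow the splits from the root until a leaf is reached."""
    node = 0
    while isinstance(tree[node], tuple):
        feature, threshold, yes, no = tree[node]
        node = yes if sample[feature] < threshold else no
    return tree[node]

print(leaf_value(TREE, [5.1, 3.5, 1.4, 0.2]))  # -0.213018
print(leaf_value(TREE, [6.0, 2.9, 4.5, 1.5]))  # 0.409091
```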

Use the model to predict classes for the test set:

preds = bst.predict(dtest)

But the predictions look something like this:

[[ 0.00563804 0.97755206 0.01680986]
 [ 0.98254657 0.01395847 0.00349498]
 [ 0.0036375 0.00615226 0.99021029]
 [ 0.00564738 0.97917044 0.0151822 ]
 [ 0.00540075 0.93640935 0.0581899 ]
 ....

Here each column represents class number 0, 1, or 2. For each row, select the column with the highest probability:

import numpy as np
best_preds = np.asarray([np.argmax(line) for line in preds])

Now you get a nice list with predicted classes:

[1, 0, 2, 1, 1, ...]
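As a quick sanity check, the same selection can be done by hand on the five probability rows printed earlier, using plain Python lists instead of numpy. With a numpy array, np.argmax(preds, axis=1) should give the same result in one call.

```python
# The five rows shown in the prediction output
rows = [
    [0.00563804, 0.97755206, 0.01680986],
    [0.98254657, 0.01395847, 0.00349498],
    [0.0036375, 0.00615226, 0.99021029],
    [0.00564738, 0.97917044, 0.0151822],
    [0.00540075, 0.93640935, 0.0581899],
]

# For every row, pick the index of the largest probability
best = [max(range(len(row)), key=row.__getitem__) for row in rows]
print(best)  # [1, 0, 2, 1, 1]
```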

Determine the precision of this prediction:

from sklearn.metrics import precision_score
print(precision_score(y_test, best_preds, average='macro'))
# >> 1.0
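If you wonder what average='macro' means: precision is computed for each class separately (correct predictions of that class divided by all predictions of that class) and the per-class values are then averaged with equal weight. Here is a pure-Python sketch of that calculation. The function `macro_precision` is hypothetical, and scoring a class that is never predicted as 0.0 is an assumption of this sketch.

```python
def macro_precision(y_true, y_pred):
    """Average the per-class precision, giving every class the same weight."""
    classes = sorted(set(y_true) | set(y_pred))
    per_class = []
    for c in classes:
        # True labels of all samples that were predicted as class c
        predicted_c = [t for t, p in zip(y_true, y_pred) if p == c]
        if predicted_c:
            per_class.append(sum(t == c for t in predicted_c) / len(predicted_c))
        else:
            per_class.append(0.0)  # assumption: a never-predicted class scores 0
    return sum(per_class) / len(per_class)

print(macro_precision([0, 1, 2, 1], [0, 1, 2, 1]))  # 1.0
print(macro_precision([0, 1, 2, 1], [0, 1, 1, 1]))
```

A precision of 1.0 on a 30-sample test set is a nice result, but with so few samples it is worth confirming with cross-validation before trusting it.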

Perfect! Now save the model for later use:

import joblib
joblib.dump(bst, 'bst_model.pkl', compress=True)
# bst = joblib.load('bst_model.pkl')  # load it later

Now you have a working model saved for later use, and ready for more prediction.

The full code is also available on github.

Bio: Ieva Zarina is a Software Developer at Nordigen.

Original. Reposted with permission.
