What is an Ontology? The simplest definition you’ll find… or your money back*

This post takes the concept of an ontology and presents it in a clear and simple manner, devoid of the complexities that often surround such explanations.

Jo Stichbury, Grakn Labs.

This is a short blog post to introduce the concept of an ontology for those who are unfamiliar with the term, or who have previously encountered explanations that make little or no sense, as I have. I’m aiming to “democratise knowledge of this topic” as one of my colleagues put it.

Find your way out of the maze of words (image: public domain)

Having spent the last six months reading around this subject in my new role at GRAKN.AI, with numerous frustrated and circular Google searches, I think I’m in a good position to attempt a simple explanation. If, like me, you aren’t a philosopher, mathematician or hard-core computer science PhD, you may be put off by definitions such as this from Wikipedia:

“...an ontology is a formal naming and definition of the types, properties, and interrelationships of the entities that really or fundamentally exist for a particular domain of discourse. It is thus a practical application of philosophical ontology, with a taxonomy...”

Another example:

“An ontology is a formal, explicit specification of a shared conceptualization.”

Maybe it’s just me, but although I know the meaning of those words, I don’t really understand anything when they’re put together as above. Google isn’t massively helpful either:

To be fair, if you stick with it rather than run away screaming, the Wikipedia article I cited above continues to explain that an ontology is:

“...a model for describing the world that consists of a set of types, properties, and relationship types. There is also generally an expectation that the features of the model in an ontology should closely resemble the real world (related to the object)...”

This makes more sense to me, as I’m familiar with object-oriented C++, and it doesn’t sound that unlike it. I thought I’d illustrate the definition with an example using an ontology in GRAKN.AI to define objects, express their properties, and show how the objects relate to one another.

Still with me? I hope I’ve not slipped into the territory of confusing semantic terminology just yet (by the way, please see the end of this article for more examples of ontological explicative horrors).

The Grakn Knowledge Model

In Grakn, we use four types in an ontology:

- entity: Represents an object or thing, for example: person, man, woman.

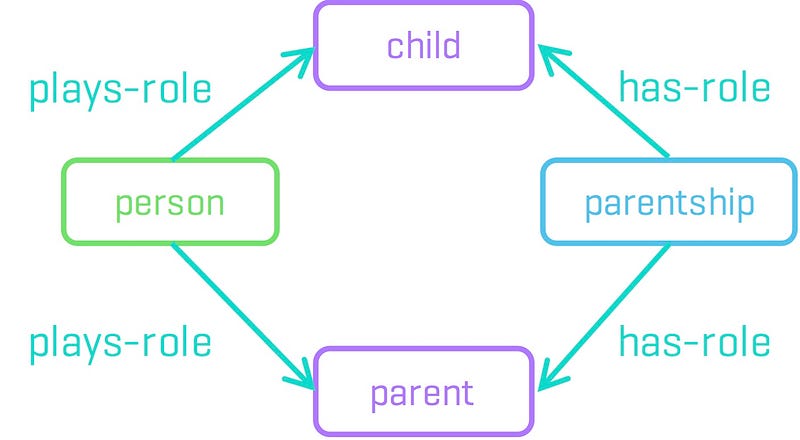

- relation: Represents relationships between things, for example, a parent-child relationship between two person entities.

- role: Describes the participation of entities in a relation. For example, in a marriage relation, there are roles of husband and wife, respectively.

- resource: Represents the properties associated with an entity or a relation, for example, a name or date. Resources consist of primitive types and values, such as strings or integers.

Grakn has its own declarative graph query language, called Graql, for expressing an ontology that can be loaded into a graph. Let’s look at a simple example from the Grakn documentation, which uses genealogy data from a family that lived in the 18th and 19th centuries. Here is the ontology:

    insert

    # Entities

    person sub entity
      plays parent
      plays child
      has identifier
      has firstname
      has surname
      has middlename
      has age-at-death
      has death-date
      has gender;

    # Resources

    identifier sub resource datatype string;
    firstname sub resource datatype string;
    surname sub resource datatype string;
    middlename sub resource datatype string;
    age-at-death sub resource datatype long;
    death-date sub resource datatype string;
    gender sub resource datatype string;
    birth-date sub resource datatype string;

    # Roles and Relations

    parentship sub relation
      relates parent
      relates child
      has birth-date;

    parent sub role;
    child sub role;

The sub keyword expresses sub-typing, so person sub entity is simply describing that a person is a sub-type of the built-in Graql entity type.

In the ontology above, there is one entity sub-type, or category of object, which is a person. The person can take one of two different roles in a parentship relation with another person entity: a parent or child role. The parentship relation has a single property associated with it (a date of birth), while the person entity has a number of properties, such as names, age and gender.
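For readers who, like me, think in object-oriented terms, the same structure can be loosely mirrored in plain Python. This is only an analogy, not Graql and not a Grakn API: the class names `Person` and `Parentship` are hypothetical, and the two sample people are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Person:
    """Loosely mirrors the `person` entity and the resources it `has`."""
    identifier: str
    firstname: Optional[str] = None
    surname: Optional[str] = None
    middlename: Optional[str] = None
    age_at_death: Optional[int] = None
    death_date: Optional[str] = None
    gender: Optional[str] = None


@dataclass
class Parentship:
    """Loosely mirrors the `parentship` relation: two role players and one resource."""
    parent: Person                    # the person playing the `parent` role
    child: Person                     # the person playing the `child` role
    birth_date: Optional[str] = None  # resource attached to the relation itself


# Invented sample data, purely for illustration.
mary = Person(identifier="p1", firstname="Mary", gender="female")
john = Person(identifier="p2", firstname="John", gender="male")
link = Parentship(parent=mary, child=john, birth_date="1801-05-12")
```

The point to notice is that the date of birth lives on the relation rather than on either person, just as `birth-date` is attached to `parentship` in the ontology.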

Of course, this is a contrived example, designed to show the basic elements of a Grakn ontology. It is possible to build a more extensive hierarchy through inheritance (entities to represent a man or a woman could inherit from an abstract person entity for example) and to introduce additional relations and roles (for example, marriage).

Why Do We Need an Ontology?

We need an ontology to allow Grakn to discover whether the data has any inconsistencies (also known as validation) and to extract implicit information from data (known as inference).

As an example of validation, consider a glitch in the data whereby a person was incorrectly named as a parent of themselves. In adding the data, Grakn would spot that a person entity was attempting to take both parent and child roles in a parentship relation, and would flag it up as an inconsistency.
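To make the idea concrete, here is a minimal sketch of that kind of consistency check in plain Python. It is not how Grakn implements validation; the function name `find_self_parentships` and the sample identifiers are assumptions made up for this illustration.

```python
def find_self_parentships(parentships):
    """Return every (parent_id, child_id) pair in which one person fills both roles."""
    return [(parent_id, child_id)
            for parent_id, child_id in parentships
            if parent_id == child_id]


# Invented records: each tuple is (parent identifier, child identifier).
records = [("p1", "p2"), ("p3", "p3"), ("p2", "p4")]

inconsistent = find_self_parentships(records)
print(inconsistent)  # [('p3', 'p3')]
```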

Graql uses machine reasoning to perform inference over data types and relation types, to discover implicit associations. A nice example is to use the gender of a person to infer more specific details of their role in a parentship relation (whether they are the mother or father, or daughter or son).

We can extend the ontology we defined above to show those additional roles:

    person
      plays son
      plays daughter
      plays mother
      plays father;

    parentship sub relation
      relates mother
      relates father
      relates son
      relates daughter;

    mother sub parent;
    father sub parent;
    son sub child;
    daughter sub child;

We also need to provide Graql with some “rules” to follow to infer these new roles in the parentship. I won’t show them in Graql, as it isn’t particularly readable, and the object here is to illustrate what they do, rather than how they are written. In effect they say that, in a parentship relation:

- if the parent is male and the child is male, the roles are father and son.

- if the parent is female and the child is male, the roles are mother and son.

- if the parent is male and the child is female, the roles are father and daughter.

- if the parent is female and the child is female, the roles are mother and daughter.
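The four rules above can be sketched as a simple lookup in plain Python. This is an illustration of what the rules do, not Graql rule syntax; the names `SPECIFIC_ROLES` and `infer_roles` are hypothetical, and falling back to the generic roles when a gender is unknown is my own assumption.

```python
# Maps (parent gender, child gender) to the more specific pair of roles.
SPECIFIC_ROLES = {
    ("male", "male"): ("father", "son"),
    ("female", "male"): ("mother", "son"),
    ("male", "female"): ("father", "daughter"),
    ("female", "female"): ("mother", "daughter"),
}


def infer_roles(parent_gender, child_gender):
    """Return the specific (parent role, child role) pair for a parentship.

    Falls back to the generic ("parent", "child") roles when either
    gender is missing or unrecognised (an assumption for this sketch).
    """
    return SPECIFIC_ROLES.get((parent_gender, child_gender), ("parent", "child"))


print(infer_roles("female", "male"))  # ('mother', 'son')
print(infer_roles(None, "female"))    # ('parent', 'child')
```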

The rules are applied by Graql across the dataset to build new information from what is already contained in the data.

To find out more about how to work with the Grakn knowledge model, we recommend you take a look at our documentation: in particular the Quickstart Tutorial and Knowledge Model guide. If you’re stuck or need to talk to us, please join our growing Grakn Community.

*Money back guarantee

I hope this post has made a hard-to-describe topic simpler. As the article is provided for free, I’m sorry that I can’t offer you a refund if I failed. But let me know.

I’d like to be clear that I mean no disrespect to the original authors of some of the above terrifying explanations, who are experts in their fields and writing for the cognoscenti. Just for entertainment, here are a few other amazingly opaque definitions. Please hit us up in the comments or tweet @GraknLabs if we haven’t included your personal favourite...

Gruber 2008: “ …an ontology defines a set of representational primitives with which to model a domain of knowledge or discourse.”

Gene Ontology Consortium: “Ontologies are ‘specifications of a relational vocabulary’. In other words they are sets of defined terms like the sort that you would find in a dictionary, but the terms are given hierarchical relationships to one another. The terms in a given vocabulary are likely to be restricted to those used in a particular field or domain, and in the case of GO, the terms are all biological.”

Jo Stichbury is a Technical Author at Grakn Labs. Former Head of Technical Publications / Communications at Nokia and Symbian. PhD from the University of Cambridge. Interests include data science & machine learning, cats, cakes, driverless cars & Manchester City.

GRAKN.AI is an open source distributed knowledge graph platform to power the next generation of intelligent applications. Using the power of machine reasoning, we provide a platform to help manage and make sense of highly interconnected big data. Grakn performs machine reasoning through Graql, a graph query language capable of reasoning and graph analytics.

Original. Reposted with permission.
