Summary of Unintuitive Properties of Neural Networks

Neural networks work really well on many problems, including language, image and speech recognition. However understanding how they work is not simple, and here is a summary of unusual and counter intuitive properties they have.

Neural network are powerful learning models especially deep learning networks on visual and speech recognition problems. This may result from their capacity of expressing arbitrary computation. However, it is still hard to fully understand their properties, thus, how they made the final decision after a sequence of decisions in a dynamic environment. Inspired by the notes by Hugo Larochelle, we summarize here several unintuitive properties of neural networks:

It is a “black-box” problem

Screenshot of Deep visualisation toolkit

Since we have many layers engaged in such complicated decision making process, we don't really understand how they think. In spite of having made a lot of efforts (e.g., a researcher created a popular toolkit called Deep Visualization Toolbox) to capture step by step how a neural network get trained, what we can see inside these layers is still very intricate.

They can make dumb errors

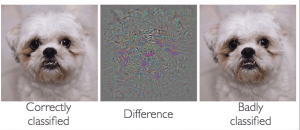

A group including researchers from Google Research and New York University found that there have been adversarial negatives appears to be contradict with the ability of high generalization performance of the network. It is desirable to know if the network can generalize well, how can it be confused by these adversarial examples.

Adversarial examples of neural networks

They are strangely non-convex

When optimising the neural network, the cost function can have a number of local maxima and minima. This means that it is in general neither convex nor concave. This problem has been demonstrated by a research team when they proposed a method of identifying and attacking the saddle point problem in high-dimensional non-convex optimisation. However, according to a research group affiliated with Google and Stanford University, they introduced a simple scheme to look for evidence that neural networks are overcoming local optima. and found that from initialisation to solution, a variety of modern neural networks never encounter any significant obstacles. Their experiments were intended to answer questions: Do neural networks enter and escape a series of local minima? Do they move at varying speed as they approach and then pass a variety of saddle points?. Their evidence strongly supported the answer is No.

The cost function of neural network is non-convex.

They work best when badly trained

A flat minimum that is a large connected region in weight-space where the error remain approximately unchanged can be shown to correspond to low expected overfitting. In an application to stock market prediction, the network with flat minimum search algorithm was shown to outperform conventional back propagation and weight decay. Nevertheless, a recent report on large-batch training for deep learning found that using large batch sizes tends to find sharped minima and generalize worse. In other words, generalization make more senses if taking the training algorithm into account.

They can easily memorise and be compressed

In a recent work at ICLR 2017, a simple experimental framework is used to define a notion of effective capacity of learning models. The work suggests that the effective capacity of several state-of-the-art neural network architectures is “large enough to shatter the training data”, thus, the models are rich enough to memorise the training data. For a deep acoustic model used by Android voice search, a Google research team showed that nearly all of the improvement by training an ensemble of deep neural nets can be distilled into a single neural net of the same size which is much easier to deploy.

They are influenced by initialisation/first examples

On visual and language data sets, Deep Belief Networks that show impressive performance involve an unsupervised learning phase (pre-training component) before usual supervised learning models. In an experiment to answer how the pre-training work, it was empirically shown the influence of pre-training in terms of model capacity, training example number, and architecture depth.

Yet they forget what they learned

It is important and meaningful if the learning can work well in a sequential manner, e.g., being able to learn progressively and adaptively. However, so far, neural networks have not been capable of this desired property. They have typically been designed to learn multiple tasks only if the data is presented all at once. After learning a task, the acquired knowledge will be overwritten for a new task training. In cognitive science, it is called as ‘catastrophic forgetting’ and is a well-known limitation of neural networks.

Those are few examples of counter-intuitive properties of neural networks. If we have results and don't really understand why the model made that decision, it's hard to advance further in scientific research; especially it is just getting larger and more complex.