Today I Built a Neural Network During My Lunch Break with Keras

By Matthijs Cox, Nanotechnology Data Scientist

Being able to go from idea to result with the least possible delay is key to doing good research.

- Keras.io

So yesterday someone told me you can build a (deep) neural network in 15 minutes in Keras. Of course, I didn’t believe that at all. Last time I tried (maybe 2 years ago?) it was still quite some work, requiring thorough knowledge of programming and math. That was some dead serious craftsmanship.

So in the evening I spent some time studying the Keras documentation, and I must say it seemed easy enough. But surely I would run into some difficulties once I tried it out, right? Getting used to these packages can sometimes take months.

The next morning

So the next day I set out to play with Keras on my own data. First I refactored some code in our internal package for converting data to tabular form, which had been frustrating me for a while anyway. In the end, that and answering my e-mails and questions took most of my morning. After finishing that, I could easily export some of my data to CSV files, read them in with Pandas, and convert them to NumPy arrays. Ready to go.
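The export-and-load step is not shown in this post. A minimal sketch of what it might look like, assuming one CSV of predictors and one of targets, each with a header row and one sample per row. The column names and the inline CSV text are made up for illustration; in practice `pd.read_csv` would be given the paths of the exported files.

```python
import io

import numpy as np
import pandas as pd

# Hypothetical CSV contents; in practice these would be paths to exported files.
predictors_csv = io.StringIO("f0,f1,f2\n0.1,0.2,0.3\n0.4,0.5,0.6\n")
estimator_csv = io.StringIO("t0,t1\n1.0,2.0\n3.0,4.0\n")

# Read each table with pandas, then convert to float32 NumPy arrays,
# which is the default float type Keras works with.
predictors = pd.read_csv(predictors_csv).to_numpy(dtype=np.float32)
estimator = pd.read_csv(estimator_csv).to_numpy(dtype=np.float32)

print(predictors.shape, estimator.shape)
```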

The lunch break

Since this is all a hobby project, I partly sacrificed my lunch break for the model building. Keras and TensorFlow installed in no time, far easier than the last time I tried to install TensorFlow on a Windows laptop. Then I practically copy-pasted the code from the Keras documentation. I’m not even going to start a GitHub repository; this is all I did:

from keras.models import Sequential

from keras.layers import Dense

import numpy as np

model = Sequential()

model.add(Dense(units=64, activation='relu', input_dim=1424))

model.add(Dense(units=2696))

model.compile(loss='mse', optimizer='adam')

model.fit(predictors[0:80,], estimator[0:80,],

validation_data=(predictors[80:,], estimator[80:,]),

epochs=80, batch_size=32)

np.savetxt("keras_fit.csv", model.predict(data), delimiter=",")

What’s this? I’m building a model, slapping on some dense layers, compiling it, fitting the data, and doing the prediction. All in fewer than 10 lines of code. I’m not doing any hyperparameter optimization or smart layer architecture today. But I must say: phew, that was easy!
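The `fit` call above validates on the tail of the arrays, which only gives a fair estimate if the rows are in no particular order. A minimal sketch of a shuffled hold-out split in NumPy, assuming 100 samples (the row count is not stated in this post) and random stand-in data with the 1424 input and 2696 output columns from the model definition. `shuffled_split` is a hypothetical helper, not part of Keras.

```python
import numpy as np


def shuffled_split(x, y, n_train, seed=0):
    """Shuffle the rows of x and y together, then split into train and validation parts."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(x))
    train_idx, val_idx = order[:n_train], order[n_train:]
    return (x[train_idx], y[train_idx]), (x[val_idx], y[val_idx])


# Random stand-in data; the real arrays would come from the exported CSV files.
rng = np.random.default_rng(42)
predictors = rng.normal(size=(100, 1424)).astype(np.float32)
estimator = rng.normal(size=(100, 2696)).astype(np.float32)

(x_train, y_train), (x_val, y_val) = shuffled_split(predictors, estimator, n_train=80)
print(x_train.shape, y_train.shape, x_val.shape, y_val.shape)
```

The resulting pairs could then be passed to `model.fit(x_train, y_train, validation_data=(x_val, y_val), ...)` in place of the slices.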

The afternoon

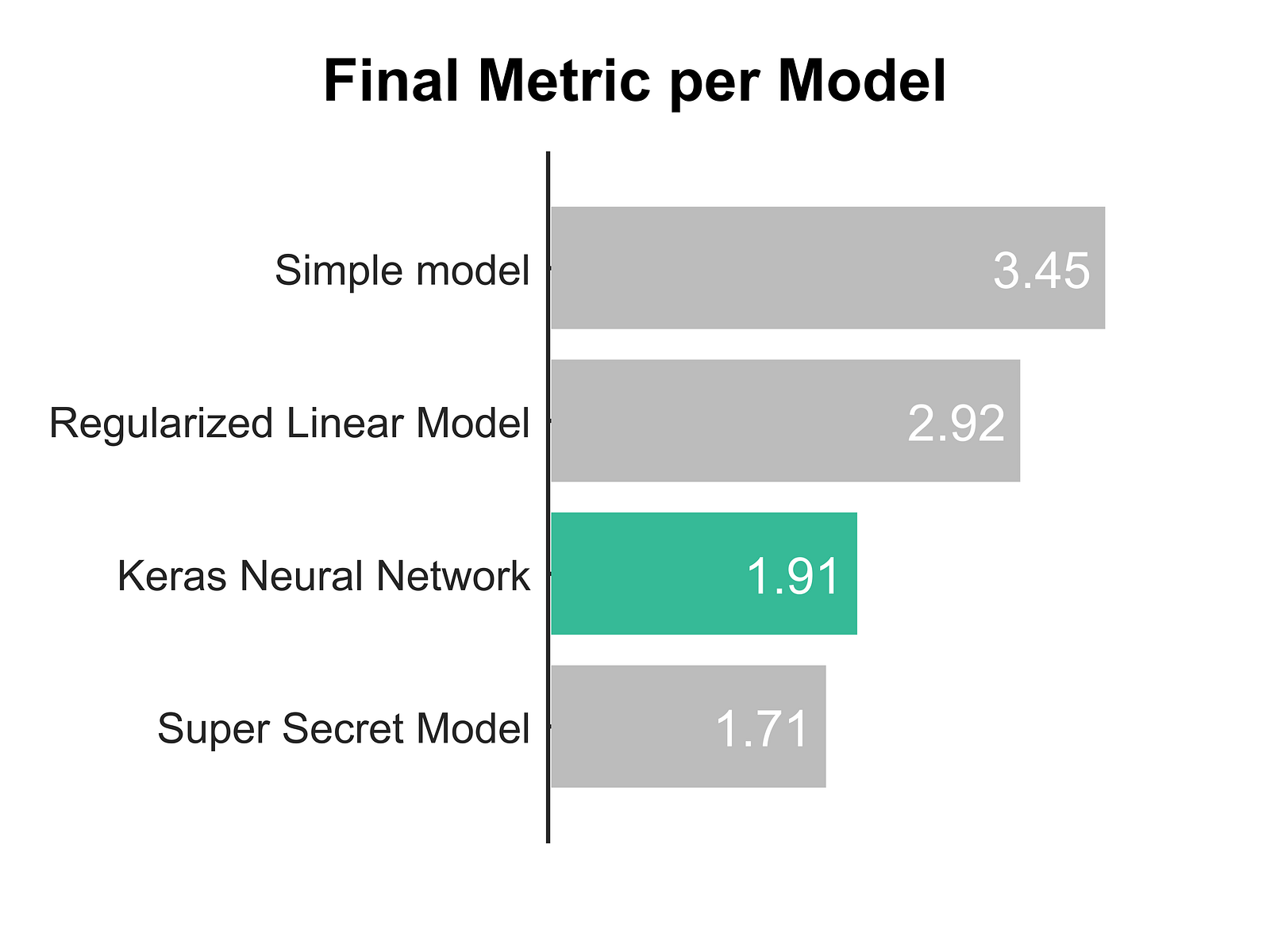

Now I’m very curious about the actual performance. So I just have to test it against some benchmarks. Don’t tell my managers I’m spending my time on this though! (Just kidding, they encourage some exploring and learning.) So I’m loading the data back into my own testing framework, and running a few other algorithms. Here are the results for my final performance metrics.

It’s embarrassingly good for less than an hour of model building. The super secret model we’ve been working on for 1.5 years still outperforms it (thankfully). On top of that, the big downside of any neural network is of course that it’s a complete black box as to what it actually learned, whereas our secret model uses pattern recognition that we humans can diagnose later on.
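The testing framework and the metrics behind the figure are not shown in this post. As a rough illustration only, the sketch below reads predictions back from a CSV file like the `keras_fit.csv` written earlier and compares their mean squared error with that of a trivial baseline that always predicts the column means. The data is random, and MSE is chosen simply because the model was compiled with `loss='mse'`; the actual metrics used here may well differ.

```python
import os
import tempfile

import numpy as np


def mse(y_true, y_pred):
    """Mean squared error over all samples and output columns."""
    return float(np.mean((y_true - y_pred) ** 2))


# Random stand-in targets and slightly noisy stand-in "model" predictions.
rng = np.random.default_rng(0)
y_true = rng.normal(size=(20, 5))
y_model = y_true + rng.normal(scale=0.1, size=y_true.shape)

# Round-trip the predictions through a CSV file, as done above with np.savetxt.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "keras_fit.csv")
    np.savetxt(path, y_model, delimiter=",")
    y_loaded = np.loadtxt(path, delimiter=",")

# Baseline: predict each output column's mean for every sample.
# (Computed from the same targets for brevity; normally it would come from training data.)
y_baseline = np.tile(y_true.mean(axis=0), (len(y_true), 1))

print("model MSE:   ", mse(y_true, y_loaded))
print("baseline MSE:", mse(y_true, y_baseline))
```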

The conclusion

So this has also been my fastest article ever, written entirely in an enthusiastic state of mind. Now I’m spending the last minutes of my day writing it to give a big round of applause to whoever made Keras. Here is my conclusion:

- Keras API: Awesome!

- Keras Documentation: Awesome!

- Keras Results: Awesome!

I would certainly advise anyone who’s thinking of doing some deep/machine learning to start with Keras. Hitting the ground running is a lot of fun, and you can learn and fine-tune the details later on.

Bio: Matthijs Cox is a Nanotechnology Data Scientist, Proud Father and Husband, Graphic Designer and Writer for Fun.

Original. Reposted with permission.
