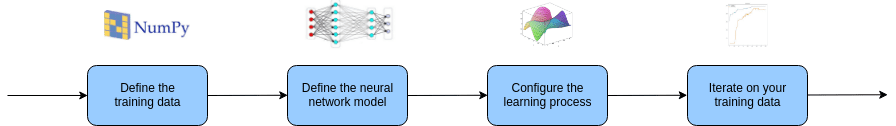

The Keras 4 Step Workflow

The Keras 4 Step Workflow

In his book "Deep Learning with Python," Francois Chollet outlines a process for developing neural networks with Keras in 4 steps. Let's take a look at this process with a simple example.

Francois Chollet, in his book "Deep Learning with Python," outlines early on an overview for developing neural networks with Keras. Generalizing from a simple MNIST example earlier in the book, Chollet simplifies the network building process, as relates directly to Keras, to 4 main steps.

This is not a machine learning workflow, nor is it a complete framework for approaching a problem to solve with deep learning. These 4 steps pertain solely to the portion of your overall neural network machine learning workflow where Keras comes into play. These steps are as follows:

- Define the training data

- Define a neural network model

- Configure the learning process

- Train the model

While Chollet then spends the rest of his book sufficiently filling in the necessary details to utilize it, let's take a preliminary look at the workflow by way of an example.

1. Define the training data

This first step is straightforward: you must define your input and target tensors. The more difficult data-related aspect -- which falls outside of the Keras-specific workflow -- is actually finding or curating, and then cleaning and otherwise preprocessing some data, which is a concern for any machine learning task. This is the one step of the model which does not generally concern tuning model hyperparameters.

While our contrived example randomly generates some data to use, it captures the singular aspect of this step: define your input (X_train) and target (y_train) tensors.

# Define the training data import numpy as np X_train = np.random.random((5000, 32)) y_train = np.random.random((5000, 5))

2. Define a neural network model

Keras has 2 ways to define a neural network: the Sequential model class and the Functional API. Both share the goal of defining a neural network, but take different approach.

The Sequential class is used to define a linear stack of network layers which then, collectively, constitute a model. In our example below, we will use the Sequential constructor to create a model, which will then have layers added to it using the add() method.

The alternative manner for creating a model is via the Functional API. As opposed to the Sequential model's limitation of defining a network solely constructed of layers in a linear stack, the Functional API provides the flexibility required for more complex models. This complexity is best exemplified in the use cases of multi-input models, multi-output models, and the definition of graph-like models.

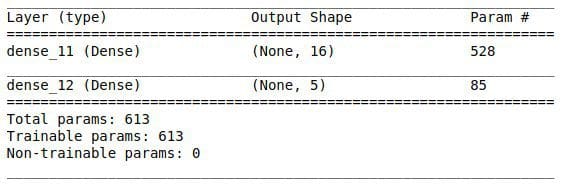

The code in our example uses the Sequential class. It first calls the constructor, after which calls are made to the add() method to add layers to the model. The first such call adds a layer of type Dense ("Just your regular densely-connected NN layer"). The Dense layer has an output of size 16, and an input of size INPUT_DIM, which is 32 in our case (check the above code snippet to confirm). Note that only the first layer of the model requires the input dimension to be explicitly stated; the following layers are able to infer from the previous linear stacked layer. Following standard practice, the rectified linear unit activation function is used for this layer.

The next line of code defines the next Dense layer of our model. Note that the input size is not specified here. The output size of 5 is specified, however, which matches our number of presumed classes in our toy multi-class classification problem (check the above code snippet, again, to confirm). Since it is a multi-class classification problem we are solving with our network, the activation function for this layer is set to softmax.

# Define the neural network model from keras import models from keras import layers INPUT_DIM = X_train.shape[1] model = models.Sequential() model.add(layers.Dense(16, activation='relu', input_dim=INPUT_DIM)) model.add(layers.Dense(5, activation='softmax'))

With those few lines, our Keras model is defined. The Sequential class' summary() method provides the following insight into our model:

3. Configure the learning process

With both the training data defined and model defined, it's time configure the learning process. This is accomplished with a call to the compile() method of the Sequential model class. Compilation requires 3 arguments: an optimizer, a loss function, and a list of metrics.

In our example, set up as a multi-class classification problem, we will use the Adam optimizer, the categorical crossentropy loss function, and include solely the accuracy metric.

# Configure the learning process from keras import optimizers from keras import metrics model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

The with the call made to compile() with these arguments, our model now has its learning process configured.

4. Train the model

At this point we have training data and a fully configured neural network to train with said data. All that is left is to pass the data to the model for the training process to commence, a process which is completed by iterating on the training data. Training begins by calling the fit() method.

At minimum, fit() requires 2 arguments: input and target tensors. If nothing more is provided, a single iteration of the training data is performed, which generally won't do you any good. Therefore, it would be more conventional to, at a practical minimum, define a pair of additional arguments: batch_size and epochs. Our example includes these 4 total arguments.

# Train the model model.fit(X_train, y_train, batch_size=128, epochs=10)

Note that the epoch accuracies are not particularly admirable, which makes sense given the random data which was used.

Hopefully this has shed some light on the manner in which Keras can be used to solve plain old classification problems by using a straightforward 4 step process prescribed by the library's author and outlined herein.

Related:

- Frameworks for Approaching the Machine Learning Process

- 7 Steps to Mastering Deep Learning with Keras

- Using Genetic Algorithm for Optimizing Recurrent Neural Networks

The Keras 4 Step Workflow

The Keras 4 Step Workflow