Data Science For Our Mental Development

In this blog, I aim to generalize how AI can help us with mental development in the future as well as discuss some of the present-day solutions.

By Syed Sadat Nazrul, Analytic Scientist

Emotion is a fundamental element of human society. If you think about it, everything worth analyzing is influenced by human behavior. Cyber attacks are often enabled by disgruntled employees who either neglect due diligence or engage in insider misuse. The stock market depends on the economic climate, which itself depends on the aggregate behavior of the masses. In the field of communication, it is often said that what we say accounts for only 7% of the message, while the remaining 93% is encoded in facial expressions and other non-verbal cues. Entire fields, such as psychology and behavioral economics, are dedicated to this subject. That being said, the ability to measure and analyze emotions effectively will enable us to improve society in remarkable ways. For example, Paul Ekman, a psychology professor at the University of California, San Francisco, describes in his book, Telling Lies: Clues to Deceit in the Marketplace, Politics, and Marriage, how reading facial expressions can help psychologists find signs of a potential suicide attempt even when the patient lies about such intentions. Sounds like a job for facial recognition models? What about neural mapping? Can we effectively map emotional states from neural impulses? What about improving cognitive abilities? Or even emotional intelligence and effective communication? There are plenty of problems in the world to solve using the vast array of unstructured data that is available to us.

That being said, as with every data science problem, we need to dig down to the core challenges of modeling emotions:

- How to frame the problem? What should our model classes be? What are we optimizing for?

- What data should we collect? What correlations are we looking for? Which aspects should we explore more deeply than others?

- Is there any issue in having access to such data? What are the societal and cultural views on having access to emotions? What privacy regulations need to be maintained? What about data security?

For more information on effectively designing AI products, read my UX Design Guide for Data Scientists and AI Products.

Healthcare

Patients lying to their doctors is not uncommon. The trust barrier for both men and women is fueled by embarrassment and too little face time with doctors. One digital health platform, ZocDoc, reports that nearly half (46%) of Americans have avoided telling their doctor about a health issue because they were embarrassed or afraid of being judged. Around a third say they withheld details because they couldn’t find the right opportunity or didn’t have enough time during the appointment (27%), or because the doctor didn’t ask any questions or ask specifically whether anything was bothering them (32%). One area where this matters greatly is suicide. According to the World Health Organization (WHO), as many as 800,000 people die by suicide every year, with 60 percent facing major depression. Even though depression places a patient at a higher risk of engaging in suicidal behavior, the difference between a depressed individual who is suicidal and one who is not is not easy to detect.

Deena Zaidi describes in her blog, Machine Learning uses facial expressions to distinguish between depression and suicidal behavior, how a suicide expert who conducted an in-depth assessment of risk factors would predict a patient’s future suicidal thoughts and behaviors with the same degree of accuracy as someone with no knowledge of the patient. This is no better than making decisions based on a coin flip. While reading facial expressions with supervised learning models is still under development, the field is already showing a lot of promise.

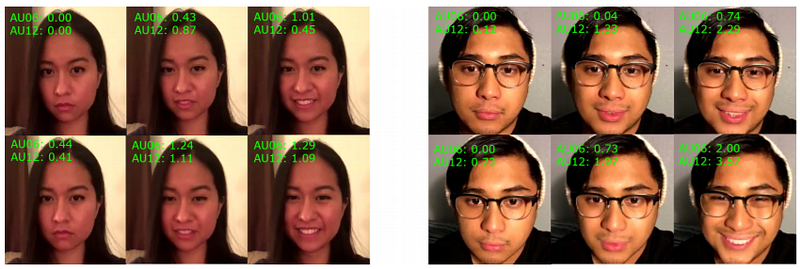

Duchenne (top) vs non-Duchenne (bottom) smiles analyzed from SVM results help detect suicidal risks (Source: Investigating Facial Behavior Indicators of Suicidal Ideation by Laksana et al.)

One report, authored in collaboration with scientists at the University of Southern California, Carnegie Mellon University, and Cincinnati Children’s Hospital Medical Center, investigates non-verbal facial behavior to detect suicidal risks and claims to have found a pattern that differentiates depressed and suicidal patients. Using a support vector machine (SVM), they found that facial behavior descriptors such as the percentage of smiles involving the contraction of the orbicularis oculi muscles (Duchenne smiles) differed significantly between the suicidal and non-suicidal groups.

Cognitive Abilities

Cognitive abilities are brain-based skills we need to carry out any task, from the simplest to the most complex. They have more to do with the mechanisms of how we learn, remember, problem-solve, and pay attention than with any actual knowledge. People are keen to improve cognition. Who wouldn’t want to remember names and faces better, to grasp difficult abstract ideas more quickly, and to “see connections” better?

Elevate app on the Apple Store

Currently, there are applications that help us train our cognitive abilities. One such example is Elevate, which consists of brain games played at the appropriate difficulty level in order to improve mental math, reading and critical thinking abilities. The value of optimal cognitive functioning is so obvious that elaborating on the point may be unnecessary. We are constantly pushing the boundaries of our five senses in order to grasp a deeper meaning of the world around us. For example, in the field of image recognition, AI can already “see” better than we can by observing variables far beyond the visible (RGB) spectrum, which in turn helps us get past our own visual limitations. However, why limit ourselves to a 2D screen to visualize 3D objects when we can go virtual?

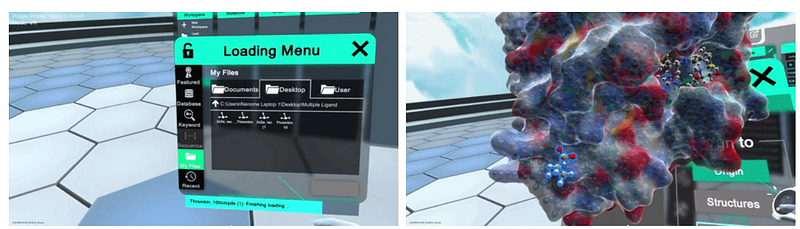

Nanome.AI develops Augmented Reality for analyzing abstract molecular structures

Augmented Reality allows us to feel as though we have teleported to another world. Computational materials science and biology are the fields that instantly come to my mind when thinking about this problem. As a former computational materials scientist, I know that visualizing complex molecular structures is a challenge for many researchers. Nanome.AI helps visualize these complex structures in Augmented Reality. Going further, there are already plenty of startups using Augmented Reality in the field of anatomy to train surgeons.

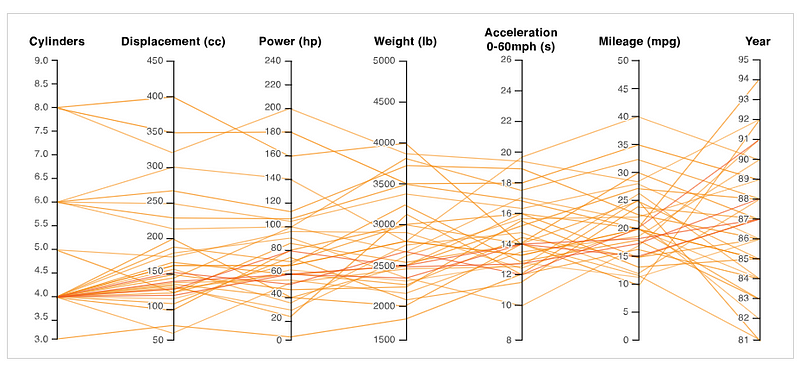

Parallel Coordinate plot visualizing 7-dimensional space

New idioms of data visualization and new dimensionality reduction algorithms are constantly being developed to help us better experience the world around us. For example, parallel coordinates allow us to visualize and filter high-dimensional data, while t-SNE is popular for visualizing complex data after projecting it down to 2D or 3D.
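
To make the parallel-coordinates idea a little more concrete, here is a rough sketch of the data preparation behind such a plot. Every dimension is rescaled to its own vertical axis, each record becomes a polyline across those axes, and "filtering" amounts to keeping the records that pass through a chosen range on one axis. The records, the column names, and the helper functions `to_polylines` and `brush` are invented for illustration, and the drawing itself is left to whichever plotting library you prefer.

```python
def to_polylines(records, columns):
    """Min-max scale each column to [0, 1] and return one polyline per record."""
    lows = {c: min(r[c] for r in records) for c in columns}
    highs = {c: max(r[c] for r in records) for c in columns}
    polylines = []
    for r in records:
        line = []
        for c in columns:
            span = highs[c] - lows[c]
            # A constant column has no spread, so place it mid-axis.
            line.append((r[c] - lows[c]) / span if span else 0.5)
        polylines.append(line)
    return polylines


def brush(records, column, low, high):
    """Keep only the records whose value on one axis lies in [low, high]."""
    return [r for r in records if low <= r[column] <= high]


# Hypothetical records, made up purely for this example.
records = [
    {"age": 25, "sleep_hours": 8, "stress": 2},
    {"age": 40, "sleep_hours": 6, "stress": 7},
    {"age": 55, "sleep_hours": 5, "stress": 9},
]
columns = ["age", "sleep_hours", "stress"]

polylines = to_polylines(records, columns)
print(polylines[0])  # heights of the first record on each axis

selected = brush(records, "stress", 5, 10)
print(len(selected))  # how many records pass through the brushed range
```

Scaling each axis independently is what lets dimensions with very different units share one picture; the trade-off is that absolute magnitudes can no longer be compared across axes.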

Emotional Intelligence

Emotional Intelligence is the capacity to be aware of, control, and express one’s emotions, and to handle interpersonal relationships judiciously and empathetically. All people experience emotions, but only a select few can accurately identify them as they occur. The cause could be either a lack of self-awareness or simply our limited emotional vocabulary. Many times, we don’t even know what we want. We strive to connect with those around us in a specific way or consume a particular product, just so we can feel a unique emotion that we fail to describe. We feel much more than just happiness, sadness, anger, anxiety or fear. Our emotions are complex combinations of all of the above. The ability to understand our own emotions as well as those of the people around us is vital for emotional well-being and for maintaining positive relationships.

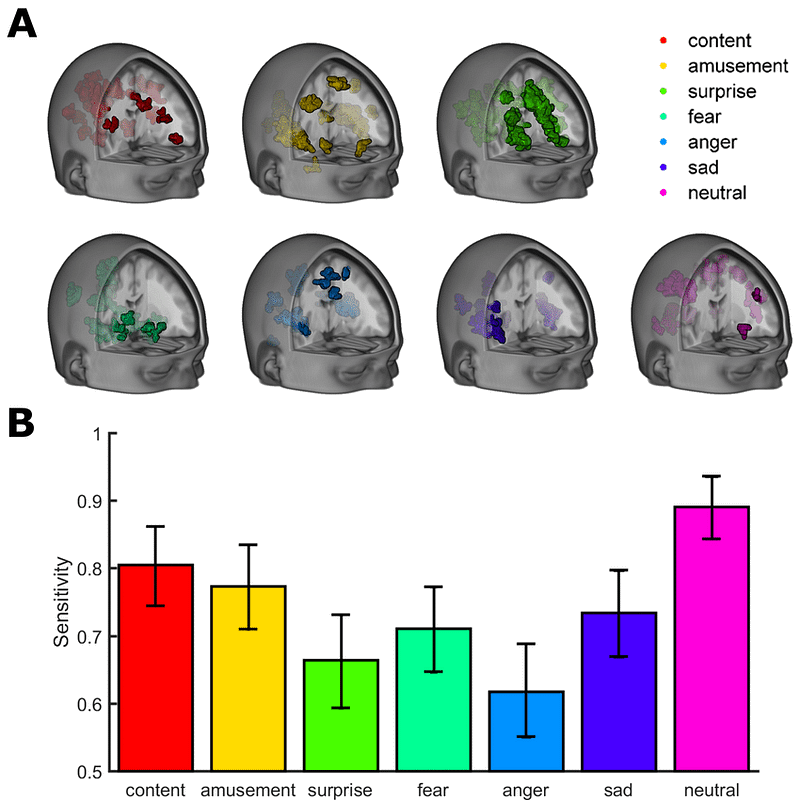

Distributed patterns of brain activity predict the experience of discrete emotions, detected using fMRI scans (top), with the sensitivity range shown at the bottom (Source: Decoding Spontaneous Emotional States in the Human Brain by Kragel et al.)

With innovations in neural mapping, we will better understand who we are as human beings as well as the myriad emotional states that we can attain. Supervised learning has already given us access to some common emotions. Our more complex emotional modes may be better understood by performing unsupervised learning on brain waves. For example, a simple outlier detection algorithm could perhaps reveal new emotional modes or significant emotional stressors to pay attention to. Such studies can potentially reveal innovative ways of improving our emotional intelligence.
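
As a minimal sketch of what a "simple outlier detection algorithm" could look like, the snippet below flags readings that sit far from the median, using the median absolute deviation (MAD). The signal values, the function name `find_outliers`, and the 3.5 cutoff are illustrative assumptions and are not taken from any study cited here. Real brain-wave recordings would need filtering and feature extraction before a check like this makes sense.

```python
from statistics import median


def find_outliers(values, threshold=3.5):
    """Return the indices of values whose modified z-score exceeds the threshold."""
    med = median(values)
    abs_dev = [abs(v - med) for v in values]
    mad = median(abs_dev)
    if mad == 0:
        return []  # no spread to measure against
    # 0.6745 rescales the MAD so the score is comparable to a standard
    # z-score when the data is roughly normal.
    return [i for i, d in enumerate(abs_dev) if 0.6745 * d / mad > threshold]


# Hypothetical band-power readings in arbitrary units; index 5 is an injected spike.
readings = [10.1, 9.8, 10.3, 10.0, 9.9, 18.5, 10.2, 10.1]
print(find_outliers(readings))  # → [5]
```

The median-based score is used here instead of the mean and standard deviation because a single large spike would otherwise inflate the very statistics it is being compared against.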

Demonstration of how reading microexpressions accurately can help in negotiations during business transactions (Source: TED Talk: How Body Language and Micro Expressions Predict Success, by Patryk & Kasia Wezowski)

The same kind of supervised image recognition models that help prevent suicide can also allow individuals to read the emotions of the people they speak to. For example, one TED Talk on micro expressions shows how the ideal price point can be estimated during a business negotiation simply by staying aware of certain facial expressions. The same TED Talk mentions that salespeople who are better at reading micro expressions sell 20% more than those who are not. Hence, this 20% advantage could probably be attained by investing in glasses that reveal the emotions of those we speak to.

Emotional intelligence includes both understanding our own emotions and being more sensitive towards those around us. With deeper studies into our emotional states, we can perhaps unlock new emotions that we have never experienced before. In the right hands, AI can act as an extension of ourselves to help us form meaningful bonds with the people we value in our lives.

Experience and Imagination

Imagination is the faculty or action of forming new ideas, or images, or concepts of external objects not present to the senses. The effect of AI on our experience and imagination would result from an aggregate of better cognitive abilities and emotional intelligence. Simply put, access to richer modes of cognitive abilities and emotional intelligence will allow us to experience ideas that cannot be easily conceived by the average minds of today.

Nick Bostrom, a philosophy professor at Oxford University, describes in his paper, Why I Want to be a Posthuman When I Grow Up, how access to new modes of experience will enhance our experience and imagination. Let’s assume that the modes of experience we have today are represented in space X. Ten years from now, let’s say that the modes of experience are represented in space Y. The space Y will be significantly bigger than space X. This futuristic space of Y may have access to new types of emotions other than our conventional happy, sad and mad. This new space of Y can even allow us to comprehend abstract thoughts that reflect what we wish to express more accurately. Each of us could potentially see the world in ways even Vincent van Gogh could never fathom in his wildest imagination!

This new space of Y can actually unlock a new world of possibilities that lie beyond our current imagination. The people of the future will think, feel and experience the world to a much richer degree than we can today.

Communication

When you lack emotional intelligence, it’s hard to understand how you come across to others. You feel misunderstood because you don’t deliver your message in a way that people can understand. Even with practice, emotionally intelligent people know that they don’t communicate every idea perfectly. AI has the potential to change that with enhanced self-expression.

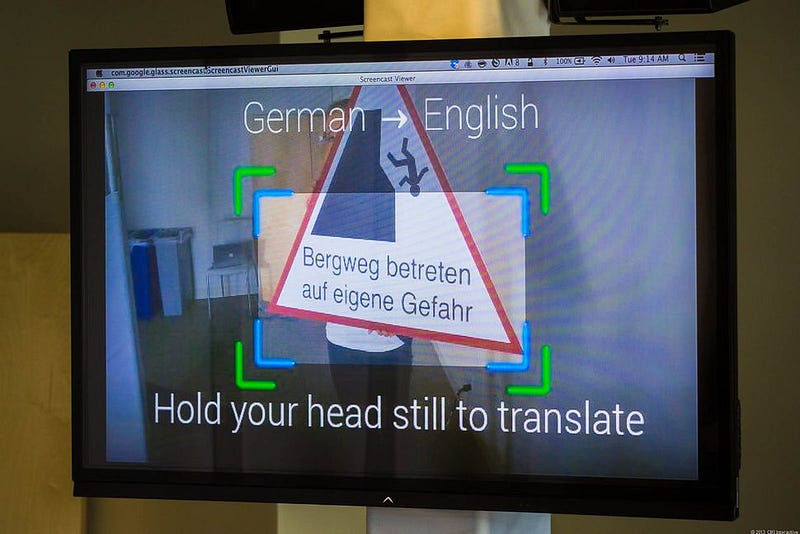

Google glasses translate German into English (Source: Google buys Word Lens maker to boost Translate)

Ten years ago, most of our communication was restricted to phones and emails. Today, we have access to video conferences, Augmented Reality and a wide array of applications on social media. As we enhance our cognitive abilities and emotional intelligence, we can express ourselves through idioms of far greater resolution and lower levels of abstraction. Google glasses can translate foreign texts on the fly. I have already mentioned the potential use of glasses for reading microexpressions in an earlier section. However, why limit our communication to just what we can “see”?

Controlling drones using electrical impulses picked up by headgear-based sensors (Source: Mind-controlled drone race: U. of Florida holds unique UAV competition)

Students from the University of Florida have achieved control of drones using nothing but the mind. We even have vibrating game controllers that take advantage of our sense of touch to make that Mario Kart game that much more realistic. Today’s Augmented Reality limits us to sight and hearing. In the future, Augmented Reality might actually allow us to smell, taste and touch our virtual environment. Along with access to our five senses, our emotional reaction to certain situations might be fine-tuned and optimized with the power of AI. This might mean sharing the fear of the main characters in Paranormal Activity, feeling the heartbreak of Emma or being excited about the adventures of Pokemon.

Some Potential Concerns

While I have described the benefits of using AI to read emotions and to help us understand ourselves and those around us, we must not forget the potential challenges:

- Data Security: According to the World Privacy Forum, the street value of stolen healthcare credentials is around $50, compared to a street value of about $1 for stolen credit card information or a social security number alone. Similarly, mental health information is sensitive personalized data that can be exploited by hackers. Just as hackers are out there trying to steal our credit card and health insurance information, access to emotional data can potentially pay well on the black market.

- Government Data Regulations: For any highly sensitive personalized data, different countries have different regulations to abide by. In the US, healthcare-related data must comply with HIPAA regulations, while data related to financial applications must comply with PCI standards. Looking beyond the US, there is the GDPR in the EU and the SAC in China.

- Ethical Boundaries: As with any new technology, society may not be pleased about having its emotions accessed. Let’s face facts. We are probably OK with a doctor checking our emotional data to improve our well-being, but not with an insurance company trying to charge us a higher premium. Along the same line of thinking, we have already seen suspicions of US vote-rigging through the manipulation of mass psychology. However, ethical norms rely heavily on what is considered “normal” in the given society. Something that is not acceptable today may be acceptable in the future. While some applications of data science in this field may seem uncomfortable to the general public, other applications, such as fraud analytics for protection against credit fraud and money laundering, as well as marketing analytics for recommender systems like those on Amazon and Netflix, are perfectly acceptable. When introducing a new idea, social acceptance will depend heavily on the type of problem the collected data is being used to solve. For more detail on developing AI products, check my blog on UX Design Guide for Data Scientists and AI Products.

Bio: Syed Sadat Nazrul is using Machine Learning to catch cyber and financial criminals by day... and writing cool blogs by night.

Original. Reposted with permission.
