The Pareto Principle for Data Scientists

The Pareto Principle for Data Scientists

In this article, I’ll share a few ways in which we, as data scientists, can use the power of the Pareto Principle to guide our day-to-day activities.

By Pradeep Gulipalli, Tiger Analytics

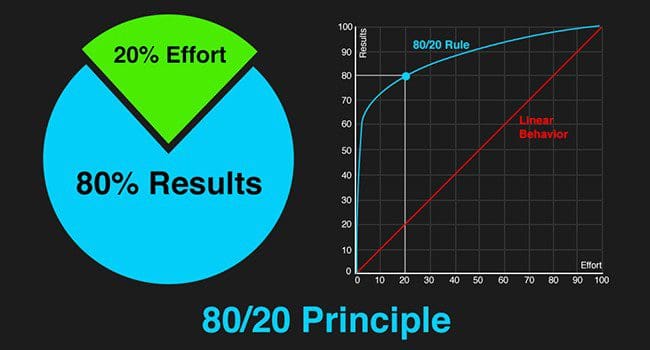

More than a century ago, Vifredo Pareto, a professor of Political Economy, published the results of his research on the distribution of wealth in society. The dramatic inequalities he observed, e.g. 20% of the people owned 80% of the wealth, surprised economists, sociologists, and political scientists. Over the last century, several pioneers in varied fields observed this disproportionate distribution in several situations, including business. The theory that a vital few inputs/causes (e.g. 20% of inputs) directly influence a significant majority of the outputs/effects (e.g. 80% of outputs) came to be known as the Pareto Principle – also referred to as the 80-20 rule.

The Pareto Principle is a very simple yet extremely powerful management tool. Business executives have long used it for strategic planning and decision making. Observations such as 20% of the stores generate 80% of the revenue, 20% of software bugs cause 80% of the system crashes, 20% of the product features drive 80% of the sales etc. are popular, and analytically savvy businesses try to find such Paretos in their worlds. This way they are able to plan and prioritize their actions. In fact, today, data science plays a big role in sifting through tons of complex data to help identify future Paretos.

While data science is helping predict new Paretos for businesses, data science can benefit from taking a look internally, searching for Paretos within. Exploiting these can make data science significantly more efficient and effective. In this article, I’ll share a few ways in which we, as data scientists, can use the power of the Pareto Principle to guide our day-to-day activities.

Project Prioritization

If you are a data science leader/manager, you’d inevitably need to help develop the analytics strategy for your organization. While different business leaders can share their needs, you have to articulate all these organizational (or business unit) needs and prioritize them into an analytic roadmap. A simple approach is to quantify the value of solving each analytic need, and sort them in the decreasing order of value. You’ll often notice that the top few problems/use-cases are disproportionately valuable (Pareto Principle), and should be prioritized above the others. In fact, a better approach would be to quantify the complexity of solving/implementing each problem/use-case, and prioritizing them based on a trade-off between value and complexity (e.g. by laying them on a plot with value on the y-axis and complexity on the x-axis).

Problem Scoping

Business problems tend to be vague and unstructured, and a data scientist’s job involves identifying the right scope. Scoping often requires keeping the focus on the most important aspects of the problem and deprioritizing aspects that are of less value. To start with, looking at the distribution of outputs/effects over inputs/causes will help us understand if high level Paretos exist in the problem space. Subsequently, we can choose to look at only certain inputs/outputs or causes/effects. For example, if 20% of stores generate 80% of sales, we can group rest of the stores into a cluster and do the analysis instead of evaluating them individually.

Scoping also involves evaluating risks – deeper evaluation will often tell us that the top items pose significantly higher risk while the bottom ones have a very remote chance of occurring (the Pareto Principle). Rather than address all risks, we can possibly prioritize our time and efforts towards a few of the key risks.

Data Planning

Complex business problems require data beyond what is readily available in analytic data marts. We need to request access, purchase, fetch, scrape, parse, process, and integrate data from internal/external sources. These come in different shapes, sizes, health, complexity, costs etc. Waiting for the entire data plan to fall in place can cause project delays that are not in our control. One simple approach could be to categorize these data needs based on their value to the end solution, e.g. Absolute must have, Good to have, and Optional (the Pareto Principle). This will help us focus on the Absolute must haves, and not get distracted or delayed by the Optional items. In addition to value, considering aspects of cost, time, and effort of data acquisition will help us better prioritize our data planning exercise.

Analysis

It’s anecdotally said that a craftsman completes 80% of their work using only 20% of their tools. This holds true for us data scientists as well. We tend to use few analyses and models for a significant part of our work (the Pareto Principle), while the other techniques get used much less frequently. Typical examples during exploratory analysis include, variable distributions, anomaly detection, missing value imputation, correlation matrices etc. Similarly, examples during the modeling phase include k-fold cross-validation, actual vs. predicted plots, misclassification tables, analyses for hyperparameter tuning etc. Building mini automations (e.g. libraries, code snippets, executables, UIs) to use/access/implement these analyses can bring significant efficiencies in the analytic process.

Modeling

During the modeling phase, it doesn’t take us long to arrive upon a reasonable working model early in the process. Majority of the accuracy gains have been made by now (the Pareto Principle). The rest of the process is about fine-tuning the models and pushing for the incremental accuracy gains. Sometimes, the incremental accuracy gains are required to make the solution viable for business. On other occasions, the model fine-tuning doesn’t add much value to the eventual insight/proposition. As data scientists, we need to be cognizant of these situations, so that we know where to draw the line accordingly.

Business Communication

Today’s data science ecosystem is very multi-disciplinary. Teams include business analysts, machine learning scientists, big data engineers, software developers and multiple business stakeholders. A key driver of success of a such teams is communication. As someone who is working hard, you might be tempted to communicate all the work – challenges, analyses, models, insights etc. However, in today’s world of information overload, taking such an approach will not help. We will need to realize that there are ‘useful many but a vital few’ (the Pareto Principle) and use this understanding to simplify the amount of information we communicate. Similarly, what we present and highlight needs to be customized based on the target audience (business stakeholders vs. data scientists)

The Pareto Principle is a powerful tool in our arsenal. Used the right way, it can help us declutter and optimize our activities.

Bio: Pradeep Gulipalli is a Co-founder of Tiger Analytics and currently heads the team in India. Over the last decade, he has worked with clients at Fortune 100 companies and start-ups alike to enable scientific decision making in their organizations. He has helped design analytics road-maps for complex business environments and architected numerous data science solution frameworks, which include pricing, forecasting, anomaly detection, personalization, optimization, behavioral simulations.

Bio: Pradeep Gulipalli is a Co-founder of Tiger Analytics and currently heads the team in India. Over the last decade, he has worked with clients at Fortune 100 companies and start-ups alike to enable scientific decision making in their organizations. He has helped design analytics road-maps for complex business environments and architected numerous data science solution frameworks, which include pricing, forecasting, anomaly detection, personalization, optimization, behavioral simulations.

Related:

- What no one will tell you about data science job applications

- How Do You Identify the Right Data Scientist for Your Team?

- Preparing for the Unexpected

The Pareto Principle for Data Scientists

The Pareto Principle for Data Scientists