Computational Linear Algebra for Coders: The Free Course

Interested in learning more about computational linear algebra? Check out this free course from fast.ai, structured with a top-down teaching method, and solidify your understanding of an important set of machine learning-related concepts.

You already know about fast.ai, so I won't bore you with yet another explanation. But while you may be familiar with fast.ai's fantastic deep learning courses, perhaps you don't know about their equally remarkable Computational Linear Algebra course.

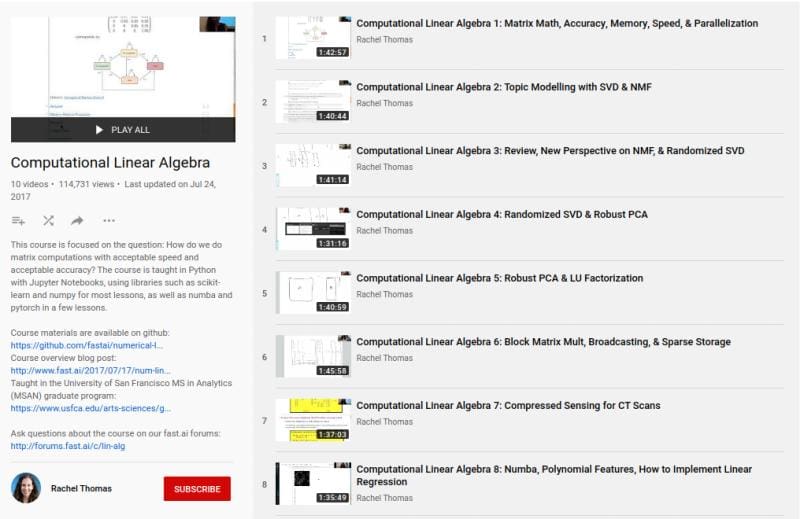

The course, by Rachel Thomas, co-founder of fast.ai, is equal parts Jupyter notebook-based textbook and series of accompanying lecture videos, both created by Rachel for the course. What exactly does it cover?

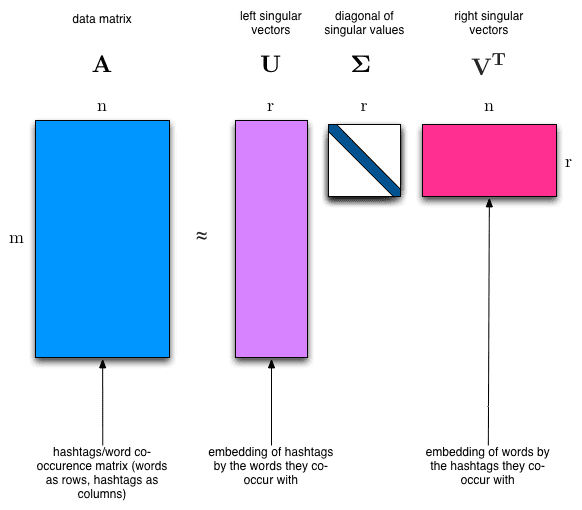

This course is focused on the question: How do we do matrix computations with acceptable speed and acceptable accuracy?

What does it take to understand and utilize computational linear algebra in the wild, and why would you bother? From the course textbook's Motivation section in Chapter 1:

> It's not just about knowing the contents of existing libraries, but knowing how they work too. That's because often you can make variations to an algorithm that aren't supported by your library, giving you the performance or accuracy that you need. In addition, this field is moving very quickly at the moment, particularly in areas related to deep learning, recommendation systems, approximate algorithms, and graph analytics, so you'll often find there's recent results that could make big differences in your project, but aren't in your library.
>
> Knowing how the algorithms really work helps to both debug and accelerate your solution.

Specific topics covered include, but are not limited to:

- Topic Modeling with NMF and SVD

- How to Implement Linear Regression

- Predicting Health Outcomes with Linear Regressions

- PageRank with Eigen Decompositions

- Implementing QR Factorization

I reached out to course creator Rachel Thomas, asking her to highlight the reasons she undertook the course project, and why others should consider taking the course. Here's what she said:

> Computational linear algebra is such a useful and practical field. I created this course to teach it with the fast.ai "top-down" philosophy of starting with practical, hands-on applications such as how to reconstruct an image from a CT scan using the angles of the x-rays and the readings. As the course goes on, we dig into more underlying details.
>
> This approach is very different from how most math courses operate: typically, math courses first introduce all the separate components you will be using, and then you gradually build them into more complex structures. The problems with this are that students often lose motivation, don’t have a sense of the “big picture”, and don’t know which pieces they’ll even end up needing.
>
> The course is also distinctive in using some very modern approaches, such as randomized SVD and libraries like numba and PyTorch.
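Randomized SVD, mentioned in the quote above, is a good example of trading a little accuracy for a lot of speed. The sketch below is a minimal NumPy illustration of the basic idea: project the matrix onto a random low-dimensional subspace, build an orthonormal basis for that subspace with QR, then take the exact SVD of the much smaller projected matrix. It is not the course's own code; the function name `randomized_svd` and the parameters `n_oversamples` and `n_iter` are hypothetical choices made for illustration.

```python
import numpy as np


def randomized_svd(A, k, n_oversamples=10, n_iter=4, seed=0):
    """Approximate the top-k singular triplets of A (illustrative sketch, not library code)."""
    rng = np.random.default_rng(seed)
    m, n = A.shape
    l = k + n_oversamples  # sample a few extra directions for robustness

    # Random test matrix: A @ Omega spans (approximately) the dominant range of A
    Omega = rng.standard_normal((n, l))
    Y = A @ Omega

    # Power iterations, re-orthonormalized with QR each time for numerical stability,
    # sharpen the subspace when singular values decay slowly
    for _ in range(n_iter):
        Q, _ = np.linalg.qr(Y)
        Q, _ = np.linalg.qr(A.T @ Q)
        Y = A @ Q

    # Orthonormal basis Q for the approximate range of A
    Q, _ = np.linalg.qr(Y)

    # Exact SVD of the small projected matrix B = Q^T A (shape l x n)
    B = Q.T @ A
    U_hat, s, Vt = np.linalg.svd(B, full_matrices=False)

    # Lift the left singular vectors back to the original m-dimensional space
    U = Q @ U_hat
    return U[:, :k], s[:k], Vt[:k, :]


# Example: a 200 x 100 matrix of exact rank 5
rng = np.random.default_rng(1)
A = rng.standard_normal((200, 5)) @ rng.standard_normal((5, 100))
U, s, Vt = randomized_svd(A, k=5)
print(np.allclose(s, np.linalg.svd(A, compute_uv=False)[:5]))
```

For a matrix of exact rank k, the random projection almost surely captures the whole range, so the result should agree with `np.linalg.svd` up to rounding. On real data, oversampling and a few power iterations cost some extra work in exchange for accuracy, which is exactly the speed-versus-accuracy question the course is built around.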

If you are interested in a deeper treatment of computational linear algebra, in solidifying your grasp of its constituent concepts, and in developing a better working understanding of what is going on when you import and use libraries that do the heavy lifting, Rachel Thomas' fast.ai course is well worth considering.

Related:

- Deep Learning for Coders with fastai and PyTorch: The Free eBook

- Free MIT Courses on Calculus: The Key to Understanding Deep Learning

- Free Mathematics Courses for Data Science & Machine Learning