Essential Math for Data Science: Basis and Change of Basis

In this article, you will learn what the basis of a vector space is, see that any vector of the space is a linear combination of the basis vectors, and see how to change the basis using change of basis matrices.

Basis and Change of Basis

One way to understand eigendecomposition is to consider it as a change of basis. In this article, you’ll learn what the basis of a vector space is.

You’ll see that any vector of the space is a linear combination of the basis vectors and that the numbers you see in vectors depend on the basis you choose.

Finally, you’ll see how to change the basis using change of basis matrices.

Change of basis is a nice way to think about matrix factorizations such as eigendecomposition or Singular Value Decomposition. As you can see in Chapter 09 of Essential Math for Data Science, with eigendecomposition, you choose the basis such that the new matrix (the one that is similar to the original matrix) becomes diagonal.

Definitions

The basis is a coordinate system used to describe vector spaces (sets of vectors). It is a reference that you use to associate numbers with geometric vectors.

To be considered as a basis, a set of vectors must:

- Be linearly independent.

- Span the space.

Every vector in the space is a unique linear combination of the basis vectors. The dimension of a space is defined as the number of vectors in a basis. For instance, there are two basis vectors in ℝ² (corresponding to the x- and y-axes of the Cartesian plane) and three in ℝ³.

As shown in section 7.4 of Essential Math for Data Science, if the number of vectors in a set is larger than the dimension of the space, they can’t be linearly independent. If a set contains fewer vectors than the number of dimensions, these vectors can’t span the whole space.
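The two conditions above can be checked numerically. The following is a minimal sketch, assuming the candidate vectors are stacked as the columns of a matrix; the helper name `is_basis` is hypothetical and not part of NumPy. It relies on the fact that n vectors form a basis of an n-dimensional space exactly when the matrix of columns has rank n.

```python
import numpy as np

def is_basis(vectors):
    """Return True if `vectors` (a list of n-dimensional vectors) form a basis of R^n."""
    # Stack the candidate vectors as the columns of a matrix
    M = np.column_stack(vectors)
    n_dims, n_vectors = M.shape
    # A basis of R^n needs exactly n vectors, and they must be linearly
    # independent, which means the matrix must have full rank n
    return bool(n_vectors == n_dims and np.linalg.matrix_rank(M) == n_dims)

print(is_basis([[1, 0], [0, 1]]))          # the standard basis of R^2
print(is_basis([[1, 0], [0, 1], [1, 1]]))  # too many vectors: not independent
print(is_basis([[1, 2, 3], [0, 1, 0]]))    # too few vectors: cannot span R^3
```

The first call prints `True` and the other two print `False`, which matches the counting argument: more vectors than dimensions can't be independent, and fewer can't span the space.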

As you saw, vectors can be represented as arrows going from the origin to a point in space. The coordinates of this point can be stored in a list. The geometric representation of a vector in the Cartesian plane implies that we take a reference: the directions given by the two axes x and y.

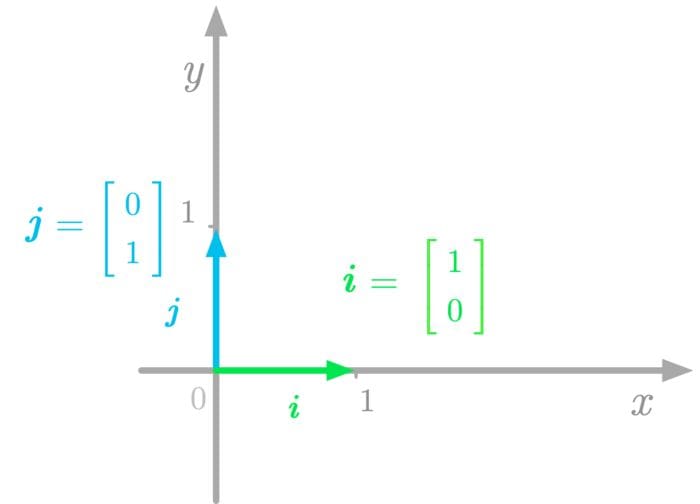

Basis vectors are the vectors corresponding to this reference. In the Cartesian plane, the basis vectors are orthogonal unit vectors (length of one), generally denoted as i and j.

Figure 1: The basis vectors in the Cartesian plane.

For instance, in Figure 1, the basis vectors i and j point in the direction of the x-axis and y-axis respectively. These vectors give the standard basis. If you put these basis vectors into a matrix, you have the following identity matrix (for more details about identity matrices, see 6.4.3 in Essential Math for Data Science):

$$
I_2 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}
$$

Thus, the columns of I₂ span ℝ². In the same way, the columns of I₃ span ℝ³ and so on.

Orthogonal nonzero vectors are always linearly independent, so they can form a basis. However, the converse is not necessarily true: non-orthogonal vectors can also be linearly independent and thus form a basis (but not a standard basis).
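As a quick illustration, here is a small sketch with two vectors that are not orthogonal but are still linearly independent. The vectors `a` and `b` are made-up values chosen for the example; they are not the ones drawn in the figures.

```python
import numpy as np

# Hypothetical vectors, chosen only for illustration
a = np.array([1.0, 0.0])
b = np.array([1.0, 1.0])

# The dot product is nonzero, so a and b are not orthogonal
dot = a @ b

# The matrix with a and b as columns has rank 2, so they are independent
rank = np.linalg.matrix_rank(np.column_stack([a, b]))

print(dot, rank)
```

Since the rank equals the dimension of the space, `a` and `b` form a basis of the plane even though they are not perpendicular.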

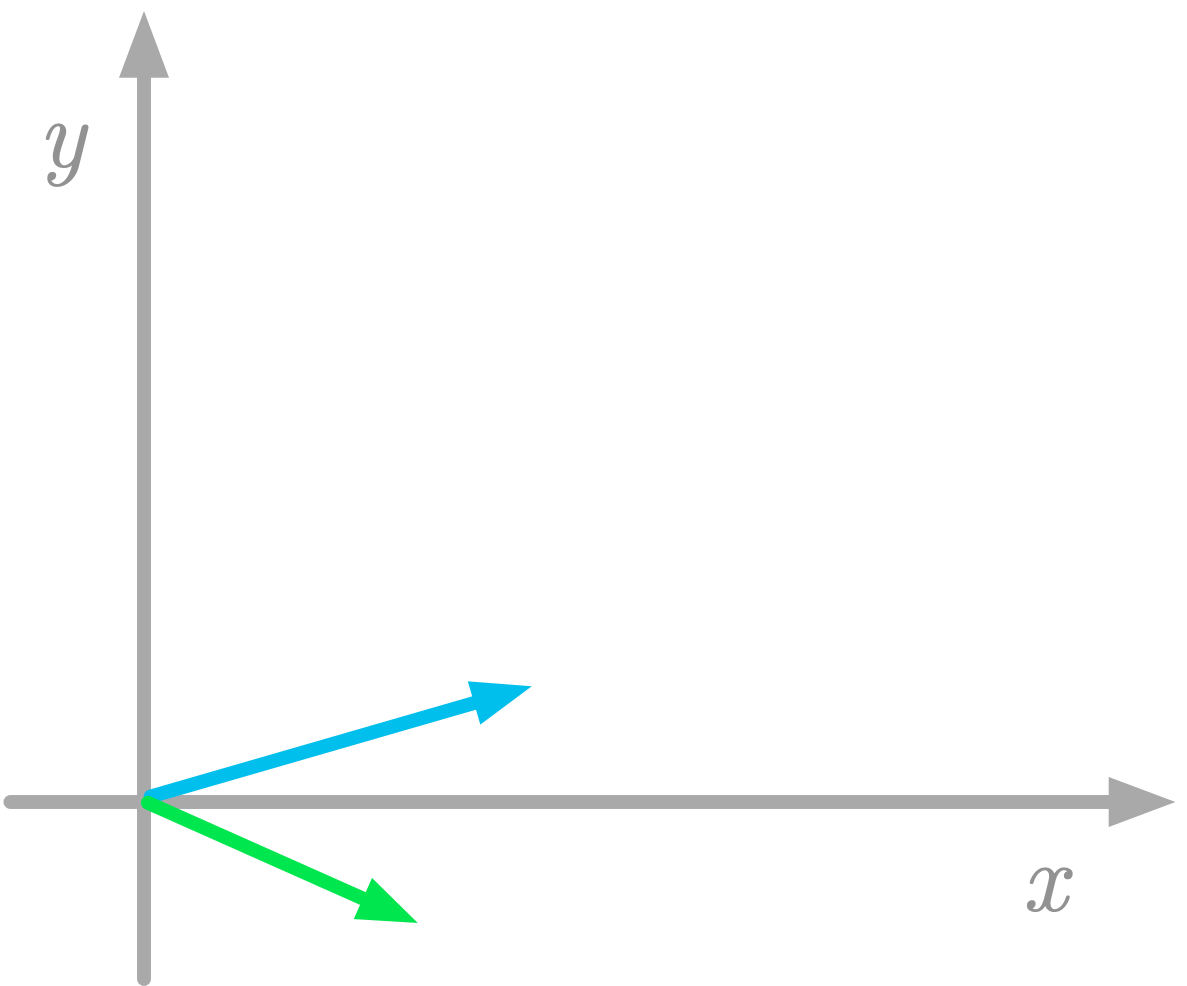

The basis of your vector space is very important because the values of the coordinates corresponding to the vectors depend on this basis. You can also choose different basis vectors, like the ones in Figure 2.

Figure 2: Another set of basis vectors.

Keep in mind that vector coordinates depend on an implicit choice of basis vectors.

Linear Combination of Basis Vectors

You can consider any vector in a vector space as a linear combination of the basis vectors.

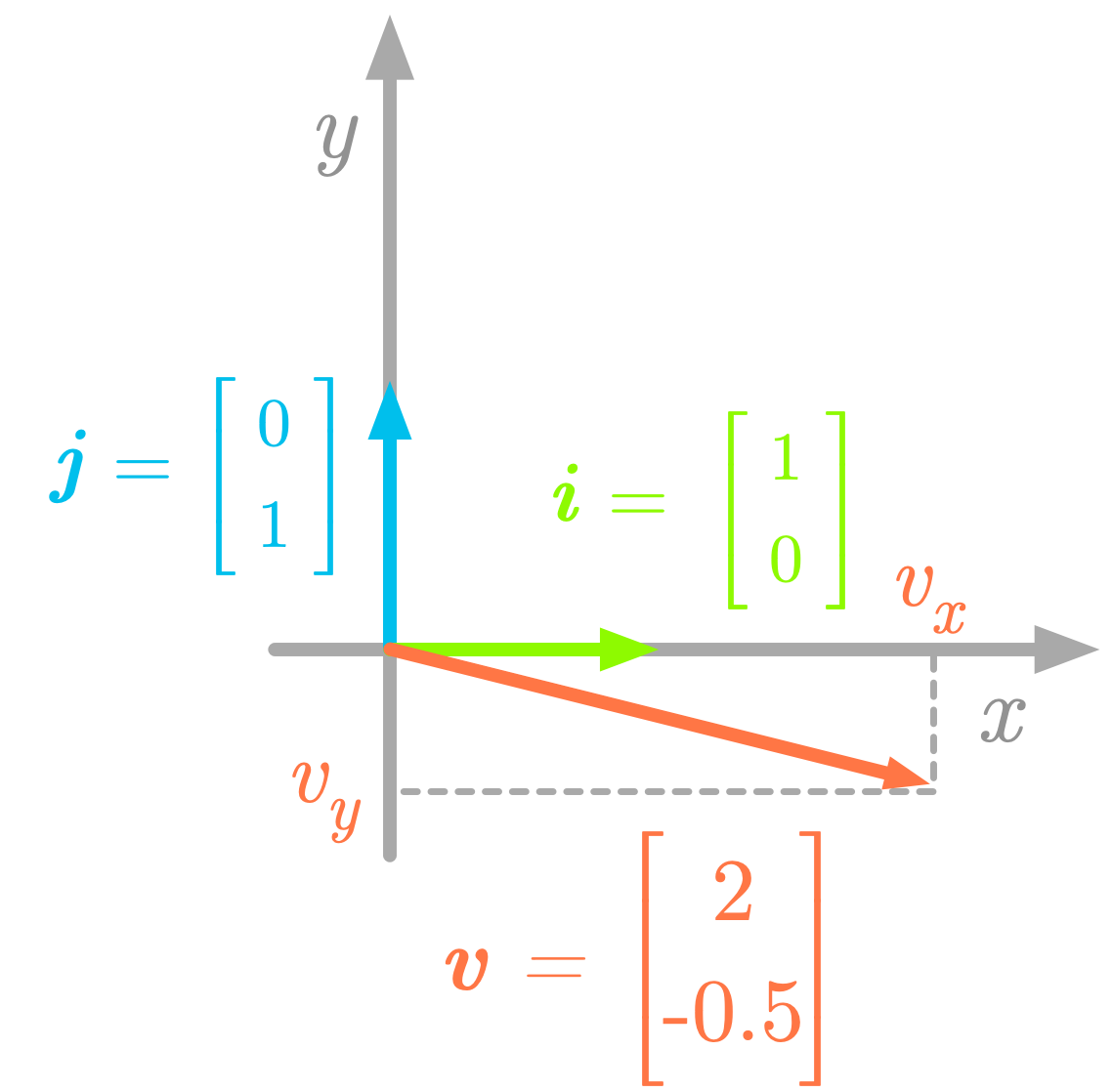

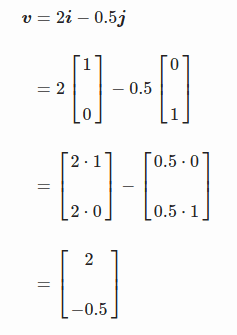

For instance, take the following two-dimensional vector v:

$$
v = \begin{bmatrix} 2 \\ -0.5 \end{bmatrix}
$$

Figure 3: Components of the vector v.

The components of the vector v are the projections on the x-axis and on the y-axis (vx and vy, as illustrated in Figure 3). The vector v corresponds to the sum of its components: v = vx + vy, and you can obtain these components by scaling the basis vectors: vx = 2i and vy = −0.5j. Thus, the vector v shown in Figure 3 can be considered as a linear combination of the two basis vectors i and j:

$$
v = 2i - 0.5j = 2 \begin{bmatrix} 1 \\ 0 \end{bmatrix} - 0.5 \begin{bmatrix} 0 \\ 1 \end{bmatrix} = \begin{bmatrix} 2 \\ -0.5 \end{bmatrix}
$$
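The same linear combination can be written with NumPy. This is only a sketch: the variable names `v_x` and `v_y` mirror the components vx and vy from the text and are otherwise arbitrary.

```python
import numpy as np

# Standard basis vectors of the Cartesian plane
i = np.array([1.0, 0.0])
j = np.array([0.0, 1.0])

# Components of v, obtained by scaling the basis vectors
v_x = 2 * i
v_y = -0.5 * j

# v is the sum of its components, i.e. a linear combination of i and j
v = v_x + v_y
print(v)
```

The result has coordinates 2 and −0.5, the weights used in the linear combination.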

Other Bases

The columns of identity matrices are not the only linearly independent column vectors. It is possible to find other sets of n linearly independent vectors in ℝⁿ.

For instance, let’s consider the following vectors in ℝ²:

and

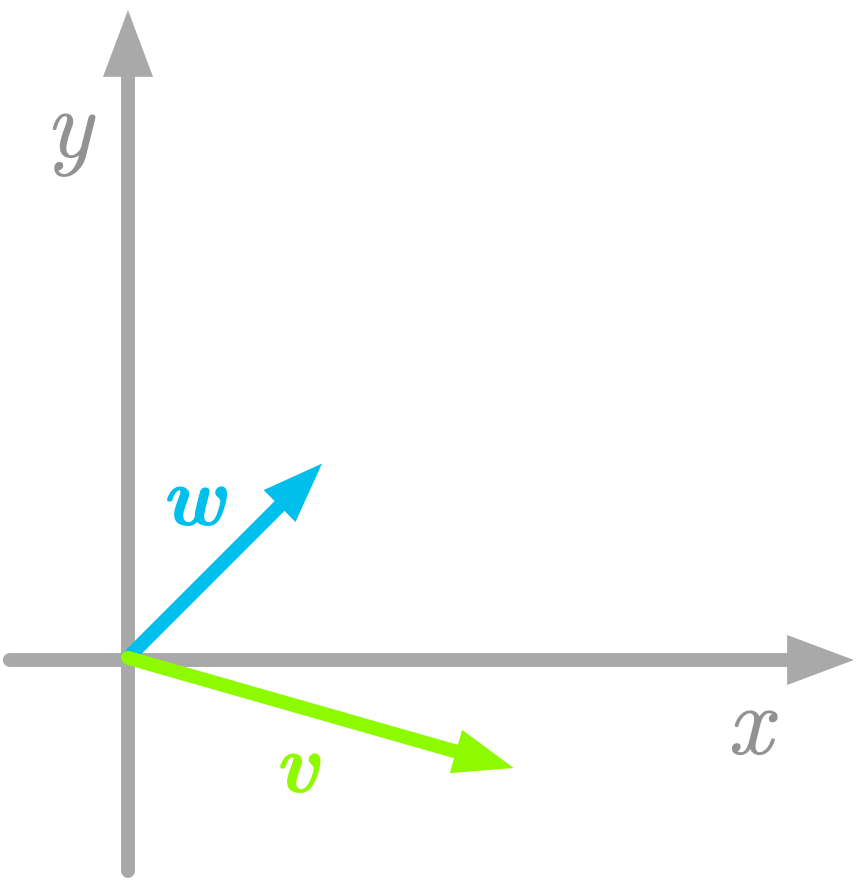

The vectors v and w are represented in Figure 4.

Figure 4: Another basis in a two-dimensional space.

From the definition above, the vectors v and w are a basis because they are linearly independent (you can’t obtain one of them from combinations of the other) and they span the space (all the space can be reached from the linear combinations of these vectors).

It is critical to keep in mind that, when you use the components of vectors (for instance vx and vy, the x and y components of the vector v), the values are relative to the basis you chose. If you use another basis, these values will be different.

You can see in Chapters 09 and 10 of Essential Math for Data Science that the ability to change bases is fundamental in linear algebra and is key to understanding eigendecomposition or Singular Value Decomposition.

Vectors Are Defined With Respect to a Basis

You saw that to associate geometric vectors (arrows in the space) with coordinate vectors (arrays of numbers), you need a reference. This reference is the basis of your vector space. For this reason, a vector should always be defined with respect to a basis.

Let’s take the following vector:

$$
v = \begin{bmatrix} 2 \\ -0.5 \end{bmatrix}
$$

The values of the x and y components are respectively 2 and -0.5. When no basis is specified, the standard basis is assumed.

You could write Iv to specify that these numbers correspond to coordinates with respect to the standard basis. In this case, I is called the change of basis matrix.

You can define vectors with respect to another basis by using a matrix other than I.
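To make this concrete, here is a minimal NumPy sketch. It assumes that a basis is stored as a matrix whose columns are the basis vectors. The matrix `B` reuses the values of `B_2` from the code example later in this article, and the variable names are illustrative only.

```python
import numpy as np

# Coordinates written down as plain numbers
coords = np.array([2.0, -0.5])

# With respect to the standard basis, the identity matrix leaves them unchanged
identity = np.eye(2)
v_standard = identity @ coords

# With respect to another basis (columns of B), the same numbers
# describe a different arrow in the plane
B = np.array([
    [0.8, -1.3],
    [1.5, 0.3]
])
arrow = B @ coords  # this arrow, expressed in standard coordinates

print(v_standard)
print(arrow)
```

The same pair of numbers gives (2, −0.5) with the standard basis and (2.25, 2.85) with the basis stored in `B`, which is why coordinates only make sense together with a basis.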

Linear Combinations of the Basis Vectors

Vector spaces (the set of possible vectors) are characterized in reference to a basis. The expression of a geometrical vector as an array of numbers implies that you choose a basis. With a different basis, the same vector v is associated with different numbers.

You saw that the basis is a set of linearly independent vectors that span the space. More precisely, a set of vectors is a basis if every vector from the space can be described as a finite linear combination of the basis vectors and if the set is linearly independent.

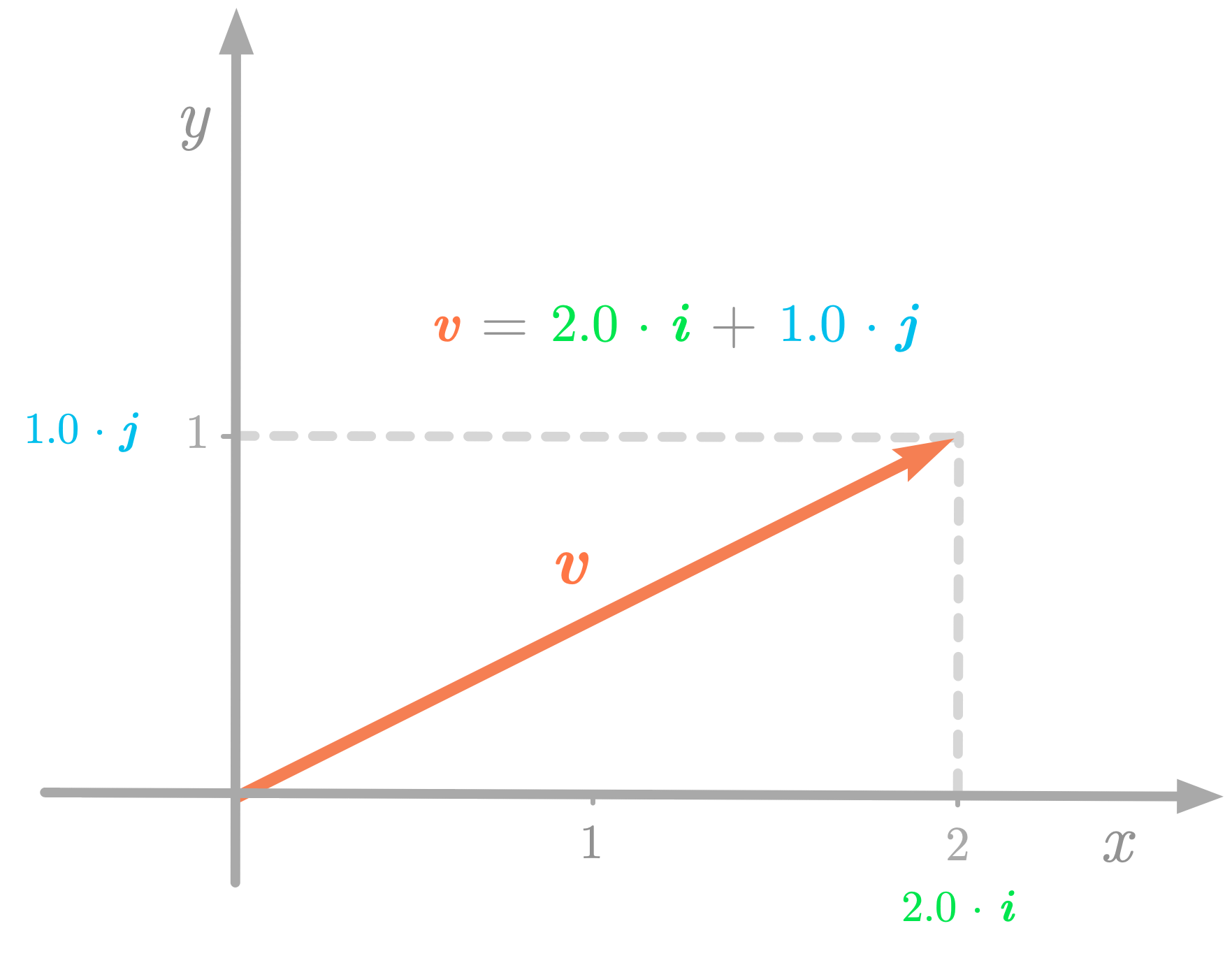

Consider the following two-dimensional vector:

$$
v = \begin{bmatrix} 2 \\ 1 \end{bmatrix}
$$

In the ℝ² Cartesian plane, you can consider v as a linear combination of the standard basis vectors i and j, as shown in Figure 5.

Figure 5: The vector v can be described as a linear combination of the basis vectors i and j.

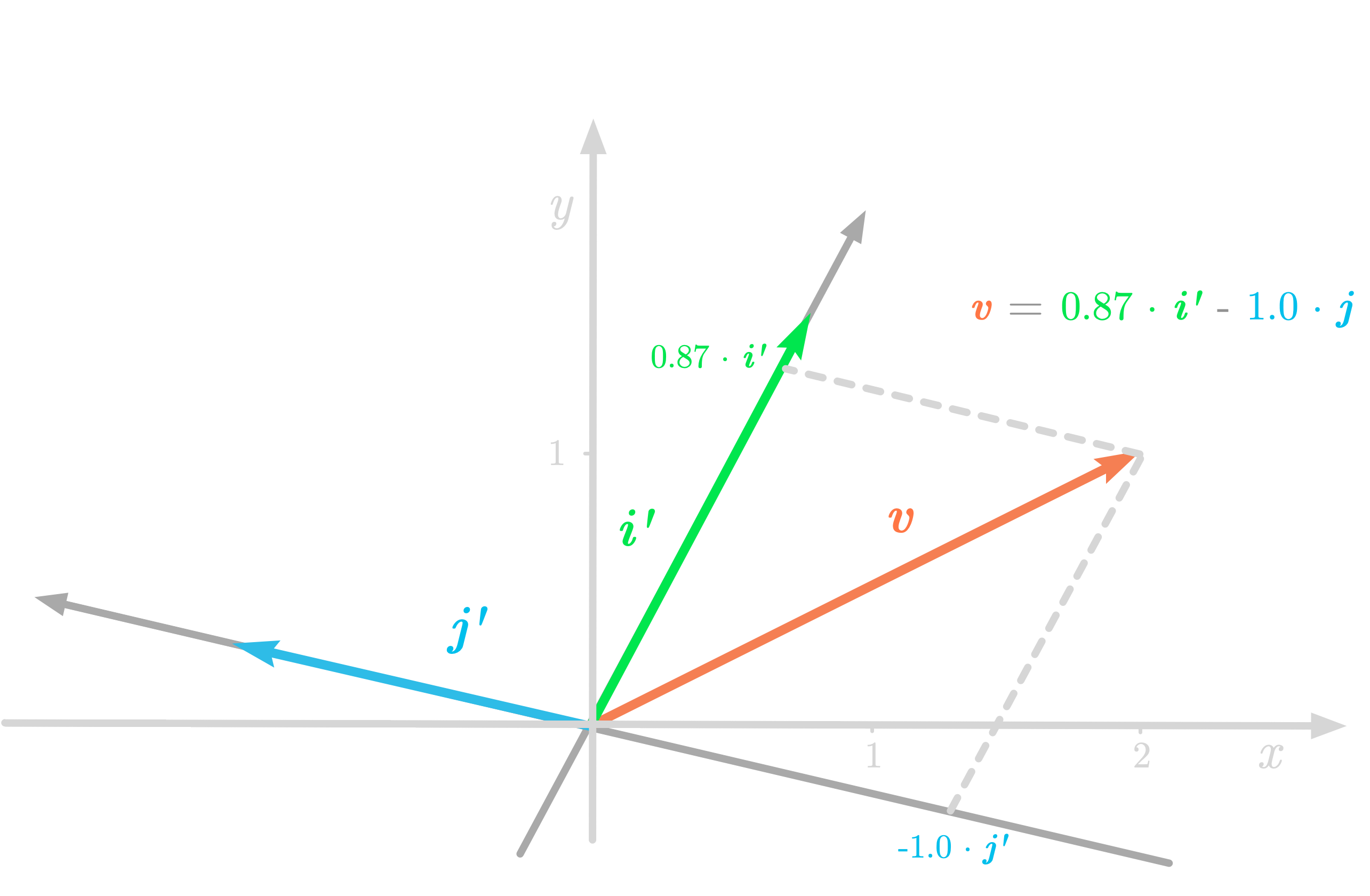

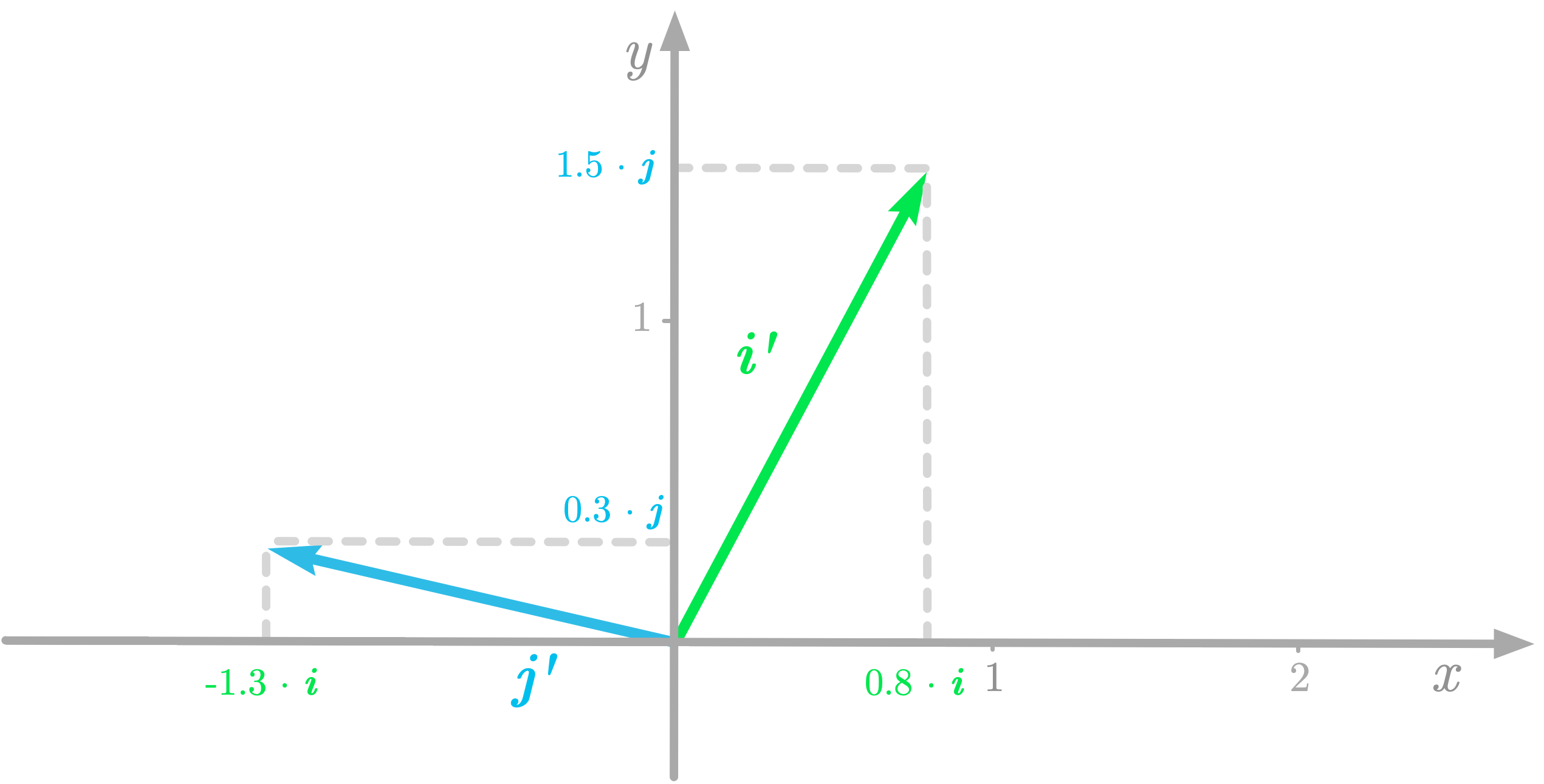

But if you use another coordinate system, v is associated with new numbers. Figure 6 shows a representation of the vector v with a new coordinate system (i′ and j′).

Figure 6: The vector v with respect to the coordinates of the new basis.

In the new basis, v is a new set of numbers:

$$
v = \begin{bmatrix} 0.86757991 \\ -1.00456621 \end{bmatrix}
$$

The Change of Basis Matrix

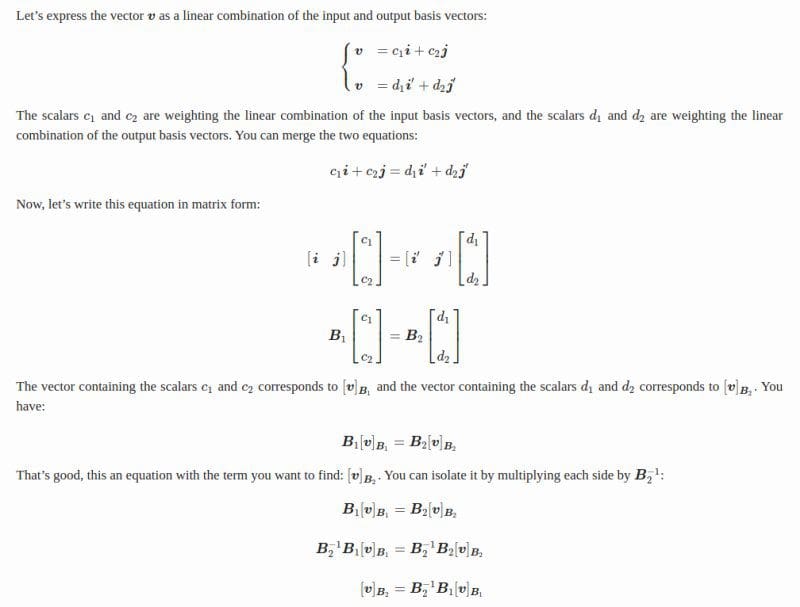

You can use a change of basis matrix to go from one basis to another. To find the matrix corresponding to new basis vectors, you can express these new basis vectors (i′ and j′) as coordinates in the old basis (i and j).

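Let’s take the preceding example again. You have:

$$
i' = \begin{bmatrix} 0.8 \\ 1.5 \end{bmatrix}
$$

and

$$
j' = \begin{bmatrix} -1.3 \\ 0.3 \end{bmatrix}
$$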

This is illustrated in Figure 7.

Figure 7: The coordinates of the new basis vectors with respect to the old basis.

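Since they are basis vectors, i′ and j′ can be expressed as linear combinations of i and j:

$$
\begin{aligned}
i' &= 0.8\,i + 1.5\,j \\
j' &= -1.3\,i + 0.3\,j
\end{aligned}
$$

Let’s write these equations in matrix form:

$$
\begin{bmatrix} i' \\ j' \end{bmatrix} = \begin{bmatrix} 0.8 & 1.5 \\ -1.3 & 0.3 \end{bmatrix} \begin{bmatrix} i \\ j \end{bmatrix}
$$

To have the basis vectors as columns, you need to transpose the matrices. You get:

$$
\begin{bmatrix} i' & j' \end{bmatrix} = \begin{bmatrix} i & j \end{bmatrix} \begin{bmatrix} 0.8 & -1.3 \\ 1.5 & 0.3 \end{bmatrix}
$$

This matrix is called the change of basis matrix. Let’s call it C:

$$
C = \begin{bmatrix} 0.8 & -1.3 \\ 1.5 & 0.3 \end{bmatrix}
$$

As you can notice, each column of the change of basis matrix is a basis vector of the new basis. You’ll see next that you can use the change of basis matrix C to convert vectors from the output basis to the input basis.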
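Here is a short sketch of how you could build such a matrix with NumPy by stacking the new basis vectors as columns. The values of `i_prime` and `j_prime` are taken from the columns of the `B_2` matrix used in the code example later in this article; the variable names themselves are just illustrative.

```python
import numpy as np

# New basis vectors, expressed in the old (standard) basis
i_prime = np.array([0.8, 1.5])
j_prime = np.array([-1.3, 0.3])

# Each column of the change of basis matrix is a new basis vector
C = np.column_stack([i_prime, j_prime])
print(C)

# Coordinates (1, 0) in the new basis correspond to i_prime in the old basis,
# and coordinates (0, 1) correspond to j_prime
print(C @ np.array([1, 0]))
print(C @ np.array([0, 1]))
```

Multiplying C by coordinates given in the new basis returns coordinates in the old basis, which is the direction of conversion described above.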

The difference between change of basis and linear transformation is conceptual. Sometimes it is useful to consider the effect of a matrix as a change of basis; sometimes you get more insight when you think of it as a linear transformation. Either you move the vector or you move its reference. This is why rotating the coordinate system has an inverse effect compared to rotating the vector itself. For eigendecomposition and SVD, both of these views are usually taken together, which can be confusing at first. Keeping this difference in mind will be useful through the end of the book. The main technical difference between the two is that a change of basis matrix must be invertible, which is not required for linear transformations.
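The inverse effect of rotating the reference instead of the vector can be seen in a small sketch. The angle `theta` and the vector `v` are hypothetical values chosen for the example, and `R` is the usual two-dimensional rotation matrix.

```python
import numpy as np

theta = np.pi / 2  # hypothetical angle: a quarter turn counterclockwise
R = np.array([
    [np.cos(theta), -np.sin(theta)],
    [np.sin(theta), np.cos(theta)]
])
v = np.array([1.0, 0.0])

# View 1, linear transformation: the vector itself is rotated by theta
rotated_vector = R @ v

# View 2, change of basis: the basis is rotated by theta (the columns of R
# are the new basis vectors) and v stays where it is. Its coordinates in
# the rotated basis are obtained with the inverse of R.
coords_in_rotated_basis = np.linalg.inv(R) @ v

print(np.round(rotated_vector, 6))
print(np.round(coords_in_rotated_basis, 6))
```

Rotating the vector sends (1, 0) to approximately (0, 1), while rotating the basis gives the coordinates (0, −1), which is what you would get by rotating the vector by −theta.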

Finding the Change of Basis Matrix

A change of basis matrix maps an input basis to an output basis. Let’s call the input basis $B_1$ with the basis vectors i and j, and the output basis $B_2$ with the basis vectors i′ and j′. You have:

$$
B_1 = \begin{bmatrix} i & j \end{bmatrix}
$$

and

$$
B_2 = \begin{bmatrix} i' & j' \end{bmatrix}
$$

From the equation of the change of basis, you have:

$$
B_2 = B_1 C
$$

If you want to find the change of basis matrix given $B_1$ and $B_2$, you need to calculate the inverse of $B_1$ to isolate C:

$$
C = B_1^{-1} B_2
$$

In words, you can calculate the change of basis matrix by multiplying the inverse of the input basis matrix ($B_1^{-1}$, where $B_1$ contains the input basis vectors as columns) by the output basis matrix ($B_2$, which contains the output basis vectors as columns).

Be careful: this change of basis matrix allows you to convert vectors from $B_2$ to $B_1$ and not the opposite. Intuitively, this is because moving an object is the opposite of moving the reference. Thus, to go from $B_1$ to $B_2$, you must use the inverse of the change of basis matrix, $C^{-1}$.

Note that if the input basis is the standard basis ($B_1 = I$), then the change of basis matrix is simply the output basis matrix:

$$
C = B_1^{-1} B_2 = I^{-1} B_2 = B_2
$$

Since the basis vectors are linearly independent, the columns of C are linearly independent, and thus, as stated in section 7.4 of Essential Math for Data Science, C is invertible.
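The computation of the change of basis matrix described in this section can be sketched as follows. The input basis `B1` below is a hypothetical non-standard basis made up for the example, while `B2` reuses the values from the code example later in this article.

```python
import numpy as np

# Hypothetical input basis (basis vectors as columns), for illustration only
B1 = np.array([
    [1.0, 1.0],
    [0.0, 1.0]
])
# Output basis (basis vectors as columns)
B2 = np.array([
    [0.8, -1.3],
    [1.5, 0.3]
])

# Change of basis matrix: inverse of the input basis times the output basis
C = np.linalg.inv(B1) @ B2
print(C)

# Sanity check: the input basis times C gives back the output basis
print(np.allclose(B1 @ C, B2))

# Special case: with the standard basis as input, C is simply B2
C_standard = np.linalg.inv(np.eye(2)) @ B2
print(np.allclose(C_standard, B2))

# C is invertible: its determinant is nonzero
print(np.linalg.det(C))
```

In practice, `np.linalg.solve(B1, B2)` returns the same matrix without forming the inverse explicitly, which is usually preferable numerically.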

Example: Changing the Basis of a Vector

Let’s change the basis of a vector v, using again the geometric vectors represented in Figure 6.

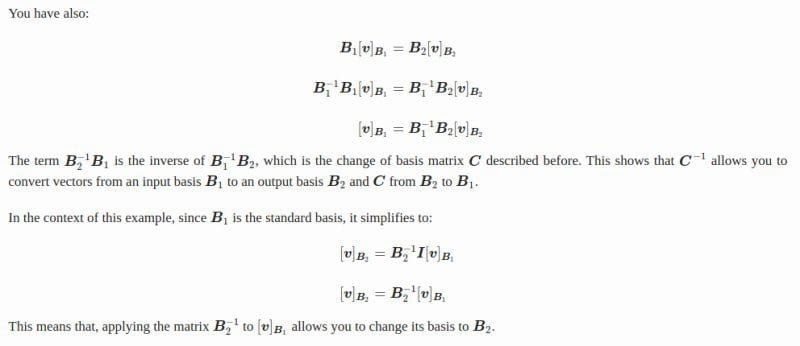

Notation

You’ll change the basis of v from the standard basis to a new basis. Let’s denote the standard basis as $B_1$ and the new basis as $B_2$. Remember that the basis is a matrix containing the basis vectors as columns. You have:

$$
B_1 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}
$$

and

$$
B_2 = \begin{bmatrix} 0.8 & -1.3 \\ 1.5 & 0.3 \end{bmatrix}
$$

Let’s denote the vector v relative to the basis $B_1$ as $[v]_{B_1}$:

$$
[v]_{B_1} = \begin{bmatrix} 2 \\ 1 \end{bmatrix}
$$

The goal is to find the coordinates of v relative to the basis $B_2$, denoted as $[v]_{B_2}$.

To distinguish the basis used to define a vector, you can put the basis name (like $B_1$) in subscript after the vector name enclosed in square brackets. For instance, $[v]_{B_1}$ denotes the vector v relative to the basis $B_1$, also called the representation of v with respect to $B_1$.

Using Linear Combinations

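The vector v is the same geometric object in both bases, so it can be written as a linear combination of either set of basis vectors. Writing $B_1$ for the standard basis matrix, $B_2$ for the new basis matrix, and $[v]_{B_1}$ and $[v]_{B_2}$ for the corresponding coordinates of v, you have:

$$
v = B_1 [v]_{B_1} = B_2 [v]_{B_2}
$$

Since $B_1$ is the identity matrix, this gives $[v]_{B_1} = B_2 [v]_{B_2}$, and multiplying both sides by $B_2^{-1}$ yields:

$$
[v]_{B_2} = B_2^{-1} [v]_{B_1}
$$

Let’s code this: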

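    import numpy as np

    # Coordinates of v relative to the standard basis
    v_B1 = np.array([2, 1])

    # New basis: the basis vectors i' and j' are the columns
    B_2 = np.array([
        [0.8, -1.3],
        [1.5, 0.3]
    ])

    # Coordinates of v relative to the new basis
    v_B2 = np.linalg.inv(B_2) @ v_B1
    v_B2

    array([ 0.86757991, -1.00456621])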

These values are the coordinates of the vector v relative to the basis $B_2$. This means that if you go to 0.86757991i′ − 1.00456621j′, you arrive at the position (2, 1) in the standard basis, as illustrated in Figure 6.
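You can check this result numerically by going back the other way. The sketch below recomputes the coordinates (using `np.linalg.solve` instead of an explicit inverse, which is an alternative choice and not the method used above) and then rebuilds the vector as a linear combination of the new basis vectors.

```python
import numpy as np

v_B1 = np.array([2, 1])
B_2 = np.array([
    [0.8, -1.3],
    [1.5, 0.3]
])

# Solve B_2 @ v_B2 = v_B1 for the coordinates in the new basis
v_B2 = np.linalg.solve(B_2, v_B1)

# The new basis vectors are the columns of B_2
i_prime, j_prime = B_2[:, 0], B_2[:, 1]

# Walk along i' and j' using the new coordinates as weights
reconstructed = v_B2[0] * i_prime + v_B2[1] * j_prime

print(np.allclose(reconstructed, v_B1))
```

The check prints `True`: the linear combination of i′ and j′ lands on the position (2, 1) in the standard basis.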

Conclusion

Understanding the concept of basis is a nice way to approach matrix decomposition (also called matrix factorization), like eigendecomposition or singular value decomposition (SVD). In these terms, you can think of matrix decomposition as finding a basis where the matrix associated with a transformation has specific properties: the factorization is the product of a change of basis matrix, the new transformation matrix, and finally the inverse of the change of basis matrix to come back into the initial basis (more details in Chapters 09 and 10 of Essential Math for Data Science).
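As a rough illustration of this idea, the sketch below uses NumPy's `np.linalg.eig` on a small made-up matrix `A` (a hypothetical example, not taken from the book). The matrix of eigenvectors `Q` plays the role of the change of basis matrix, and in that basis the transformation becomes diagonal.

```python
import numpy as np

# Hypothetical transformation matrix, chosen only for illustration
A = np.array([
    [2.0, 1.0],
    [1.0, 2.0]
])

# Columns of Q are eigenvectors of A: they form the new basis
eigenvalues, Q = np.linalg.eig(A)

# Expressed in the basis of eigenvectors, the transformation is diagonal
D = np.linalg.inv(Q) @ A @ Q
print(np.allclose(D, np.diag(eigenvalues)))

# Factorization: change of basis, diagonal transformation, then back
A_rebuilt = Q @ np.diag(eigenvalues) @ np.linalg.inv(Q)
print(np.allclose(A_rebuilt, A))
```

Both checks print `True`, which reflects the three-step reading of the factorization given above.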

Bio: Hadrien Jean is a machine learning scientist. He holds a Ph.D. in cognitive science from the Ecole Normale Superieure, Paris, where he did research on auditory perception using behavioral and electrophysiological data. He previously worked in industry, where he built deep learning pipelines for speech processing. At the intersection of data science and the environment, he works on projects about biodiversity assessment using deep learning applied to audio recordings. He also periodically creates content and teaches at Le Wagon (data science Bootcamp), and writes articles on his blog (hadrienj.github.io).

Original. Reposted with permission.
