Top /r/MachineLearning Posts, September: Implement a neural network from scratch in C++

Neural network in C++ for beginners, Chinese character handwriting recognition beats humans, a handy machine learning algorithm cheat sheet, neural nets versus functional programming, and a neural nets paper repository.

By Matthew Mayo.

In September on /r/MachineLearning, we find a neural network tutorial video for C++, read that deep learning-based Chinese character handwriting recognition beats humans, grab ourselves a machine learning algorithm cheat sheet, connect the dots between functional programming and deep learning, and discover a neural network paper repository.

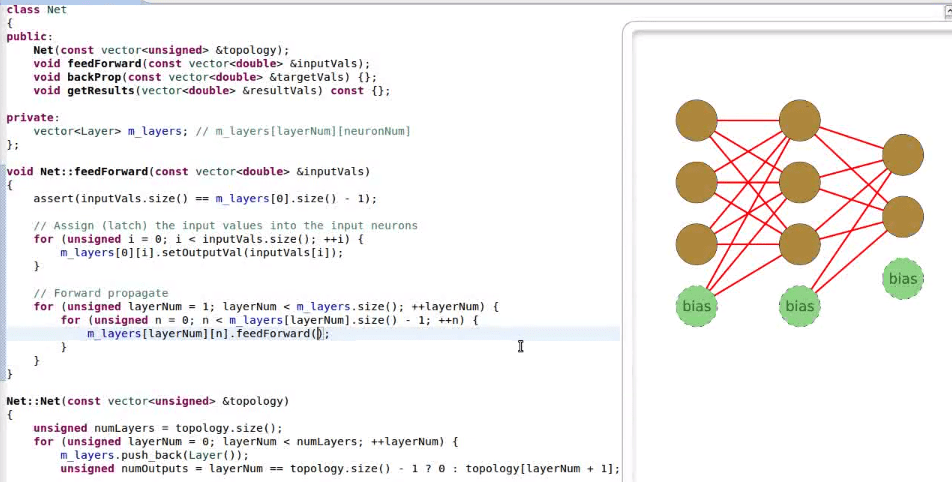

1. Neural Net in C++ for Absolute Beginners +154

This post is a video of Dave Miller explaining a simple backpropagation neural network and demonstrating how to code one in C++. The video is approximately one hour long, and having followed the tutorial myself, I can tell you that the stated goal of having a hand-crafted, functioning neural network by the end of the video is attainable. The short comment section also contains some useful discussion, including a reference to this online deep learning book. You can find Miller's own resulting neural network code here.
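
For a rough feel of what such a network involves, here is a minimal sketch in Python (not the video's C++, and not Miller's code). The function name `train_xor`, the XOR task, the hidden layer size, the learning rate, and the epoch count are all my own assumptions for illustration.

```python
import math
import random


def train_xor(hidden=4, epochs=5000, lr=0.1, seed=0):
    """Train a 2-hidden-1 tanh network on XOR with online backpropagation."""
    rng = random.Random(seed)
    # Each weight row carries a trailing bias weight.
    w_h = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(hidden)]
    w_o = [rng.uniform(-1, 1) for _ in range(hidden + 1)]
    data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

    def forward(x):
        # Hidden activations, then a single tanh output unit.
        h = [math.tanh(w[0] * x[0] + w[1] * x[1] + w[2]) for w in w_h]
        y = math.tanh(sum(w * v for w, v in zip(w_o, h + [1.0])))
        return h, y

    def mse():
        return sum((forward(x)[1] - t) ** 2 for x, t in data) / len(data)

    before = mse()
    for _ in range(epochs):
        for x, t in data:
            h, y = forward(x)
            # Output delta: dE/dy * tanh'(net), with E = 0.5 * (y - t)^2.
            d_out = (y - t) * (1 - y * y)
            # Hidden deltas: push d_out back through the output weights
            # (computed before those weights are updated).
            d_hid = [d_out * w_o[j] * (1 - h[j] ** 2) for j in range(hidden)]
            # Gradient-descent updates for output and hidden weights.
            for j, v in enumerate(h + [1.0]):
                w_o[j] -= lr * d_out * v
            for j in range(hidden):
                for i, v in enumerate((x[0], x[1], 1.0)):
                    w_h[j][i] -= lr * d_hid[j] * v
    return before, mse(), forward


if __name__ == "__main__":
    before, after, forward = train_xor()
    print(f"mean squared error before: {before:.4f}, after: {after:.4f}")
```

The function returns the mean squared error before and after training, so you can check that the error actually went down; the returned `forward` function lets you inspect individual predictions.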

2. Fujitsu Achieves 96.7% Recognition Rate for Handwritten Chinese Characters +151

According to the article, the apparent human equivalent recognition rate for handwritten Chinese characters is 96.1%, which Fujitsu has surpassed with an accuracy rate of 96.7%. In the spirit of much recent machine learning research, deep neural networks were the tool of choice for the researchers. This isn't your father's handwritten digit recognition task, though, given that there are 3800 Chinese characters to recognize. The researchers also devised an innovative method for automatically deforming handwritten samples in order to increase the number of training samples.
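
The article does not spell out how Fujitsu deforms its samples, so the following is only a generic illustration of the idea of growing a training set by distorting existing images. The horizontal shear, the function names `shear_image` and `augment`, and every parameter are my own assumptions, not Fujitsu's method.

```python
import random


def shear_image(img, shear):
    """Shift each row sideways in proportion to its distance from the center row."""
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        offset = round(shear * (r - (h - 1) / 2))
        for c in range(w):
            src = c - offset
            # Pixels shifted in from outside the image stay blank (0).
            if 0 <= src < w:
                out[r][c] = img[r][src]
    return out


def augment(img, copies=5, max_shear=0.5, seed=0):
    """Return several randomly sheared variants of one sample image."""
    rng = random.Random(seed)
    return [
        shear_image(img, rng.uniform(-max_shear, max_shear))
        for _ in range(copies)
    ]


if __name__ == "__main__":
    stroke = [[0, 1, 0], [0, 1, 0], [0, 1, 0]]  # a tiny vertical stroke
    for row in shear_image(stroke, 1.0):
        print(row)
```

Each distorted copy keeps the label of the original sample, so a single handwritten character can yield many training examples.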

3. CheatSheet - Python & R Codes for Common Machine Learning Algorithms +150

This post, via Analytics Vidhya, is a collection of machine learning algorithms implemented (via libraries) in both Python and R, side by side. The corresponding code covers the entire modeling process (data loading, training, testing) for 10 popular algorithms, including Decision Trees, Support Vector Machines, and Linear Regression. The cheat sheet is equally useful for newbies to one language or the other (or both) and for experienced implementers looking to brush up or grab a quick copy-and-paste solution.

4. Neural Networks, Types, and Functional Programming +142

Last month Christopher Olah helped us understand LSTM Networks, and this month his topic of choice is Deep Learning: its youth, its projected and altered form in the not-so-distant future, and its connection to functional programming. Central to this, Olah puts forth the speculative theory that "deep learning studies a connection between optimization and functional programming," equates representations to types, and goes on to compare various neural networks to their perceived functional equivalents. While Olah himself states that "this is a pretty strange article and I feel a bit weird posting it," the article is a great read and helps us look at two familiar, seemingly unrelated concepts in a new light. Here is a direct link to the paper.
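
To make the comparison concrete: as I read Olah's article, an encoding recurrent network corresponds to a fold, and a general recurrent network to an accumulating map. Here is a toy sketch of that reading in Python; the scalar `rnn_cell`, its fixed weights, and the function names are hypothetical stand-ins of mine, not anything taken from the article.

```python
import math
from functools import reduce
from itertools import accumulate


def rnn_cell(state, x, w_state=0.5, w_input=1.0):
    """One recurrent step on scalars: tanh(w_state * state + w_input * x)."""
    return math.tanh(w_state * state + w_input * x)


def encode(sequence, initial_state=0.0):
    """Encoding RNN as a left fold: only the final state is kept."""
    return reduce(rnn_cell, sequence, initial_state)


def all_states(sequence, initial_state=0.0):
    """General RNN as an accumulating map: one state per input."""
    states = accumulate(sequence, rnn_cell, initial=initial_state)
    return list(states)[1:]  # drop the initial state itself


if __name__ == "__main__":
    inputs = [1.0, -2.0, 0.5]
    print(encode(inputs))
    print(all_states(inputs))
```

The same cell function is reused at every step, which is exactly what the higher-order functions `reduce` and `accumulate` express; in a real network the cell would have learned weights and vector-valued state.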

5. Curated List of Neural Network Papers +139

Here it is! Neural networks and deep learning are everywhere, and software engineer and NN enthusiast Robert S. Dionne has done everyone a favor and compiled, organized, and shared a list of papers relevant to our current collective obsession. It's neatly presented in 30 categories such as Restricted Boltzmann Machines, Convolutional Neural Networks, and Parallel Training. There are a lot of deep learning papers emerging, and it's nice to have people willing to both organize and explain them.

Bio: Matthew Mayo is a computer science graduate student currently working on his thesis parallelizing machine learning algorithms. He is also a student of data mining, a data enthusiast, and an aspiring machine learning scientist.

