The Five Capability Levels of Deep Learning Intelligence

Deep learning writer Carlos Perez gives his own classification for deep learning-based AI, which is aimed at practitioners. This classification gives us a sense of where we currently are and where we might be heading.

Arend Hintze has a good short article on “Understanding the four types of AI, from reactive robots to self-aware beings” where he outlines the following types of AI:

Reactive Machine - The most basic type that is unable to form memories and use past experiences to inform decisions. They can’t function outside the the specific tasks that they were designed for.

Limited Memory - Are able to look into the past to inform current decisions. The memory however is transient and aren’t used for future experiences.

Theory of Mind - These systems are able to form representations of the world as well as other agents that it interacts with.

Self-Awareness - Mostly speculative description here.

I like this classification much better than the “Narrow AI” and “General AI” dichotomy. This classification makes an attempt to break down Narrow AI into 3 categories. This gives us more concepts to differentiate different AI implementations. My reservation though of the definition is that they appear to come from a GOFAI mindset. Furthermore, the leap from limited memory able to employ the past to theory of mind seems to be an extremely vast leap.

I however would like to take this opportunity to come up with my own classification, more targeted towards the field of Deep Learning. I hope my classification is a bit more concrete and helpful for practitioners. This classification gives us a sense of where we currently are and where we might be heading.

We are inundated with all the time with AI hype that we fail to good conceptual framework for making a precise assessment of the current situation. This may simply be due to the fact that many writers have trouble keeping up with the latest development in Deep Learning research. There’s too much to read to keep up and the latest discoveries continue to change our current understanding. See “Rethinking Generalization” as one of those surprising discoveries.

Here I introduce a pragmatic classification of Deep Learning capabilities:

1. Classification Only (C)

This level includes the fully connected neural network (FCN) and the convolution network (CNN) and various combinations of them. These system take a high dimensional vector as input and arrive at a single result, typically a classification of the input vector. You can consider these systems as being stateless functions, meaning that their behavior is only a function of the current input. Generative models are one of those hotly researched areas and these also belong to this category. In short, these systems are quite capable by themselves.

2. Classification with Memory (CM)

This level includes memory elements incorporated with the C level networks. LSTMs are example of these with the memory units are embedded inside the LSTM node. Other variants of these are the Neural Turing Machine (NMT) and the Differentiable Neural Computer (DNC) from DeepMind. These systems maintain state as they compute their behavior.

3. Classification with Knowledge (CK)

This level is somewhat similar to the CM level, however rather than raw memory, the information that the C level network is able to access is a symbolic knowledge base. There are actually three kinds of symbolic integration that I have found, a transfer learning approach, a top-down approach, a bottom up approach. The first approach uses a symbolic system that acts as a regularizer. The second approach has the symbolic elements at the top of the hierarchy that are composed at the bottom by neural representations. The last approach has it reversed, where a C level network is actually attached to a symbolic knowledge base.

4. Classification with Imperfect Knowledge (CIK)

At this level, we have a system that is built on top of CK, however is able to reason with imperfect information. An example of this kind of system would be AlphaGo and Poker playing systems. AlphaGo however does not employ CK but rather CM level capability. Like AlphaGo, these kind of systems can train itself by running simulation of it against itself.

5. Collaborative Classification with Imperfect Knowledge (CCIK)

This level is very similar to the “theory of mind” where we actually have multiple agent neural networks combining to solve problems. Theses systems are designed to solve multiple objectives. We actually do se primitive versions of this in adversarial networks, that learn to perform generalization with competing discriminator and generative networks Expand that concept further into game-theoretic driven networks that are able to perform strategically and tactically solving multiple objectives and you have the making of these kind of extremely adaptive systems. We aren’t at this level yet and there’s still plenty of research to be done in the previous levels.

Different level bring about capabilities that don’t exist in the previous level. C level systems for example are only capable of predicting anti-causal relationships. CM level systems are capable of very good translation. CIK level systems are capable of strategic game play.

We can see how this classification somewhat aligns with Hinzte classification, with the exception of course of self-awareness. That’s a capability that I really have not explored and don’t intend to until the pre-requisite capabilities have been addressed. I’ve also not addressed zero-shot or one-shot learning or unsupervised learning. This is still one of the fundamental problems, as Yann LeCun has said:

If intelligence was a cake, unsupervised learning would be the cake, supervised learning would be the icing on the cake, and reinforcement learning would be the cherry on the cake. We know how to make the icing and the cherry, but we don’t know how to make the cake.

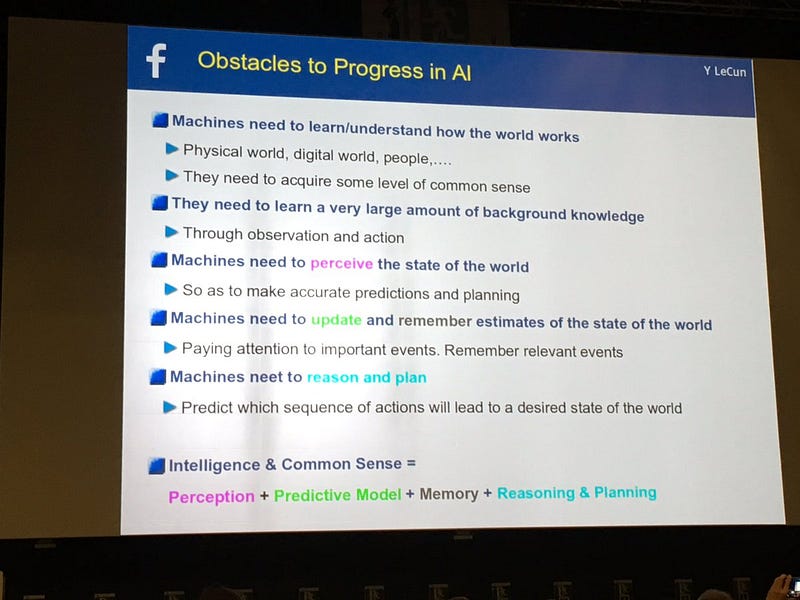

LeCun has also recently started using the phrase “predictive learning” in substitution of “unsupervised learning”. This is an interesting change and indicates a subtle change in his perspective as to what he believes is required to implement the “cake”. In LeCun’s view, the foundation needs to be built before we can make substantial progress in AI. In other words, building off current supervised learning by adding more capabilities like memory, knowledge bases and cooperating agents will be a slog until we are all able to build that “predictive foundational layer”. In the most recent NIPS 2016 conference he posted this slide:

One accelerator technology in all of this however is that when the capabilities are used in a feedback loop. We actually have seen instance of this kind of ‘meta-learning’ or ‘learning to optimize’ in current research. I cover these developments in another article “Deep Learning can Now Design Itself!” The key take away with meta-methods is that our own research methods become much more powerful when we can train machines to actually discover better solutions that we otherwise could find.

This is why, despite formidable problems in Deep Learning research, we can’t really be sure how rapid progress may proceed.

To understand better how Deep Learning capabilities fit with your enterprise, visit Intuition Machine or discuss on the FaceBook Group on Deep Learning.

Bio: Carlos Perez is a software developer presently writing a book on "Design Patterns for Deep Learning". This is where he sources his ideas for his blog posts.

Original. Reposted with permission.

Related:

- Why Deep Learning is Radically Different From Machine Learning

- Shortcomings of Deep Learning

- Deep Learning Key Terms, Explained