OpenAI is Adopting PyTorch… They Aren’t Alone

OpenAI is moving to PyTorch for the bulk of their research work. This might be a high-profile adoption, but it is far from the only such example.

OpenAI has recently decided to standardize its deep learning research on PyTorch. The company states that many of its teams have already made the switch internally, and that it looks forward to contributing to the wider PyTorch community.

The stated reason for the move is primarily to increase research productivity at scale on GPUs. PyTorch's general ease of use, and in particular how quickly it lets researchers iterate on generative modeling ideas, were cited as further reasons, as was the excitement of joining the fast-growing PyTorch developer community.

Until now, OpenAI had used a variety of frameworks for its deep learning research projects and implementations. OpenAI states that it will mainly standardize on PyTorch, noting that doing so will also make it easier to share models internally. It does not rule out using other frameworks on occasion, when technical limits of PyTorch or some other reason warrant doing so. That stance implicitly recognizes that such frameworks are tools for expressing research, as opposed to some quasi-religious movement.

Alongside this announcement, OpenAI has released a PyTorch version of its deep reinforcement learning educational resource, Spinning Up in Deep RL. They are also in the process of preparing PyTorch bindings for their blocksparse GPU kernels for release in the coming months.

OpenAI may be one of the latest and highest-profile adopters of PyTorch, but it isn't the only organization that has been giving the framework a serious look.

Over the past 3.5 years, PyTorch has transitioned from an internal Facebook tool to one of the most widely used open source deep learning libraries in existence. Virtually any discussion of top deep learning frameworks includes PyTorch, with many of these discussions boiling down to simply TensorFlow versus PyTorch.

Jeff Hale has been continually researching the deep learning landscape, and has concluded that TensorFlow and PyTorch have, indeed, emerged as the frameworks of choice, despite TensorFlow's two-year head start as an open source product.

Hale's most recent article on the topic puts it in no uncertain terms:

> PyTorch and TensorFlow are the two games in town if you want to learn a popular deep learning framework. I’m not looking at other frameworks because no other framework has been widely adopted.

In this article he compares the two frameworks on four adoption metrics: job listings, research use, online search results, and self-reported use. For a realistic comparison of PyTorch adoption versus TensorFlow, the full article is worth a look.

Here are a few of the key takeaways:

- Jobs - 10 months ago, TensorFlow appeared in three times as many job listings as PyTorch; that advantage is now down to 2x.

- Research - At NeurIPS 2018, PyTorch was used in fewer papers than TensorFlow; at NeurIPS 2019, PyTorch was used in 166 papers and TensorFlow in 74, giving PyTorch more than double the mentions.

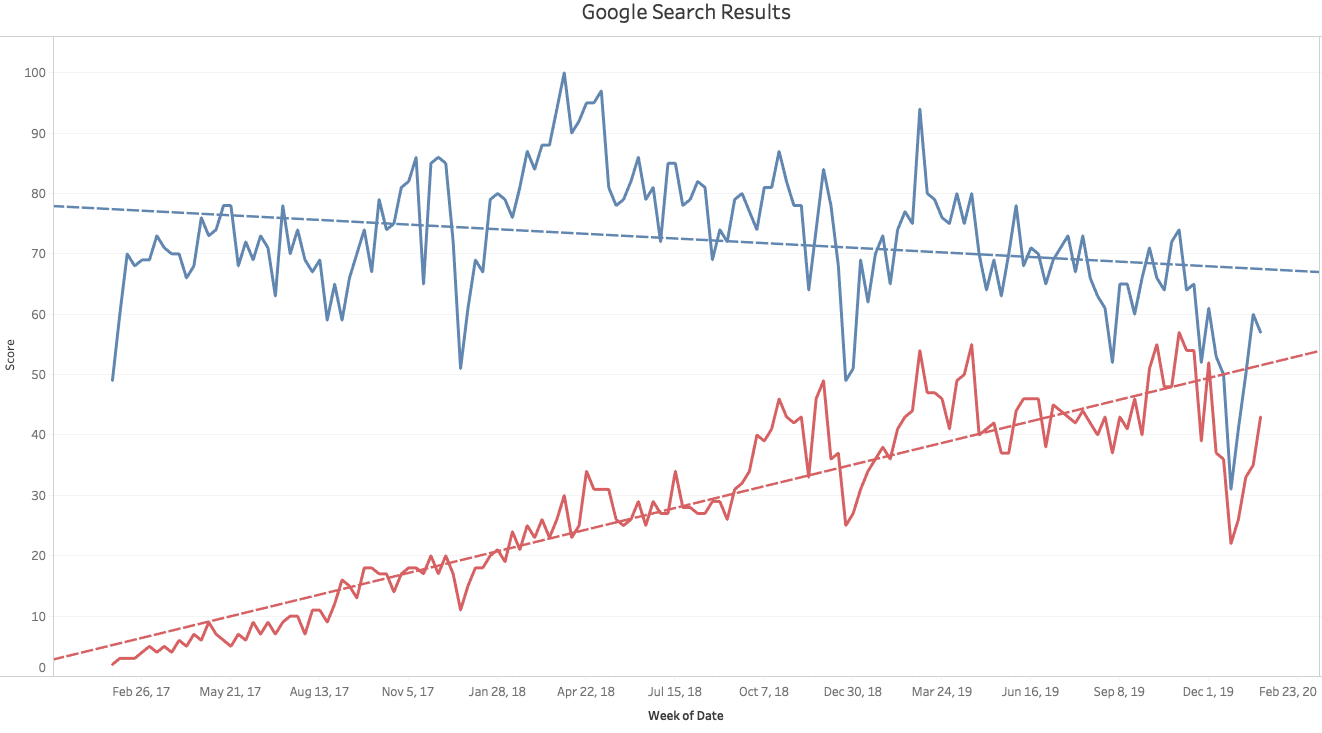

- Google Trends - The trends for TensorFlow and PyTorch searches seem to be on opposite paths; see the trends below.

- Self-reported Use - In the 2019 Stack Overflow Developer Survey (released April 2019), 10.3% of respondents reported using TensorFlow and 3.3% reported using Torch/PyTorch; there is no trend to report here, but this is a good marker to compare against when the follow-up survey is released.
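To put the quoted figures side by side, here is a minimal sketch that computes the ratios behind two of the takeaways above. The numbers are the ones cited in this article; the variable names and the `ratio` helper are illustrative only and do not come from Hale's analysis.

```python
# Figures as quoted in the takeaways above; names here are illustrative.
neurips_2019_papers = {"PyTorch": 166, "TensorFlow": 74}
so_survey_2019_pct = {"TensorFlow": 10.3, "Torch/PyTorch": 3.3}


def ratio(a, b):
    """Return a / b rounded to two decimal places."""
    return round(a / b, 2)


# How many times more NeurIPS 2019 papers used PyTorch than TensorFlow.
paper_ratio = ratio(neurips_2019_papers["PyTorch"],
                    neurips_2019_papers["TensorFlow"])

# How many times more survey respondents reported TensorFlow than Torch/PyTorch.
survey_ratio = ratio(so_survey_2019_pct["TensorFlow"],
                     so_survey_2019_pct["Torch/PyTorch"])

print(f"NeurIPS 2019 papers, PyTorch vs. TensorFlow: {paper_ratio}x")
print(f"Stack Overflow 2019, TensorFlow vs. Torch/PyTorch: {survey_ratio}x")
```

The two ratios point in opposite directions, which fits the overall picture: PyTorch leads in recent research use, while TensorFlow still leads in self-reported use among developers at large.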

Be sure to read Jeff Hale's full article.

From the PyTorch community's point of view, it is encouraging to see big events like the adoption of the framework by research powerhouse OpenAI. What should be just as encouraging, however, is the steady, continued increase in market share that PyTorch seems to exhibit month over month. Given the current trends, there may come a time in the very near future when PyTorch and TensorFlow reach adoption parity in the machine learning world.

This isn't to say that PyTorch is "better" than TensorFlow, but having a choice of tools with different relative strengths and implementation philosophies is always a good thing.
