Learning by Forgetting: Deep Neural Networks and the Jennifer Aniston Neuron

DeepMind’s research shows how to understand the role of individual neurons in a neural network.

Have you ever heard of the Jennifer Aniston neuron? In 2005, a group of neuroscientists led by Rodrigo Quian Quiroga published a paper detailing the discovery of a type of neuron that fired steadily whenever a patient was shown a photo of Jennifer Aniston. The neuron in question was not activated by photos of other celebrities. Obviously, we don’t all have Jennifer Aniston neurons, and similar specialized neurons can respond to pictures of other celebrities. However, the Aniston neuron has become one of the most powerful metaphors in neuroscience for neurons that focus on a very specific task.

The fascinating thing about the Jennifer Aniston neuron is that it was discovered while Quiroga was researching the areas of the brain that cause epileptic seizures. It is well known that epilepsy damages different areas of the brain, but pinpointing those areas is still an active area of research. Quiroga’s investigation into damaged brain regions led to discoveries about the functionality of other neurons. Some of Quiroga’s thoughts are brilliantly captured in his recent book The Forgetting Machine.

Extrapolating Quiroga’s methodology to the world of deep learning, researchers from DeepMind published a paper that proposes a technique for learning about the impact of specific neurons in a neural network by damaging it. Sounds crazy? Not really. In software development, as in neuroscience, simulating arbitrary failures is one of the most powerful ways to understand how a system works. DeepMind’s new algorithm can be seen as a version of Chaos Monkey for deep neural networks.

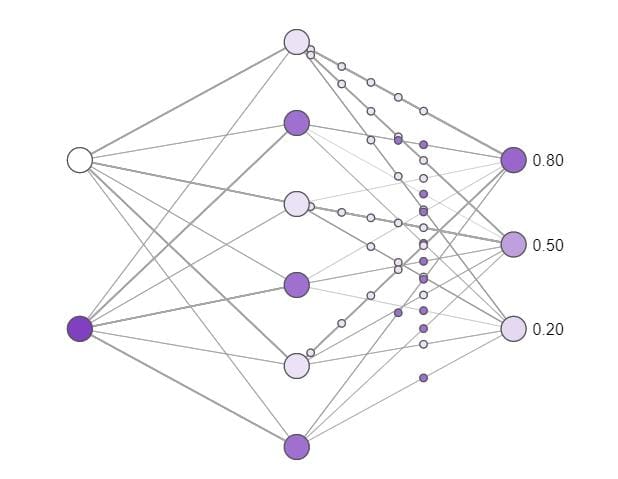

How does the DeepMind neuron deletion method really work? Very simply, the algorithm randomly deletes groups of neurons in a deep neural network and measures their impact by running the modified network against the dataset it was trained on.
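The deletion procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not DeepMind’s implementation: the toy network, its hand-picked weights, and the helper names (`predict`, `accuracy`, `random_ablation_study`) are all assumptions made for the example, and "deleting" a neuron is modeled here by clamping its activation to zero.

```python
import random

# Hypothetical toy network: 2 inputs -> 4 ReLU hidden units -> 2 class scores.
# Weights are hand-picked for illustration, not learned.
W_HIDDEN = [[1.0, -1.0], [-1.0, 1.0], [1.0, -1.0], [-1.0, 1.0]]  # one row per hidden unit
W_OUT = [[1.0, 0.0, 1.0, 0.0], [0.0, 1.0, 0.0, 1.0]]              # one row per class


def predict(x, ablated=frozenset()):
    """Forward pass; hidden units listed in `ablated` are clamped to zero."""
    hidden = []
    for i, w in enumerate(W_HIDDEN):
        pre = sum(wi * xi for wi, xi in zip(w, x))
        hidden.append(0.0 if i in ablated else max(0.0, pre))
    scores = [sum(wi * hi for wi, hi in zip(w, hidden)) for w in W_OUT]
    return scores.index(max(scores))  # ties resolve to the lowest class index


def accuracy(data, labels, ablated=frozenset()):
    """Fraction of examples classified correctly with the given units deleted."""
    hits = sum(predict(x, ablated) == y for x, y in zip(data, labels))
    return hits / len(data)


def random_ablation_study(data, labels, group_size, trials, seed=0):
    """Delete random groups of hidden units and record the accuracy each one costs."""
    rng = random.Random(seed)
    baseline = accuracy(data, labels)
    results = []
    for _ in range(trials):
        group = frozenset(rng.sample(range(len(W_HIDDEN)), group_size))
        drop = baseline - accuracy(data, labels, group)
        results.append((sorted(group), drop))
    return baseline, results


# Synthetic data for the sketch: class 0 when the first coordinate is at least the second.
_rng = random.Random(42)
DATA = [(_rng.random(), _rng.random()) for _ in range(200)]
LABELS = [0 if x0 >= x1 else 1 for x0, x1 in DATA]

if __name__ == "__main__":
    baseline, results = random_ablation_study(DATA, LABELS, group_size=2, trials=5)
    print("baseline accuracy:", baseline)
    for group, drop in results:
        print("deleted units", group, "-> accuracy drop", drop)
```

In this toy setup each class is served by two redundant hidden units, so deleting one of them costs nothing, while deleting both units that detect class 1 makes the network misclassify that class.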

When DeepMind evaluated the new technique on image recognition tasks, it produced some surprising results:

- Although many previous studies have focused on understanding easily interpretable individual neurons (e.g. “cat neurons”, or neurons in the hidden layers of deep networks which are only active in response to images of cats), DeepMind found that these interpretable neurons are no more important than confusing neurons with difficult-to-interpret activity.

- Networks which correctly classify unseen images are more resilient to neuron deletion than networks which can only classify images they have seen before. In other words, networks which generalize well are much less reliant on single directions than those which memorize.

Jennifer Aniston Neurons Are Not That Hot

Just as in neuroscience, deep learning models include many highly specialized nodes that resemble Jennifer Aniston neurons. Deep learning research has categorized many types of neurons based on their functionality. Google’s cat neurons and OpenAI’s sentiment neuron are among the most famous specialized neurons in deep learning models. Conventional wisdom has held that these specialized neurons matter more to a network than ordinary neurons. DeepMind’s research suggests otherwise.

Surprisingly, DeepMind’s neuron deletion experiments found that there was little relationship between selectivity and importance. In other words, specialized neurons were no more important than confusing neurons. This finding echoes recent work in neuroscience which has demonstrated that confusing neurons can actually be quite informative, and suggests that we must look beyond the most easily interpretable neurons in order to understand deep neural networks.
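To make the comparison between selectivity and importance concrete, here is a hedged sketch of how the two quantities could be measured and related. The article does not give formulas, so everything here is an assumption: `class_selectivity` uses one plausible index (how much a unit’s strongest class response exceeds its average response to the other classes), importance is taken to be the accuracy lost when the unit is deleted, and a plain Pearson correlation stands in for whatever statistic the researchers used. The numbers in the usage section are made up purely to exercise the functions.

```python
import math


def class_selectivity(class_means, eps=1e-12):
    """Selectivity in [0, 1] from a unit's non-negative mean activation per class.

    0 means the unit responds equally to every class; values near 1 mean it
    responds to a single class only.
    """
    top = max(class_means)
    rest = list(class_means)
    rest.remove(top)  # drop one occurrence of the strongest response
    mean_rest = sum(rest) / len(rest)
    return (top - mean_rest) / (top + mean_rest + eps)


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either input has no variance."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0


if __name__ == "__main__":
    # Made-up per-unit statistics, for illustration only.
    unit_class_means = [[0.9, 0.1, 0.0], [0.4, 0.5, 0.45], [0.2, 0.2, 0.8]]
    accuracy_drop_when_deleted = [0.02, 0.03, 0.01]
    selectivity = [class_selectivity(m) for m in unit_class_means]
    print("selectivity:", selectivity)
    print("correlation with importance:", pearson(selectivity, accuracy_drop_when_deleted))
```

A correlation near zero across many units would correspond to the finding reported above: knowing how selective a neuron is tells you little about how much the network depends on it.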

Generalization-Resiliency Correlation

The other big discovery by the DeepMind team concerns the correlation between a deep learning model’s ability to generalize and its resiliency. In experiments that deleted different groups of nodes in a neural network, DeepMind researchers found that networks which generalize well were much more robust to deletions than networks which simply memorized images seen during training. In other words, networks which generalize better are harder to break.
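One way to turn "harder to break" into a number is to delete more and more units and track how accuracy degrades. The sketch below is an illustration under stated assumptions, not the procedure from the paper: `ablation_curve` and `area_under_curve` are hypothetical helper names, and `robust_net` and `brittle_net` are stand-in evaluators with invented behavior that take the place of real trained networks.

```python
import random


def ablation_curve(evaluate, n_units, seed=0):
    """Accuracy after deleting 0, 1, ..., n_units units in one random order.

    `evaluate` is any callable that maps a frozenset of deleted unit indices
    to an accuracy in [0, 1].
    """
    order = list(range(n_units))
    random.Random(seed).shuffle(order)
    return [evaluate(frozenset(order[:k])) for k in range(n_units + 1)]


def area_under_curve(curve):
    """Mean accuracy across the curve; higher means more robust to deletion."""
    return sum(curve) / len(curve)


N_UNITS = 8


def robust_net(ablated):
    # Hypothetical network with redundancy: accuracy holds until most units are gone.
    return 1.0 if len(ablated) <= 6 else 0.5


def brittle_net(ablated):
    # Hypothetical network with no redundancy: every deleted unit costs accuracy.
    return 1.0 - 0.5 * len(ablated) / N_UNITS


if __name__ == "__main__":
    for name, net in [("robust", robust_net), ("brittle", brittle_net)]:
        curve = ablation_curve(net, N_UNITS)
        print(name, "curve:", curve, "area:", area_under_curve(curve))
```

With real models, `evaluate` would run the network on held-out data with the chosen units deleted; comparing the resulting areas is one simple way to check whether a better-generalizing network really degrades more gracefully.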

Original. Reposted with permission.
