Machine Learning Model Deployment

Read this article on machine learning model deployment using serverless deployment. Serverless compute abstracts away provisioning, managing servers, and configuring software, simplifying model deployment.

By Asha Ganesh, Data Scientist

What is serverless deployment

Serverless is the next step in cloud computing. The servers do not go away; they are simply hidden from the picture. In serverless computing, the separation of server and application is managed by a platform. The serverless provider is responsible for all the provisioning and configuration your application needs and handles it behind the scenes, so you can focus on the application or code being built or deployed.

Deploying a machine learning model is not exactly the same as deploying ordinary software. ML models need a constant stream of new data to keep working well, and they have to adjust to real-world changes such as new categories or new levels in the data. Deploying a model is just the beginning, because models often need to be retrained and have their performance checked. Serverless deployment can save time and effort every time a model is retrained, which is cool!

Models often perform worse in production than in development, and part of the solution has to be sought in deployment. Serverless deployment makes it easy to deploy ML models.

Prerequisites to understand serverless deployment

- Basic understanding of cloud computing

- Basic understanding of cloud functions

- Machine Learning

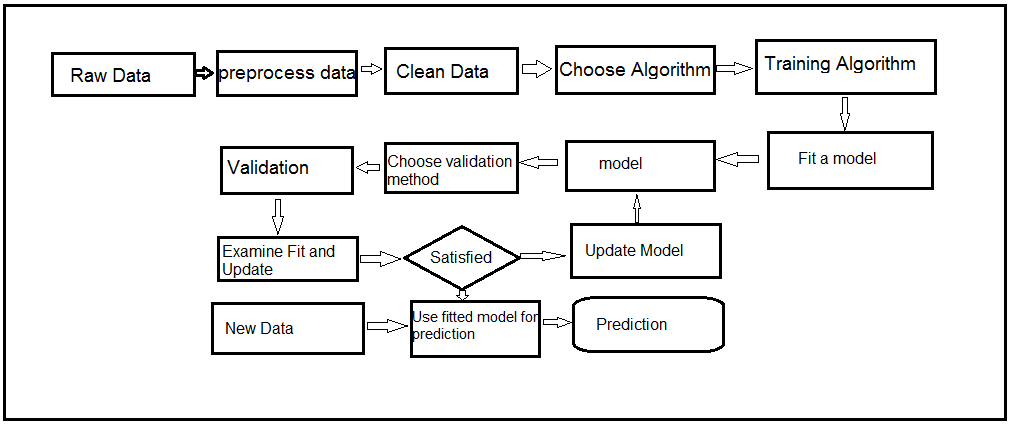

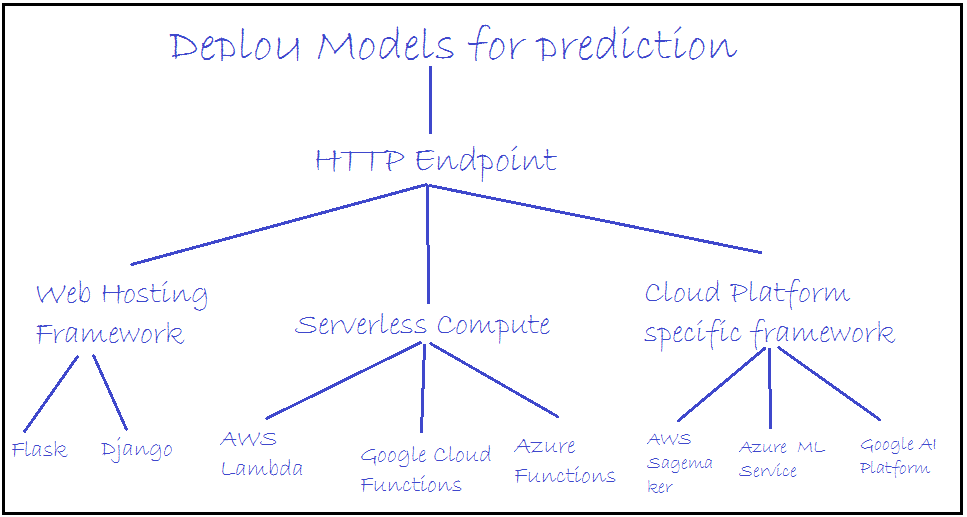

Deployment Models for prediction

We can deploy our ML model in 3 ways:

- Web hosting frameworks such as Flask and Django

- Serverless compute: AWS Lambda, Google Cloud Functions, Azure Functions

- Cloud platform specific frameworks such as AWS SageMaker, Google AI Platform, Azure Machine Learning

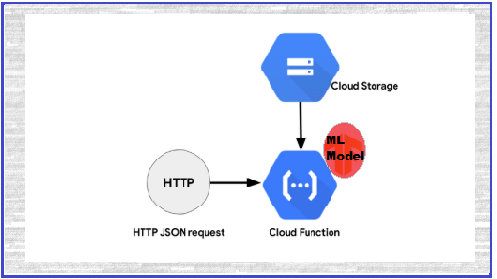

Serverless deployment architecture overview

Store the models in a Google Cloud Storage bucket, then write a Google Cloud Function in Python that retrieves a model from the bucket. By sending the function an HTTP request with a JSON body, we get predicted values for the given inputs.

Steps to start model deployment

1. About Data, code and models

We use the movie reviews dataset for sentiment analysis. The solution is in my GitHub repository, and the data and models are available in the same repository.

2. Create storage bucket

Executing the “ServerlessDeployment.ipynb” file produces 3 ML models: DecisionClassifier, LinearSVC, and Logistic Regression.

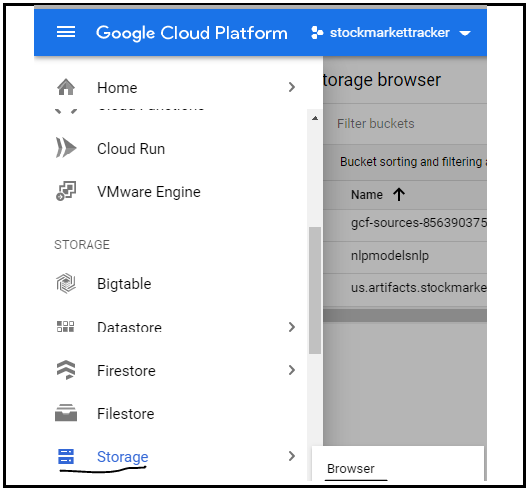

In the Storage section, click Browser to create a new bucket, as shown in the image:

3. Upload the models to the bucket

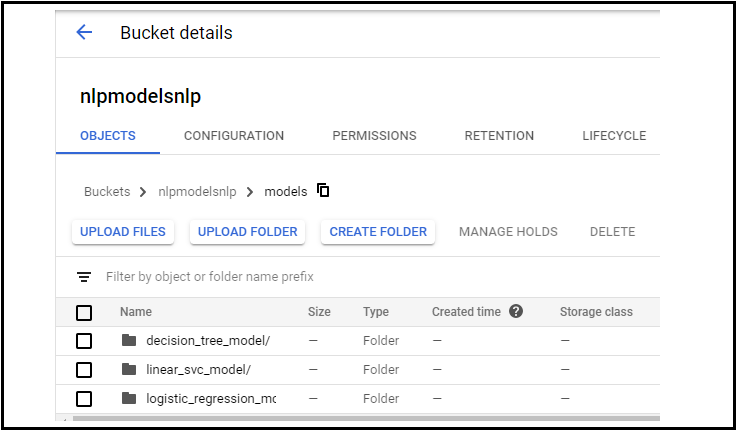

Create a new bucket, then create a folder in it and upload the 3 models, each into its own subfolder, as shown.

Here, models is my main folder name, and my subfolders are:

- decision_tree_model

- linear_svc_model

- logistic_regression_model
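The same layout can also be created from Python instead of the console. The following is a minimal sketch, assuming the google-cloud-storage client library is installed and credentials are configured. The bucket name my-model-bucket and the local directory layout are hypothetical; the folder names and the model.pkl file name follow this article. Cloud Storage has a flat namespace, so the "folders" are really prefixes in each object name.

```python
import os
import posixpath

# Hypothetical bucket name for illustration; replace with your own.
BUCKET_NAME = "my-model-bucket"
MAIN_FOLDER = "models"
SUB_FOLDERS = [
    "decision_tree_model",
    "linear_svc_model",
    "logistic_regression_model",
]


def blob_path(sub_folder, filename="model.pkl"):
    """Return the object name of a model file inside the bucket."""
    return posixpath.join(MAIN_FOLDER, sub_folder, filename)


def upload_models(local_dir="."):
    """Upload <local_dir>/<sub_folder>/model.pkl for every model (assumed local layout)."""
    # Imported lazily so blob_path can be used without the client library.
    from google.cloud import storage

    bucket = storage.Client().bucket(BUCKET_NAME)
    for sub_folder in SUB_FOLDERS:
        local_file = os.path.join(local_dir, sub_folder, "model.pkl")
        bucket.blob(blob_path(sub_folder)).upload_from_filename(local_file)
```

Calling upload_models() from the directory that holds the three subfolders would place each pickle under models/<subfolder>/model.pkl in the bucket.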

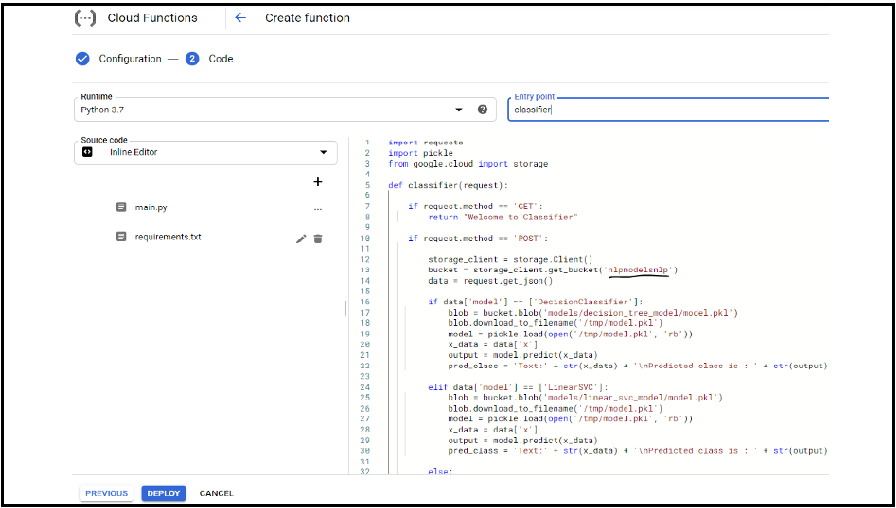

4. Create function

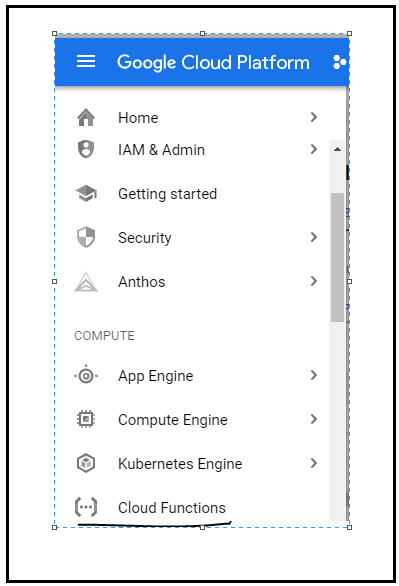

Then go to Google Cloud Functions and create a function. Select HTTP as the trigger type and Python as the language (other supported runtimes are also available):

5. Write cloud function in the editor

Check the cloud function in my repository. It imports the libraries needed to load models from the Google Cloud Storage bucket, along with the libraries for handling the HTTP request.

- The GET method is used to test the URL response, and the POST method is used to request predictions

- Delete the default template and paste in our code; pickle is used for deserializing our model

- google.cloud: gives access to our Cloud Storage bucket

- If the incoming request is GET, we simply return “welcome to classifier”

- If the incoming request is POST, we access the JSON data in the request body

- After reading the JSON, we instantiate the storage client object and access the models in the bucket; here we have 3 classification models in the bucket

- If the user specifies “DecisionClassifier”, we access the model from the corresponding folder, and likewise for the other models

- If the user does not specify any model, the default model is Logistic Regression

- The blob variable contains a reference to the model.pkl file for the correct model

- We download the .pkl file to the local machine where this cloud function is running. Every invocation might run on a different VM, and the only writable location on that VM is the /tmp folder, which is why we save our model.pkl file there

- We deserialize the model by invoking pkl.load, read the prediction instances from the incoming request, and call model.predict on the prediction data

- The response sent back from the serverless function contains the original text (the review that we want to classify) and the predicted class

- After main.py, write requirements.txt with the required libraries and versions
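The steps above can be sketched as a single main.py. This is a minimal illustration, not the code from the repository: the bucket name, the JSON field names model and instances, the model keys other than “DecisionClassifier”, and the fallback for unknown model names are all assumptions. It also assumes each pickle holds a complete pipeline that accepts raw review text.

```python
import json
import pickle

# Hypothetical names: replace with your own bucket and folder layout.
BUCKET_NAME = "my-model-bucket"
MODEL_FOLDERS = {
    "DecisionClassifier": "models/decision_tree_model",
    "LinearSVC": "models/linear_svc_model",
    "LogisticRegression": "models/logistic_regression_model",
}
DEFAULT_MODEL = "LogisticRegression"
LOCAL_PATH = "/tmp/model.pkl"  # /tmp is the writable directory in Cloud Functions


def load_model_from_bucket(model_name):
    """Download the pickled model for model_name and deserialize it."""
    # Imported lazily so the handler can be exercised without the client library.
    from google.cloud import storage

    bucket = storage.Client().bucket(BUCKET_NAME)
    blob = bucket.blob(MODEL_FOLDERS[model_name] + "/model.pkl")
    blob.download_to_filename(LOCAL_PATH)
    with open(LOCAL_PATH, "rb") as f:
        return pickle.load(f)


def classify(request, load_model=load_model_from_bucket):
    """HTTP entry point: GET returns a greeting, POST returns predictions."""
    if request.method == "GET":
        return "welcome to classifier"
    if request.method != "POST":
        return ("method not allowed", 405)

    data = request.get_json(silent=True) or {}
    # Assumed field names: "model" (optional) and "instances" (list of reviews).
    model_name = data.get("model", DEFAULT_MODEL)
    if model_name not in MODEL_FOLDERS:
        model_name = DEFAULT_MODEL  # sketch choice: fall back instead of failing
    instances = data.get("instances", [])

    model = load_model(model_name)
    predictions = model.predict(instances)

    body = {
        "model": model_name,
        "instances": instances,
        "predictions": [str(p) for p in predictions],
    }
    return (json.dumps(body), 200, {"Content-Type": "application/json"})
```

With this sketch, the function's entry point would be set to classify, and requirements.txt would list at least google-cloud-storage and scikit-learn, pinned to the versions used when the models were trained. The load_model parameter exists only so the handler can be tried locally with a stand-in model.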

6. Deploy the model

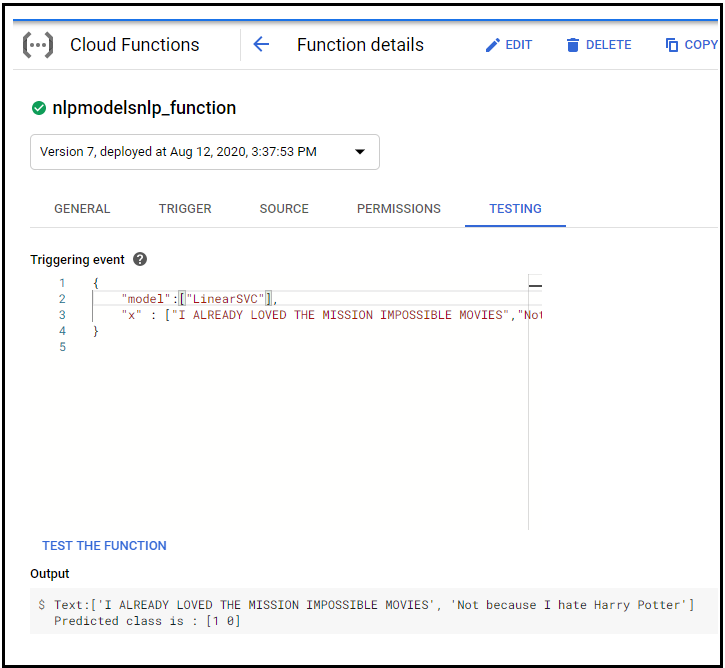

7. Test the model

Test the function with the other model.
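A test call from Python might look like the sketch below, which uses only the standard library. The URL is a placeholder for the trigger URL of your deployed function, and the JSON field names instances and model are assumptions; check the function in the repository for the names it actually expects.

```python
import json
import urllib.request

# Placeholder: use the trigger URL shown for your deployed function.
FUNCTION_URL = "https://REGION-PROJECT_ID.cloudfunctions.net/FUNCTION_NAME"


def build_request(reviews, model=None, url=FUNCTION_URL):
    """Build a POST request whose JSON body carries the reviews to classify."""
    payload = {"instances": list(reviews)}
    if model is not None:
        payload["model"] = model  # omit to use the function's default model
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def predict(reviews, model=None, url=FUNCTION_URL):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(build_request(reviews, model, url)) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, predict(["a great movie"], model="LinearSVC") would send one review to the function and ask for a specific model, while leaving model out relies on the function's default.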

I will follow up with complete UI details for this model deployment.

Code References:

My GitHub Repository: https://github.com/Asha-ai/ServerlessDeployment


Bio: Asha Ganesh is a data scientist.

Original. Reposted with permission.
