7 Steps to Mastering Retrieval-Augmented Generation

As language model applications evolved, they increasingly became one with so-called RAG architectures: learn 7 key steps deemed essential to mastering their successful development.

Image by Author

# Introduction

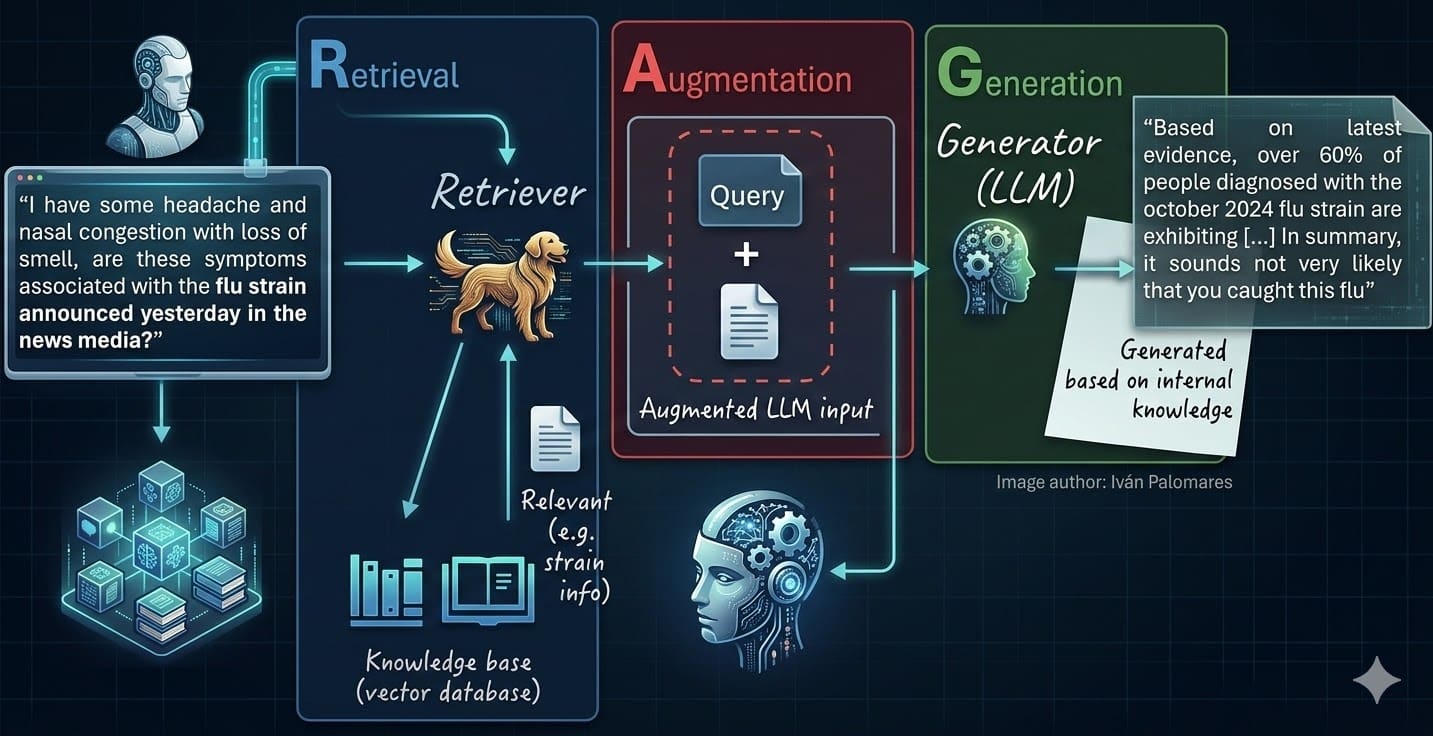

Retrieval-augmented generation (RAG) systems are, simply put, the natural evolution of standalone large language models (LLMs). RAG addresses several key limitations of classical LLMs, like model hallucinations or a lack of up-to-date, relevant knowledge needed to generate grounded, fact-based responses to user queries.

In a related article series, Understanding RAG, we provided a comprehensive overview of RAG systems, their characteristics, practical considerations, and challenges. Now we synthesize part of those lessons and combine them with the latest trends and techniques to describe seven key steps deemed essential to mastering the development of RAG systems.

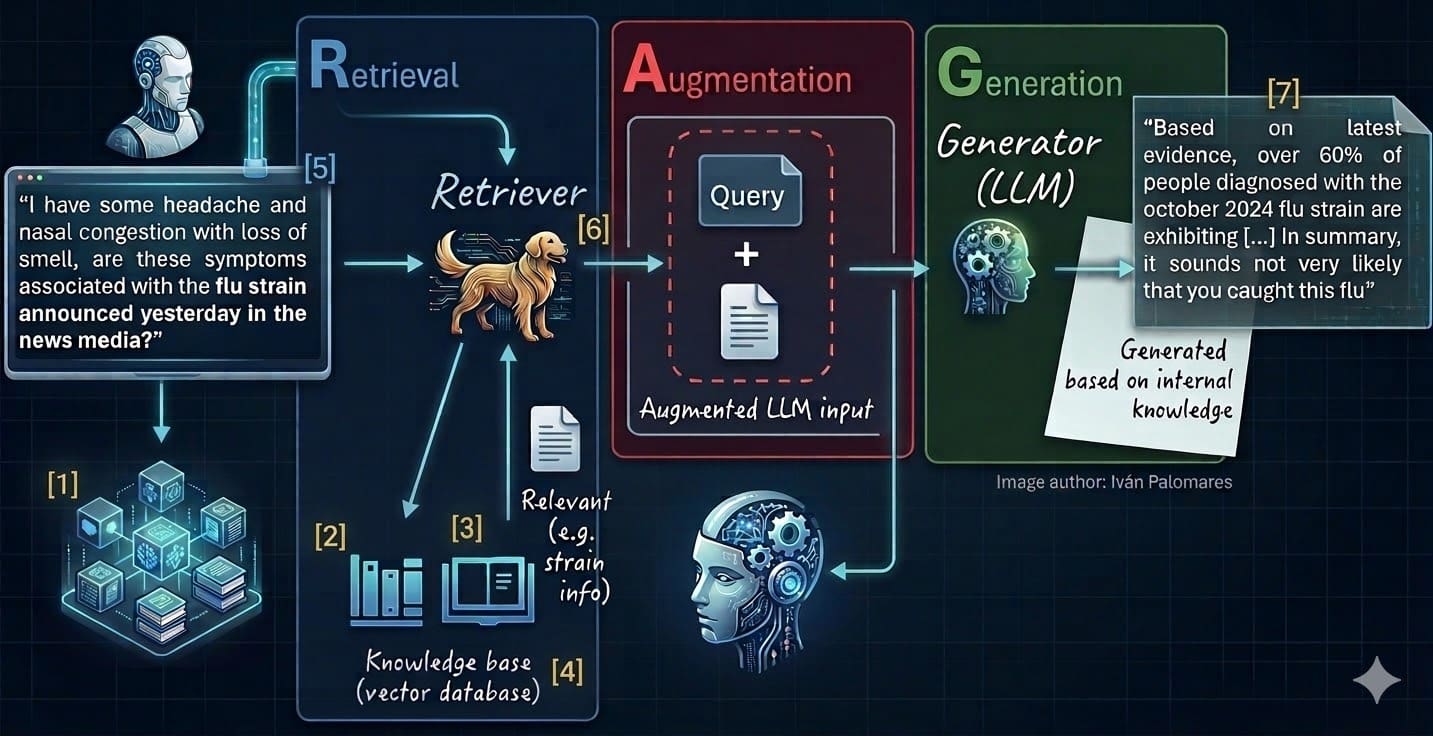

These seven steps are related to different stages or components of a RAG environment, as shown in the numeric labels ([1] to [7]) in the diagram below, which illustrates a classical RAG architecture:

7 Steps to Mastering RAG Systems (see numbered labels 1-7 and list below)

- Select and clean data sources

- Chunking and splitting

- Embedding/vectorization

- Populate vector databases

- Query vectorization

- Retrieve relevant context

- Generate a grounded answer

# 1. Selecting and Cleaning Data Sources

The "garbage in, garbage out" principle takes its maximum significance in RAG. Its value is directly proportional to the relevance, quality, and cleanliness of the source text data it can retrieve. To ensure high-quality knowledge bases, identify high-value data silos and periodically audit your bases. Before ingesting raw data, perform an effective cleaning process through robust pipelines that apply critical steps like removing personally identifiable information (PII), eliminating duplicates, and addressing other noisy elements. This is a continuous engineering process to be applied every time new data is incorporated.

You can read through this article to get an overview of data cleaning techniques.

# 2. Chunking and Splitting Documents

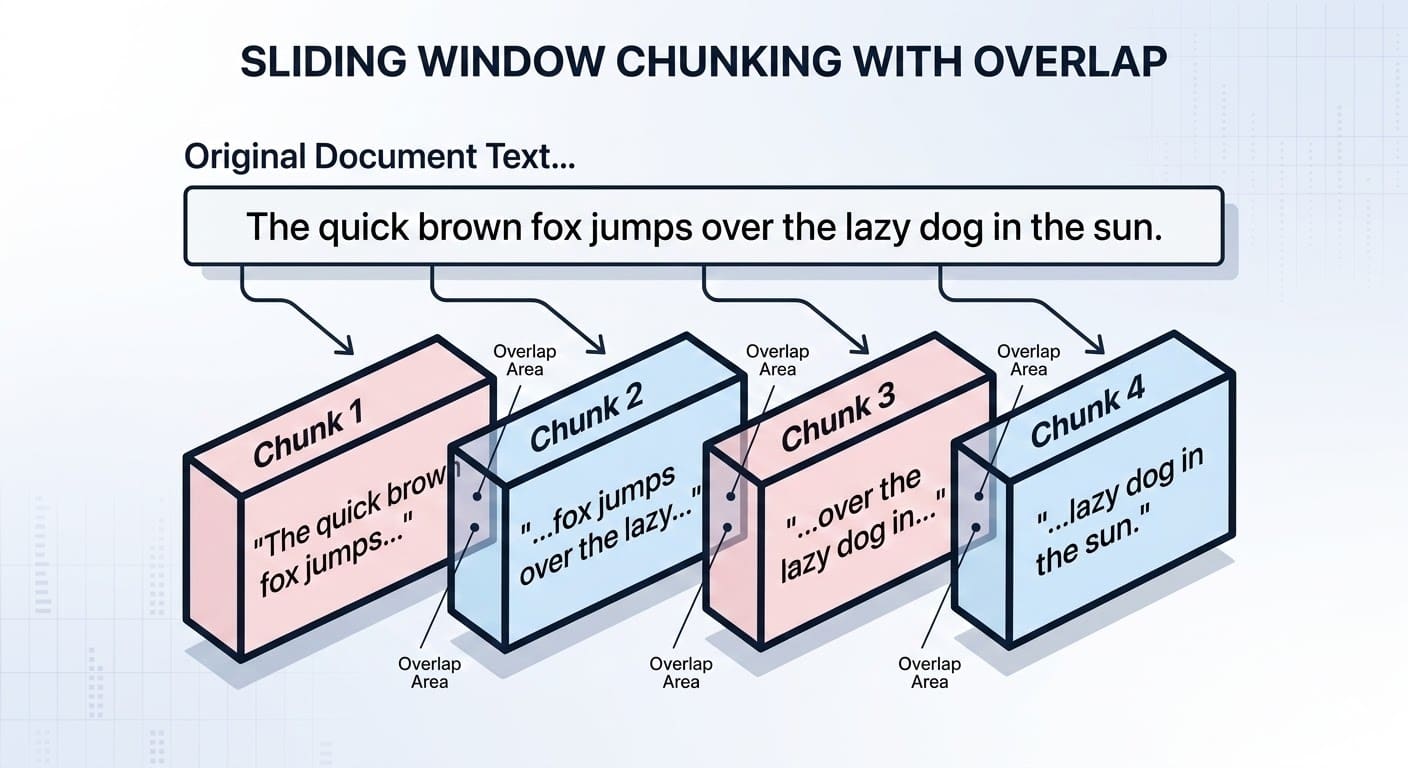

Many instances of text data or documents, like literature novels or PhD theses, are too large to be embedded as a single data instance or unit. Chunking consists of splitting long texts into smaller parts that retain semantic significance and keep contextual integrity. It requires a careful approach: not too many chunks (incurring possible loss of context), but not too few either — oversized chunks affect semantic search later on!

There are diverse chunking approaches: from those based on character count to those driven by logical boundaries like paragraphs or sections. LlamaIndex and LangChain, with their associated Python libraries, can certainly help with this task by implementing more advanced splitting mechanisms.

Chunking may also consider overlap among parts of the document to preserve consistency in the retrieval process. For the sake of illustration, this is what such chunking may look like over a small, toy-sized text:

Chunking documents in RAG systems with overlap | Image by Author

In this installment of the RAG series, you can also learn the extra role of document chunking processes in managing the context size of RAG inputs.

# 3. Embedding and Vectorizing Documents

Once documents are chunked, the next step before having them securely stored in the knowledge base is to translate them into "the language of machines": numbers. This is typically done by converting each text into a vector embedding — a dense, high-dimensional numeric representation that captures semantic characteristics of the text. In recent years, specialized LLMs to do this task have been built: they are called embedding models and include well-known open-source options like Hugging Face's all-MiniLM-L6-v2.

Learn more about embeddings and their advantages over classical text representation approaches in this article.

# 4. Populating the Vector Database

Unlike traditional relational databases, vector databases are designed to effectively enable the search process through high-dimensional arrays (embeddings) that represent text documents — a critical stage of RAG systems for retrieving relevant documents to the user's query. Both open-source vector stores like FAISS or freemium alternatives like Pinecone exist, and can provide excellent solutions, thereby bridging the gap between human-readable text and math-like vector representations.

This code excerpt is used to split text (see point 2 earlier) and populate a local, free vector database using LangChain and Chroma — assuming we have a long document to store in a file called knowledge_base.txt:

from langchain_community.document_loaders import TextLoader

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.embeddings import HuggingFaceEmbeddings

from langchain_community.vectorstores import Chroma

# Load and chunk the data

docs = TextLoader("knowledge_base.txt").load()

chunks = RecursiveCharacterTextSplitter(chunk_size=500, chunk_overlap=50).split_documents(docs)

# Create text embeddings using a free open-source model and store in ChromaDB

embedding_model = HuggingFaceEmbeddings(model_name="all-MiniLM-L6-v2")

vector_db = Chroma.from_documents(documents=chunks, embedding=embedding_model, persist_directory="./db")

print(f"Successfully stored {len(chunks)} embedded chunks.")

Read more about vector databases here.

# 5. Vectorizing Queries

User prompts expressed in natural language are not directly matched to stored document vectors: they must be translated too, using the same embedding mechanism or model (see step 3). In other words, a single query vector is built and compared against the vectors stored in the knowledge base to retrieve, based on similarity metrics, the most relevant or similar documents.

Some advanced approaches for query vectorization and optimization are explained in this part of the Understanding RAG series.

# 6. Retrieving Relevant Context

Once your query is vectorized, the RAG system's retriever performs a similarity-based search to find the closest matching vectors (document chunks). While traditional top-k approaches often work, advanced methods like fusion retrieval and reranking can be used to optimize how retrieved results are processed and integrated as part of the final, enriched prompt for the LLM.

Check out this related article for more about these advanced mechanisms. Likewise, managing context windows is another important process to apply when LLM capabilities to handle very large inputs are limited.

# 7. Generating Grounded Answers

Finally, the LLM comes into the scene, takes the augmented user's query with retrieved context, and is instructed to answer the user's question using that context. In a properly designed RAG architecture, by following the previous six steps, this usually leads to more accurate, defensible responses that may even include citations to our own data used to build the knowledge base.

At this point, evaluating the quality of the response is vital to measure how the overall RAG system behaves, and signaling when the model may need fine-tuning. Evaluation frameworks for this end have been established.

# Conclusion

RAG systems or architectures have become an almost indispensable aspect of LLM-based applications, and commercial, large-scale ones rarely miss them nowadays. RAG makes LLM applications more reliable and knowledge-intensive, and they help these models generate grounded responses based on evidence, sometimes predicated on privately owned data in organizations.

This article summarizes seven key steps to mastering the process of constructing RAG systems. Once you have this fundamental knowledge and skills down, you will be in a good position to develop enhanced LLM applications that unlock enterprise-grade performance, accuracy, and transparency — something not possible with well-known models used on the Internet.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.