Introducing Gen: MIT’s New Language That Wants to be the TensorFlow of Programmable Inference

Researchers from MIT recently unveiled Gen, a new probabilistic programming language that allows researchers to write models and algorithms for multiple fields where AI techniques are applied, without having to deal with equations or manually write high-performance code.

The field of probabilistic programming languages (PPLs) is experiencing a renaissance, carried by the rapid growth of machine learning technologies. In just a few years, PPLs have gone from an obscure area of statistical research to more than a dozen active open source initiatives. Recently, researchers from the Massachusetts Institute of Technology (MIT) unveiled a new probabilistic programming language named Gen. The new language allows researchers to write models and algorithms for multiple fields where AI techniques are applied (such as computer vision, robotics, and statistics) without having to deal with equations or manually write high-performance code.

PPLs are a regular component of machine learning pipelines, but implementing them remains challenging. While there has been a notable increase in the number of PPLs available, most of them remain confined to research efforts and are impractical for general-purpose applications. A similar challenge was experienced in the deep learning space until Google open sourced TensorFlow in 2015. With TensorFlow, developers were able to build sophisticated yet efficient deep learning models using a consistent framework. In some sense, Gen is looking to do for probabilistic programming what TensorFlow did for deep learning. To do that, however, Gen needs to strike a fine balance between two key characteristics of PPLs: expressiveness and efficiency.

Expressiveness vs. Efficiency

The biggest challenge of modern PPLs is balancing modeling expressiveness against inference efficiency. Many PPLs are syntactically rich enough to represent almost any model, but they tend to support a limited set of inference algorithms that converge prohibitively slowly. Other PPLs are rich in inference algorithms but remain constrained to specific domains, making them impractical for general-purpose applications.

Expanding on the expressiveness-efficiency friction, a general-purpose PPL should deliver two fundamental kinds of efficiency:

1) Inference Algorithm Efficiency: A general-purpose PPL should allow developers to create custom and highly sophisticated models without sacrificing the performance of the underlying components. The more expressive the PPL syntax is, the more challenging the optimization process will be.

2) Implementation Efficiency: A general-purpose PPL requires a system for running inference algorithms, and the efficiency of that system depends on more than the algorithm itself. Implementation efficiency is determined by factors such as the data structures used to store algorithm state, and whether the system exploits caching and incremental computation.
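To make the caching and incremental computation point concrete, here is a minimal sketch in plain Julia. It is not how Gen is implemented; the names `CachedScore`, `build_cache`, and `update!` are hypothetical, and the model (independent Bernoulli observations, each with its own probability) is chosen only because its score is a sum of per-observation terms. When one parameter changes, the cached total can be patched with a single term instead of recomputing the whole sum.

```julia
# Log-density of one Bernoulli observation x given success probability p.
bernoulli_logpdf(x::Bool, p::Float64) = x ? log(p) : log(1 - p)

# Naive scoring: recompute every term, O(n) work per change.
full_score(xs, ps) = sum(bernoulli_logpdf(x, p) for (x, p) in zip(xs, ps))

# Hypothetical algorithm state: cached per-observation terms plus their total.
mutable struct CachedScore
    terms::Vector{Float64}
    total::Float64
end

# Compute every term once and remember it.
function build_cache(xs, ps)
    terms = [bernoulli_logpdf(x, p) for (x, p) in zip(xs, ps)]
    return CachedScore(terms, sum(terms))
end

# Incremental update: when only the i-th probability changes, touch one term.
function update!(cache::CachedScore, xs, i::Int, p_new::Float64)
    new_term = bernoulli_logpdf(xs[i], p_new)
    cache.total += new_term - cache.terms[i]
    cache.terms[i] = new_term
    return cache.total
end

xs = [true, false, true, true]
ps = [0.5, 0.2, 0.9, 0.7]
cache = build_cache(xs, ps)

ps[2] = 0.4                                  # a proposal changes one parameter
incremental = update!(cache, xs, 2, ps[2])   # O(1) patch of the cached total
println(isapprox(incremental, full_score(xs, ps)))  # compare with the O(n) recomputation
```

The choice of data structure is what makes the cheap update possible: storing only the total would force a full recomputation on every proposal.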

Gen

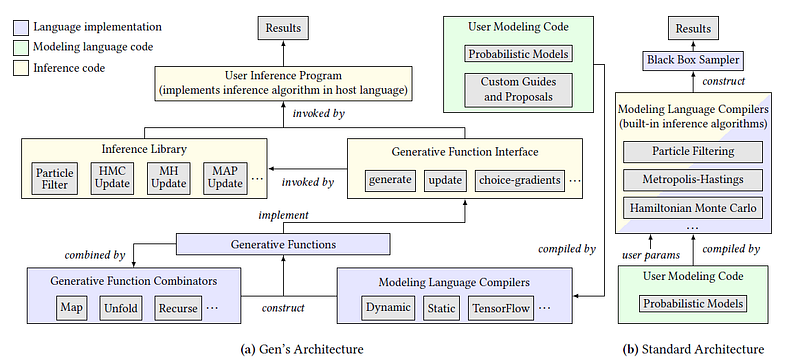

Gen addresses some of the challenges mentioned in the previous section with a novel architecture that improves upon some of the traditional PPL techniques. Built on the Julia programming language, Gen represents models not as program code in a Turing-complete modeling language, but as black boxes that expose capabilities useful for inference via a common interface. These black boxes are called generative functions. Around them, Gen provides the following:

I. Tools for Constructing Models: Gen provides multiple interoperable modeling languages, each striking a different flexibility/efficiency trade-off. A single model can combine code from multiple modeling languages. The resulting generative functions leverage data structures well-suited to the model as well as incremental computation.

II. Tools for Tailoring Inference: Gen provides a high-level library for inference programming, which implements inference algorithm building blocks that interact with models only through generative functions.

III. Evaluation: Gen comes with an empirical evaluation of its performance against alternatives across well-known inference problems.

The figure in this section illustrates the Gen architecture. As you can see, the framework supports different inference algorithms as well as a layer of abstraction based on the concept of generative functions.
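As a rough illustration of inference programming on top of generative functions, the sketch below combines two building blocks from Gen's inference library as I understand it, `importance_resampling` and `mh`. Everything else is an assumption made for the example: the `coin_model` function, the `infer_bias` helper, and the data are hypothetical and do not come from the Gen authors. The `@gen` and `@trace` syntax is introduced in the next section.

```julia
using Gen

# Hypothetical model: an unknown coin bias at address :p, then n flips that depend on it.
@gen function coin_model(n::Int)
    p = @trace(uniform(0.0, 1.0), :p)
    for i in 1:n
        @trace(bernoulli(p), (:flip, i))
    end
    return p
end

# Hypothetical helper that strings two inference building blocks together.
function infer_bias(flips::Vector{Bool}; particles::Int=1000, mh_steps::Int=100)
    # Observations are a choice map from addresses to the values they must take.
    observations = Gen.choicemap()
    for (i, x) in enumerate(flips)
        observations[(:flip, i)] = x
    end

    # Building block 1: importance resampling with proposals drawn from the prior.
    trace, _ = Gen.importance_resampling(
        coin_model, (length(flips),), observations, particles)

    # Building block 2: Metropolis-Hastings moves that re-propose only :p.
    for _ in 1:mh_steps
        trace, _ = Gen.mh(trace, Gen.select(:p))
    end
    return trace
end

# Made-up data: 8 heads out of 10 flips.
flips = [true, true, false, true, true, true, false, true, true, true]
trace = infer_bias(flips)
println("approximate posterior sample of p: ", trace[:p])
```

Neither building block looks inside `coin_model`; both work through the trace and choice-map operations that every generative function exposes, which is why the same inference code can be reused with a different model.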

Using Gen

Getting started with Gen is simple. The language can be installed using the Julia package manager.

pkg> add https://github.com/probcomp/Gen

Authoring a generative function in Gen is as simple as writing a Julia function with a few extensions.

    @gen function foo(prob::Float64)
        z1 = @trace(bernoulli(prob), :a)
        z2 = @trace(bernoulli(prob), :b)
        return z1 || z2
    end
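A short sketch of how such a function might be run and inspected follows. The calls `simulate`, `get_choices`, `get_retval`, and `get_score` are not mentioned in the article; they are taken from Gen's documented trace interface as I understand it, so treat the snippet as an illustration under that assumption. The function `foo` is repeated so the snippet is self-contained.

```julia
using Gen

# Same generative function as above, repeated so the snippet stands alone.
@gen function foo(prob::Float64)
    z1 = @trace(bernoulli(prob), :a)
    z2 = @trace(bernoulli(prob), :b)
    return z1 || z2
end

# Run the function once; arguments are passed as a tuple.
trace = Gen.simulate(foo, (0.3,))

# The trace records every random choice under the address given to @trace.
choices = Gen.get_choices(trace)
a, b = choices[:a], choices[:b]
println("a = ", a, ", b = ", b, ", return value = ", Gen.get_retval(trace))

# The score is the log probability of the sampled choices.
println("log probability of these choices: ", Gen.get_score(trace))
```

The addresses `:a` and `:b` are what later allow inference code to refer to individual random choices, for example to constrain them to observed values.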

Gen also includes a visualization framework that lets you plot inference models and evaluate their efficiency.

    # Assumption: VizServer, Viz, and openInBrowser come from the GenViz package,
    # and xs, ys are vectors holding the observed data points.

    # Start a visualization server on port 8000
    server = VizServer(8000)

    # Initialize a visualization with some parameters
    viz = Viz(server, joinpath(@__DIR__, "vue/dist"),
              Dict("xs" => xs, "ys" => ys, "num" => length(xs),
                   "xlim" => [minimum(xs), maximum(xs)],
                   "ylim" => [minimum(ys), maximum(ys)]))

    # Open the visualization in a browser
    openInBrowser(viz)

The Competition

Gen is not the only language trying to address the challenges of programmable inference. In recent years, the PPL space has exploded, producing a number of strong alternatives:

Edward is a Turing-complete probabilistic programming language (PPL) written in Python. Edward was originally championed by the Google Brain team but now has an extensive list of contributors. The original Edward research paper was published in March 2017, and since then the stack has seen a lot of adoption within the machine learning community. Edward fuses three fields: Bayesian statistics and machine learning, deep learning, and probabilistic programming. The library integrates seamlessly with deep learning frameworks such as Keras and TensorFlow.

Pyro is a deep probabilistic programming language (PPL) released by Uber AI Labs. Pyro is built on top of PyTorch and is based on four fundamental principles:

- Universal: Pyro is a universal PPL — it can represent any computable probability distribution. How? By starting from a universal language with iteration and recursion (arbitrary Python code), and then adding random sampling, observation, and inference.

- Scalable: Pyro scales to large data sets with little overhead above hand-written code. How? By building modern black box optimization techniques, which use mini-batches of data, to approximate inference.

- Minimal: Pyro is agile and maintainable. How? Pyro is implemented with a small core of powerful, composable abstractions. Wherever possible, the heavy lifting is delegated to PyTorch and other libraries.

- Flexible: Pyro aims for automation when you want it and control when you need it. How? Pyro uses high-level abstractions to express generative and inference models, while allowing experts to easily customize inference.

Microsoft recently open sourced Infer.NET, a framework that simplifies probabilistic programming for .NET developers. Microsoft Research has been working on Infer.NET since 2004, but only recently, with the emergence of deep learning, has the framework become really popular. Infer.NET provides some strong differentiators that make it a compelling choice for developers venturing into the deep PPL space.

Gen is one of the newest but also one of the most interesting additions to the PPL space. The combination of statistics and deep learning is a key element of the future of the artificial intelligence space. Efforts like Gen are attempting to democratize PPLs in the same way TensorFlow did for deep learning.

Bio: Jesus Rodriguez is a technology expert, executive investor, and startup advisor. A software scientist by background, Jesus is an internationally recognized speaker and author with contributions that include hundreds of articles and presentations at industry conferences.

Original. Reposted with permission.
