Bayesian Hyperparameter Optimization with tune-sklearn in PyCaret

PyCaret, a low-code Python ML library, offers several ways to tune the hyperparameters of a created model. In this post, I'd like to show how Ray Tune is integrated with PyCaret, and how easy it is to leverage its algorithms and distributed computing to achieve results superior to the default random search method.

By Antoni Baum, Core Contributor to PyCaret and Contributor to Ray Tune

Here’s a situation every PyCaret user is familiar with: after selecting a promising model or two from compare_models(), it’s time to tune their hyperparameters with tune_model() to squeeze out all of their potential.

```python
from pycaret.datasets import get_data
from pycaret.classification import *

data = get_data("juice")

exp = setup(
    data,
    target="Purchase",
)

best_model = compare_models()
tuned_best_model = tune_model(best_model)
```

(If you would like to learn more about PyCaret, an open-source, low-code machine learning library in Python, this guide is a good place to start.)

By default, tune_model() uses the tried-and-tested RandomizedSearchCV from scikit-learn. However, not everyone knows about the various advanced options tune_model() provides.

In this post, I will show you how easy it is to use other state-of-the-art algorithms with PyCaret thanks to tune-sklearn, a drop-in replacement for scikit-learn’s model selection module with cutting-edge hyperparameter tuning techniques. I’ll also report results from a series of benchmarks showing how tune-sklearn can easily improve model performance.

Random search vs Bayesian optimization

Hyperparameter optimization algorithms can vary greatly in efficiency.

Random search has been a machine learning staple, and for good reason: it’s easy to implement and understand, and it gives good results in reasonable time. However, as the name implies, it is completely random, so a lot of time can be spent evaluating bad configurations. Since the number of iterations is limited, it would make sense for the optimization algorithm to focus on configurations it considers promising, taking into account the configurations it has already evaluated.

That is, in essence, the idea of Bayesian optimization (BO). BO algorithms keep track of all evaluations and use the data to construct a “surrogate probability model”, which can be evaluated much faster than an ML model. The more configurations have been evaluated, the more informed the algorithm becomes, and the closer the surrogate model gets to the actual objective function. That way, the algorithm can make an informed choice about which configurations to evaluate next, instead of merely sampling random ones. If you would like to learn more about Bayesian optimization, check out this excellent article by Will Koehrsen.
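To make the contrast concrete, here is a minimal, self-contained sketch in plain Python (no ML libraries) that tunes one hypothetical hyperparameter, scaled to [0, 1], on a toy objective. random_search draws every point independently, while surrogate_guided_search follows the loop described above: fit a cheap surrogate to the evaluations so far, score many candidates on it, and spend a real evaluation only on the most promising one. The surrogate here is a kernel-weighted average with a distance-based exploration bonus, chosen for brevity. It is not a full probabilistic surrogate: libraries such as Hyperopt and Optuna typically use the Tree-structured Parzen Estimator (TPE). Treat it as an illustration of the loop, not as a real BO implementation. All function names and constants are assumptions made for this example.

```python
import math
import random


def objective(x):
    """Toy 'expensive' validation loss over one hyperparameter in [0, 1] (hypothetical)."""
    return (x - 0.3) ** 2 + 0.05 * math.sin(15 * x)


def random_search(n_iter, seed=0):
    rng = random.Random(seed)
    history = []
    for _ in range(n_iter):
        x = rng.random()  # drawn independently of everything evaluated so far
        history.append((x, objective(x)))
    return history


def surrogate(x, observed, bandwidth=0.1):
    """Cheap stand-in model: Gaussian-kernel-weighted average of the observed losses."""
    weights = [math.exp(-(((x - xi) / bandwidth) ** 2)) for xi, _ in observed]
    total = sum(weights)
    if total < 1e-12:  # far from every evaluated point: fall back to the overall mean
        return sum(loss for _, loss in observed) / len(observed)
    return sum(w * loss for w, (_, loss) in zip(weights, observed)) / total


def acquisition(x, observed, kappa=0.5):
    """Lower is better: predicted loss minus a bonus for unexplored regions."""
    distance = min(abs(x - xi) for xi, _ in observed)
    return surrogate(x, observed) - kappa * distance


def surrogate_guided_search(n_iter, n_initial=5, n_candidates=200, seed=0):
    rng = random.Random(seed)
    # Warm-up: a few random evaluations give the surrogate something to learn from.
    observed = []
    for _ in range(n_initial):
        x = rng.random()
        observed.append((x, objective(x)))
    for _ in range(n_iter - n_initial):
        # Scoring candidates on the surrogate is cheap; only the chosen one is truly evaluated.
        candidates = [rng.random() for _ in range(n_candidates)]
        x_next = min(candidates, key=lambda c: acquisition(c, observed))
        observed.append((x_next, objective(x_next)))
    return observed


def best(history):
    return min(history, key=lambda pair: pair[1])


for name, history in [("random", random_search(20)), ("surrogate", surrogate_guided_search(20))]:
    x, loss = best(history)
    print(f"{name:>9}: best x={x:.3f}, loss={loss:.4f}")
```

Both searches get the same budget of 20 real evaluations; the difference is only in how the next point is chosen.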

Fortunately, PyCaret has built-in wrappers for several optimization libraries, and in this article, we’ll be focusing on tune-sklearn.

tune-sklearn in PyCaret

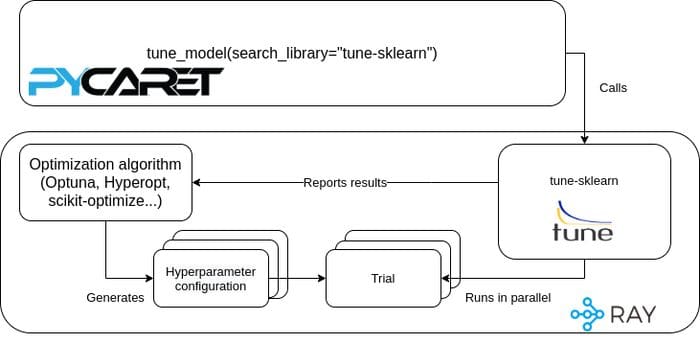

tune-sklearn is a drop-in replacement for scikit-learn’s model selection module. It provides a unified, scikit-learn-based API that gives you access to various popular state-of-the-art optimization algorithms and libraries, including Optuna and scikit-optimize. This unified API allows you to toggle between many different hyperparameter optimization libraries with just a single parameter.
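The idea of one API with a switchable search backend can be sketched in a few lines of plain Python. This is not tune-sklearn’s code: unified_search, the STRATEGIES registry, the two toy strategies and toy_loss are all hypothetical, and the parameter name search_optimization merely mirrors the argument that tune-sklearn’s TuneSearchCV uses for this purpose. Supporting another algorithm means registering one more strategy, while callers only change a string.

```python
import itertools
import random


def random_strategy(space, n_trials, rng):
    """Draw each hyperparameter independently from its candidate values."""
    for _ in range(n_trials):
        yield {name: rng.choice(values) for name, values in space.items()}


def grid_strategy(space, n_trials, rng):
    """Walk the Cartesian product in order, stopping after n_trials configurations."""
    names = list(space)
    for combo in itertools.islice(itertools.product(*space.values()), n_trials):
        yield dict(zip(names, combo))


# Hypothetical registry: supporting a new algorithm means adding one entry here.
STRATEGIES = {"random": random_strategy, "grid": grid_strategy}


def unified_search(objective, space, search_optimization="random", n_trials=10, seed=0):
    """Single entry point; the search algorithm is selected by one string parameter."""
    strategy = STRATEGIES[search_optimization]
    rng = random.Random(seed)
    results = [(params, objective(params)) for params in strategy(space, n_trials, rng)]
    return min(results, key=lambda pair: pair[1])


space = {"max_depth": [2, 4, 6, 8], "min_samples_leaf": [1, 5, 10]}


def toy_loss(params):
    """Hypothetical validation loss, lowest at max_depth=6, min_samples_leaf=1."""
    return abs(params["max_depth"] - 6) + params["min_samples_leaf"] / 10


for algorithm in ("random", "grid"):
    print(algorithm, unified_search(toy_loss, space, search_optimization=algorithm, n_trials=12))
```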

tune-sklearn is powered by Ray Tune, a Python library for experiment execution and hyperparameter tuning at any scale. This means that you can scale out your tuning across multiple machines without changing your code.
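Trials can be spread across workers because each trial (one hyperparameter configuration, fitted and scored) does not depend on the others in the same batch. As a local, single-machine analogue only, the sketch below fans a batch of trials out to a thread pool using Python’s standard concurrent.futures module. evaluate_trial and its toy loss are hypothetical stand-ins for a model fit, and none of this is Ray’s API. Threads keep the example portable, but CPU-bound pure-Python work is limited by the GIL, which is one reason a framework like Ray, which runs trials in separate worker processes and on other machines, is the practical choice at scale.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def evaluate_trial(params):
    """Stand-in for fitting and cross-validating one model (hypothetical toy loss)."""
    return params, (params["alpha"] - 0.2) ** 2 + 0.01 * params["l1_ratio"]


def run_trials_in_parallel(configs, max_workers=4):
    # Independent trials are handed to a pool of workers; pool.map keeps input order.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(evaluate_trial, configs))


rng = random.Random(42)
configs = [{"alpha": rng.uniform(0, 1), "l1_ratio": rng.uniform(0, 1)} for _ in range(20)]
results = run_trials_in_parallel(configs)
best_params, best_loss = min(results, key=lambda pair: pair[1])
print(best_params, round(best_loss, 4))
```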

To make things even simpler, as of version 2.2.0, tune-sklearn has been integrated into PyCaret. You can simply run pip install "pycaret[full]" and all of the optional dependencies will be taken care of.

How it all works together

```python
!pip install "pycaret[full]"

from pycaret.datasets import get_data
from pycaret.classification import *

data = get_data("juice")

exp = setup(
    data,
    target="Purchase",
)

best_model = compare_models()

# Bayesian optimization with Hyperopt, via tune-sklearn
tuned_best_model_hyperopt = tune_model(
    best_model,
    search_library="tune-sklearn",
    search_algorithm="hyperopt",
    n_iter=20,
)

# Bayesian optimization with Optuna, via tune-sklearn
tuned_best_model_optuna = tune_model(
    best_model,
    search_library="tune-sklearn",
    search_algorithm="optuna",
    n_iter=20,
)
```

Just by adding two arguments to tune_model(), you can switch from random search to tune-sklearn-powered Bayesian optimization through Hyperopt or Optuna. Remember that PyCaret has built-in search spaces for all of the included models, but you can always pass your own if you wish.
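To supply your own search space, tune_model() accepts one through its custom_grid parameter, as a dictionary mapping parameter names to candidate values. PyCaret’s documentation notes that a custom grid must be in a format the chosen search_library supports, so the plain lists below assume the default scikit-learn random search; check the documentation before reusing them with tune-sklearn. The grid values are hypothetical, and the PyCaret call is left as a comment so the snippet runs without PyCaret installed. The runnable part only counts how many configurations the grid contains, which shows what small fraction of it a 20-iteration budget actually samples.

```python
import math

# Hypothetical custom search space for a random forest:
# {parameter name: list of candidate values}.
custom_grid = {
    "n_estimators": [100, 200, 300, 500],
    "max_depth": [4, 6, 8, 10, None],
    "min_samples_leaf": [1, 2, 5],
    "max_features": ["sqrt", "log2", 1.0],
}


def grid_size(grid):
    """Number of distinct configurations in a discrete search space."""
    return math.prod(len(values) for values in grid.values())


n_iter = 20
total = grid_size(custom_grid)
print(f"{total} configurations; n_iter={n_iter} samples at most {n_iter / total:.1%} of them")

# With PyCaret installed and a model `rf` created, the grid would be passed like this:
# tuned_rf = tune_model(rf, custom_grid=custom_grid, n_iter=n_iter)
```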

But how well do they compare to random search?

A simple experiment

In order to see how Bayesian optimization stacks up against random search, I conducted a very simple experiment. Using the Kaggle House Prices dataset, I created two popular regression models with PyCaret: Random Forest and Elastic Net. Then I tuned both of them using scikit-learn’s random search and tune-sklearn’s Hyperopt and Optuna searchers (20 iterations for each, minimizing RMSLE). The process was repeated three times with different seeds, and the results were averaged. Below is an abridged version of the code; you can find the full code here.

```python
from pycaret.datasets import get_data
from pycaret.regression import *

data = get_data("house")

exp = setup(
    data,
    target="SalePrice",
    test_data=data,  # so that the entire dataset is used for cross validation - do not normally do this!
    session_id=42,
    fold=5,
)

rf = create_model("rf")
en = create_model("en")

# Random Forest: random search vs. Hyperopt vs. Optuna, all minimizing RMSLE
tune_model(rf, search_library="scikit-learn", optimize="RMSLE", n_iter=20)
tune_model(rf, search_library="tune-sklearn", search_algorithm="hyperopt", optimize="RMSLE", n_iter=20)
tune_model(rf, search_library="tune-sklearn", search_algorithm="optuna", optimize="RMSLE", n_iter=20)

# Elastic Net: the same three searchers
tune_model(en, search_library="scikit-learn", optimize="RMSLE", n_iter=20)
tune_model(en, search_library="tune-sklearn", search_algorithm="hyperopt", optimize="RMSLE", n_iter=20)
tune_model(en, search_library="tune-sklearn", search_algorithm="optuna", optimize="RMSLE", n_iter=20)
```

Isn’t it great how easy PyCaret makes things? Anyway, here are the RMSLE scores I obtained on my machine:

Experiment’s RMSLE scores

And to put it into perspective, here’s the percentage improvement over random search:

Percentage improvement over random search
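For reference, here is a sketch of the arithmetic behind these two figures, using the standard RMSLE definition. The helper names, the toy prediction function and all numbers are hypothetical and are not the benchmark’s data. Computing the improvement as the relative reduction in mean RMSLE versus random search (lower RMSLE is better) is an assumption about how such percentages are derived, not the exact script used for the figure.

```python
import math
import random
from statistics import mean


def rmsle(y_true, y_pred):
    """Root mean squared logarithmic error: sqrt(mean((log(1 + pred) - log(1 + true))^2))."""
    squared = [(math.log1p(p) - math.log1p(t)) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(mean(squared))


def average_over_seeds(run, seeds=(0, 1, 2)):
    """Repeat a stochastic experiment once per seed and average the resulting scores."""
    return mean(run(seed) for seed in seeds)


def improvement_over_baseline(baseline, candidate):
    """Percentage reduction in error versus the baseline; positive means the candidate is better."""
    return (baseline - candidate) / baseline * 100


# Hypothetical toy experiment: noisy predictions of a few fixed house prices.
prices = [120_000, 185_000, 250_000, 310_000]


def make_run(noise):
    def run(seed):
        rng = random.Random(seed)
        preds = [p * (1 + rng.uniform(-noise, noise)) for p in prices]
        return rmsle(prices, preds)
    return run


baseline = average_over_seeds(make_run(noise=0.20))   # stands in for random search
candidate = average_over_seeds(make_run(noise=0.15))  # stands in for a Bayesian searcher
print(f"baseline RMSLE={baseline:.4f}, candidate RMSLE={candidate:.4f}, "
      f"improvement={improvement_over_baseline(baseline, candidate):.1f}%")
```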

All of this was achieved with the same number of iterations and in comparable time. Remember that, given the stochastic nature of the process, your mileage may vary. If the improvement is not noticeable, try increasing the number of iterations (n_iter) from the default of 10; 20–30 is usually a sensible choice.

What’s great about Ray is that you can effortlessly scale beyond a single machine to a cluster of tens, hundreds or more nodes. While PyCaret doesn’t support full Ray integration yet, it is possible to initialize a Ray cluster before tuning, and tune-sklearn will automatically use it.

```python
exp = setup(
    data,
    target="SalePrice",
    session_id=42,
    fold=5,
)

rf = create_model("rf")
tune_model(rf, search_library="tune-sklearn", search_algorithm="optuna", optimize="RMSLE", n_iter=20)  # Will run on the Ray cluster!
```

Provided all of the necessary configuration is in place (the RAY_ADDRESS environment variable), nothing more is needed to leverage the power of Ray’s distributed computing for hyperparameter tuning. Because hyperparameter optimization is usually the most computationally expensive part of creating an ML model, distributed tuning with Ray can save you a lot of time.

Conclusion

To get more out of hyperparameter optimization in PyCaret, all you need to do is install the required libraries and pass two extra arguments to tune_model(). And thanks to built-in tune-sklearn support, you can easily leverage Ray’s distributed computing to scale up beyond your local machine.

Make sure to check out the documentation for PyCaret, Ray Tune and tune-sklearn as well as the GitHub repositories of PyCaret and tune-sklearn. Finally, if you have any questions or want to connect with the community, join PyCaret’s Slack and Ray’s Discourse.

Thanks to Richard Liaw and Moez Ali for proofreading and advice.

Bio: Antoni Baum is a Computer Science and Econometrics MSc student, as well as a core contributor to PyCaret and contributor to Ray Tune.

Original. Reposted with permission.
