Deep Learning for Visual Question Answering

Here we discuss the Visual Question Answering problem, and I’ll also present neural-network-based approaches to it.

By Avi Singh, IIT.

A year or so ago, a chatbot named Eugene Goostman made it to the mainstream news after being reported as the first computer program to have passed the famed Turing Test, in an event organized at the University of Reading. While the organizers hailed it as a historic achievement, most of the scientific community wasn’t impressed. This leads us to the question: Is the Turing Test, in its original form, a suitable test for AI in the modern day?

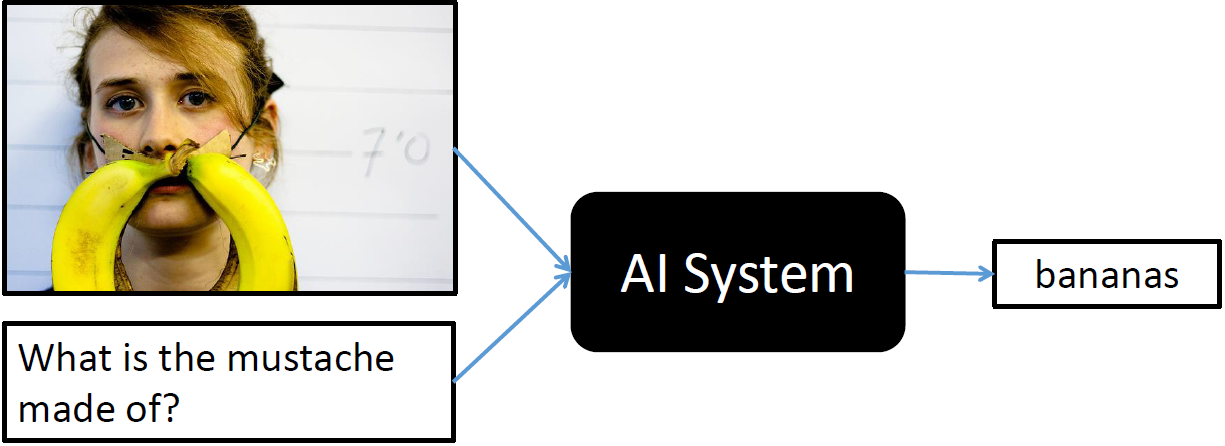

In the last couple of years, a number of papers (like this paper from JHU/Brown, and this one from MPI) have suggested that the task of Visual Question Answering (VQA, for short) can be used as an alternative Turing Test. The task involves answering an open-ended question (or a series of questions) about an image. An example is shown below:

Image from visualqa.org

The AI system needs to solve a number of sub-problems in Natural Language Processing and Computer Vision, in addition to being able to perform some kind of “common-sense” reasoning. It needs to localize the subject being referenced (the woman’s face, and more specifically the region around her lips), needs to detect objects (the banana), and should also have some common-sense knowledge that the word mustache is often used to refer to markings or objects on the face that are not actually mustaches (like milk mustaches). Since the problem cuts across two very different modalities (vision and text), and requires high-level understanding of the scene, it appears to be an ideal candidate for a true Turing Test. The problem also has real-world applications, like helping the visually impaired.

A few days ago, the Visual QA Challenge was launched, and along with it came a large dataset (~750K questions on ~250K images). After the MS COCO Image Captioning Challenge sparked a lot of interest in the problem of image captioning (or was it the interest that led to the challenge?), the time seems ripe to move on to a much harder problem at the intersection of NLP and Vision.

This post will present ways to model this problem using Neural Networks, exploring both Feedforward Neural Networks, and the much more exciting Recurrent Neural Networks (LSTMs, to be specific). If you do not know much about Neural Networks, then I encourage you to check these two awesome blogs: Colah’s Blog and Karpathy’s Blog. Specifically, check out the posts on Recurrent Neural Nets, Convolutional Neural Nets and LSTM Nets. The models in this post take inspiration from this ICCV 2015 paper, this ICCV 2015 paper, and this NIPS 2015 paper.

Generating Answers

An important aspect of solving this problem is to have a system that can generate new answers. While most of the answers in the VQA dataset are short (1-3 words), we would still like to have a system that can generate arbitrarily long answers, in keeping with the spirit of the Turing test. We can perhaps take inspiration from papers on Sequence to Sequence Learning using RNNs, which solve a similar problem when generating translations of arbitrary length. Multi-word methods have been presented for VQA too. However, for the purpose of this blog post, we will ignore this aspect of the problem. We will select the 1000 most frequent answers in the VQA training dataset, and solve the problem in a multi-class classification setting. These top 1000 answers cover over 80% of the answers in the VQA training set, so we can still expect to get reasonable results.
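To make the answer-selection step concrete, here is a minimal sketch of picking the most frequent answers and measuring how much of the training set they cover. The function name `top_k_answers` and the toy answer list are made up for illustration; the real VQA annotations would have to be loaded separately.

```python
from collections import Counter

def top_k_answers(answers, k=1000):
    """Return the k most frequent answers and the fraction of all answers they cover."""
    counts = Counter(answers)
    most_common = counts.most_common(k)
    vocab = [ans for ans, _ in most_common]
    coverage = sum(count for _, count in most_common) / len(answers)
    return vocab, coverage

# Toy data standing in for the VQA training answers (hypothetical values).
toy_answers = ["yes", "no", "yes", "2", "yes", "banana", "no", "white"]

# k=2 here only because the toy list is tiny; the post uses k=1000.
vocab, coverage = top_k_answers(toy_answers, k=2)

# Each kept answer becomes one class label for the classifier.
answer_to_index = {ans: i for i, ans in enumerate(vocab)}
print(vocab, coverage)
```

Training examples whose answer falls outside the kept vocabulary would simply be dropped (or mapped to a catch-all class), which is the price of treating the task as classification.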

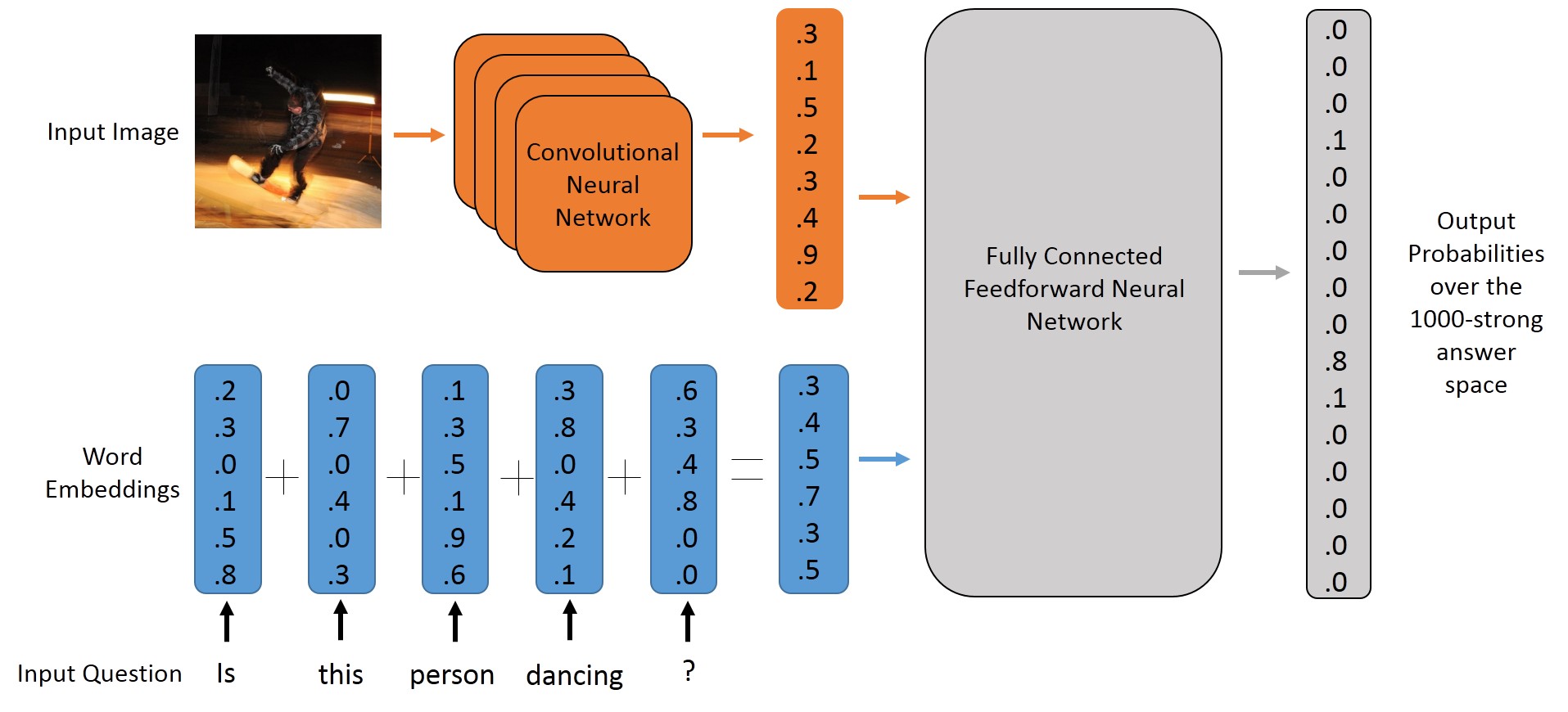

The Feedforward Neural Model

To get started, let’s first try to model the problem using a Multilayer Perceptron (MLP). An MLP is a simple feedforward neural net that maps a feature vector (of fixed length) to an appropriate output. In our problem, this output will be a probability distribution over the set of possible answers. We will be using Keras, an awesome deep learning library based on Theano, and written in Python. Setting up Keras is fairly easy: just have a look at their readme to get started.
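Before bringing in Keras, it may help to see what "a fixed-length vector in, a probability distribution out" looks like in plain NumPy. This is only a sketch of a generic one-hidden-layer MLP with random weights. The layer sizes, the tanh activation, and the names `mlp_forward` and `softmax` are assumptions for illustration, not the architecture used later in this post.

```python
import numpy as np

def softmax(z):
    """Turn a vector of scores into probabilities that sum to 1."""
    z = z - z.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def mlp_forward(x, W1, b1, W2, b2):
    """One hidden layer with tanh, followed by a softmax output layer."""
    h = np.tanh(W1 @ x + b1)
    return softmax(W2 @ h + b2)

# Toy sizes (assumptions); the real model has one output per candidate answer.
in_dim, hidden_dim, num_answers = 8, 16, 5

rng = np.random.default_rng(0)
W1 = rng.standard_normal((hidden_dim, in_dim)) * 0.1
b1 = np.zeros(hidden_dim)
W2 = rng.standard_normal((num_answers, hidden_dim)) * 0.1
b2 = np.zeros(num_answers)

x = rng.standard_normal(in_dim)  # stand-in for a fixed-length feature vector
probs = mlp_forward(x, W1, b1, W2, b2)
print(probs.shape, probs.sum())
```

The predicted answer is then the class with the highest probability, i.e. `probs.argmax()`.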

In order to use the MLP model, we need to map all our input questions and images to a feature vector of fixed length. We perform the following operations to achieve this:

- For the question, we transform each word to its word vector, and sum up all the vectors. The length of this feature vector will be the same as the length of a single word vector, and the word vectors (also called embeddings) that we use have a length of 300.
- For the image, we pass it through a Deep Convolutional Neural Network (the well-known VGG Architecture), and extract the activation from the second last layer (before the softmax layer, that is). Size of this feature vector is 4096.
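The two steps above can be sketched in a few lines of NumPy. Note the assumptions: the embeddings and the image vector here are random stand-ins (real ones would come from a pretrained word-vector model and a VGG network), unknown words are skipped, and the two vectors are joined by simple concatenation, which is one natural way to get a single fixed-length input. The names `question_features` and `joint_features` are hypothetical.

```python
import numpy as np

WORD_DIM = 300    # length of one word vector, as stated above
IMAGE_DIM = 4096  # length of the VGG feature vector, as stated above

def question_features(tokens, embeddings):
    """Sum the word vectors of all tokens; words without a vector are skipped."""
    vec = np.zeros(WORD_DIM, dtype=np.float32)
    for tok in tokens:
        if tok in embeddings:
            vec += embeddings[tok]
    return vec

def joint_features(tokens, embeddings, image_vec):
    """Concatenate question and image features (an assumption, see lead-in)."""
    return np.concatenate([question_features(tokens, embeddings), image_vec])

# Random stand-ins for pretrained embeddings and a VGG activation.
rng = np.random.default_rng(0)
words = ["what", "is", "the", "mustache", "made", "of"]
toy_embeddings = {w: rng.standard_normal(WORD_DIM).astype(np.float32) for w in words}
toy_image_vec = rng.standard_normal(IMAGE_DIM).astype(np.float32)

x = joint_features("what is the mustache made of".split(), toy_embeddings, toy_image_vec)
print(x.shape)  # (4396,)
```

Because the word vectors are summed, the question part has length 300 no matter how many words the question contains, which is exactly what the MLP needs. The cost is that word order is lost.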