Must-Know: How to evaluate a binary classifier

Binary classification is a basic concept which involves classifying the data into two groups. Read on for some additional insight and approaches.

Editor's note: This post was originally included as an answer to a question posed in our 17 More Must-Know Data Science Interview Questions and Answers series earlier this year. The answer was thorough enough that it was deemed to deserve its own dedicated post.

Binary classification involves classifying the data into two groups, e.g. whether or not a customer buys a particular product (Yes/No), based on independent variables such as gender, age, and location.

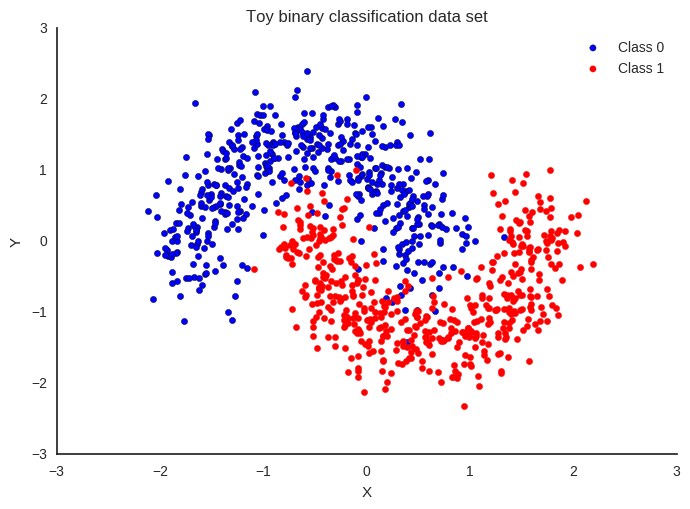

Toy binary classification dataset (source).

As the target variable is not continuous, a binary classification model predicts the probability that the target variable is Yes or No. To evaluate such a model, a table called the confusion matrix is used, also called the classification or coincidence matrix. It counts the true positives (TP), false positives (FP), true negatives (TN), and false negatives (FN) among the predictions. With the help of a confusion matrix, we can calculate important performance measures:

- True Positive Rate (TPR) or Hit Rate or Recall or Sensitivity = TP / (TP + FN)

- False Positive Rate (FPR) or False Alarm Rate = 1 - Specificity = 1 - (TN / (TN + FP)) = FP / (FP + TN)

- Accuracy = (TP + TN) / (TP + TN + FP + FN)

- Error Rate = 1 - Accuracy = (FP + FN) / (TP + TN + FP + FN)

- Precision = TP / (TP + FP)

- F-measure (harmonic mean of Precision and Recall) = 2 / ( (1 / Precision) + (1 / Recall) )

- ROC (Receiver Operating Characteristic) curve = plot of TPR (y-axis) against FPR (x-axis) as the classification threshold varies

- AUC (Area Under the ROC Curve)

- Kappa statistic
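As a minimal sketch, the count-based measures above can be computed directly from the four cells of a confusion matrix. The function name `binary_metrics` and the example counts are hypothetical, chosen only for illustration. The kappa line assumes Cohen's kappa is the statistic meant, and the code assumes every denominator is non-zero.

```python
def binary_metrics(tp, fp, tn, fn):
    """Compute common measures from confusion-matrix counts.

    Assumes all denominators are non-zero (hypothetical helper).
    """
    total = tp + fp + tn + fn
    recall = tp / (tp + fn)        # TPR, hit rate, sensitivity
    fpr = fp / (fp + tn)           # false alarm rate, 1 - specificity
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp)
    f_measure = 2 / ((1 / precision) + (1 / recall))

    # Cohen's kappa (assumed): observed agreement corrected for the
    # agreement expected by chance from the row and column totals.
    p_yes = ((tp + fp) / total) * ((tp + fn) / total)
    p_no = ((tn + fn) / total) * ((tn + fp) / total)
    p_chance = p_yes + p_no
    kappa = (accuracy - p_chance) / (1 - p_chance)

    return {
        "recall": recall,
        "fpr": fpr,
        "accuracy": accuracy,
        "error_rate": 1 - accuracy,
        "precision": precision,
        "f_measure": f_measure,
        "kappa": kappa,
    }


# Illustrative counts only, not taken from any real data set.
metrics = binary_metrics(tp=40, fp=10, tn=45, fn=5)
for name, value in metrics.items():
    print(f"{name}: {round(value, 3)}")
```

With these made-up counts, accuracy is 0.85 and precision is 0.8, while recall is about 0.889, which shows how the measures can diverge on the same predictions.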

You can find more details about these measures here: The Best Metric to Measure Accuracy of Classification Models.
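The ROC curve and AUC differ from the other measures in that they are built from predicted scores rather than from a single confusion matrix. The sketch below sweeps a threshold over the scores, records one (FPR, TPR) point per threshold, and integrates with the trapezoidal rule. The helper names `roc_points` and `auc` and the four-sample data are assumptions for illustration; labels are assumed to be 0/1 with at least one of each.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) points, one per distinct score threshold."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    points = [(0.0, 0.0)]  # threshold above every score: nothing predicted positive
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def auc(points):
    """Area under the piecewise-linear curve through the points (trapezoidal rule)."""
    return sum(
        (x2 - x1) * (y1 + y2) / 2
        for (x1, y1), (x2, y2) in zip(points, points[1:])
    )


# Illustrative labels and predicted probabilities only.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]

curve = roc_points(y_true, scores)
print(curve)
print(auc(curve))  # 0.75 for this toy data
```

An AUC of 0.5 corresponds to random ranking and 1.0 to a perfect ranking of positives above negatives.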

All of these measures should be interpreted with domain knowledge and weighed against one another. For example, a high TPR for identifying patients who don't have cancer does not help at all in diagnosing cancer.

In the same cancer diagnosis example, if only 2% or less of the patients have cancer, this is a case of class imbalance, as the percentage of cancer patients is very small compared to the rest of the population. There are two main approaches to handling this issue:

- Use of a cost function: In this approach, a cost associated with misclassifying data is evaluated with the help of a cost matrix (similar to the confusion matrix, but more concerned with False Positives and False Negatives). The main aim is to reduce the cost of misclassifying. In many applications the cost of a False Negative is higher than the cost of a False Positive, e.g. wrongly predicting a cancer patient to be cancer-free is more dangerous than wrongly predicting a cancer-free patient to have cancer.

Total Cost = Cost of FN * Count of FN + Cost of FP * Count of FP
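The total cost formula can be sketched in a few lines. The function name `total_cost`, the unit costs (a False Negative assumed to be 50 times as costly as a False Positive), and the error counts for the two models are all hypothetical values chosen for illustration.

```python
def total_cost(fn_count, fp_count, cost_fn, cost_fp):
    """Total Cost = Cost of FN * Count of FN + Cost of FP * Count of FP."""
    return cost_fn * fn_count + cost_fp * fp_count


# Assumed unit costs: missing a cancer case is far worse than a false alarm.
COST_FN = 50
COST_FP = 1

# Two hypothetical models evaluated on the same data.
cost_a = total_cost(fn_count=5, fp_count=10, cost_fn=COST_FN, cost_fp=COST_FP)
cost_b = total_cost(fn_count=2, fp_count=40, cost_fn=COST_FN, cost_fp=COST_FP)

print(cost_a)  # 260
print(cost_b)  # 140
```

Under these assumed costs, the second model makes more errors overall (42 against 15) but has the lower total cost, because it makes fewer of the expensive False Negatives.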

- Use of different sampling methods: In this approach, you can use over-sampling, under-sampling, or hybrid sampling, which combines the two. In over-sampling, minority class observations are replicated to balance the data. Replicating observations can lead to overfitting, giving good accuracy on training data but lower accuracy on unseen data. In under-sampling, majority class observations are removed, which causes loss of information. It helps reduce processing time and storage, but is only useful if you have a large data set.
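The two basic sampling strategies can be sketched with the standard library alone. This is a simplified illustration of random over-sampling and random under-sampling, not a recommendation of a specific method; the function names and the 98/2 toy data set are assumptions. In practice, resampling would normally be applied to the training split only.

```python
import random


def random_oversample(majority, minority, seed=0):
    """Replicate randomly chosen minority rows until both classes are the same size."""
    rng = random.Random(seed)
    extra = [rng.choice(minority) for _ in range(len(majority) - len(minority))]
    return majority + minority + extra


def random_undersample(majority, minority, seed=0):
    """Keep a random subset of majority rows equal in size to the minority class."""
    rng = random.Random(seed)
    return rng.sample(majority, len(minority)) + minority


# Toy data mirroring the 2% example: 98 healthy patients, 2 with cancer.
majority = [("healthy", i) for i in range(98)]
minority = [("cancer", i) for i in range(2)]

over = random_oversample(majority, minority)
under = random_undersample(majority, minority)

print(len(over))   # 196 rows: 98 healthy, 98 cancer (with duplicates)
print(len(under))  # 4 rows: 2 healthy, 2 cancer
```

The output sizes make the trade-off concrete: over-sampling keeps every observation but fills half the data with copies of two rows, while under-sampling discards 96 of the 98 majority rows.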

Find more about class imbalance here.

If there are multiple classes in the target variable, then a square confusion matrix with one row and one column per class is formed, and all performance measures can be calculated for each of the classes. This is called a multiclass confusion matrix. For example, if there are 3 classes X, Y, Z in the response variable, recall for each class is calculated as below:

- Recall_X = TP_X / (TP_X + FN_X)

- Recall_Y = TP_Y / (TP_Y + FN_Y)

- Recall_Z = TP_Z / (TP_Z + FN_Z)
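As a rough sketch, per-class recall can be read off a multiclass confusion matrix by treating each class in turn as the positive class. The code assumes rows are actual classes and columns are predicted classes; the function name `per_class_recall` and the matrix values are made up for illustration.

```python
def per_class_recall(matrix, labels):
    """Recall per class; rows = actual class, columns = predicted class (assumed)."""
    recalls = {}
    for i, label in enumerate(labels):
        tp = matrix[i][i]            # correctly predicted as this class
        fn = sum(matrix[i]) - tp     # actual members predicted as another class
        recalls[label] = tp / (tp + fn)
    return recalls


labels = ["X", "Y", "Z"]
# Illustrative counts only.
matrix = [
    [8, 1, 1],    # actual X
    [2, 6, 2],    # actual Y
    [0, 5, 15],   # actual Z
]

recalls = per_class_recall(matrix, labels)
print(recalls)  # {'X': 0.8, 'Y': 0.6, 'Z': 0.75}
```

Per-class precision follows the same pattern using column sums instead of row sums.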
