Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing

The book Trustworthy Online Controlled Experiments: A Practical Guide to A/B Testing by Ron Kohavi (Microsoft, Airbnb), Diane Tang (Google) and Ya Xu (LinkedIn) is available for purchase, with the authors' proceeds from the book being donated to charity.

By Ron Kohavi (Microsoft, Airbnb), Diane Tang (Google) and Ya Xu (LinkedIn)

The book’s website is http://experimentguide.com/, where you can find links to Kindle and paperback copies on Amazon, endorsements and additional material. You can also download chapter 1.

The book is the #1 new release in Data Mining on Amazon (https://smile.amazon.com/gp/new-releases/books/3654/) and has 17 five-star reviews.

All of the authors' proceeds from the book will be donated to charity.

Here is an excerpt from the book’s opening. It tells the story of one of the most surprising controlled experiments in the history of Microsoft, a company that has run tens of thousands of them.

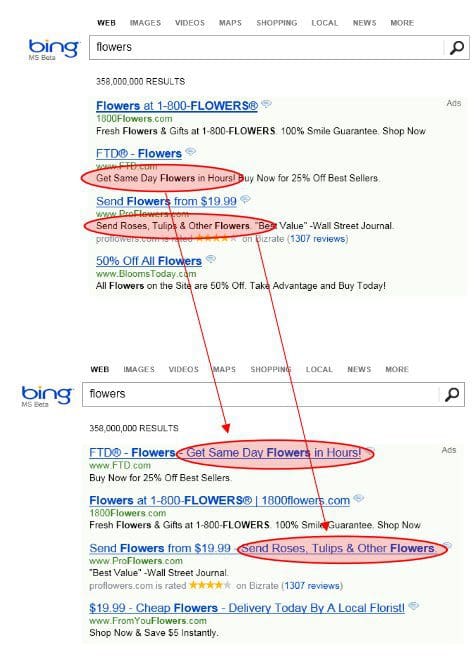

In 2012, an employee working on Bing, Microsoft’s search engine, suggested changing how ad headlines display. The idea was to lengthen the title line of ads by combining it with the text from the first line below the title, as shown below:

Nobody thought this simple change, among the hundreds suggested, would be the best revenue-generating idea in Bing’s history!

The feature was prioritized low and languished in the backlog for more than six months until a software developer decided to try the change, given how easy it was to code. He implemented the idea and began evaluating it on real users, randomly showing some of them the new title layout and others the old one. User interactions with the website were recorded, including ad clicks and the revenue generated from them. This is an example of an A/B test, the simplest type of controlled experiment that compares two variants: A and B, or a Control and a Treatment.
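To make the mechanics concrete, here is a minimal Python sketch of the two ingredients described above: randomly splitting users between a Control and a Treatment, and comparing a recorded metric across the two groups. It is an illustration only, not Bing's system. The function names, the experiment label `long-ad-titles`, the 50/50 split, and the hash-based bucketing are all assumptions made for this example.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "long-ad-titles") -> str:
    """Map a user to Control or Treatment with a stable pseudo-random 50/50 split.

    Hashing the experiment label together with the user id means the same
    user always sees the same variant. (Hypothetical scheme for illustration.)
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in 0..99
    return "Treatment" if bucket < 50 else "Control"


def revenue_per_user(events):
    """Compute mean revenue per user for each variant.

    `events` is an iterable of (user_id, revenue) pairs, one per logged
    interaction such as an ad click.
    """
    totals = {"Control": 0.0, "Treatment": 0.0}
    users = {"Control": set(), "Treatment": set()}
    for user_id, revenue in events:
        variant = assign_variant(user_id)
        totals[variant] += revenue
        users[variant].add(user_id)
    return {
        variant: (totals[variant] / len(users[variant]) if users[variant] else 0.0)
        for variant in totals
    }
```

A real analysis would also test whether the difference between the two means is statistically significant. This sketch stops at computing the per-variant averages.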

A few hours after starting the test, a revenue-too-high alert triggered, indicating that something was wrong with the experiment. The Treatment, that is, the new title layout, was generating too much money from ads. Such “too good to be true” alerts are very useful, as they usually indicate a serious bug, such as cases where revenue was logged twice (double billing) or where only ads displayed, and the rest of the web page was broken.
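A "too good to be true" check of this kind can be sketched in a few lines of Python. This is a simplified illustration, not the alerting logic Bing used. The function names and the 5% plausibility threshold are hypothetical, chosen only for the example.

```python
def relative_lift(control_mean: float, treatment_mean: float) -> float:
    """Return the relative change of Treatment over Control (0.12 means +12%)."""
    if control_mean <= 0:
        raise ValueError("control_mean must be positive")
    return (treatment_mean - control_mean) / control_mean


def too_good_to_be_true(
    control_mean: float,
    treatment_mean: float,
    max_plausible_lift: float = 0.05,  # hypothetical threshold for this sketch
) -> bool:
    """Flag results whose lift exceeds what the team considers plausible.

    A flagged result is a prompt to look for bugs such as double logging.
    It does not by itself show that the result is invalid.
    """
    return relative_lift(control_mean, treatment_mean) > max_plausible_lift
```

With these assumed settings, a Treatment that earns 12% more revenue per user than Control would trip the alert, while a 1% lift would not.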

For this experiment, however, the revenue increase was valid. Bing’s revenue increased by a whopping 12%, which at the time translated to over $100M annually in the US alone, without significantly hurting key user-experience metrics. The experiment was replicated multiple times over a long period.