In Data We Trust: Data-Centric AI

Learn how data-centric AI can improve your model's overall performance.

Image by Author

In 2012, authors Björn Bloching, Lars Luck, and Thomas Ramge published In Data We Trust: How Customer Data is Revolutionising Our Economy. The book describes how many companies already have the information they need at their fingertips. Companies no longer need to make decisions based on gut feeling and market instinct; they can use streams of data to better understand what the future may look like and what their next move should be.

As the world of data, and Artificial Intelligence in particular, continues to grow, more and more people are becoming skeptical. Some argue that data and autonomous features have improved our day-to-day lives, while others are wary of how their data is being used and worry that the development of AI could have severe implications for us as humans.

Although AI has produced some impressive outcomes, it has also failed, even at big hyperscalers such as Google and Amazon. In 2019, for example, a test conducted by the ACLU of Massachusetts found that Amazon's Rekognition facial recognition software falsely matched 27 professional athletes, including a Super Bowl champion, to mugshots.

Failures like these can have a major impact on the continued development of AI: people naturally lose trust and want to steer away from it. Yet the failure does not come from AI in general; it comes from the data fed into these models, which leads them to generate false outputs.

This is where we need to trust our data and implement Data-Centric AI.

What is Data-Centric AI?

If you’ve ever worked in the tech industry or with machine learning models, you will have seen people focus on building the software or the model itself. However, all that effort can be wasted if flawed input data leads the software to produce incorrect outputs.

For example, there is no point spending years building a car that looks beautiful, has a powerful engine, and is packed with the latest tech if the fuel you put in it is so poor that you can’t even start the car, let alone reach your destination.

What’s the point, right? Garbage in, garbage out.

It’s the same with AI. What’s the point of spending hours building a model if it produces errors as soon as you feed it data?

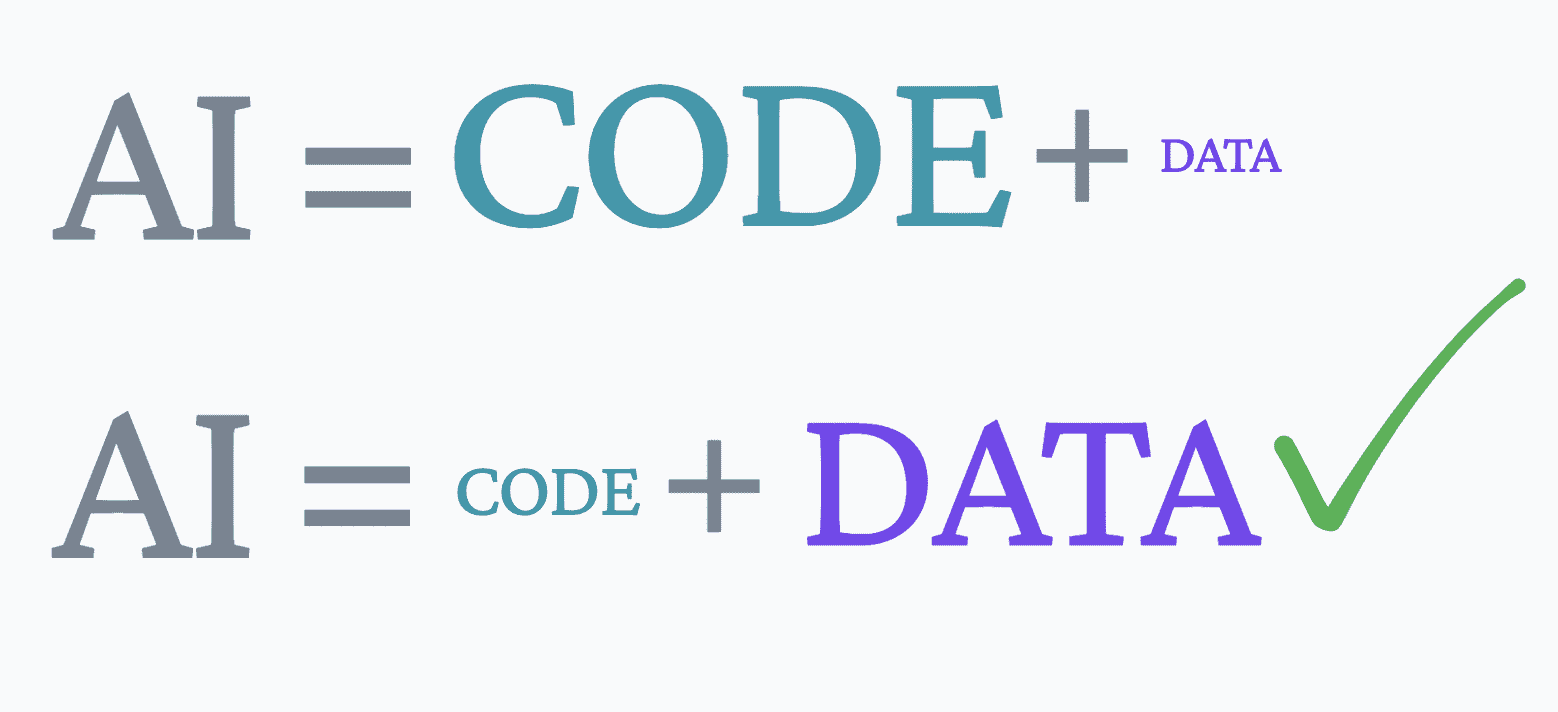

Data-Centric AI is an approach that focuses primarily on the data rather than the code. That doesn’t mean it ignores code; of course it uses code. Rather, it systematically engineers the data used to build AI systems and combines that with the valuable elements of code.
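To make the distinction concrete, here is a minimal sketch in which the model code stays fixed (a simple one-dimensional nearest-centroid classifier) and only the training labels change. The toy dataset, the injected label errors, and the function names are all hypothetical, chosen purely for illustration.

```python
from statistics import mean


def fit_centroids(xs, ys):
    """Fit a 1-D nearest-centroid classifier: one mean value per class label."""
    return {label: mean(x for x, y in zip(xs, ys) if y == label) for label in set(ys)}


def predict(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def accuracy(centroids, xs, ys):
    """Fraction of points whose predicted class matches the true class."""
    return sum(predict(centroids, x) == y for x, y in zip(xs, ys)) / len(ys)


# Hypothetical toy data: class 0 sits around 0-4, class 1 around 10-14.
train_x = [0, 1, 2, 3, 4, 10, 11, 12, 13, 14]
clean_y = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
# The same inputs with three class-0 points mislabeled as class 1.
noisy_y = [0, 0, 1, 1, 1, 1, 1, 1, 1, 1]

test_x = [1, 3, 5, 6, 9, 12]
test_y = [0, 0, 0, 0, 1, 1]

# Identical model code, two versions of the training data.
noisy_model = fit_centroids(train_x, noisy_y)
clean_model = fit_centroids(train_x, clean_y)
print("noisy labels:", accuracy(noisy_model, test_x, test_y))
print("clean labels:", accuracy(clean_model, test_x, test_y))
```

With these toy numbers, the mislabeled points drag the class-1 centroid towards class 0, so the fixed model gets 4 of 6 test points right on the noisy labels and all 6 on the cleaned ones. Nothing in the model changed; only the data did.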

What Type of Data Do We Need?

For AI models to be trustworthy and produce accurate outputs, they need data that is both clean and diverse. Without these two qualities, the decisions you base on the model’s outputs are unlikely to be accurate. Quality > Quantity.

If your data is not clean or diverse enough, your model’s performance will suffer and its outputs will contain errors. Data that is neither clean nor diverse confuses a model, making it much harder for it to learn the patterns that actually matter. So what tools can we use to ensure we have clean, diverse data?

Data Labeling

Labeling data is an important step in turning unclean data into clean data, and data labeling tools can help you achieve this. They can quickly annotate images and other forms of data, such as text for named-entity recognition (NER) or document classification.

Data labeling tools help Data Scientists, Engineers, and other experts working with data improve the overall accuracy and performance of their models.
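For text, a labeling tool typically records each entity as a character span. The sketch below assumes a hypothetical export format of `(start, end, label)` tuples (end exclusive) and converts it into per-token BIO tags (B = beginning of an entity, I = inside an entity, O = outside any entity), a common input format for training NER models. Splitting tokens on whitespace is a simplifying assumption; real pipelines usually use a proper tokenizer.

```python
import re


def spans_to_bio(text, spans):
    """Convert character-span annotations into (token, BIO tag) pairs.

    spans: list of (start, end, label) tuples with exclusive end offsets,
    a hypothetical export format from a labeling tool.
    """
    pairs = []
    # Simplifying assumption: a token is any run of non-whitespace characters.
    for match in re.finditer(r"\S+", text):
        tok_start, tok_end = match.start(), match.end()
        tag = "O"
        for start, end, label in spans:
            if tok_start >= start and tok_end <= end:
                # The first token of an entity gets B-, later tokens get I-.
                tag = ("B-" if tok_start == start else "I-") + label
                break
        pairs.append((match.group(), tag))
    return pairs


text = "Alice joined Acme Corp in Boston"
spans = [(0, 5, "PER"), (13, 22, "ORG"), (26, 32, "LOC")]
for token, tag in spans_to_bio(text, spans):
    print(token, tag)
```

Note how the two-word entity "Acme Corp" becomes `B-ORG` followed by `I-ORG`, which is what lets a model learn where multi-token entities begin and end.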

It is advisable to use the labeling tool together with an ontology, which is described below.

Ontology

An ontology is a specification of the meanings of the symbols in an information system. It is a virtual catalog of definitions that acts as your dictionary, serving as a reference point or rich repository during the data labeling process.
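As a minimal sketch, assuming a small hypothetical ontology for a vehicle-image dataset, the ontology can be represented as a dictionary that maps each allowed label to its agreed definition, and every annotation can be checked against it before it enters the training set. The labels, definitions, and the `validate_annotations` helper are illustrative, not part of any particular labeling tool.

```python
# Hypothetical ontology: each allowed label maps to its agreed definition.
ONTOLOGY = {
    "car": "A four-wheeled motor vehicle designed mainly to carry passengers.",
    "truck": "A motor vehicle designed primarily to transport cargo.",
    "bicycle": "A human-powered vehicle with two wheels in tandem.",
}


def validate_annotations(annotations, ontology):
    """Split (item_id, label) pairs into labels defined in the ontology and labels that are not."""
    valid, invalid = [], []
    for item_id, label in annotations:
        # Normalize case and surrounding whitespace before the lookup.
        normalized = label.strip().lower()
        if normalized in ontology:
            valid.append((item_id, normalized))
        else:
            invalid.append((item_id, label))
    return valid, invalid


annotations = [("img_001", "car"), ("img_002", "Truck "), ("img_003", "lorry")]
valid, invalid = validate_annotations(annotations, ONTOLOGY)
print("valid:", valid)
print("needs review:", invalid)
```

Here "lorry" is flagged because the ontology defines "truck" instead. In practice you would either map it to the agreed term or extend the ontology deliberately, so that every annotator uses the same vocabulary.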

Human-in-the-loop

As the name suggests, bringing a human into the loop of your process can help you achieve clean data. AI as a whole aims to replicate human intelligence in computers, so what better way to improve the process than to involve an actual human?

Human-in-the-loop uses human expertise to train better AI by involving people in building, fine-tuning, and testing the model. This helps ensure that the data labeling tools are working effectively, that the model’s outputs are becoming more accurate, and that overall decision-making improves.
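One common way to bring a human into the loop is to send only the predictions the model is least sure about to a reviewer, a technique often called uncertainty or least-confidence sampling. The sketch below assumes the model outputs a probability per class; the 0.7 threshold, the document IDs, and the `select_for_review` helper are hypothetical values chosen for illustration.

```python
def select_for_review(predictions, threshold=0.7):
    """Split predictions into auto-accepted labels and a queue for human review.

    predictions: dict mapping item ID -> dict of class -> probability.
    An item goes to review when its top-class probability is below threshold.
    """
    auto_accepted, needs_review = {}, []
    for item_id, probs in predictions.items():
        best_label = max(probs, key=probs.get)
        confidence = probs[best_label]
        if confidence >= threshold:
            auto_accepted[item_id] = best_label
        else:
            needs_review.append((item_id, confidence))
    # Least confident first, so reviewers see the hardest cases first.
    needs_review.sort(key=lambda pair: pair[1])
    return auto_accepted, needs_review


predictions = {
    "doc_1": {"spam": 0.95, "not_spam": 0.05},
    "doc_2": {"spam": 0.55, "not_spam": 0.45},
    "doc_3": {"spam": 0.20, "not_spam": 0.80},
    "doc_4": {"spam": 0.40, "not_spam": 0.60},
}
accepted, review_queue = select_for_review(predictions)
print("accepted:", accepted)
print("review queue:", review_queue)
```

The labels that reviewers correct can then be added back into the training data, which is where the loop closes. Raising the threshold sends more items to humans, trading reviewer time for cleaner labels.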

Data quality management

Is this an additional cost? Yes. Will it help you in the long run? Of course. If you’re going to do something, it’s best to do it properly the first time rather than having to go over it several times to get it right. Although you may see it as an additional cost initially, over time quality management can save you a lot of time and money.

Through data quality management, you will be able to identify errors within the data and resolve them early in the process, before too much damage has been done.
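A minimal sketch of automated data quality checks, assuming tabular records stored as Python dictionaries with hashable values. It flags three common error types: missing required fields, exact duplicate records, and numeric values outside an expected range. The field names, the valid age range, and the `quality_report` helper are hypothetical.

```python
def quality_report(records, required_fields, ranges):
    """Return a list of (row index, issue description) tuples."""
    issues = []
    seen = {}  # maps a record's contents to the first row where it appeared
    for i, record in enumerate(records):
        # 1. Missing or empty required fields.
        for field in required_fields:
            if record.get(field) in (None, ""):
                issues.append((i, f"missing {field}"))
        # 2. Exact duplicates of an earlier record.
        key = tuple(sorted(record.items()))
        if key in seen:
            issues.append((i, f"duplicate of row {seen[key]}"))
        else:
            seen[key] = i
        # 3. Numeric values outside their expected range.
        for field, (low, high) in ranges.items():
            value = record.get(field)
            if isinstance(value, (int, float)) and not low <= value <= high:
                issues.append((i, f"{field}={value} out of range [{low}, {high}]"))
    return issues


records = [
    {"id": 1, "name": "Ana", "age": 34},
    {"id": 2, "name": "", "age": 29},
    {"id": 1, "name": "Ana", "age": 34},
    {"id": 3, "name": "Ben", "age": 212},
]
for row, issue in quality_report(records, ["id", "name", "age"], {"age": (0, 120)}):
    print(f"row {row}: {issue}")
```

Running checks like these every time new data arrives is what lets you catch errors early, rather than discovering them later as unexplained drops in model accuracy.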

Data augmentation

Data augmentation is a set of techniques that are used to artificially increase the amount of data available by generating new data points from existing data. This is done by making small changes to existing data points to create new data points.

By creating these variations of existing data points, you can make the model more robust and help it generalize better to real-world inputs. Generally, the more varied your training data is, the more your model can learn, which can improve its overall accuracy and performance.
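For images, two simple augmentations are horizontal flips and small amounts of random noise. The sketch below represents a grayscale image as a list of pixel rows (values 0 to 255) to stay dependency-free; in practice you would use an image library. The helper names and parameters are hypothetical, and it assumes both transformations preserve the label, which depends on the task (a horizontally flipped digit, for example, may no longer look like the original digit).

```python
import random


def horizontal_flip(image):
    """Mirror each row of pixels left to right."""
    return [list(reversed(row)) for row in image]


def add_noise(image, max_delta, rng):
    """Shift each pixel by a random integer in [-max_delta, max_delta], clipped to 0-255."""
    return [
        [min(255, max(0, pixel + rng.randint(-max_delta, max_delta))) for pixel in row]
        for row in image
    ]


def augment(dataset, copies_per_image=2, max_delta=10, seed=0):
    """Return the original (image, label) pairs plus augmented variants.

    Labels are copied unchanged, assuming the transformations do not
    change what the image depicts.
    """
    rng = random.Random(seed)  # seeded so the augmented set is reproducible
    augmented = list(dataset)
    for image, label in dataset:
        augmented.append((horizontal_flip(image), label))
        for _ in range(copies_per_image):
            augmented.append((add_noise(image, max_delta, rng), label))
    return augmented


tiny_image = [
    [0, 50, 100],
    [150, 200, 250],
]
dataset = [(tiny_image, "cat")]
bigger = augment(dataset)
print(len(dataset), "->", len(bigger))
```

Each original image yields one flipped copy and `copies_per_image` noisy copies, so the single example above becomes four training examples with the same label.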

Conclusion

All of the above are useful tools for tackling the current challenges the world faces regarding artificial intelligence. This is a paradigm shift, and more companies are getting on board every day. Tech experts know how to build models and what AI can do; the focus now is on how to improve it, and we’ve come to understand that this depends on the data we use.

Nisha Arya is a Data Scientist and Freelance Technical Writer. She is particularly interested in providing Data Science career advice or tutorials and theory-based knowledge around Data Science. She also wishes to explore the different ways Artificial Intelligence is benefiting, or can benefit, the longevity of human life. A keen learner, she seeks to broaden her tech knowledge and writing skills while helping guide others.