K-Means & Other Clustering Algorithms: A Quick Intro with Python

In this intro cluster analysis tutorial, we'll check out a few algorithms in Python so you can get a basic understanding of the fundamentals of clustering on a real dataset.

Nikos Koufos, LearnDataSci Author.

Clustering is the grouping of objects together so that objects belonging in the same group (cluster) are more similar to each other than those in other groups (clusters). In this intro cluster analysis tutorial, we'll check out a few algorithms in Python so you can get a basic understanding of the fundamentals of clustering on a real dataset.

The Dataset

For the clustering problem, we will use the famous Zachary’s Karate Club dataset. The story behind the data set is quite simple: There was a Karate Club that had an administrator “John A” and an instructor “Mr. Hi” (both pseudonyms). Then a conflict arose between them, causing the students (Nodes) to split into two groups. One that followed John and one that followed Mr. Hi.

Source: Wikipedia

Getting Started with Clustering in Python

But enough with the introductory talk, let’s get to main reason you are here, the code itself. First of all, you need to install both scikit-learn and networkx libraries to complete this tutorial. If you don’t know how, the links above should help you. Also, feel free to follow along by grabbing the source code for this tutorial over on Github.

Usually, the datasets that we want to examine are available in text form (JSON, Excel, simple txt file, etc.) but in our case, networkx provide it for us. Also, to compare our algorithms, we want the truth about the members (who followed whom) which unfortunately is not provided. But with these two lines of code, you will be able to load the data and store the truth (from now on we will refer it as ground truth):

# Load and Store both data and groundtruth of Zachary's Karate Club G = nx.karate_club_graph() groundTruth = [0,0,0,0,0,0,0,0,1,1,0,0,0,0,1,1,0,0,1,0,1,0,1,1,1,1,1,1,1,1,1,1,1,1]

The final step of the data preprocessing, is to transform the graph into a matrix (desirable input for our algorithms). This is also quite simple:

def graphToEdgeMatrix(G): # Initialize Edge Matrix edgeMat = [[0 for x in range(len(G))] for y in range(len(G))] # For loop to set 0 or 1 ( diagonal elements are set to 1) for node in G: tempNeighList = G.neighbors(node) for neighbor in tempNeighList: edgeMat[node][neighbor] = 1 edgeMat[node][node] = 1 return edgeMat

Before we get going with the Clustering Techniques, I would like you to get a visualization on our data. So, let’s compile a simple function to do that:

def drawCommunities(G, partition, pos): # G is graph in networkx form # Partition is a dict containing info on clusters # Pos is base on networkx spring layout (nx.spring_layout(G)) # For separating communities colors dictList = defaultdict(list) nodelist = [] for node, com in partition.items(): dictList[com].append(node) # Get size of Communities size = len(set(partition.values())) # For loop to assign communities colors for i in range(size): amplifier = i % 3 multi = (i / 3) * 0.3 red = green = blue = 0 if amplifier == 0: red = 0.1 + multi elif amplifier == 1: green = 0.1 + multi else: blue = 0.1 + multi # Draw Nodes nx.draw_networkx_nodes(G, pos, nodelist=dictList[i], node_color=[0.0 + red, 0.0 + green, 0.0 + blue], node_size=500, alpha=0.8) # Draw edges and final plot plt.title("Zachary's Karate Club") nx.draw_networkx_edges(G, pos, alpha=0.5)

What that function does is to simply extract the number of clusters that are in our result and then assign a different color to each of them (up to 10 for the given time is fine) before plotting them.

Clustering Algorithms

Some clustering algorithms will cluster your data quite nicely and others will end up failing to do so. That is one of the main reasons why clustering is such a difficult problem. But don’t worry, we won’t let you drown in an ocean of choices. We'll go through a few algorithms that are known to perform very well.

K-Means Clustering

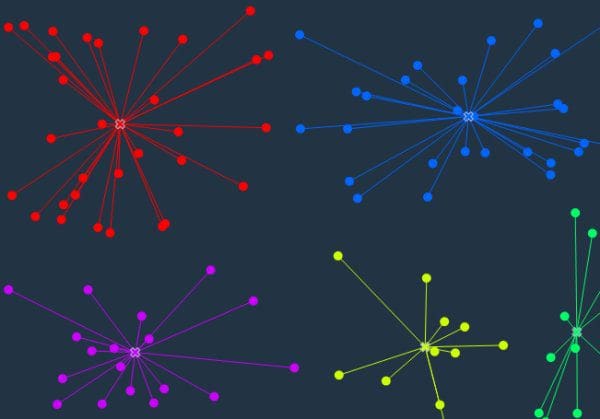

Source: github.com/nitoyon/tech.nitoyon.com

K-means is considered by many the gold standard when it comes to clustering due to its simplicity and performance, and it's the first one we'll try out. When you have no idea at all what algorithm to use, K-means is usually the first choice. Bear in mind that K-means might under-perform sometimes due to its concept: spherical clusters that are separable in a way so that the mean value converges towards the cluster center. To simply construct and train a K-means model, use the follow lines:

# K-means Clustering Model kmeans = cluster.KMeans(n_clusters=kClusters, n_init=200) kmeans.fit(edgeMat) # Transform our data to list form and store them in results list results.append(list(kmeans.labels_))

Agglomerative Clustering

The main idea behind agglomerative clustering is that each node starts in its own cluster, and recursively merges with the pair of clusters that minimally increases a given linkage distance. The main advantage of agglomerative clustering (and hierarchical clustering in general) is that you don’t need to specify the number of clusters. That of course, comes with a price: performance. But, in scikit’s implementation, you can specify the number of clusters to assist the algorithm’s performance. To create and train an agglomerative model use the following code:

# Agglomerative Clustering Model agglomerative = cluster.AgglomerativeClustering(n_clusters=kClusters, linkage="ward") agglomerative.fit(edgeMat) # Transform our data to list form and store them in results list results.append(list(agglomerative.labels_))

Spectral

The Spectral clustering technique applies clustering to a projection of the normalized Laplacian. When it comes to image clustering, spectral clustering works quite well. See the next few lines of Python for all the magic:

# Spectral Clustering Model spectral = cluster.SpectralClustering(n_clusters=kClusters, affinity="precomputed", n_init= 200) spectral.fit(edgeMat) # Transform our data to list form and store them in results list results.append(list(spectral.labels_))

Affinity Propagation

Well this one is a bit different. Unlike the previous algorithms, you can see AF does not require the number of clusters to be determined before running the algorithm. AF, performs really well on several computer vision and biology problems, such as clustering pictures of human faces and identifying regulated transcripts:

# Affinity Propagation Clustering Model affinity = cluster.affinity_propagation(S=edgeMat, max_iter=200, damping=0.6) # Transform our data to list form and store them in results list results.append(list(affinity[1]))

Metrics & Plotting

Well, it is time to choose which algorithm is more suitable for our data. A simple visualization of the result might work on small datasets, but imagine a graph with one thousand, or even ten thousand, nodes. That would be slightly chaotic for the human eye. So, let me show how to calculate the Adjusted Rand Score (ARS) and the Normalized Mutual Information (NMI):

# Append the results into lists for x in results: nmiResults.append(normalized_mutual_info_score(groundTruth, x)) arsResults.append(adjusted_rand_score(groundTruth, x))

If you're unfamiliar with these metrics, here's a quick explanation:

Normalized Mutual Information (NMI)

Mutual Information of two random variables is a measure of the mutual dependence between the two variables. Normalized Mutual Information is a normalization of the Mutual Information (MI) score to scale the results between 0 (no mutual information) and 1 (perfect correlation). In other words, 0 means dissimilar and 1 means perfect match.

Adjusted Rand Score (ARS)

Adjusted Rand Score on the other hand, computes a similarity measure between two clusters by considering all pairs of samples and counting pairs that are assigned in the same or different clusters in the predicted and true clusters. If that's a little weird to think about, have in mind that, for now, 0 is the lowest similarity and 1 is the highest.

So, to get a combination of these metrics (the NMI and ARS), we simply calculate the average value of their sum. And remember, the higher the number, the better the result.

Below, I have plotted the score evaluation so we can get a better understanding of our results. We could plot them in many ways, as points, as a straight line, but I think a bar chart is the better choice for our case. To do so, just use the following code:

# Code for plotting results # Average of NMI and ARS y = [sum(x) / 2 for x in zip(nmiResults, arsResults)] xlabels = ['Spectral', 'Agglomerative', 'Kmeans', 'Affinity Propagation'] fig = plt.figure() ax = fig.add_subplot(111) # Set parameters for plotting ind = np.arange(len(y)) width = 0.35 # Create barchart and set the axis limits and titles ax.bar(ind, y, width,color='blue', error_kw=dict(elinewidth=2, ecolor='red')) ax.set_xlim(-width, len(ind)+width) ax.set_ylim(0,2) ax.set_ylabel('Average Score (NMI, ARS)') ax.set_title('Score Evaluation') # Add the xlabels to the chart ax.set_xticks(ind + width / 2) xtickNames = ax.set_xticklabels(xlabels) plt.setp(xtickNames, fontsize=12) # Add the actual value on top of each chart for i, v in enumerate(y): ax.text( i, v, str(round(v, 2)), color='blue', fontweight='bold') # Show the final plot plt.show()

As you can see in the chart below, K-means and Agglomerative clustering have the best results for our dataset (best possible outcome). That of course, does not mean that Spectral and AF are low-performing algorithms, just that the did not fit in our data.

Well, that's it for this one!

Thanks for joining me in this clustering intro. I hope you found some value in seeing how we can easily manipulate a public dataset and apply several different clustering algorithms in Python. Let me know if you have any questions in the comments below, and feel free to attach a clustering project you've experimented with!

Bio: Nikos Koufos is a LearnDataSci Author, postgraduate in Computer Science & Engineering at the University Ioannina, Greece, and Computer Science undergraduate teaching assistant.

Original. Reposted with permission.

Related: