The Evolution of IoT Edge Analytics: Strategies of Leading Players

This article explores the significance and evolution of IoT edge analytics. Since the author believes that hardware capabilities will converge for large vendors, IoT analytics will be the key differentiator.

IoT Edge Analytics

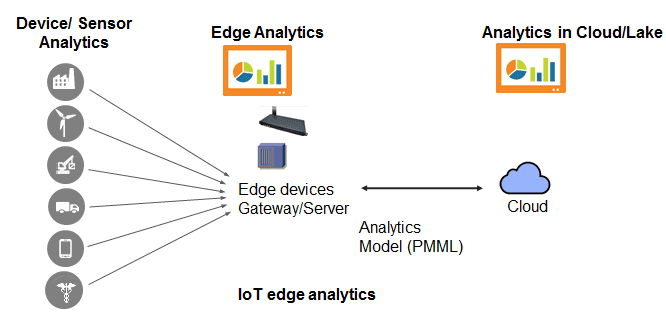

Edge computing (or Edge analytics) is a relatively new approach for many companies. Most architectures are used to sending all data to the Cloud or data lake. In Edge computing, that does not happen: data can be processed near the source, and not all of it is sent back to the Cloud. For large-scale IoT deployments, this functionality is critical because of the sheer volume of data being generated.

In this article, I explore the significance and evolution of IoT edge analytics. I believe that hardware capabilities will converge for large vendors (Cisco, Dell, HPE, Intel, IBM and others). Hence, IoT analytics will be the key differentiator.

I explore these ideas as part of the Data Science for Internet of Things (IoT) course.

Companies like Cisco, Intel and others were early proponents of Edge computing, positioning their gateways as Edge devices. Historically, gateways performed traffic aggregation and routing. In the Edge computing model, the core gateway functionality has evolved: gateways do not just route data but also store it and perform computations on it. Edge analytics allows us to do some pre-processing or filtering of the data closer to where it is created. Thus, data that falls within normal parameters can be ignored or kept in low-cost storage, while abnormal readings may be sent to the Lake or the in-memory database.
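The filtering idea above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's gateway API: the `EdgeFilter` class, the normal range of 10 to 80 and the sample readings are all hypothetical, and the "uplink" is just a list standing in for whatever transport sends data to the Lake.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class EdgeFilter:
    """Hypothetical gateway-side filter: keep normal readings local, forward the rest."""
    low: float   # lower bound of the assumed "normal" range
    high: float  # upper bound of the assumed "normal" range
    local_store: List[float] = field(default_factory=list)   # stand-in for low-cost storage
    uplink_queue: List[float] = field(default_factory=list)  # stand-in for the link to the Cloud

    def ingest(self, reading: float) -> str:
        """Route one reading and report where it went."""
        if self.low <= reading <= self.high:
            self.local_store.append(reading)
            return "local"
        self.uplink_queue.append(reading)
        return "cloud"


edge = EdgeFilter(low=10.0, high=80.0)
for value in [21.5, 22.0, 95.3, 20.9, -4.0]:
    edge.ingest(value)

print("kept at the edge:", edge.local_store)
print("sent upstream:", edge.uplink_queue)
```

In this sketch only two of the five readings would travel over the network; a real deployment would replace the fixed thresholds with whatever rule or model defines "normal" for that sensor.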

Now, a new segment of the market is developing, driven by vendors like Dell, HPE and others. These vendors are positioning their servers as Edge devices by adding storage, computing power and analytics capabilities. This has implications for Edge analytics for IoT.

IoT Edge analytics is typically applicable to oil rigs, mines and factories, which operate in low-bandwidth environments and need low-latency decisions. Edge analytics could apply not just to sensor data but also to richer forms of data such as video. IoT datasets are massive. A typical Formula One car carries 150-300 sensors. In aviation, for example, the current Airbus A350 model has close to 6,000 sensors and generates 2.5 Tb of data per day. A city (for example the smart city of Santander in Spain) includes a network of more than 25,000 sensors. To prevent these sensors from constantly pinging the Cloud, we need some form of interim processing. Hence the need for Edge processing in IoT analytics. We can consider Edge devices from two perspectives: the evolution of the traditional gateway vendors and the evolution of the traditional server vendors.

Evolution of the Gateway

Both Intel and Cisco have been working with IoT Edge analytics for some time.

Cisco incubated and then acquired a company called ParStream. ParStream created a lightweight (sub-50MB) database platform primarily for use in IoT platforms such as wind turbines. Cisco also offers IoT analytics products for specific verticals, such as Cisco Connected Analytics for Events, Retail, Service Providers, IT, Network Deployment, Mobility, Collaboration and Contact Center. More recently, Cisco and IBM have started working together to bring Watson capabilities to the Edge.

Intel’s product suite is based on a series of acquisitions such as Mashery (APIs) and McAfee (security). Deployed through the IoT Developer Kits, the end-to-end platform includes the Wind River Edge Management System, IoT Gateway, Cloud Analytics, McAfee Security for IoT Gateways, Enhanced Privacy ID (EPID) modules, and API and Traffic Management (based on Mashery), possibly with synergies with Cloudera, in which Intel is an investor.

There are of course other players on the Edge who could also incorporate Edge analytics. For instance, the ACCESS NetFront browser, used widely in set-top boxes and automotive applications, could also perform Edge analytics functions. This opens up the possibility of deploying IoT analytics using web technology, i.e. JavaScript engines based on Node.js or PhantomJS. Similarly, SAP has deployed features in HANA (its in-memory database) that enable synchronization of data between the enterprise and remote locations at the edge of the network.

Evolution of the server

More recently, we are seeing traditional server vendors deploying their servers as Edge devices. On the hardware side, devices such as the Dell Edge Gateway 5000 series are built specifically for building and industrial automation. Similarly, the HPE Edgeline series is positioned in the same space. Much more interesting is the analytics strategy, for example Dell's use of Statistica as a middleware solution to deploy analytics at the Edge. This allows Dell to offer a combined hardware/software solution for analytics (especially relevant for IoT analytics). More specifically, it allows an analytics model to be created in one location (e.g. on the Cloud) and deployed to other parts of the ecosystem (e.g. on the Edge device or at the actual sensor location itself, such as a windmill) using technologies like PMML (more on this below).

Impact on IoT analytics

So, the real question is: What does it mean for IoT Analytics?

- Firstly, we need to distinguish between two stages: creating the analytics model and executing the analytics model. Creating the model involves collecting data, storing data, preparing the data for analytics (some ETL functions), choosing the analytics algorithm, training the algorithm, validating the goodness of fit, etc. The output of the trained model will be rules, recommendations, scores, etc. Only then can we deploy the model. So, when we say we are implementing analytics at the ‘edge’, what exactly are we doing? If you follow the examples above (e.g. from Dell), the more general case is creating the model in one location and potentially deploying it at multiple points (e.g. from the Cloud to a gateway, server, factory, etc.).

- PMML becomes important for the ability to deploy models in multiple locations: Predictive Model Markup Language (PMML) is an XML-based predictive model interchange format. PMML provides a way for analytic applications to describe and exchange predictive models produced by data mining and machine learning algorithms. It supports common models such as logistic regression and feedforward neural networks. (Wikipedia)

- Decentralized processing has inherent complexity: When you decentralize processing, you face some inherently complex situations, e.g. management and replication of master data, security, storage, etc. In addition, the process of creating the analytics model on one machine and deploying it on another machine is new.

- Peer-to-peer node communication may be a real possibility over time: IoT is currently deployed through silos. Edge networks offer the possibility of peer-to-peer communication between Edge devices if they have sufficient processing capability.
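The split between creating a model and executing it can be illustrated with a small Python sketch. It is deliberately simplified and rests on several assumptions: the "model" is only a mean and standard deviation used for z-score anomaly detection, the XML it writes is a made-up interchange document rather than schema-valid PMML, and the function names (`train_in_cloud`, `export_model`, `load_model`, `score_at_edge`) and sample data are hypothetical.

```python
import statistics
import xml.etree.ElementTree as ET
from typing import Dict, List


def train_in_cloud(history: List[float]) -> Dict[str, float]:
    """'Create' stage: fit a trivial model (mean and sample stdev) on historical data."""
    return {"mean": statistics.fmean(history), "stdev": statistics.stdev(history)}


def export_model(params: Dict[str, float]) -> str:
    """Serialize the model to a small XML document (illustrative only, not real PMML)."""
    root = ET.Element("Model", name="zscore-anomaly")
    for key, value in params.items():
        # repr() of a float round-trips exactly through float()
        ET.SubElement(root, "Parameter", name=key, value=repr(value))
    return ET.tostring(root, encoding="unicode")


def load_model(xml_text: str) -> Dict[str, float]:
    """'Deploy' stage: rebuild the parameters on the edge device from the XML."""
    root = ET.fromstring(xml_text)
    return {p.get("name"): float(p.get("value")) for p in root.iter("Parameter")}


def score_at_edge(params: Dict[str, float], reading: float, threshold: float = 3.0) -> bool:
    """'Execute' stage: flag a reading whose z-score exceeds the threshold."""
    z = abs(reading - params["mean"]) / params["stdev"]
    return z > threshold


# Created once, centrally ...
cloud_model = train_in_cloud([20.0, 21.0, 19.0, 20.5, 19.5])
document = export_model(cloud_model)

# ... then shipped as text and executed at any number of edge locations.
edge_model = load_model(document)
print(score_at_edge(edge_model, 20.4))  # reading close to the historical mean
print(score_at_edge(edge_model, 35.0))  # reading far from the historical mean
```

The point of the sketch is that the edge device never sees the training data or the training code; it only needs a parser for the interchange document and a scoring routine, which is the role PMML plays for richer model types.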

Conclusions

- IoT edge analytics is an exciting space. It is also a nascent space with many more developments yet to come.

- Concepts such as PMML (for deploying models) and peer Edge nodes could be key drivers of IoT deployments in future.

- I expect the hardware capabilities of Dell, HPE, Cisco, IBM and Intel to converge. Hardware will not provide real differentiation. The key driver will be IoT analytics and algorithmic capabilities.

I explore these ideas as part of the Data Science for Internet of Things (IoT) course and my teaching at Oxford University.
