Recreating Imagination: DeepMind Builds Neural Networks that Spontaneously Replay Past Experiences

DeepMind researchers created a model that replays past experiences in a way that simulates the mechanisms of the hippocampus.

The ability to use knowledge abstracted from previous experiences is one of the magical qualities of human learning. Our dreams are often influenced by past experiences, and anybody who has suffered a traumatic experience can tell you how they constantly see flashes of it in new situations. The human brain is able to make rich inferences in the absence of data by generalizing from past experiences. This replay of experiences has puzzled neuroscientists for decades, as it is an essential component of our learning processes. In artificial intelligence (AI), the idea of neural networks that can spontaneously replay learned experiences seems like a fantasy. Recently, a team of AI researchers from DeepMind published a fascinating paper describing a method focused on doing precisely that.

In neuroscience, the ability of the brain to draw inferences from past experiences is called replay. Although many of the mechanisms behind experience replay are still unknown, neuroscience research has made a lot of progress in explaining this cognitive phenomenon. Understanding the neuroscientific roots of experience replay is essential in order to recreate its mechanics in AI agents.

The Neuroscience Theory of Replay

The origins of neural replay can be attributed to the work of researchers such as Nobel Prize in Medicine winner John O’Keefe. Dr. O’Keefe focused much of his work on explaining the role of the hippocampus in the creation of experiences. The hippocampus is a curved formation in the brain that is part of the limbic system and is typically associated with the formation of new memories and emotions. Because the brain is lateralized and symmetrical, you actually have two hippocampi, one located just above each ear and about an inch and a half inside your head.

Leading neuroscientific theories suggest that different areas of the hippocampus are related to different types of memories. For example, the rear part of the hippocampus is involved in the processing of spatial memories. Using a software architecture analogy, the hippocampus acts as a cache system for memories: it takes in information, registers it, and temporarily stores it before shipping it off to be filed in long-term memory.

Going back to Dr. O’Keefe’s work, one of his key contributions to neuroscience research was the discovery of place cells, which are hippocampal cells that fire in response to specific environmental conditions such as a given place. In one of Dr. O’Keefe’s experiments, rats ran the length of a single corridor or circular track, so researchers could easily determine which neuron coded for each position within the corridor.

Following that experiment, the scientists recorded from the same neurons while the rats rested. During rest, the cells sometimes spontaneously fired in rapid sequences demarking the same path the animal ran earlier, but at a greatly accelerated speed. They called these sequences experience replay.

Although we know that experience replay is a key part of the learning process, its mechanics are particularly difficult to recreate in AI systems. This is partly because experience replay depends on other cognitive mechanisms, such as concept abstraction, that are just starting to make inroads in the world of AI. However, the DeepMind team thinks that we have enough to get started.

Replay in AI

Among the different fields of AI, reinforcement learning seems particularly well suited to incorporating experience replay mechanisms. A reinforcement learning agent builds knowledge by constantly interacting with an environment, which allows it to record and replay past experiences more efficiently than traditional supervised models. Some of the earliest work on recreating experience replay in reinforcement learning agents dates back to a seminal 1992 paper that was influential in the creation of DeepMind’s DQN networks, which mastered Atari games in 2015.

From an architecture standpoint, adding experience replay to a reinforcement learning network seems relatively simple. Most solutions in the space rely on an additional replay buffer that records the experiences learned by the agent and plays them back at specific times. Some architectures replay the experiences randomly, while others use a specific preferred order that optimizes the agent’s learning.
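To make the replay buffer idea concrete, here is a minimal Python sketch. It is not DeepMind’s implementation: the `ReplayBuffer` class, its method names, and the simple priority weighting are all hypothetical choices made for illustration. It shows the two playback styles mentioned above, uniform random sampling and sampling that favors experiences with a higher priority.

```python
import random
from collections import deque


class ReplayBuffer:
    """Hypothetical fixed-capacity store of agent experiences."""

    def __init__(self, capacity, seed=None):
        # deque(maxlen=...) silently drops the oldest entry once full.
        self.experiences = deque(maxlen=capacity)
        self.priorities = deque(maxlen=capacity)
        self.rng = random.Random(seed)

    def add(self, experience, priority=1.0):
        """Record one experience, e.g. (state, action, reward, next_state)."""
        self.experiences.append(experience)
        self.priorities.append(priority)

    def sample_uniform(self, batch_size):
        """Random replay: every stored experience is equally likely."""
        return self.rng.sample(list(self.experiences), batch_size)

    def sample_prioritized(self, batch_size):
        """Preferred-order replay: draw (with replacement) with probability
        proportional to each experience's priority."""
        return self.rng.choices(
            list(self.experiences), weights=list(self.priorities), k=batch_size
        )

    def __len__(self):
        return len(self.experiences)


buffer = ReplayBuffer(capacity=3, seed=0)
for step in range(4):
    # Assumed toy experiences; a real agent would store environment transitions.
    buffer.add(("state", step), priority=float(step))

random_batch = buffer.sample_uniform(2)
priority_batch = buffer.sample_prioritized(2)
```

How priorities are assigned is the interesting design choice. This sketch leaves it to the caller, whereas practical systems typically derive the priority from how surprising an experience was to the agent.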

The way in which experiences are replayed in a reinforcement learning model plays a key role in the learning experience of an AI agent. At the moment, two of the most actively explored modes are known as movie replay and imagination replay. To explain both modes, let’s use an analogy from the DeepMind paper:

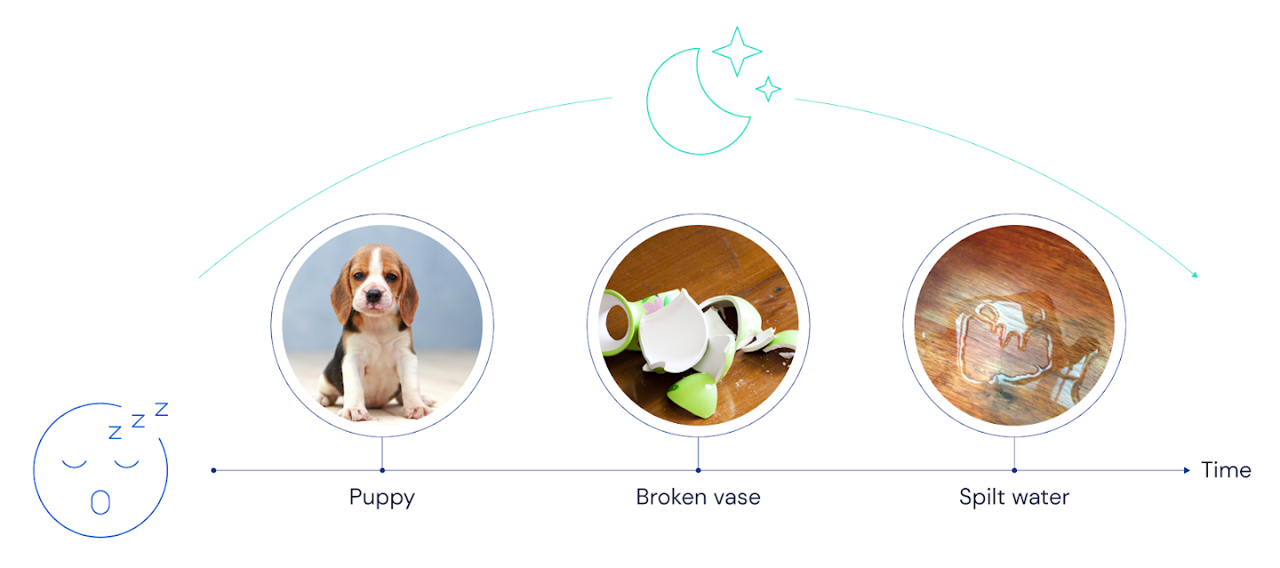

Suppose you come home and, to your surprise and dismay, discover water pooling on your beautiful wooden floors. Stepping into the dining room, you find a broken vase. Then you hear a whimper, and you glance out the patio door to see your dog looking very guilty.

A reinforcement learning agent based on the previous architecture will record this sequence of events (water, vase, dog) in the replay buffer.

The movie replay strategy replays the stored memories in the exact order in which they happened. In this case, the replay buffer will replay the sequence “water, vase, dog” in that exact order. Architecturally, the model uses an offline learner agent to replay those experiences.
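Movie replay can be sketched in a few lines of Python. The `movie_replay` function and the `OfflineLearner` class below are hypothetical names, and the “learning” is reduced to a counter that strengthens each pair of consecutive events. The sketch only illustrates that the stored order is preserved on every replay.

```python
from collections import defaultdict


def movie_replay(episode):
    """Yield consecutive (event, next_event) pairs in the stored order."""
    for current, following in zip(episode, episode[1:]):
        yield current, following


class OfflineLearner:
    """Hypothetical learner that strengthens links between replayed events."""

    def __init__(self):
        self.strength = defaultdict(float)

    def learn(self, transitions, step=1.0):
        # Each replayed pair gets its association strengthened by `step`.
        for pair in transitions:
            self.strength[pair] += step


episode = ["water", "vase", "dog"]  # the order in which events were experienced
learner = OfflineLearner()
for _ in range(3):  # replay the same "movie" three times while offline
    learner.learn(movie_replay(episode))
```

After the three passes, the links water-to-vase and vase-to-dog have each been strengthened three times, while no link from dog to vase exists, because that order was never experienced.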

In the imagination strategy, replay doesn’t literally rehearse events in the order they were experienced. Instead, it infers or imagines the real relationships between events and synthesizes sequences that make sense given an understanding of how the world works. The imagination strategy skips the exact order of events and instead infers the most plausible association between experiences. In terms of the architecture of the agent, the replay sequence depends on the currently learned model.
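As a rough illustration of the imagination strategy, the Python sketch below reorders the observed events using a learned model. Everything here is an assumption made for the example: the `imagination_replay` function is hypothetical, and the “learned model” is just a dictionary of cause-to-effect links saying that the dog broke the vase and the vase spilled the water. A real agent would have to learn such structure from experience.

```python
def imagination_replay(observed, causes):
    """Reorder observed events by following learned cause -> effect links.

    `causes` maps an event to the event it is believed to produce.
    Events the model says nothing about keep their experienced order.
    """
    observed_set = set(observed)
    # Events that the model explains as the effect of another observed event.
    effects = {e for c, e in causes.items() if c in observed_set}
    # Root causes: observed events that nothing else observed explains.
    roots = [e for e in observed if e not in effects]

    sequence = []
    for root in roots:
        current = root
        # Walk the causal chain, staying within what was actually observed.
        while current in observed_set and current not in sequence:
            sequence.append(current)
            current = causes.get(current)
    # Anything not reached (e.g. a causal loop) is appended in observed order.
    sequence += [e for e in observed if e not in sequence]
    return sequence


observed = ["water", "vase", "dog"]          # order of experience
learned_model = {"dog": "vase", "vase": "water"}  # assumed causal beliefs

imagined = imagination_replay(observed, learned_model)
unchanged = imagination_replay(observed, {})  # no model: fall back to experience
```

With this assumed model, the replayed sequence becomes dog, vase, water, and with an empty model it stays water, vase, dog. The output changes as the model changes, which is the dependence on the learned model described above.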

Conceptually, neuroscience research suggests that movie replay would be useful to strengthen the connections between neurons that represent different events or locations in the order they were experienced. However, imagination replay might be foundational to the creation of new sequences. The DeepMind team pushed on this imagination replay theory and showed that the reinforcement learning agent was able to generate remarkable new sequences based on previous experiences.

Current implementations of experience replay mostly follow the movie strategy because of its simplicity, but researchers are starting to make inroads with models that resemble the imagination strategy. Certainly, the incorporation of experience replay modules can be a great catalyst for the learning of reinforcement learning agents. Even more fascinating is the fact that by observing how AI agents replay experiences, we can develop new insights about our own human cognition.

Original. Reposted with permission.
