20 AI, Data Science, Machine Learning Terms You Need to Know in 2020 (Part 2)

20 AI, Data Science, Machine Learning Terms You Need to Know in 2020 (Part 2)

We explain important AI, ML, Data Science terms you should know in 2020, including Double Descent, Ethics in AI, Explainability (Explainable AI), Full Stack Data Science, Geospatial, GPT-2, NLG (Natural Language Generation), PyTorch, Reinforcement Learning, and Transformer Architecture.

This is the 2nd part of our list of 20 AI, Data Science, Machine Learning terms to know for 2020. Here is 20 AI, Data Science, Machine Learning Terms You Need to Know in 2020 (Part 1).

Those definitions were compiled by KDnuggets Editors Matthew Dearing, Matthew Mayo, Asel Mendis, and Gregory Piatetsky.

In this installment, we explain

- Double Descent

- Ethics in AI

- Explainability (Explainable AI)

- Full Stack Data Science

- Geospatial

- GPT-2

- NLG (Natural Language Generation)

- PyTorch

- Reinforcement Learning

- Transformer Architecture

Double Descent

This is a really interesting concept, which Pedro Domingos, a leading AI researcher, called one of the most significant advances in ML theory in 2019. The phenomenon is shown in Figure 1.

Fig. 1: Test/Train Error vs Model Size (Source OpenAI blog)

The error first declines as the model gets larger, then the error increases as the model begins to overfit, but then the error declines again with the increasing model size, data size, or training time.

The classical statistical theory says that the bigger model will be worse because of overfitting. However, the modern ML practice shows that a very big Deep Learning model is usually better than a smaller one.

OpenAI blog notes that this occurs in CNNs, ResNets, and transformers. OpenAI researchers observed that when model is not large enough to fit the training set, larger models had higher test error. However, after passing this threshold, larger models with more data started performing better.

Read the original OpenAI blog and a longer explanation by Rui Aguiar.

Written by Gregory Piatetsky.

Ethics in AI

Ethics in AI is concerned with the ethics of practical artificial intelligence technology.

AI ethics is a very broad field, and encompasses a wide variety of seemingly very different aspects of ethical concern. Concerns over the use of AI, and of all types of technology, more generally, have existed for as long as these technologies were first conceived of. Yet given the recent explosion of AI, machine learning, and related technologies, and their increasingly quick adoption and integration into society at large, these ethical concerns have risen to the forefront of many minds both within and outside of the AI community.

While esoteric and currently abstract ethical concerns such as the potential future rights of sentient robots can also be included under the umbrella of AI ethics, more pressing contemporary concerns such as AI system transparency, the potential biases of these systems, and the representative inclusion of all categories of society participants in the engineering of said systems are likely of much greater and immediate concern to most people. How are decisions being made in AI systems? What assumptions are these systems making about the world and the people in it? Are these systems crafted by a single dominant majority class, gender, and race of society at large?

Rachel Thomas, Director of the USF Center for Applied Data Ethics, has stated the following about what constitutes working on AI ethics, which goes beyond concerns related directly and solely to the lower-level creation of AI systems, and takes into account the proverbial bigger picture:

founding tech companies and building products in ethical ways;

advocating and working for more just laws and policies;

attempting to hold bad actors accountable;

and research, writing, and teaching in the field.

The dawn of autonomous vehicles has presented additional specific challenges related to AI ethics, as have the potential weaponization of AI systems, and a growing international AI arms race. Contrary to what some would have us believe, these aren't problems predestined for a dystopian future, yet they are problems which will require some critical thought, proper preparation, and extensive cooperation. Even with what we may believe to be adequate consideration, AI systems might still prove to be uniquely and endemically problematic, and the unintended consequences of AI systems, another aspect of AI ethics, will need to be considered. Written by Matthew Mayo

Explainability (Explainable AI)

As AI and Machine Learning become a larger part of our lives, with smartphones, medical diagnostics, self-driving cars, intelligent search, automated credit decisions, etc. having decisions made by AI, one important aspect this decision making comes to the forefront - explainability. Humans can usually explain their knowledge-based decisions (whether such explanations are accurate is a separate question) and that contributes to trust by other humans for such decisions. Can AI and ML algorithms explain their decisions? This is important for

- improving understanding and trust in the decision

- deciding accountability or liability in case something goes wrong.

- avoiding discrimination and societal bias in decisions

We note that some form of explainability is required by GDPR.

Explainable AI (XAI) is becoming a major field, with DARPA launching XAI program in 2018.

Fig. 2: Explainable AI Venn Diagram. (Source).

Explainability is a multifaceted topic. It encompasses both individual models and the larger systems that incorporate them. It refers not only to whether the decisions a model outputs are interpretable, but also whether or not the whole process and intention surrounding the model can be properly accounted for. The goal is to have an efficient trade-off between accuracy and explainability along with a great human-computer interface which can help translate the model to understandable representation for the end users.

Some of the more popular methods for Explainable AI include LIME and SHAP.

Explainability tools are now offered by Google (Explainable AI service), IBM AIX 360 and other vendors.

See also a KDnuggets blog on Explainable AI by Preet Gandhi and Explainable Artificial Intelligence (XAI): Concepts, Taxonomies, Opportunities and Challenges toward Responsible AI (arxiv 1910.10045). Written by Gregory Piatetsky.

Full Stack Data Science

The Full Stack Data Scientist is the epitome of the Data Science Unicorn. Someone who possesses the skills of a Statistician that can model a real life scenario, a Computer Scientist that can manage databases and deploy model to the web, and a businessman that translates the insights and the models to actionable insights to the end users who are typically senior management that does not care about the backend work.

Below are two great talks that can give you an idea about the different nuances of an End-to-End Data Science product.

1. Going Full Stack with Data Science: Using Technical Readiness, by Emily Gorcenski

2. Video: #42 Full Stack Data Science (with Vicki Boykis) - DataCamp.

Read #42 Full Stack Data Science (with Vicki Boykis) - Transcript.

Written by Asel Mendis.

Geospatial

Geospatial is a term for any data that has a spatial/location/geographical component to it. Geospatial analysis has been gaining in popularity due to the onset of technology that tracks user movements and creates geospatial data as a by-product. The most famous technologies (Geographic Information Systems – GIS) used for spatial analysis are ArcGIS, QGIS, CARTO and MapInfo.

The current epidemic of Coronavirus is tracked by ARCGIS dashboard, developed by Johns Hopkins U. Center for Systems Science and Engineering.

Fig. 3: Coronavirus stats as of March 2, 2020, according to Johns Hopkins CSSE dashboard.

Geospatial data can be used in applications from sales prediction modelling to assessing government funding initiative. Because the data is in reference to a specific location, there are many insights we can gather. Different countries record and measure their spatial data differently and to varying degrees. The geographic boundaries of a country are different and must be treated as unique to each country. Written by Asel Mendis.

GPT-2

GPT-2 is a transformer-based language model created by OpenAI. GPT-2 is a generative language model, meaning that it generates text by predicting word by word which word comes next in a sequence, based on what the model has previously learned. In practice, a user-supplied prompt is presented to the model, following which subsequent words are generated. GPT-2 was trained to predict the next word on a massive amount (40 GB) of internet text, and is built solely using transformer decoder blocks (contrast this with BERT, which uses encoder blocks). For more information on transformers see below.

GPT-2 isn't a particularly novel undertaking; what sets it apart from similar models, however, is the number of its trainable parameters, and the storage size required for these trained parameters. While OpenAI initially released a scaled down version of the trained model — out of concerns that there could be malicious uses for it — the full model contains 1.5 billion parameters. This 1.5 billion trainable parameter model requires 6.5 GB of trained parameter (synonymous to "trained model") storage.

Upon release, GPT-2 generated a lot of hype and attention in large part due to the selected examples which accompanied it, the most famous of which — a news report documenting the discovery of English speaking unicorns in the Andes — can be read here. A unique application of the GPT-2 model has surfaced in form of the AI Dungeon, an online text adventure game which treats user-supplied text as prompts for input into the model, and generated output is used to advance the game and user experience. You can try out AI Dungeon here.

While text generation via next word prediction is the bread and butter (and pizzazz) of GPT-2 and decoder block transformers more generally, they have shown promise in additional related areas, such as language translation, text summarization, music generation, and more. For technical details on the GPT-2 model and additional information, see Jay Alammar's fantastic Illustrated GPT-2. Written by Matthew Mayo.

NLG (Natural Language Generation)

Significant progress has been made in natural language understanding – getting a computer to interpret human input and provide a meaningful response. Many people enjoy this technology every day through personal devices, such as Amazon Alexa and Google Home. Not unexpectedly, kids really like asking for jokes.

The tech here is that the machine learning backend is trained on a wide variety of inputs, such as “please tell me a joke,’ to which it can select one from a prescribed list of available responses. What if Alexa or Google Home could tell an original joke, one that was created on the fly based on training from a large set of human authored jokes. That’s natural language generation.

Original jokes are only the beginning (can a trained machine learning model even be funny?), as powerful applications of NLG are being developed for analytics that generate human-understandable summaries of data sets. The creative side of a computer can also be explored through NLG techniques that output original movie scripts, even ones that star David Hasselhoff, as well as text-based stories, similar to a tutorial you can follow that leverages long short-term memory, the recurrent neural network architecture with feedback, that is another hot research topic today.

While business analysis and entertainment applications of computer-generated language might be appealing and culture-altering, ethical concerns are already boiling over. NLG's capability to deliver “fake news” that is autonomously generated and dispersed is causing distress, even if its intentions were not programmed to be evil. For example, OpenAI has been carefully releasing their GPT-2 language model for which studies have shown can generate text output that is convincing to humans, difficult to detect as synthetic, and can be fine-tuned for misuse. Now, they are using this research on the development of AI that can be troublesome for humanity as a way to understand better how to control these worrisome biases and potentials for malicious use of text generators. Written by Matthew Dearing.

PyTorch

First released in 2002 and implemented in C, the Torch package is a tensor library developed with a range of algorithms to support deep learning. Facebook's AI Research lab took a liking to Torch and open sourced the library in early 2015 that also incorporated many of its machine learning tools. The following year, they released a Python implementation of the framework, called PyTorch, optimized for GPU-acceleration.

With the powerful Torch tools now accessible to Python developers, many major players integrated PyTorch into their development stack. Today, this once Facebook-internal machine learning framework is now one of the most used deep learning libraries with OpenAI being the latest to join a growing slate of corporations and researchers leveraging PyTorch. The competing package released by Google in 2017, TensorFlow, has dominated the deep learning community since its inception and is now clearly trending toward being outpaced by PyTorch later in 2020.

If you are looking for your first machine learning package to study or are a seasoned TensorFlow user, you can get started with PyTorch to find out for yourself which is the best framework for your development needs. Written by Matthew Dearing.

Reinforcement Learning

Along with supervised and unsupervised learning, reinforcement learning (RL) is a fundamental approach in machine learning. The essential idea is a training algorithm that provides a reward feedback to a trial-and-error decision-making “agent” that attempts to perform some computational task. In other words, if you toss a stick across the yard for Rover to fetch, and your new puppy decides to return it to you for a treat, then it will repeat the same decision faster and more efficiently next time. The exciting feature of this approach is that labeled data is not necessary – the model can explore known and unknown data with guidance toward an optimal solution through an encoded reward.

RL is the foundation of the incredible, record-breaking, and human-defeating competitions in chess, video games, and AlphaGo’s crushing blow that learned the game of Go without any instructions hardcoded into its algorithms. However, while these developments in AI’s superhuman capabilities are significant, they perform within well-defined computer representations, such as games with unchanging rules. RL is not directly generalizable to the messiness of the real world, as seen with OpenAI’s Rubik’s Cube model that could solve the puzzle in simulation, but took years to see much-less-than-perfect results when translated through robotic arms.

So, a great deal is yet to be developed and improved in the area of reinforcement learning, and 2019 witnessed that a potential renaissance is underway. Expanding RL to real-world applications will be a hot topic in 2020, with important implementations already underway. Written by Matthew Dearing.

Transformer

The Transformer is a novel neural network architecture based on self-attention mechanism that is especially well-suited to NLP and Natural Language Understanding. It was proposed in Attention Is All You Need, 2017 paper by Google AI researchers. The Transformer is an architecture for "transforming" one sequence to another with the help of Encoder and Decoder, but it does not use recurrent networks or LSTM. Instead it uses the attention mechanism which allows it to look at other positions in the input sequence to help improve encoding.

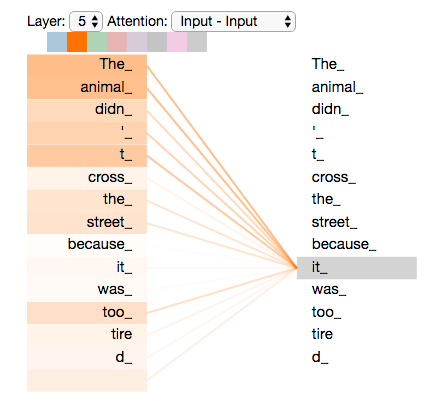

Here is an example, well-explained by Jay Alammar. Suppose we want to translate

"The animal didn't cross the street because it was too tired"

What does "it" refer to? Humans understand that "it" refers to the animal, not the street, but this question is hard for computers. When encoding the word "it", the self-attention mechanism focuses on "The Animal" and associates these words with "it".

Fig. 4: As transformer is encoding the word "it", part of the attention mechanism was focusing on "The Animal", and connected its representation into the encoding of "it". (Source.)

Google reports that Transformer has significantly outperformed other approaches on translation tasks. Transformer architecture was used in many NLP frameworks, such as BERT (Bidirectional Encoder Representations from Transformers) and its descendants.

For a great visual illustration, see The Illustrated Transformer, by Jay Alammar. Written by Gregory Piatetsky.

Related:

- 20 AI, Data Science, Machine Learning Terms You Need to Know in 2020 (Part 1)

- 277 Data Science Key Terms, Explained

- What is Data Science?

20 AI, Data Science, Machine Learning Terms You Need to Know in 2020 (Part 2)

20 AI, Data Science, Machine Learning Terms You Need to Know in 2020 (Part 2)